User guide¶

Your first steps with TrackMe¶

Access TrackMe main interface¶

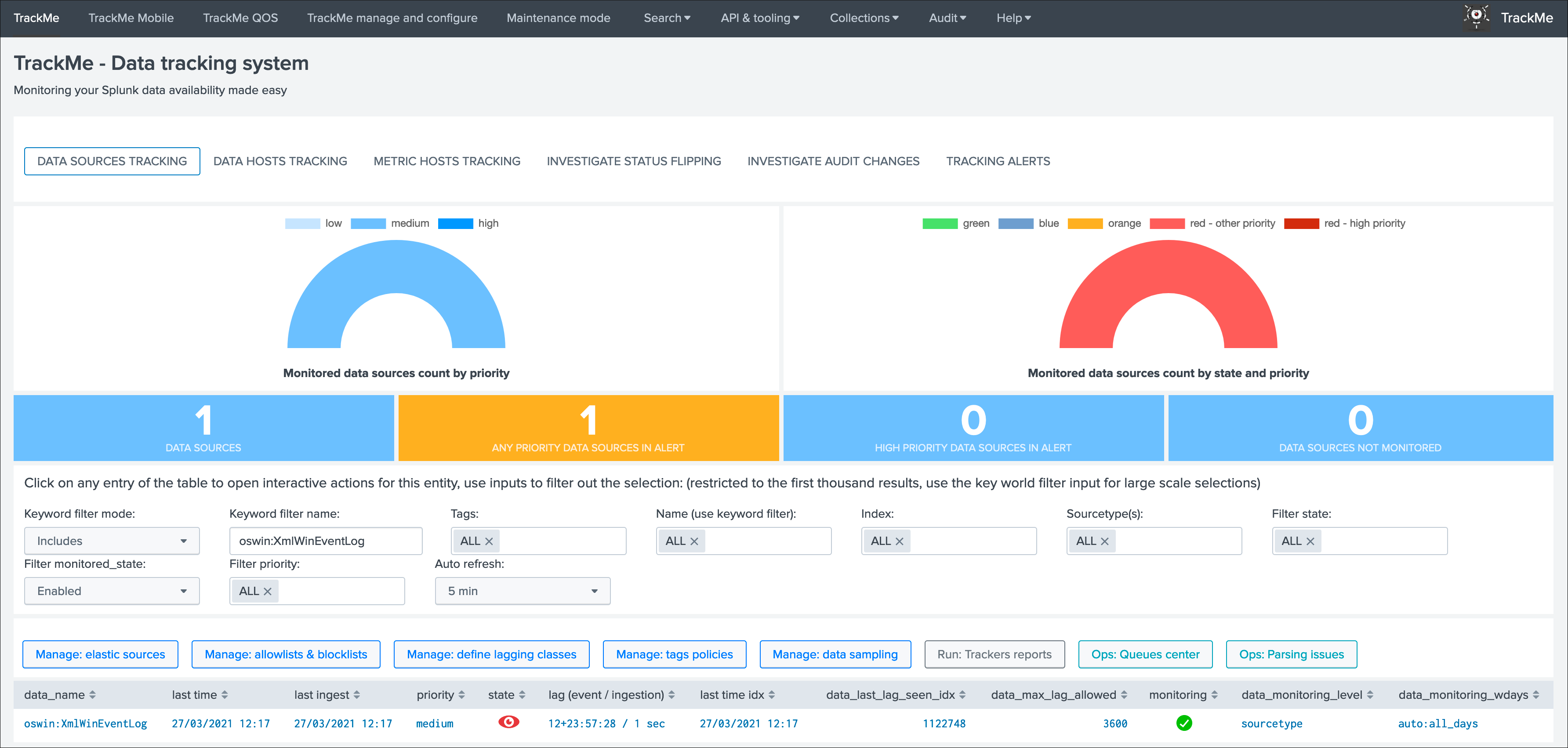

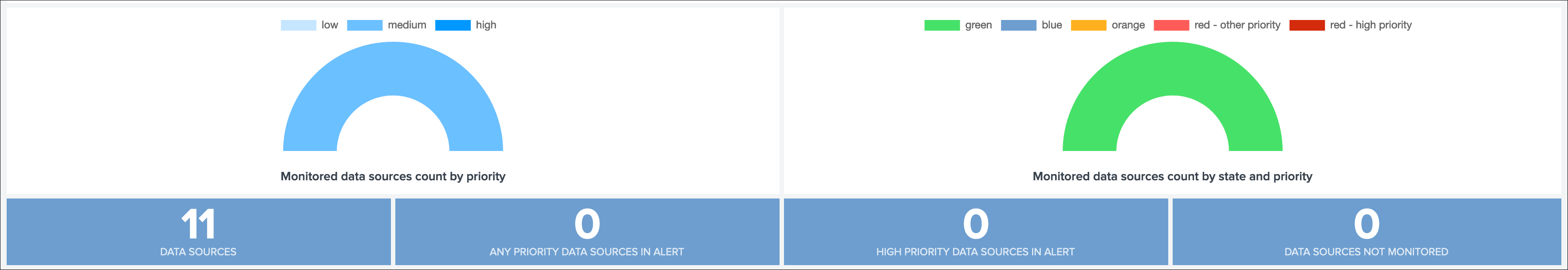

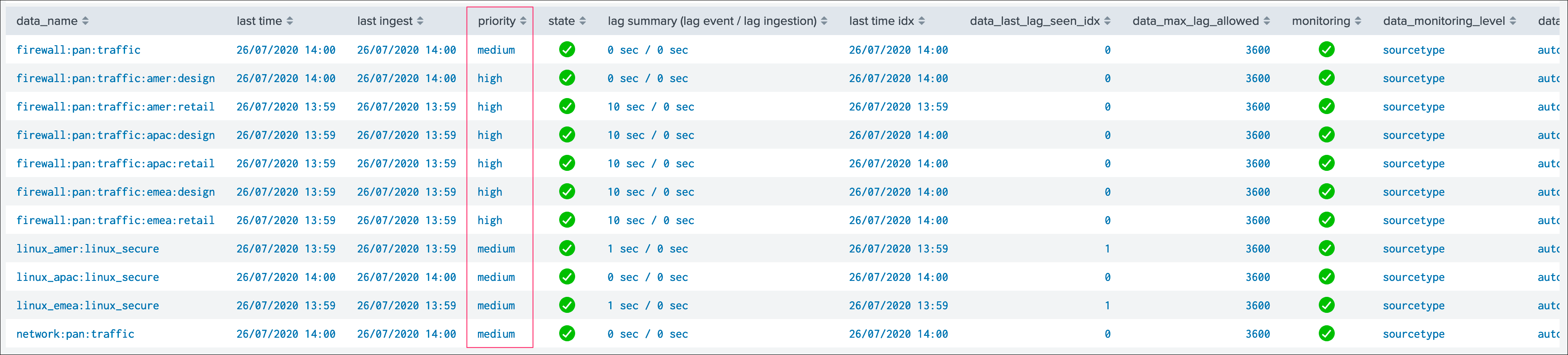

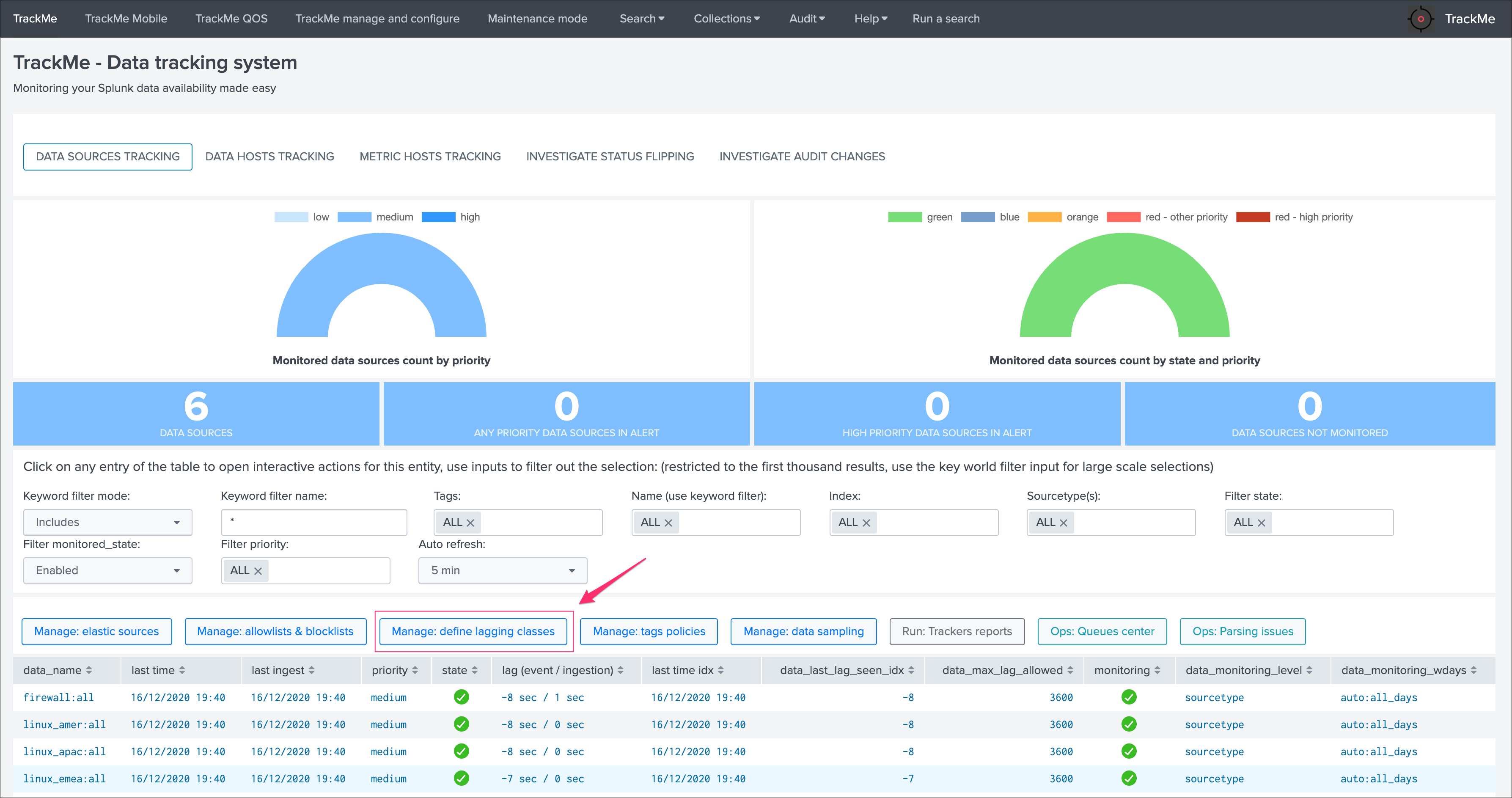

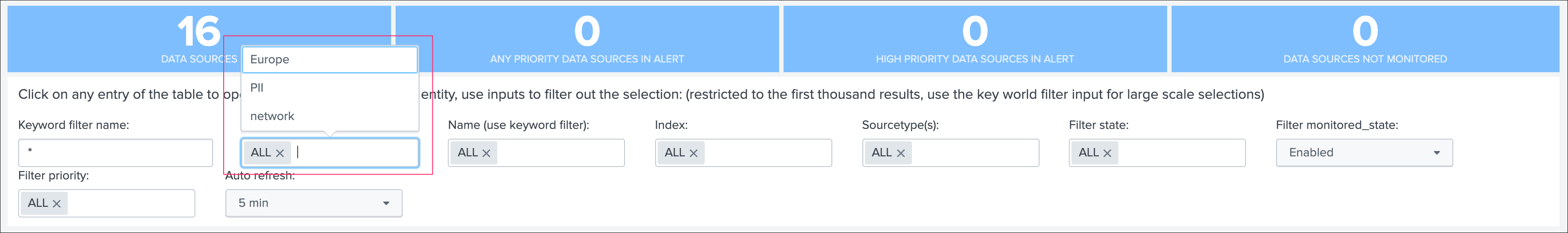

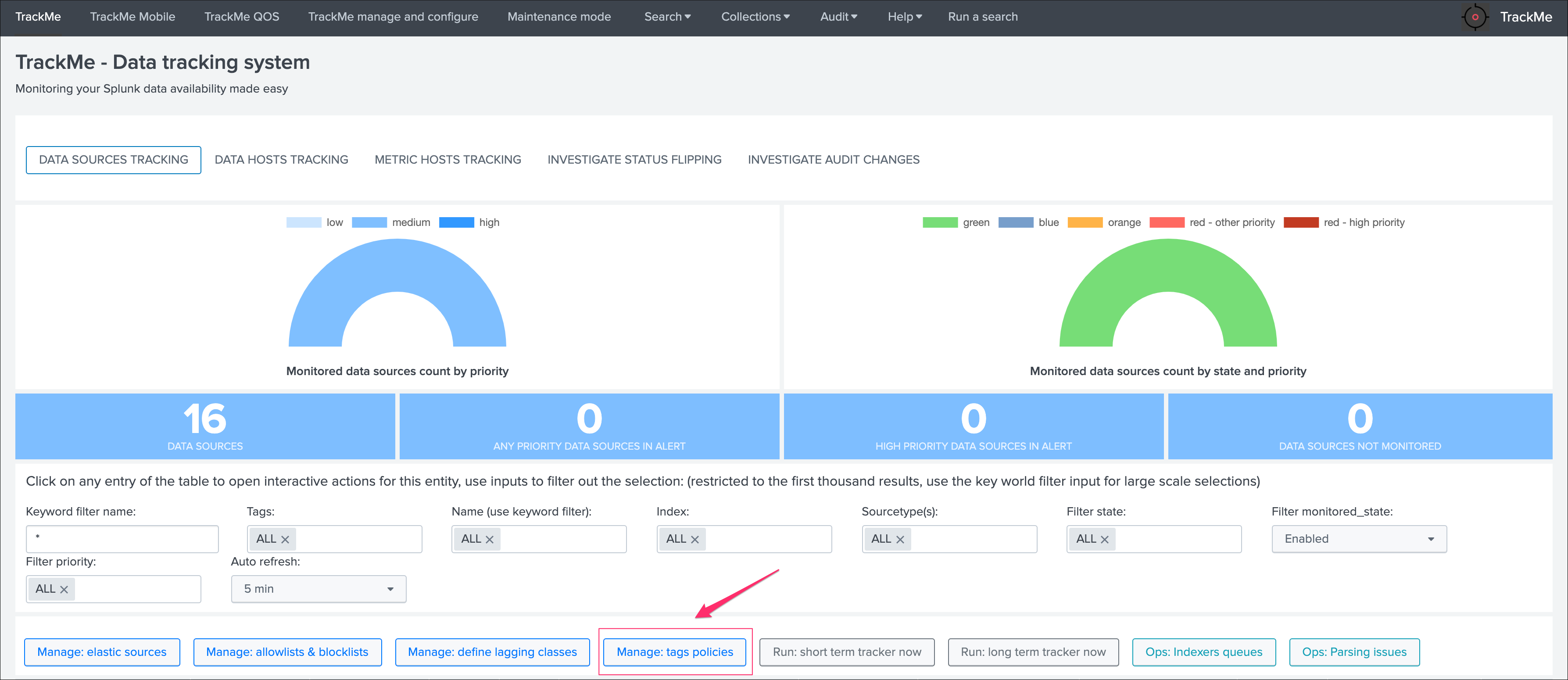

When you open the application, you access by default to the main TrackMe UI and especially to the data sources tracking tab, if the tracker reports have already been executed at least once, the application will expose the data that was discovered in your environment:

Tip

If the UI is empty and no data sources are showing up:

- You can wait for the short term trackers execution which are scheduled to run every 5 minutes

- Or manually run the data sources tracker by clicking on the button “Run: short term tracker now” (we will come back to the tracker notion later in this guide)

Data Sources tracking and features¶

Data Source main screen¶

Let’s click on any entry in the table:

Warning

If you do not see the full window (called modal window), review your screen resolution settings, TrackMe requires a minimal high enough resolution when navigating through the app*

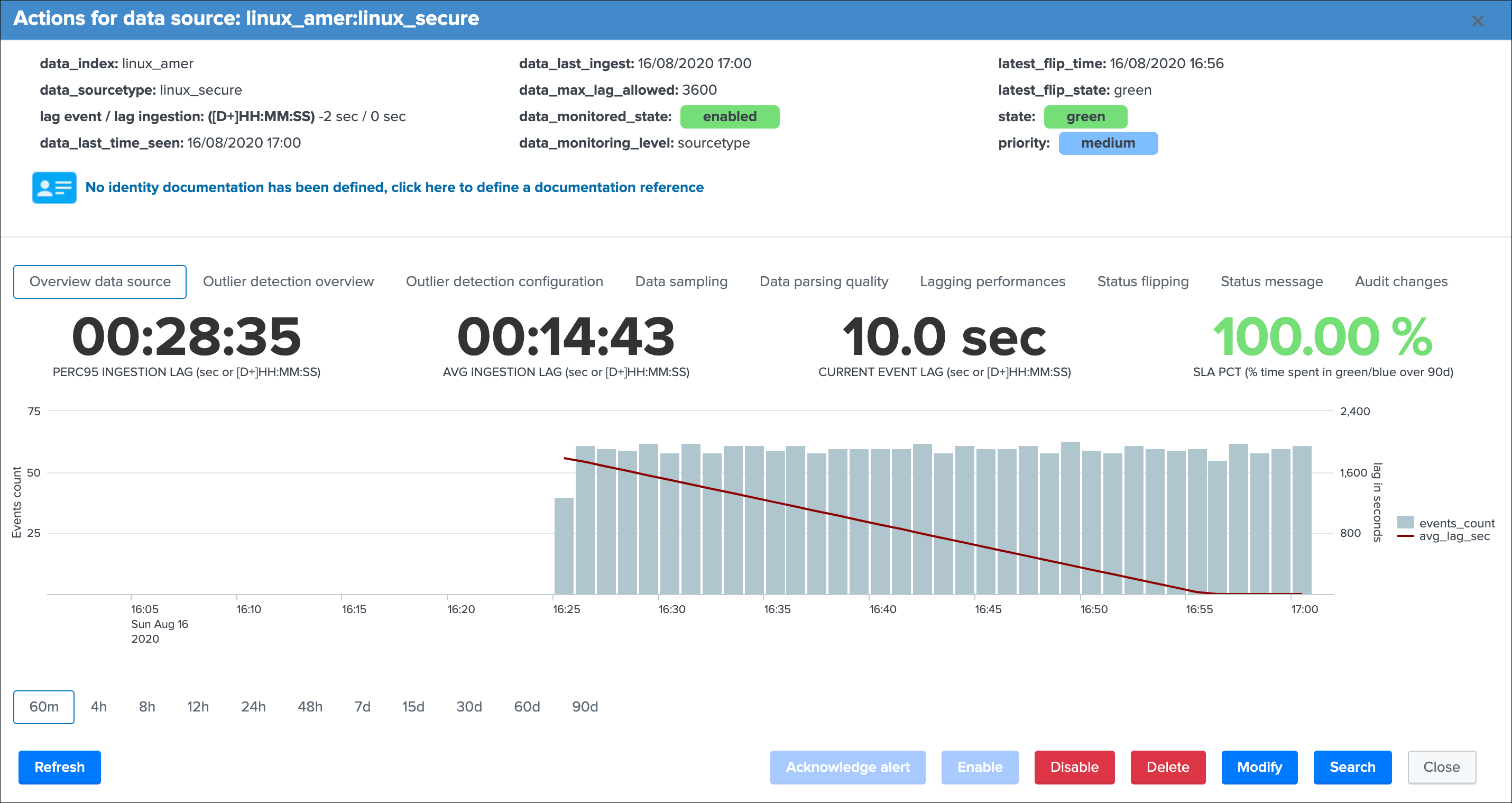

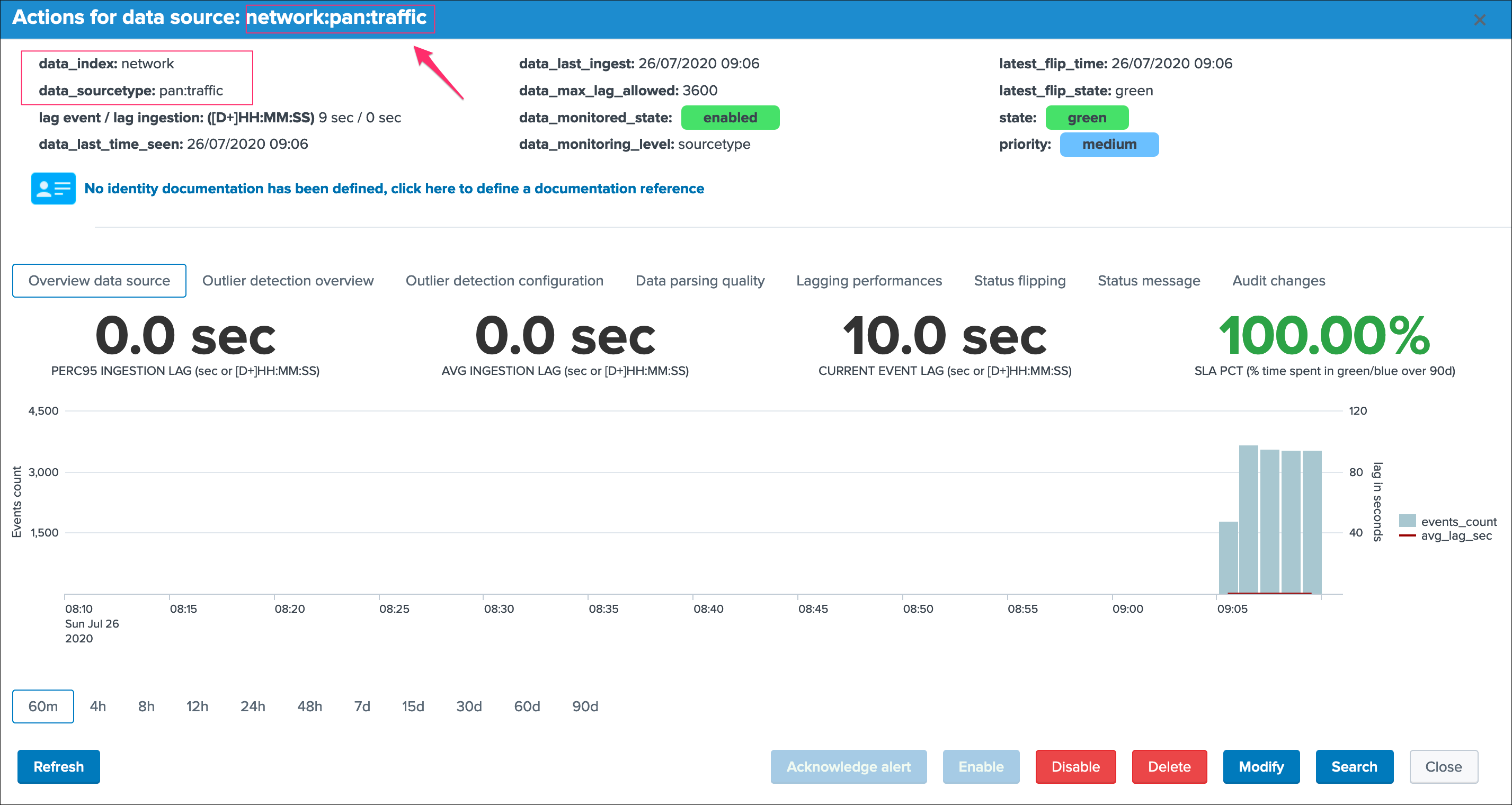

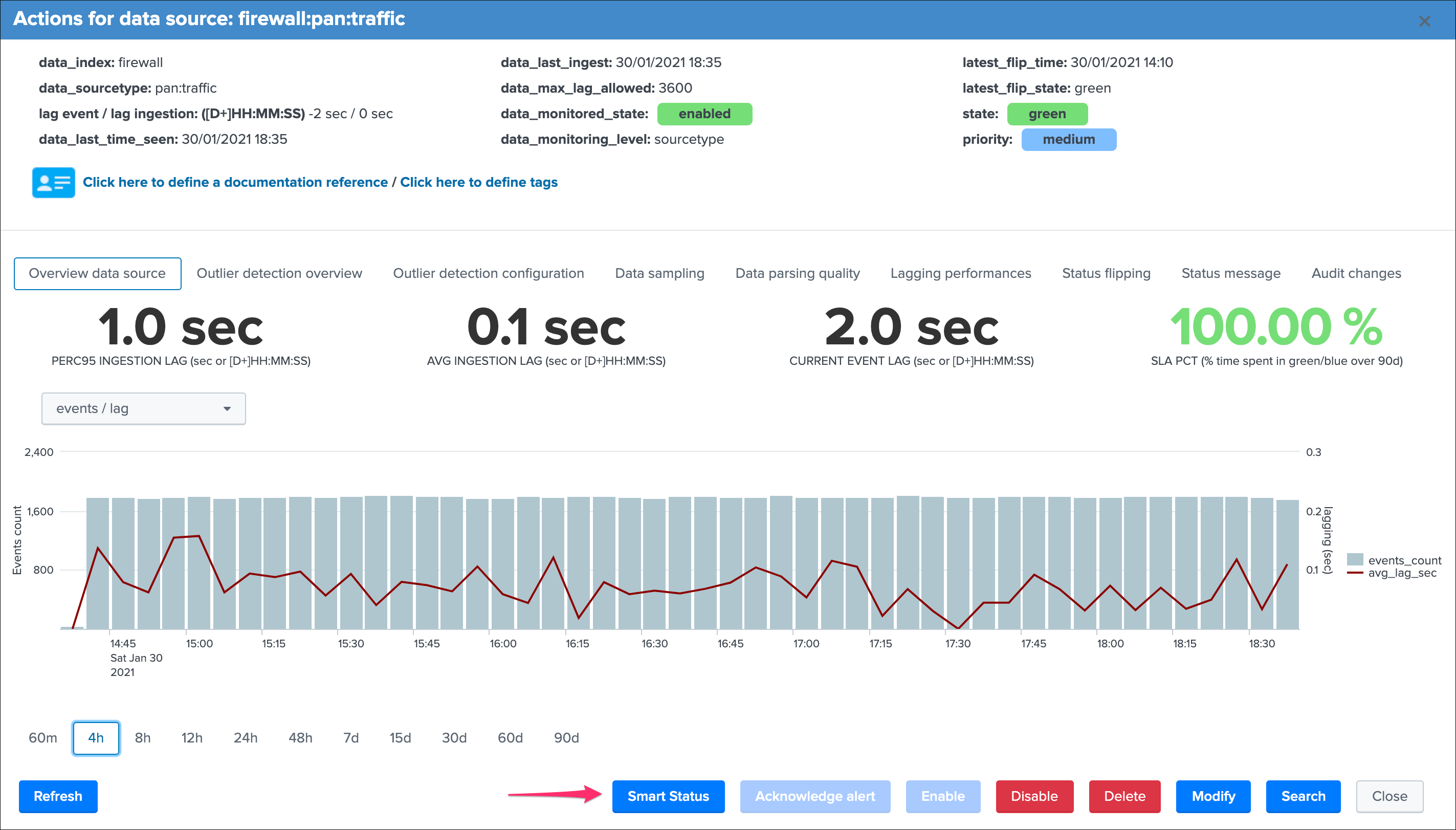

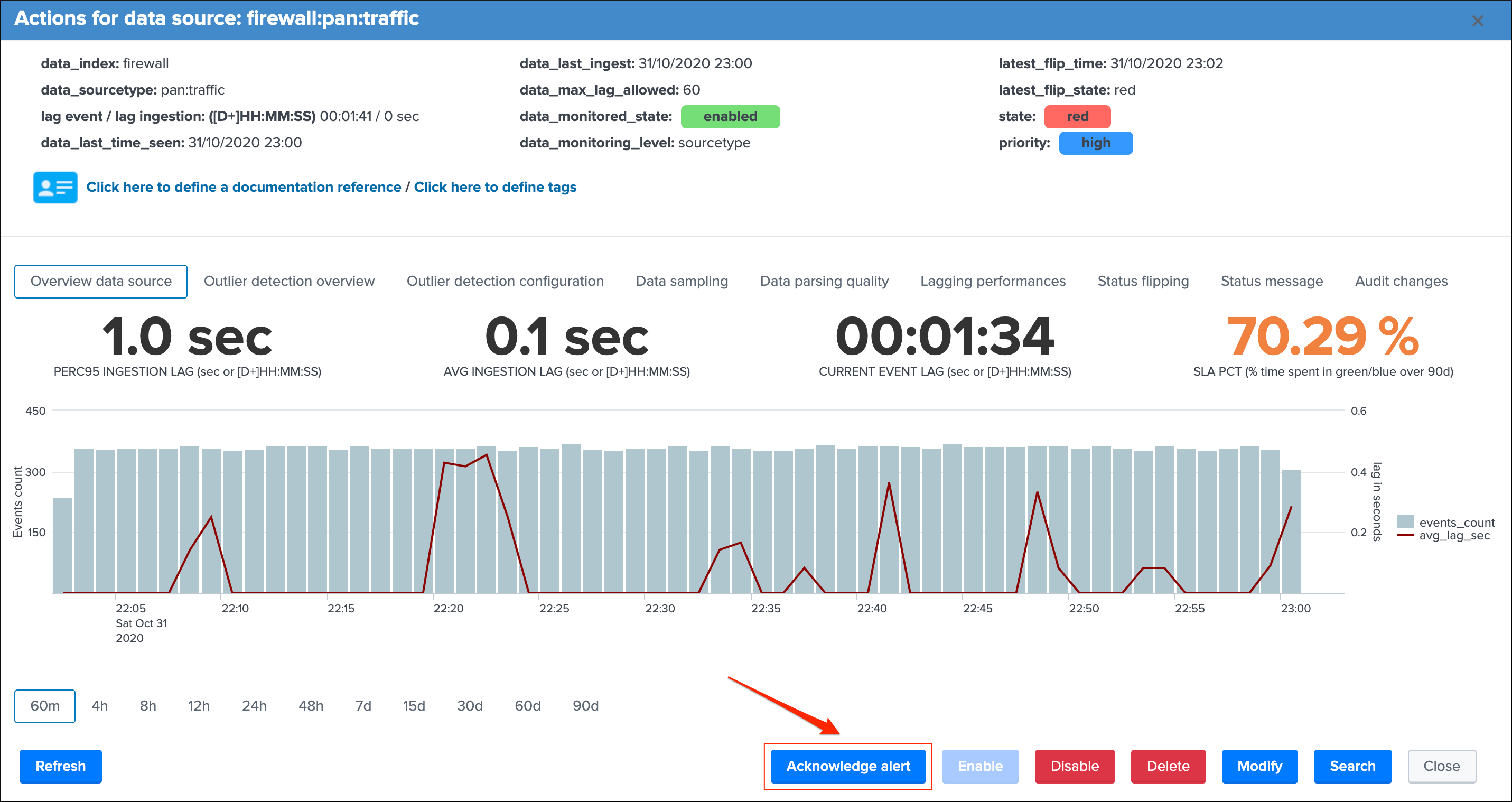

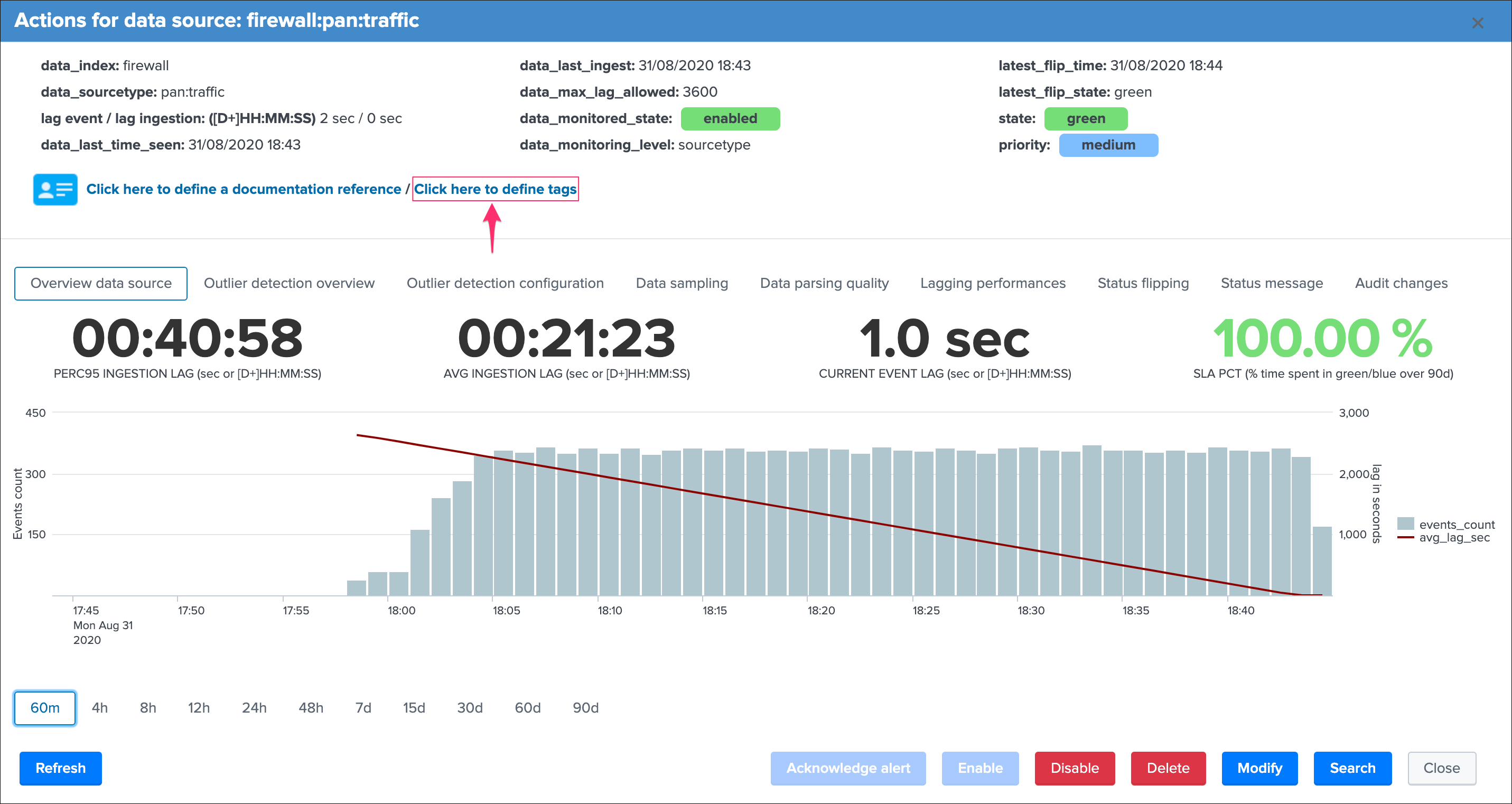

The modal window “open-up” is the user main interaction with TrackMe, depending on the context different information, charts, calculations and options are provided.

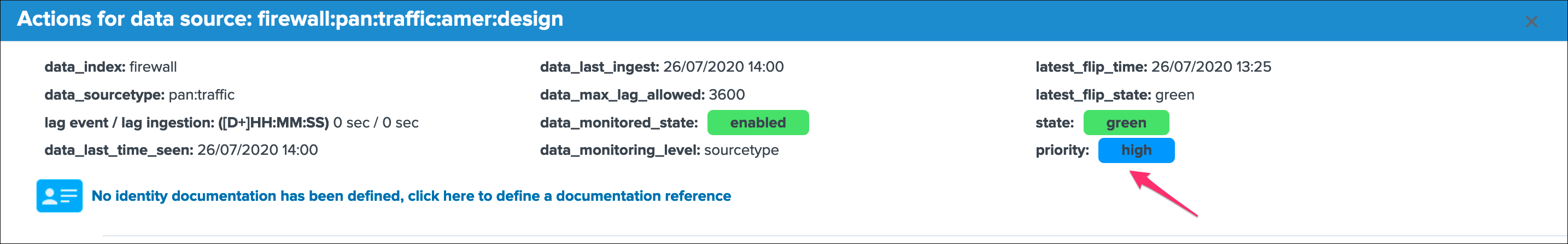

In the context of the data sources tracking, let’s have a deeper look at top part of the window:

Let’s review these information:

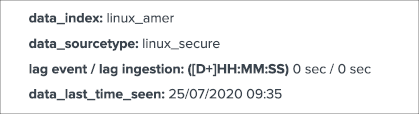

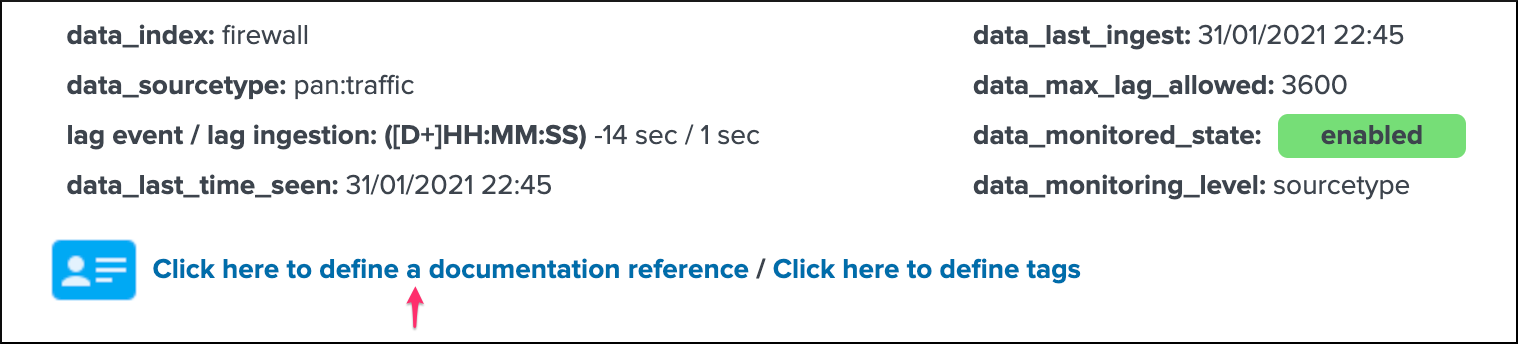

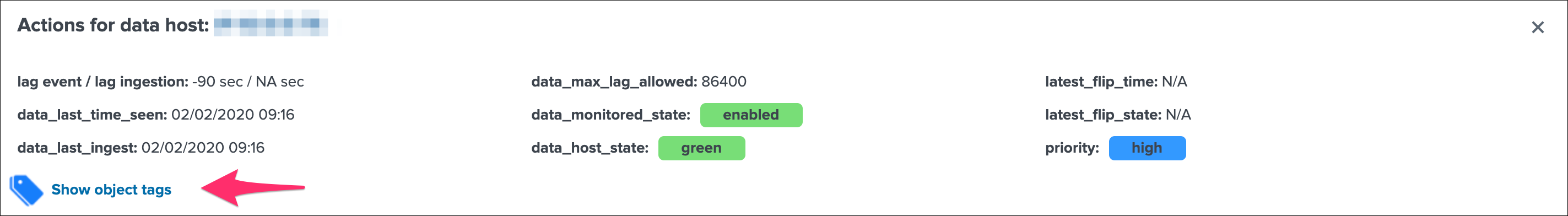

group 1 left screen

data_indexis the name of the Splunk index where the data residesdata_sourcetypeis the Splunk sourcetype for this entitylag event / lag ingestion: ([D+]HH:MM:SS)exposes the two main lagging metrics handled by TrackMe, the lag from the event point of view, and the lag from the ingestion point of view, we will come back to that very soondata_last_time_seenis the last date time TrackMe has detected data available for this data source, from the event time stamp point of view

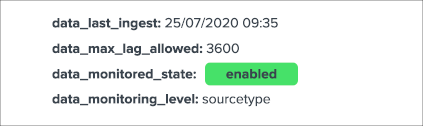

group 2 middle screen

data_last_ingestis the last date time TrackMe has detected data ingested by Splunk for the data source, this can differ from the very last event available in the data source (more after)data_max_lag_allowedis the value in seconds that TrackMe will use as the main information to define the status of the data source, by default it is defined to 1 hour (3600 seconds)data_monitored_stateis a flag which tells TrackMe that this data source should be actively monitored, this is “enabled” by default and be defined within the UI to “disabled” (the red “Disable” button in the entity window)data_monitoring_levelis a flag which tells TrackMe how to take into account other sourcetypes available in that same index when defining the current status of the entity

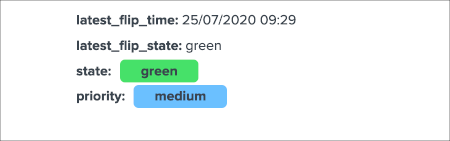

group 3 right screen

latest_flip_timeis the latest date time a change was detected in the state of the entitylatest_flip_statesis the state to which it moved at that timestateis the current state, there are different states: green / orange / blue / grey / red (more explanations to come)priorityrepresents the priority of the entity, by default all entities are added as “medium”, priority is used in different parts of the app and alerts, there are 3 level of priority: low / medium / high

group 4 bottom

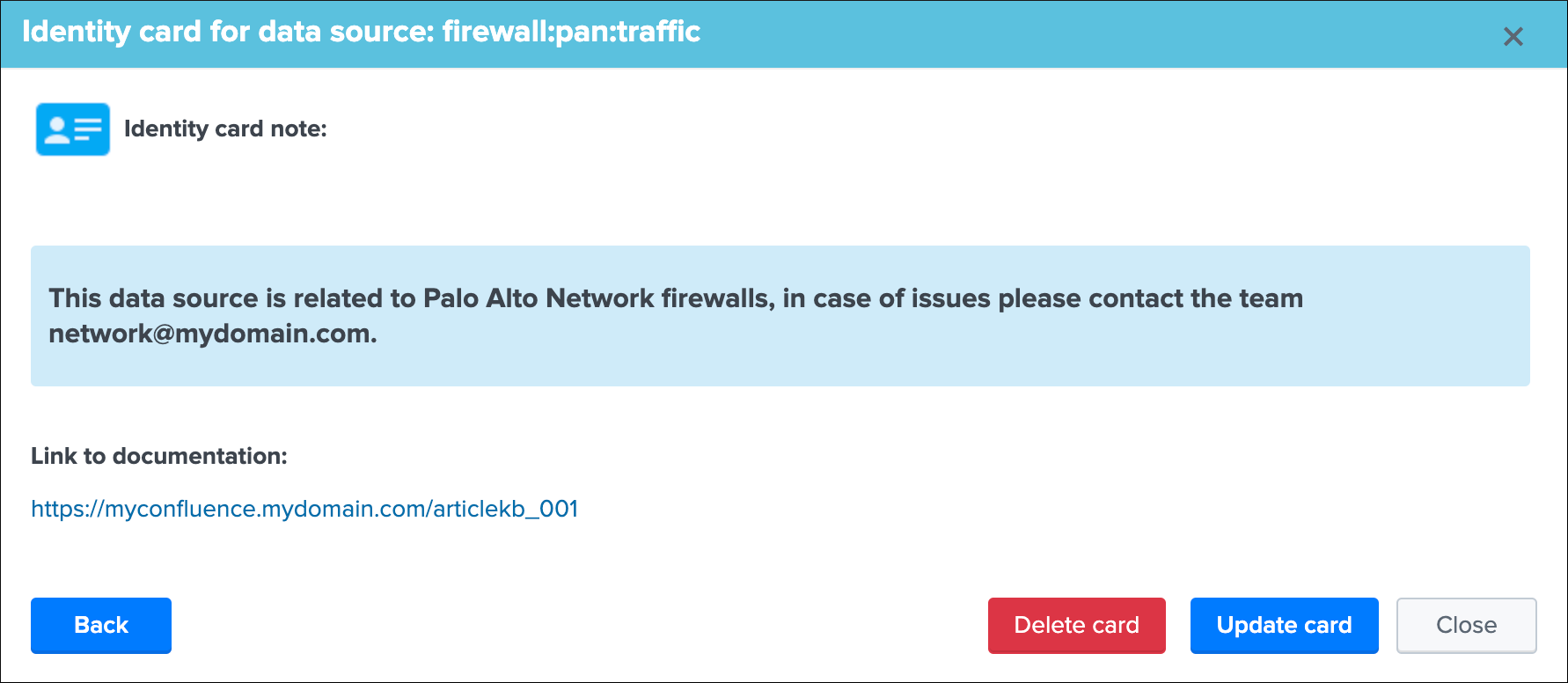

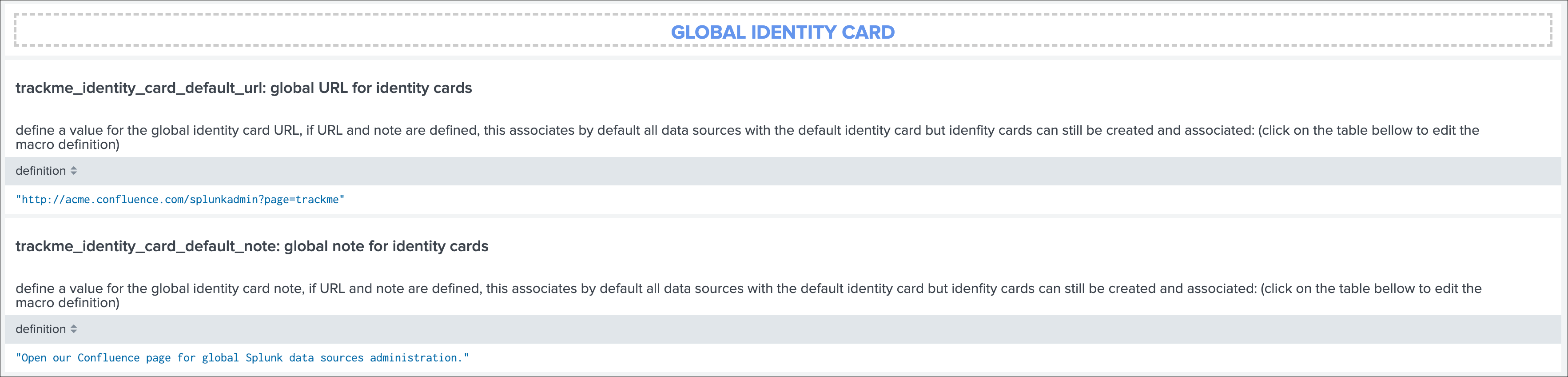

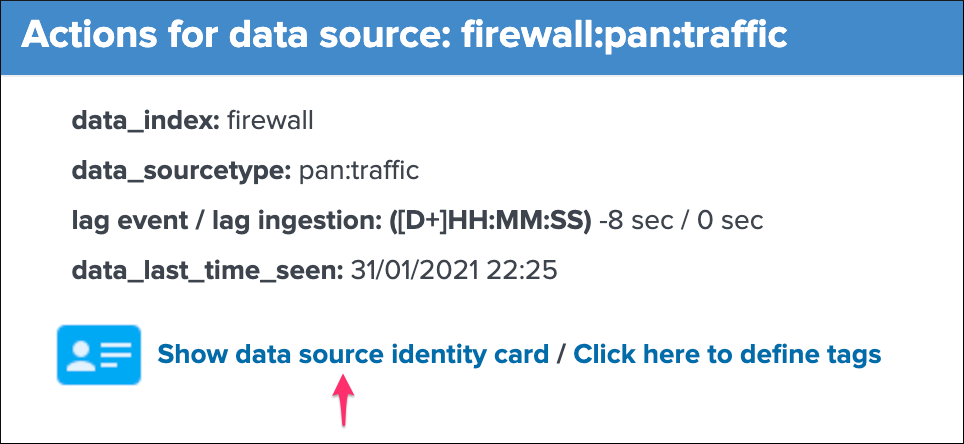

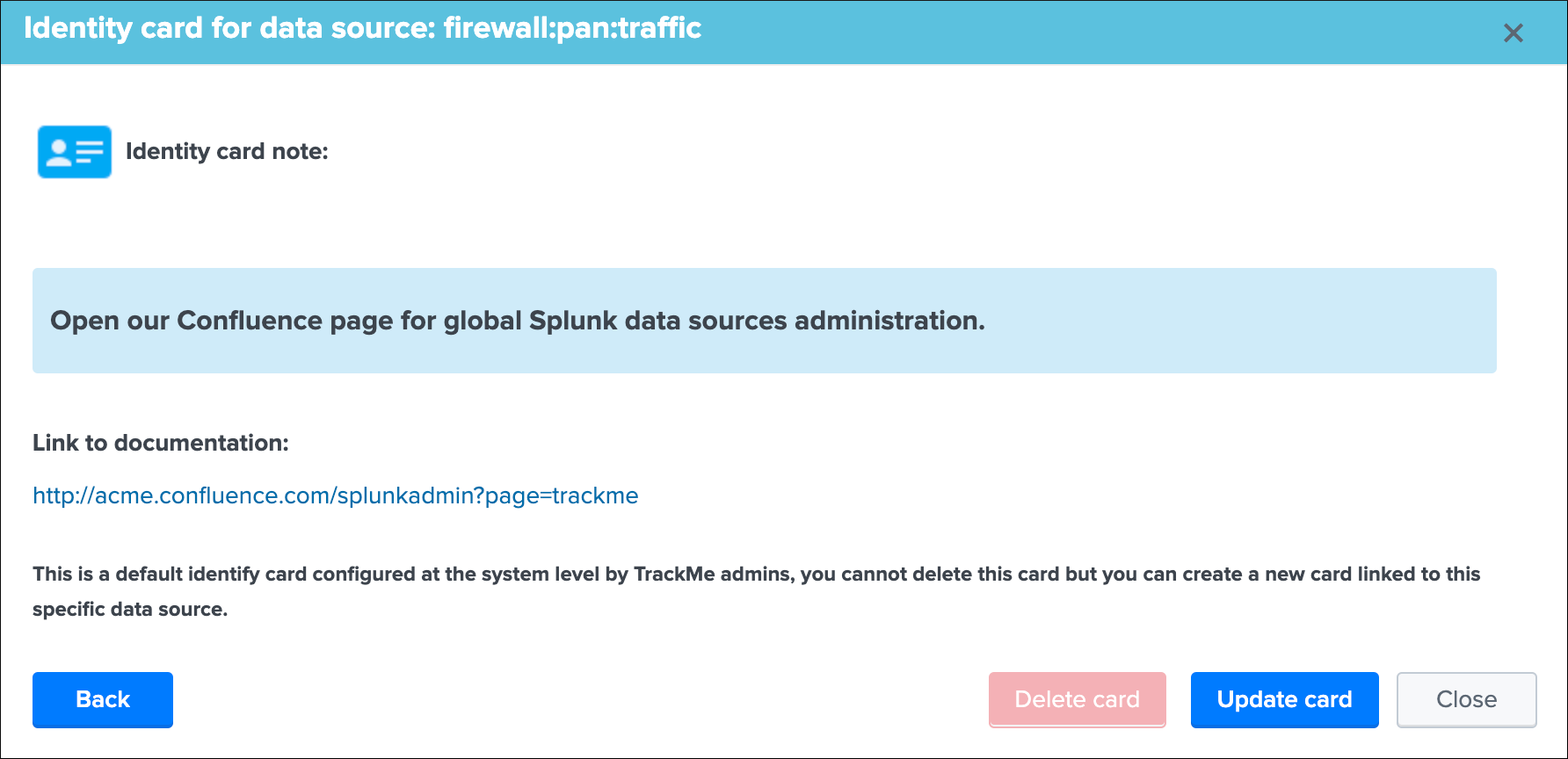

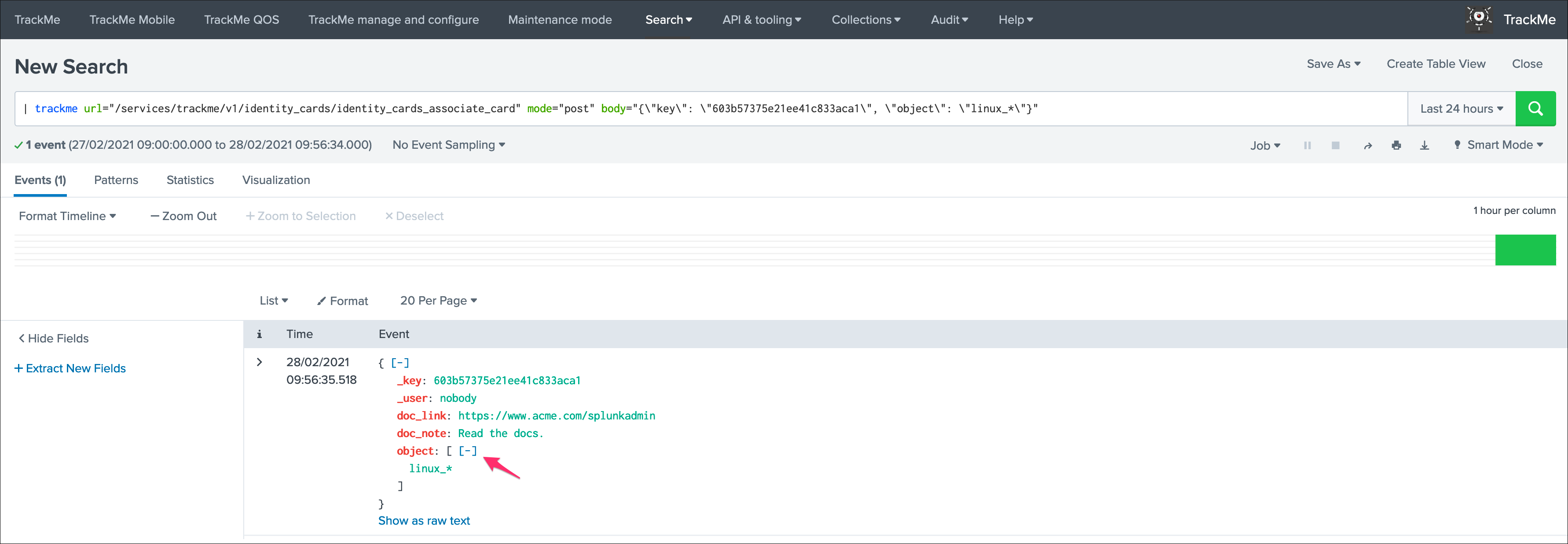

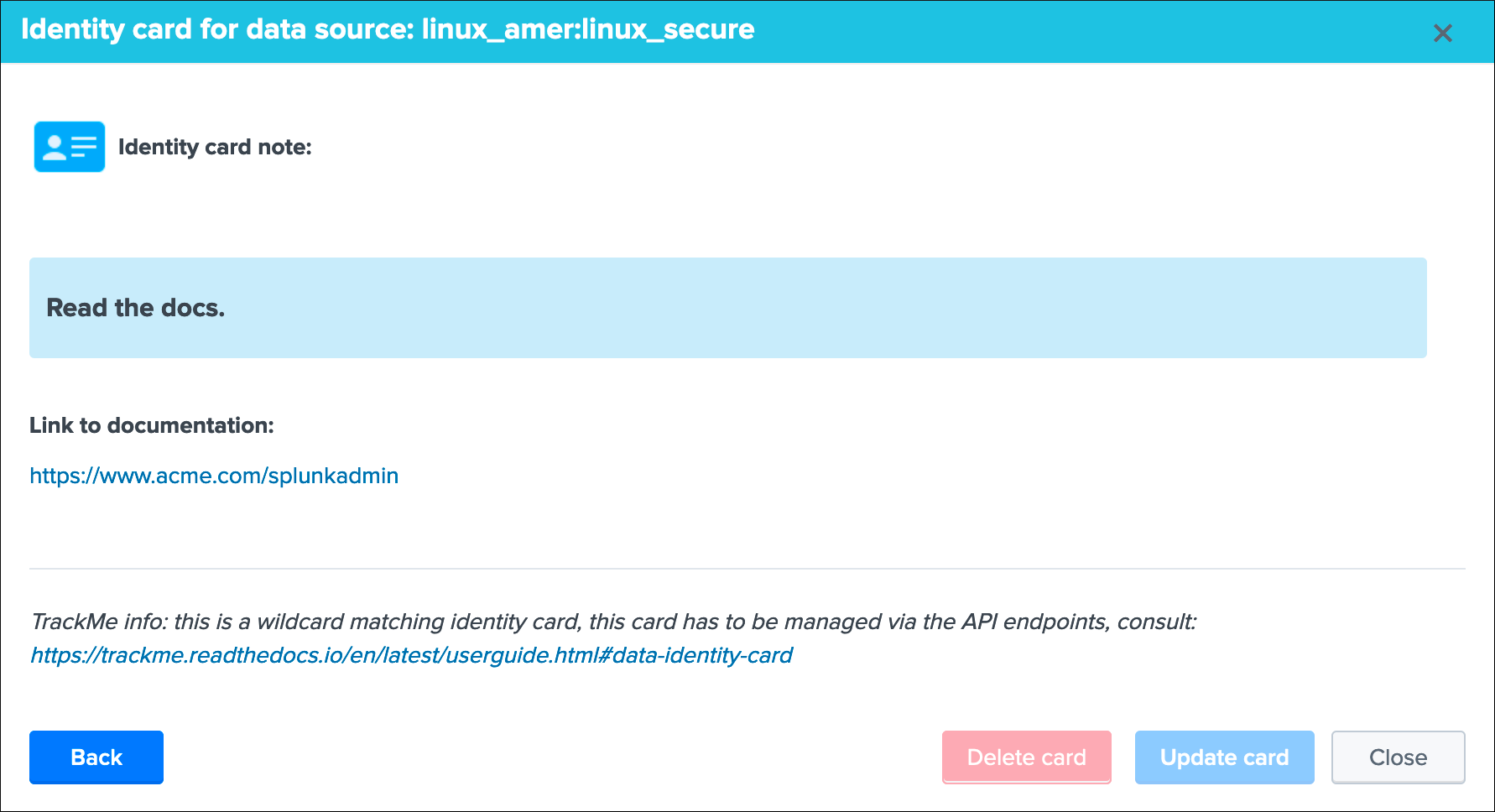

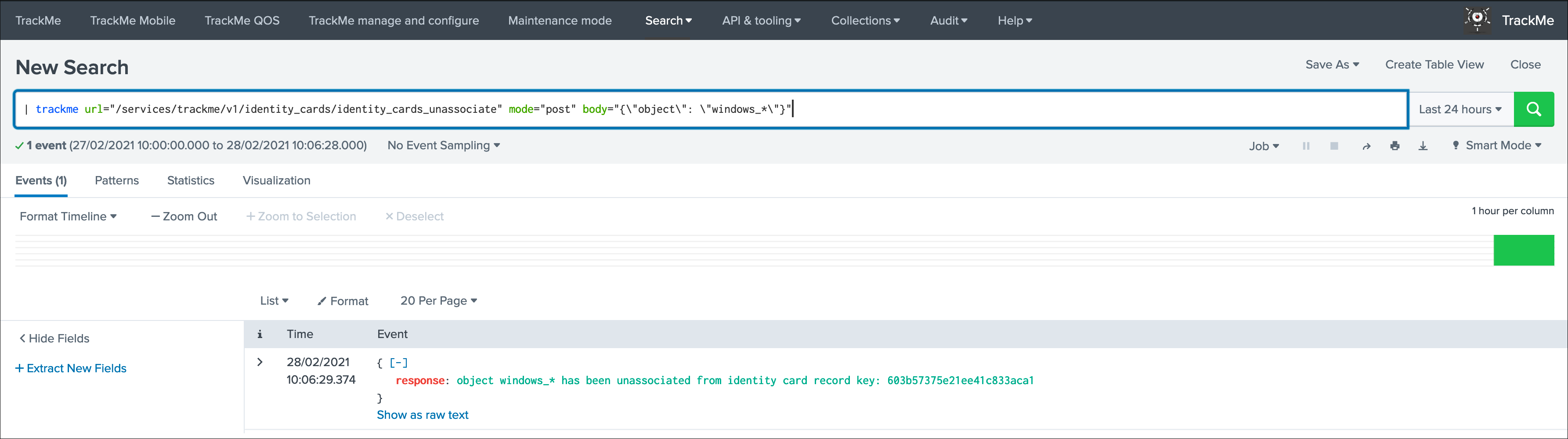

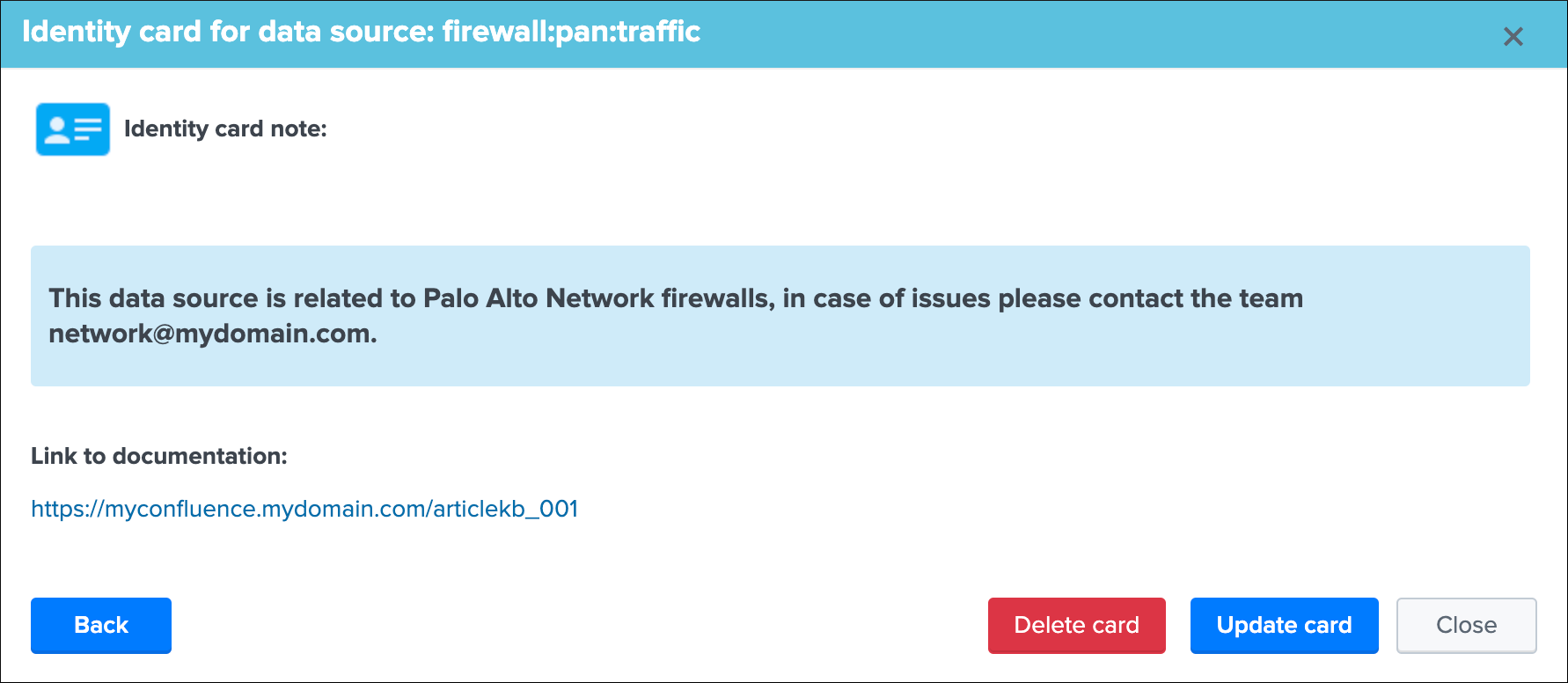

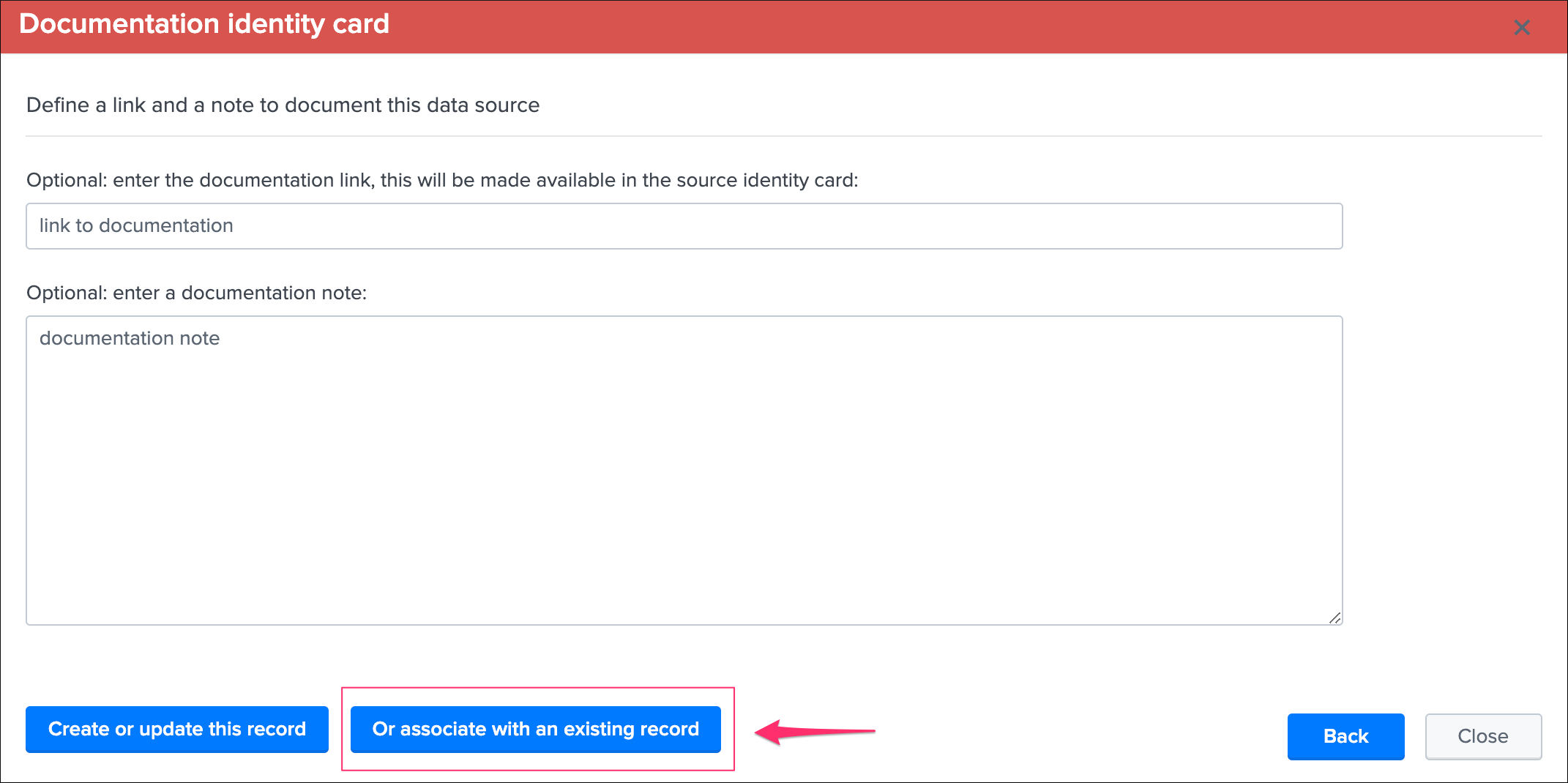

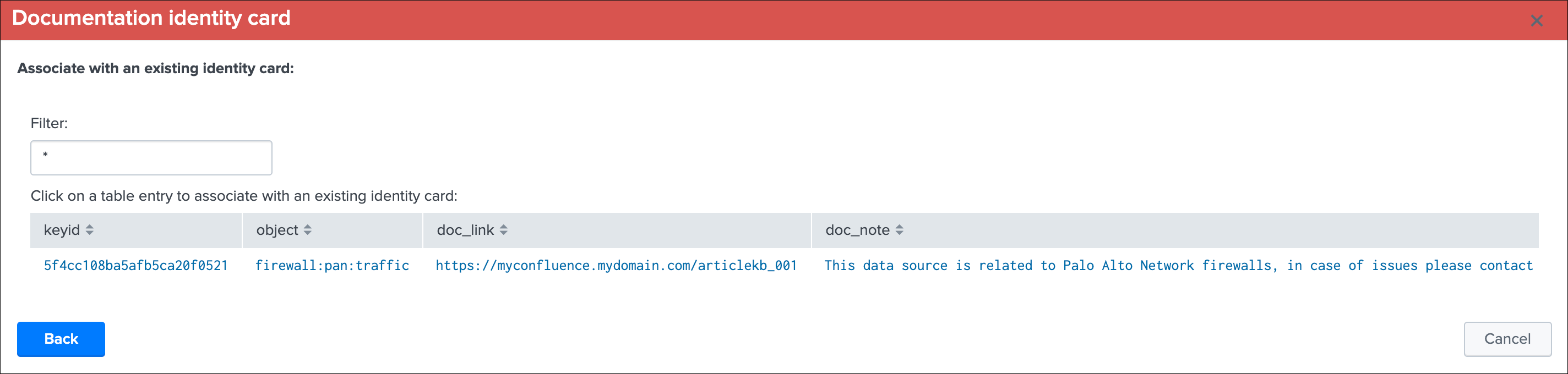

Identity documentation cardis a feature that allows you create an information card (hyperlink and a text note), and link that card to any number of data sources.- By default, no identity card is defined which is exposed by this message, if an identity card is created and linked to the entity, the message will turn into a link that once clicked exposes in a new window the context of the card

- Use this feature to quickly reference the main information for someone accessing to TrackMe and when there is an issue on the data source, which would provide a link to whatever you want (your Confluence, etc) and a quick help text. (at least a hyperlink or a text note must be defined)

See Data identity card for more details about the feature.

Data source screen tabs¶

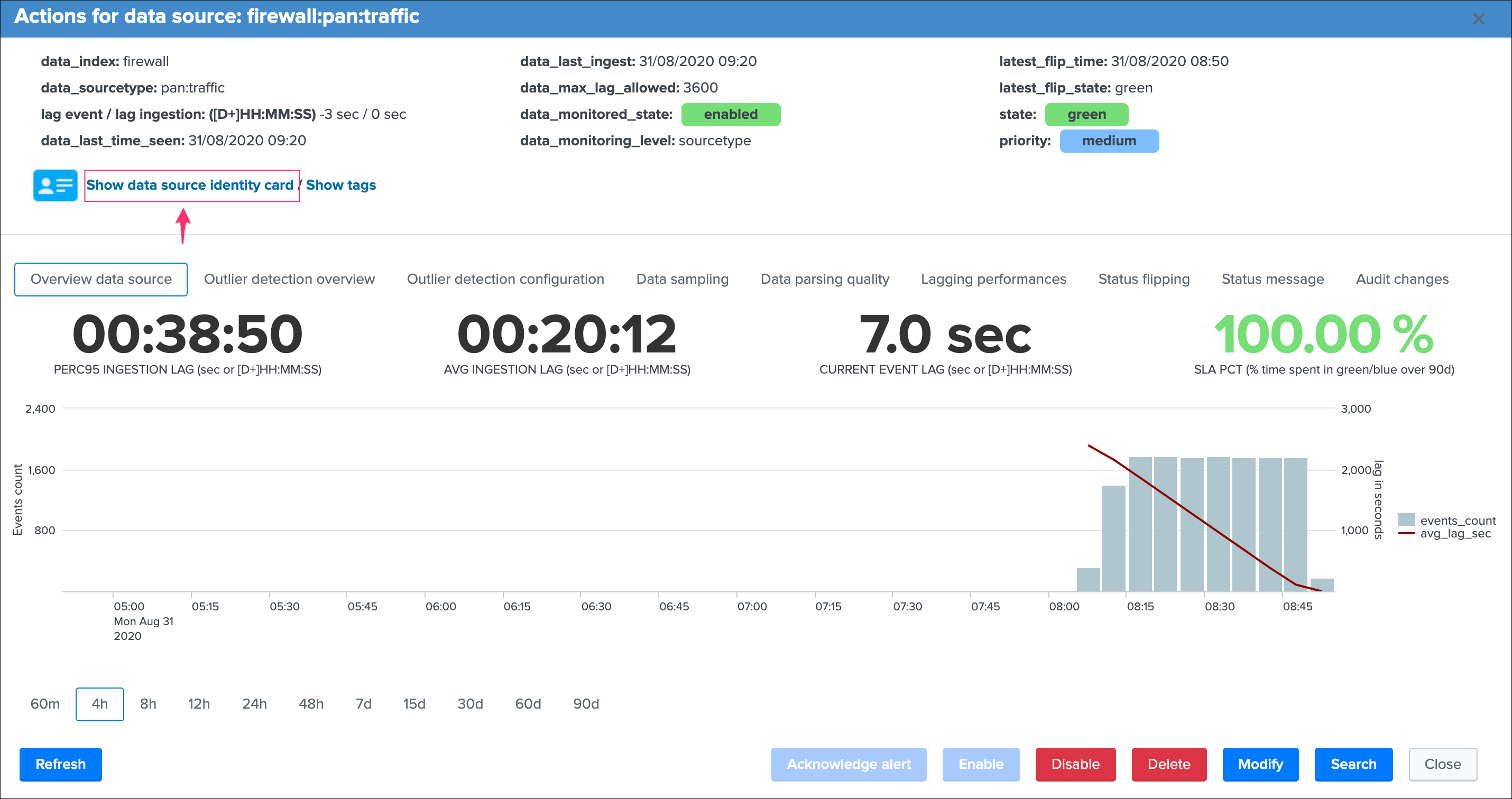

Let’s have a look now at next part of the modal window:

Starting by describing the tabs available in this window:

Overview data sourceis the current view that exposes the main information and metrics for this entityOutlier detection overviewexposes the event outliers detection chartOutlier detection configurationprovides different options to configure the outliers detectionData samplingshows the results from the data sampling & event format recognition engineData parsing qualityexposes indexing time parsing issues such as truncation issues for this sourcetype, if any.Lagging performancesexposes the event lag and ingestion lag recorded metrics in the metric indexStatus flippingexposes all status flipping events that were stored in the summary indexStatus messageexposes the current status of the data source in a human friendly mannerAudit changesexposes all changes recorded in the audit KVstore for that entity

Overview data source tab¶

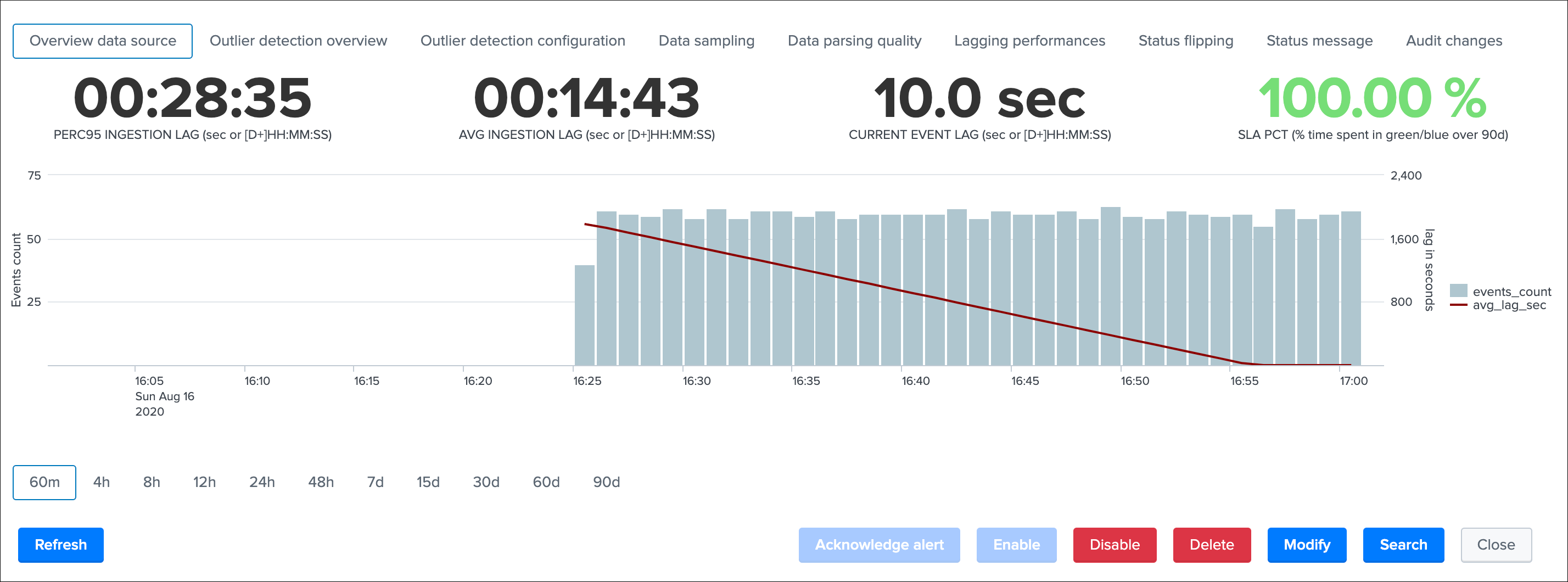

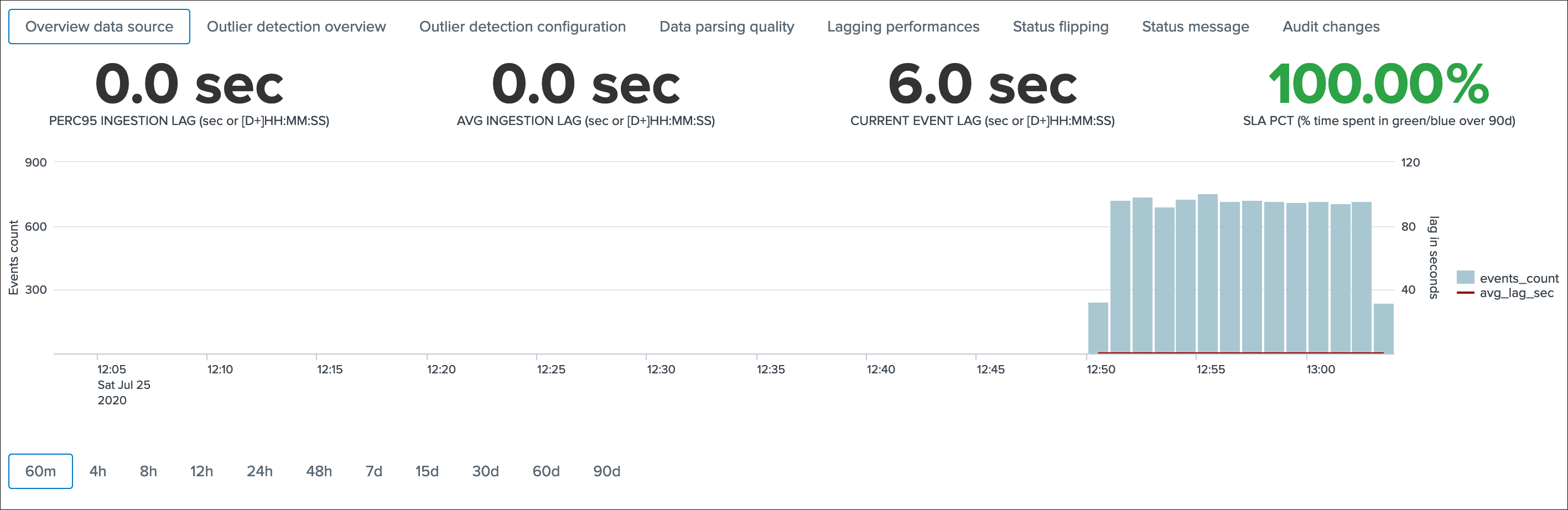

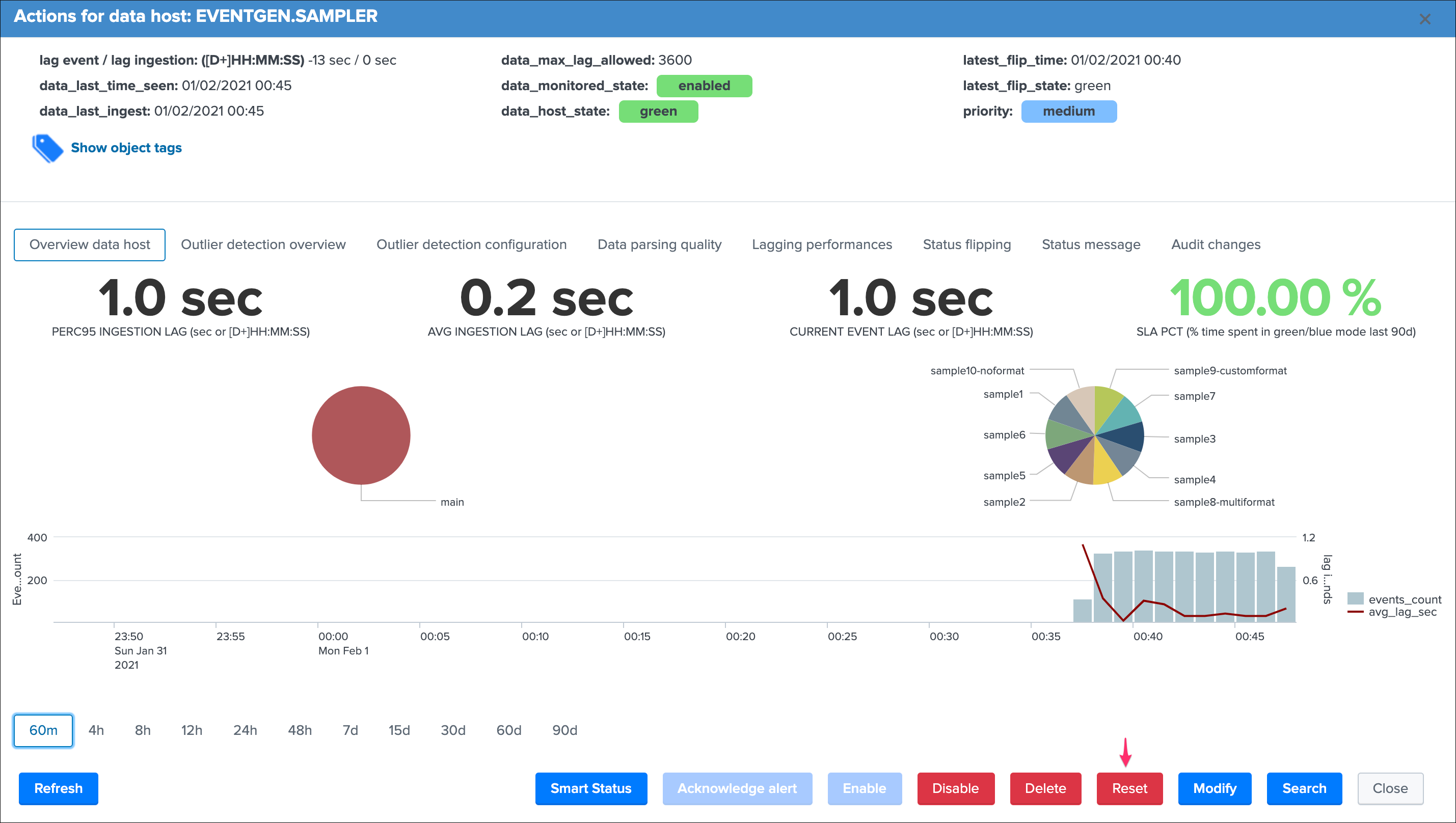

This screen exposes several single forms with the following calculations:

PERC95 INGESTION LAGis the percentile 95 of the lag ingestion determined for this entity (_indextime - _time)AVG INGESTION LAGis the average lag ingestion for that entityCURRENT EVENT LAGis the current event lag calculated for this entity (now() - _time), this basically exposes how late this data source compared between now and the very last event in the entitySLA PCTis the SLA percentage which basically exposes the percent of time that entity has spent in a not green / blue state

Finally, a chart over time exposes the event count and the ingestion lag for that entity.

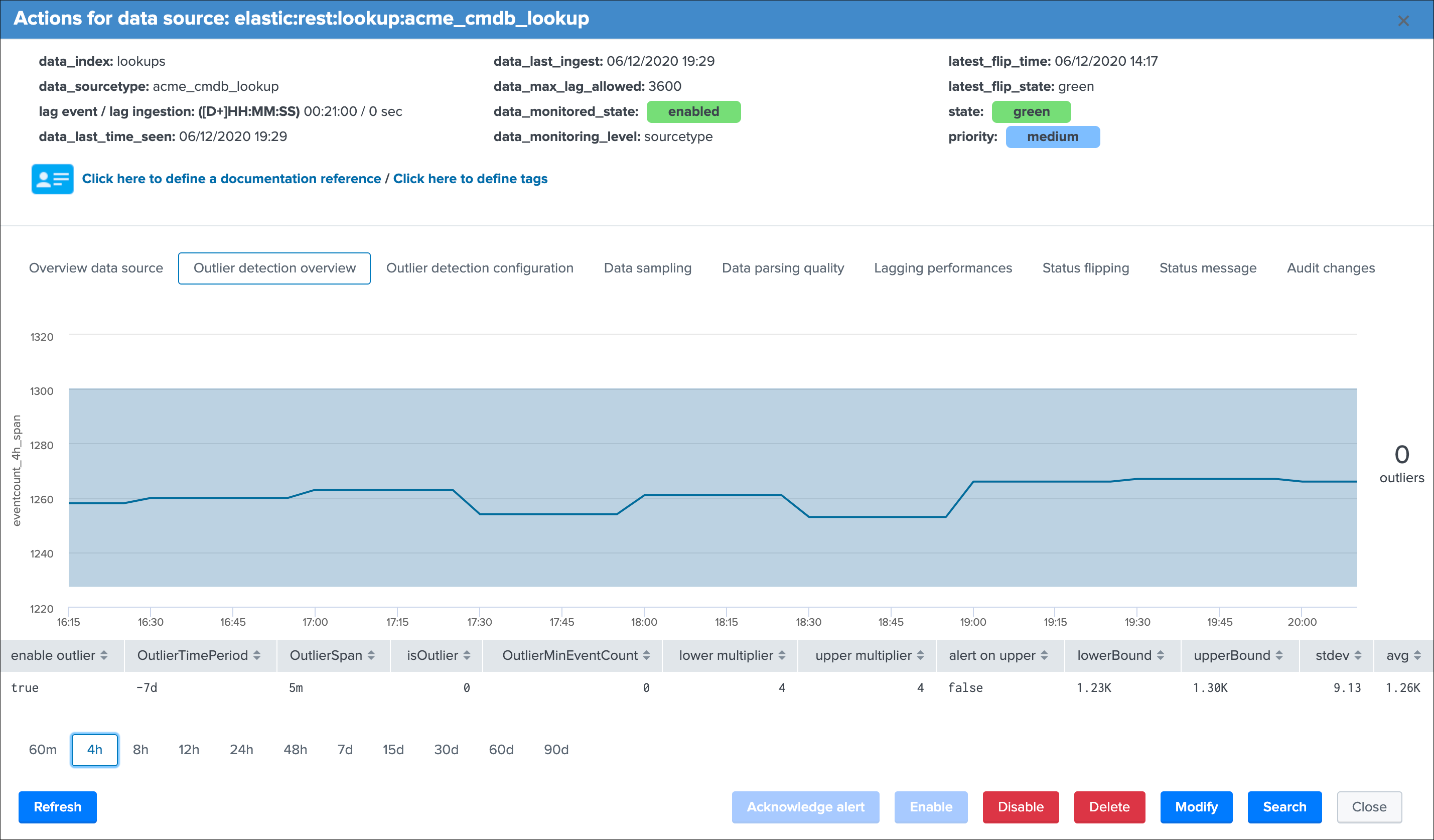

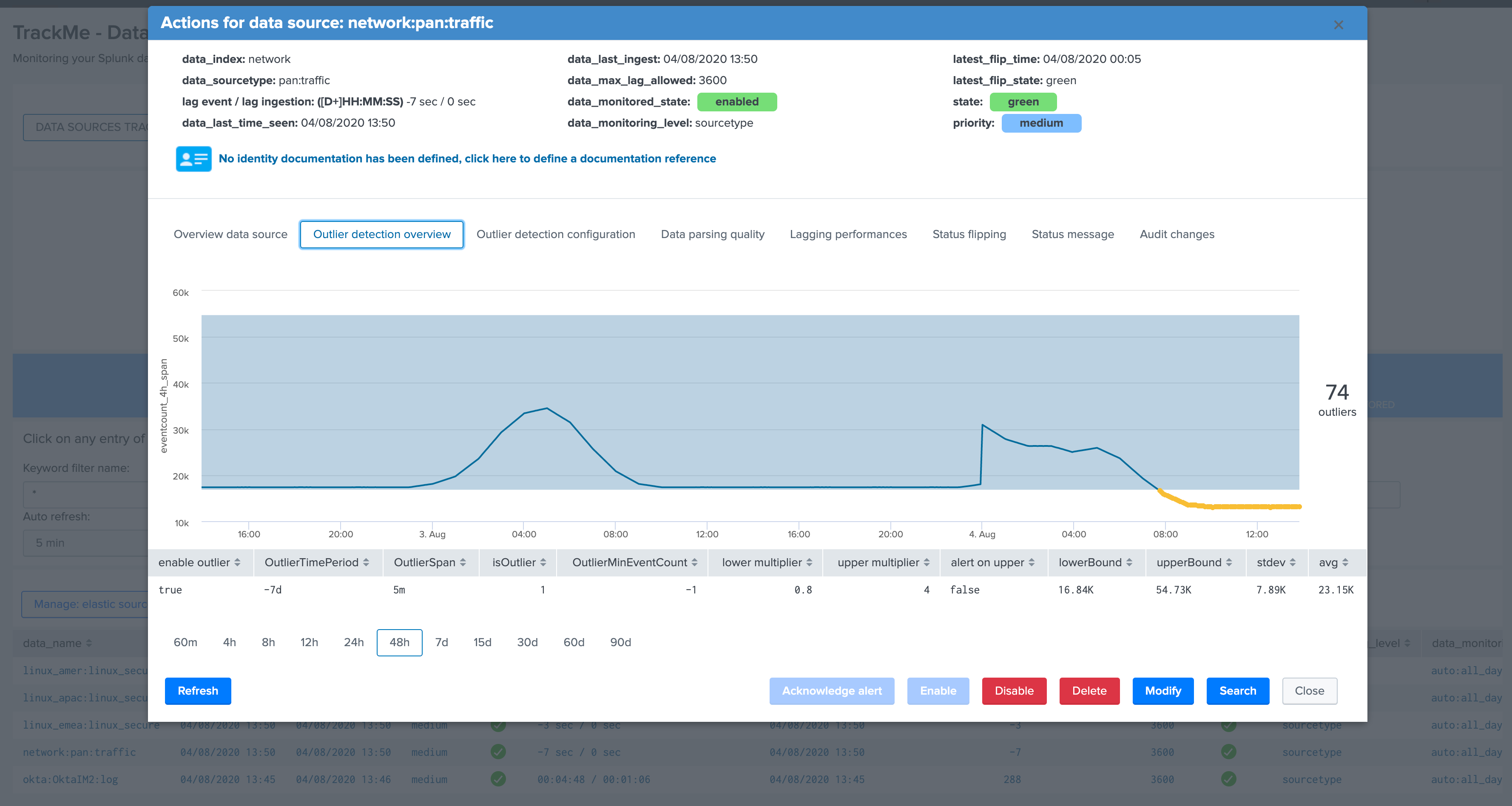

Outlier detection overview¶

This screen exposes the events outliers detection results over time, the purpose of the outliers detection is to provide advanced capabilities to detect when the number of events produced in the scope of an entity goes below or above a certain level, which level gets automatically defined upon the historical behaviour of the data.

For this purpose, every time the short term tracker runs, it records different metrics which includes the number of events on per 4 hours time window. (which matches the time frame scope of the short term tracker)

Then in short, a scheduled report runs every hour to perform lower bound and upper bound calculations depending on different configurable factors.

Assuming the outliers detection is enabled, if the workflow detects a significant gap in the event count, and optionally an increase too, the state of the entity will be affected and potentially turn red.

The table at the bottom of the screen provides additional information:

enable outliercan be true or false and defines if the outliers detection is taken into account for the state definition of that entityOutlierTimePeriodis a time frame period between a list of restricted values, which defines the time period the backend will be looking at during for the lower bound, upper bound and standard deviation calculationOutlierSpanis used when rendering the outliers over time chart and does not influence the detection (for example if a data source emits data every 30 minutes you will want to apply a more relevant value for a better rendering)isOutlieris the current status, a value of 0 indicates that no outliers are currently active for this entity, a value of 1 indicates TrackMe detected outliers currentlyOutlierMinEventCountis an optional static value that can be defined for the lower bound, this is useful if you want to statically specific the minimal per 4 hours event count to be acceptedlower multiplieris a multiplier that is used for the automatic definition of the lower bound, decreasing or increasing will impact the value of the lower bound definitionupper multiplieris a multiplier that is used for the automatic definition of the upper bound, decreasing or increasing will impact the value of the upper bound definitionalert on upperdefines if upper outliers should be taken into account and affect the state if an abnormal number of events is coming in, default is falselowerBoundis the lower threshold, an event count below this value will be considered as outliersupperBoundis the upper threshold, an event count above this value will be considered as outlier, but will only impact the state if the alert on upper is truestdevis the standard deviation calculated by the workflow for this entity, and is used as the reference for the lower and upper bound calculation associated with the lower and upper multipliersavgrepresents the average 4 hours amount of event count for this entity

See Outliers detection and behaviour analytic for more details about the feature.

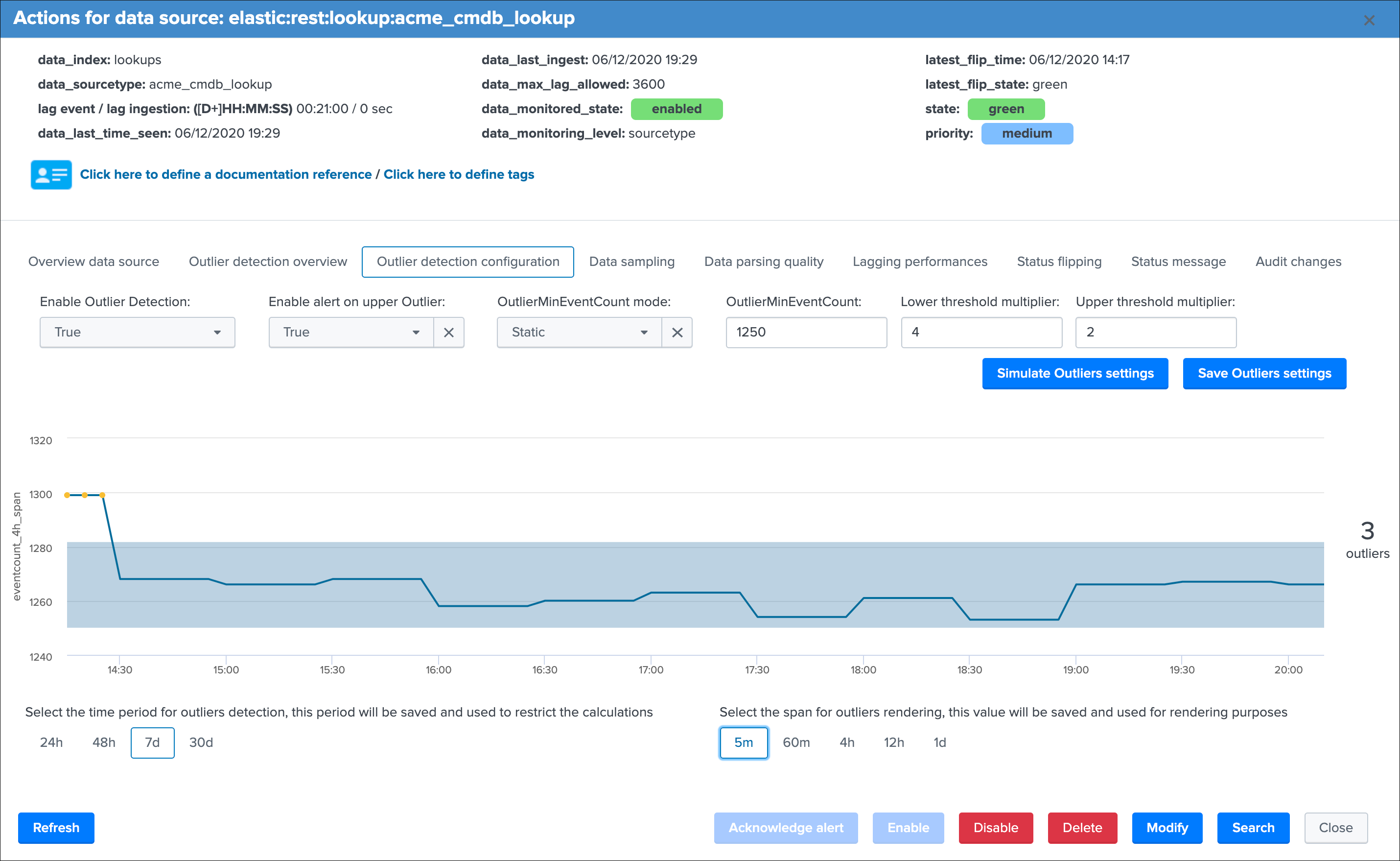

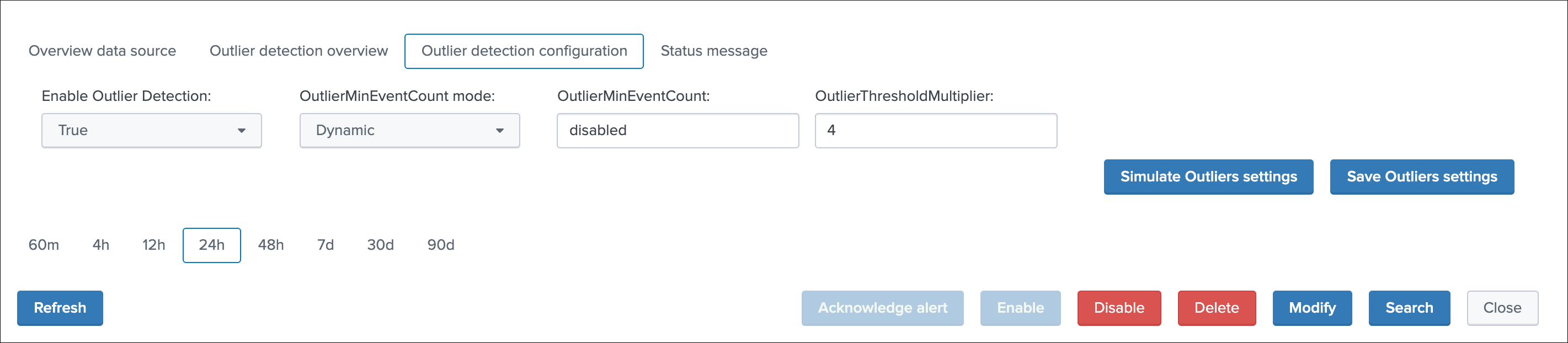

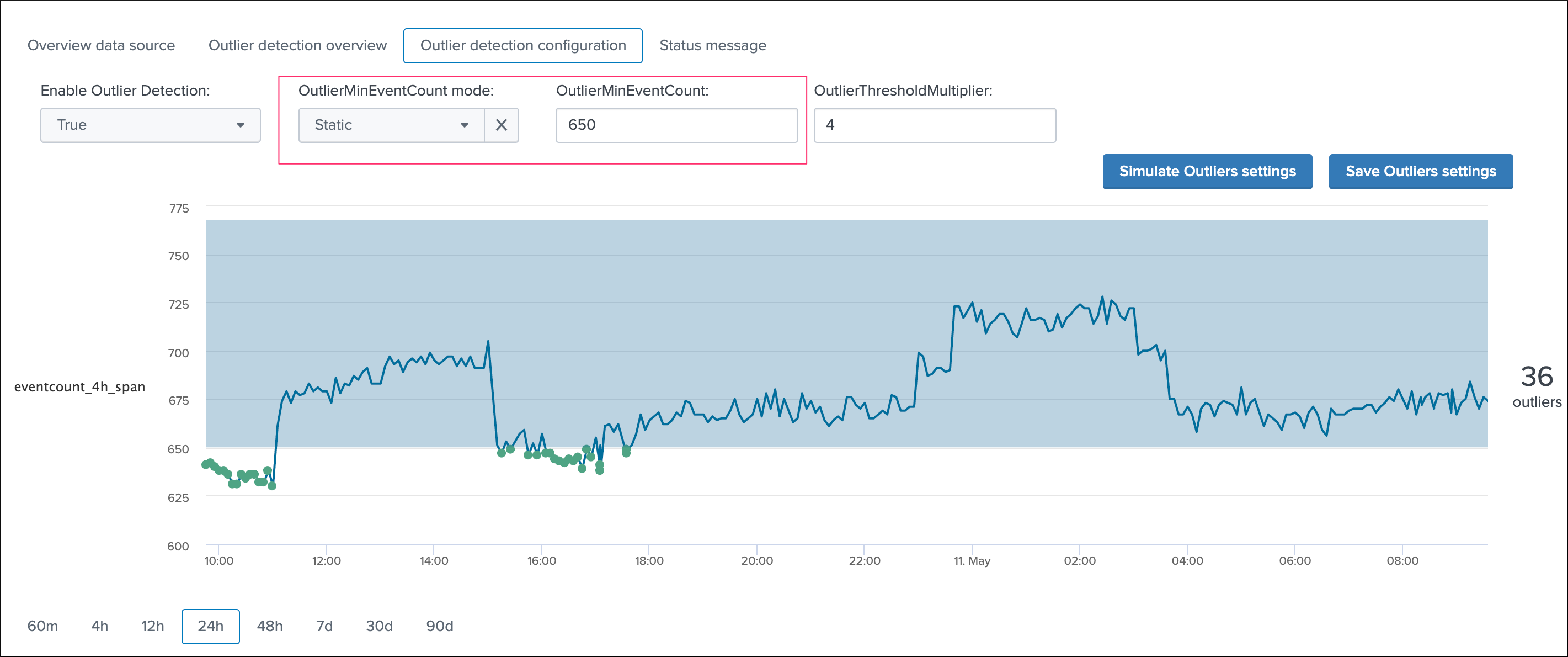

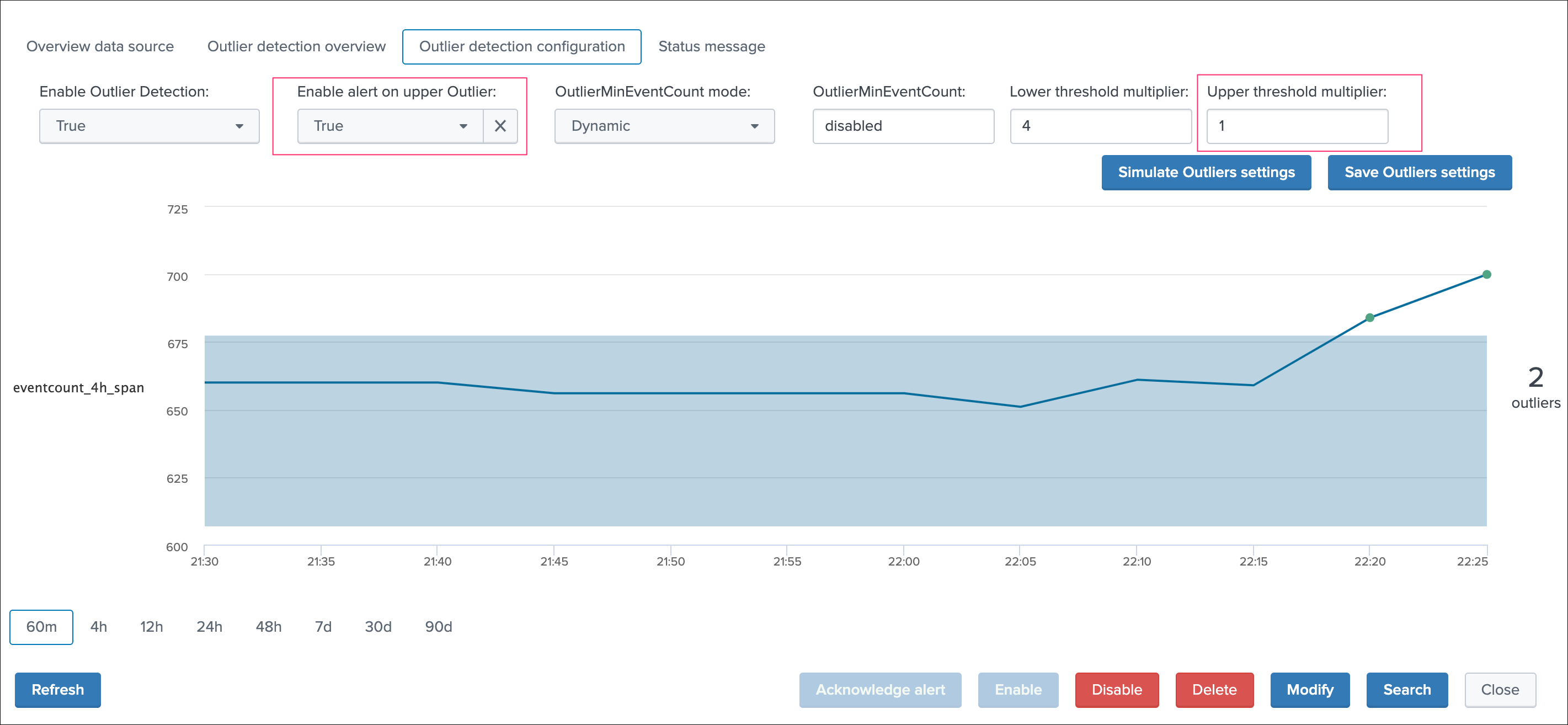

Outlier detection configuration¶

This is the screen provided to configure the outliers detection for a given entity, which exposes a simulation of the results over time, allowing you to train your settings before they are applied.

On the top part of the screen you will interact with the settings exposes in the previous section:

Enable Outlier Detection:you can choose to disable the Outliers detection for a given entity, default is enabledEnable alert on upper Outlier:you can choose to alert on upper outliers detection, default is falseOutlierMinEventCount mode:you can choose to let the workflow defining dynamically the lower bound value, or define yourself a static threshold if you need itOutlierMinEventCount:static lower bound value if static threshold is usedLower threshold multiplier:the multiplier for the lower band calculation, must be a numerical value which will impact the lower bound calculation (the lower the multiplier is, the closer to the actual standard deviation the calculation will be)Upper threshold multiplier:the multiplier for the upper band calculation, must be a numerical value which will impact the upper bound calculation (the lower the multiplier is, the closer to the actual standard deviation the calculation will be)

Finally, there are two time related settings to interact with:

time period for outliers detectiondefines the time frame TrackMe will be looking at for the outliers calculations (lower/upper bands etc) which is using the recorded metrics every time the short term trackers ranspan for outliers renderingis an additional setting which impact the graphical rendering within the outliers screen, but not the results of the outliers detection itself

See Outliers detection and behaviour analytic for more details about the feature.

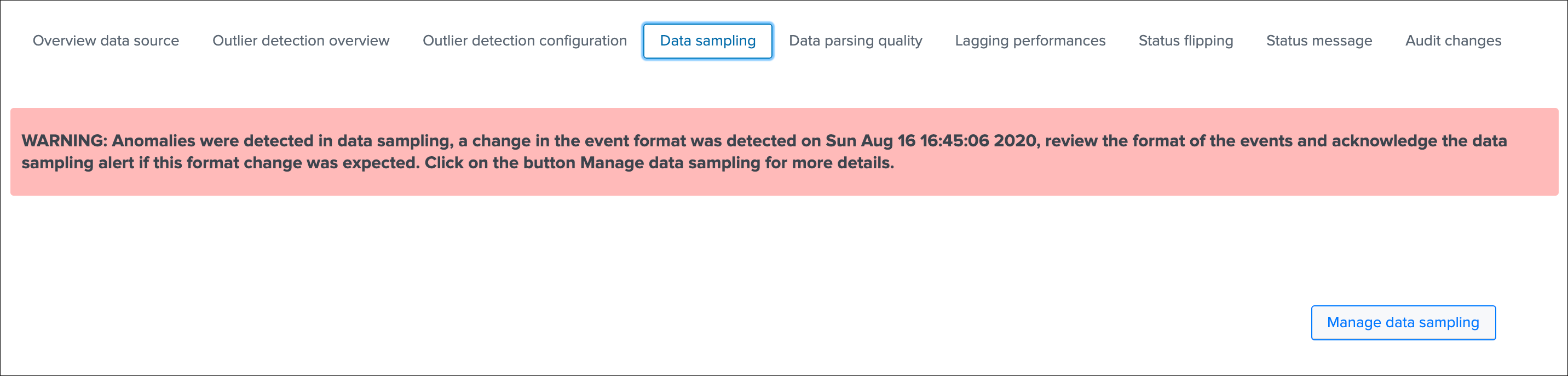

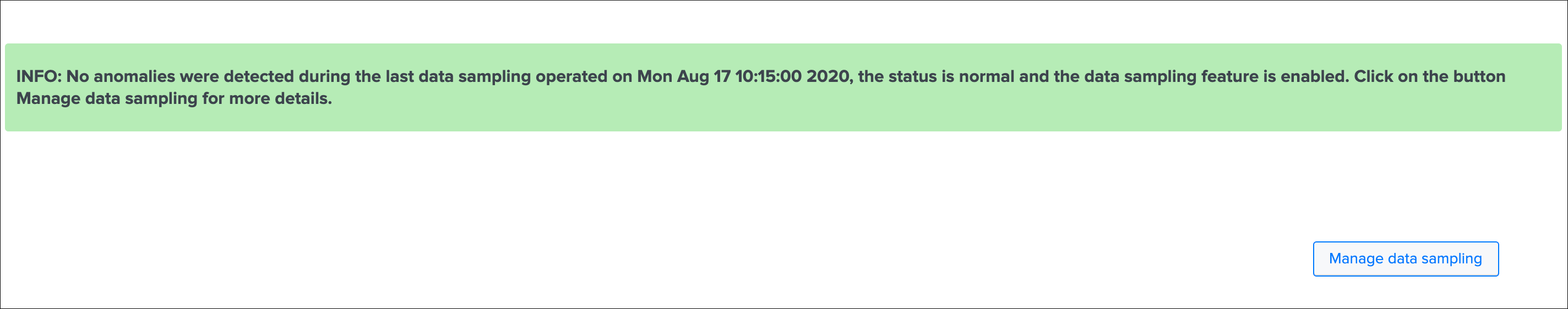

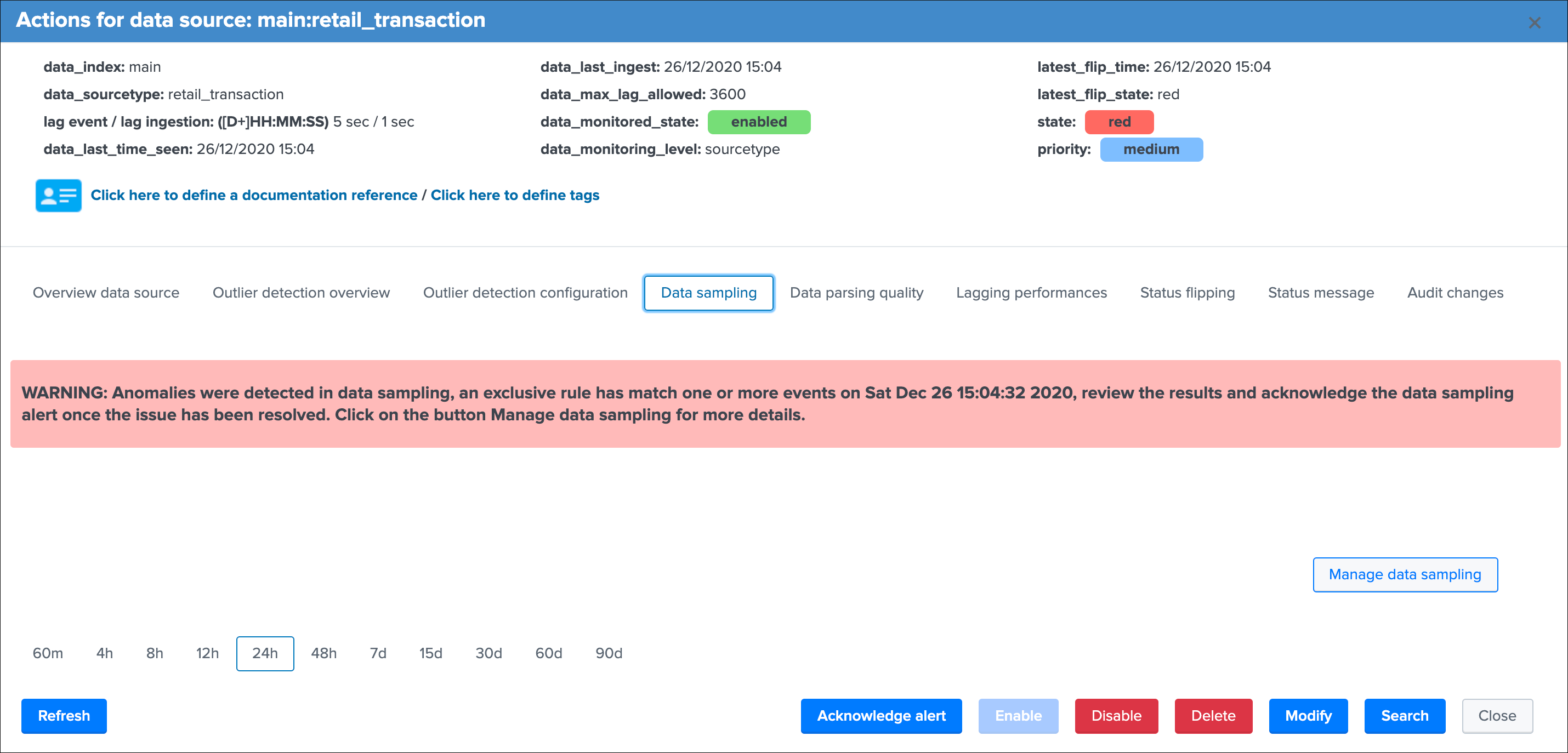

Data sampling¶

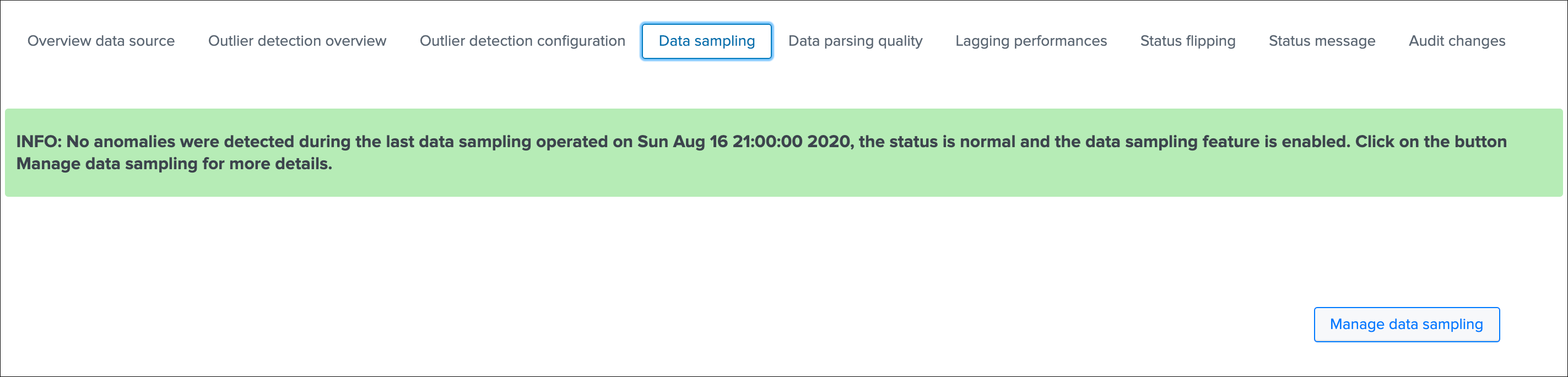

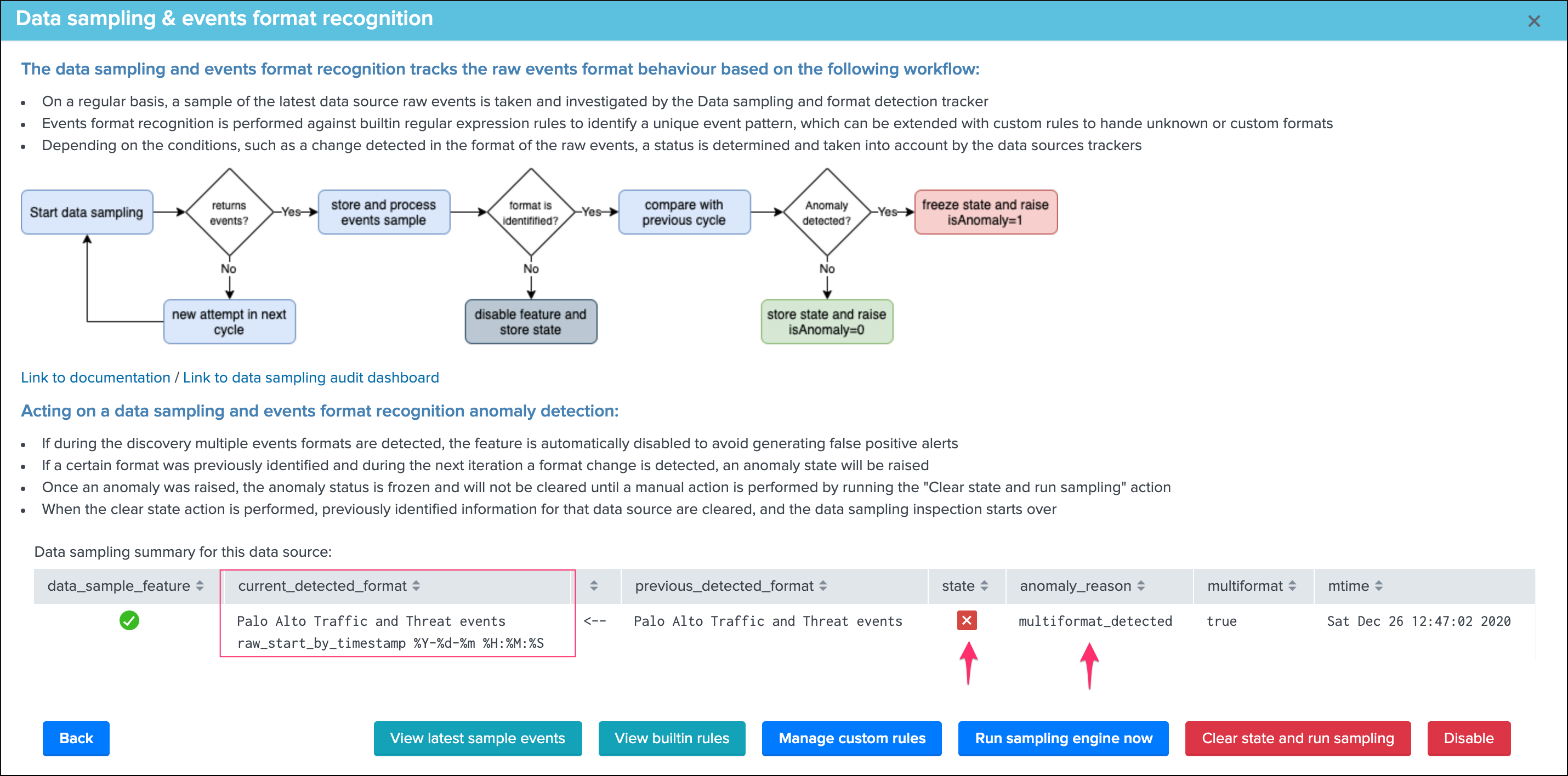

The data sampling tab exposes the status of the data sampling and format recognition engine:

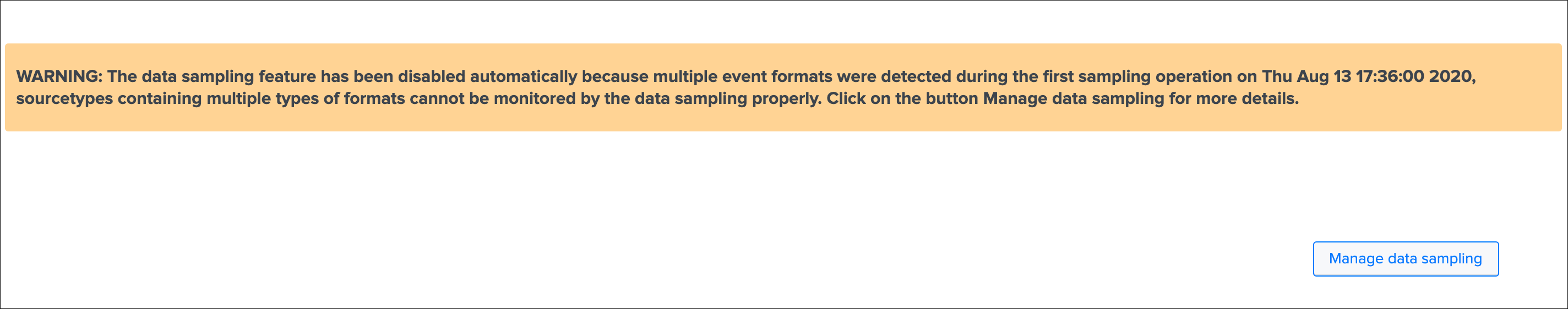

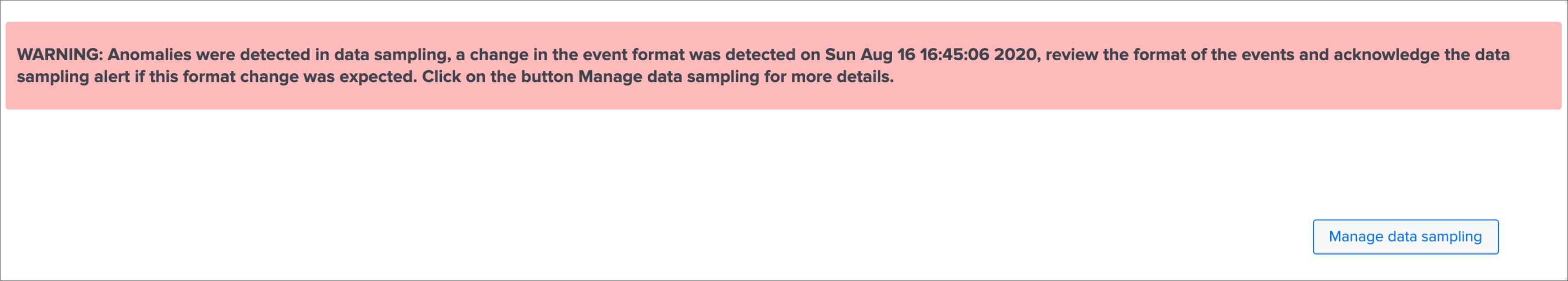

The data sampling message can be:

green:if no anomalies were detectedblue:if the data sampling did not handle this data source yetorange:if conditions do not allow to handle this data source, which can be multi-format detected at discovery, or no identifiable event formats (data sampling will be deactivated automatically)red:if anomalies were detected by the data engine, anomalies can be due to a change in the event format, or multiple events formats detected post discovery

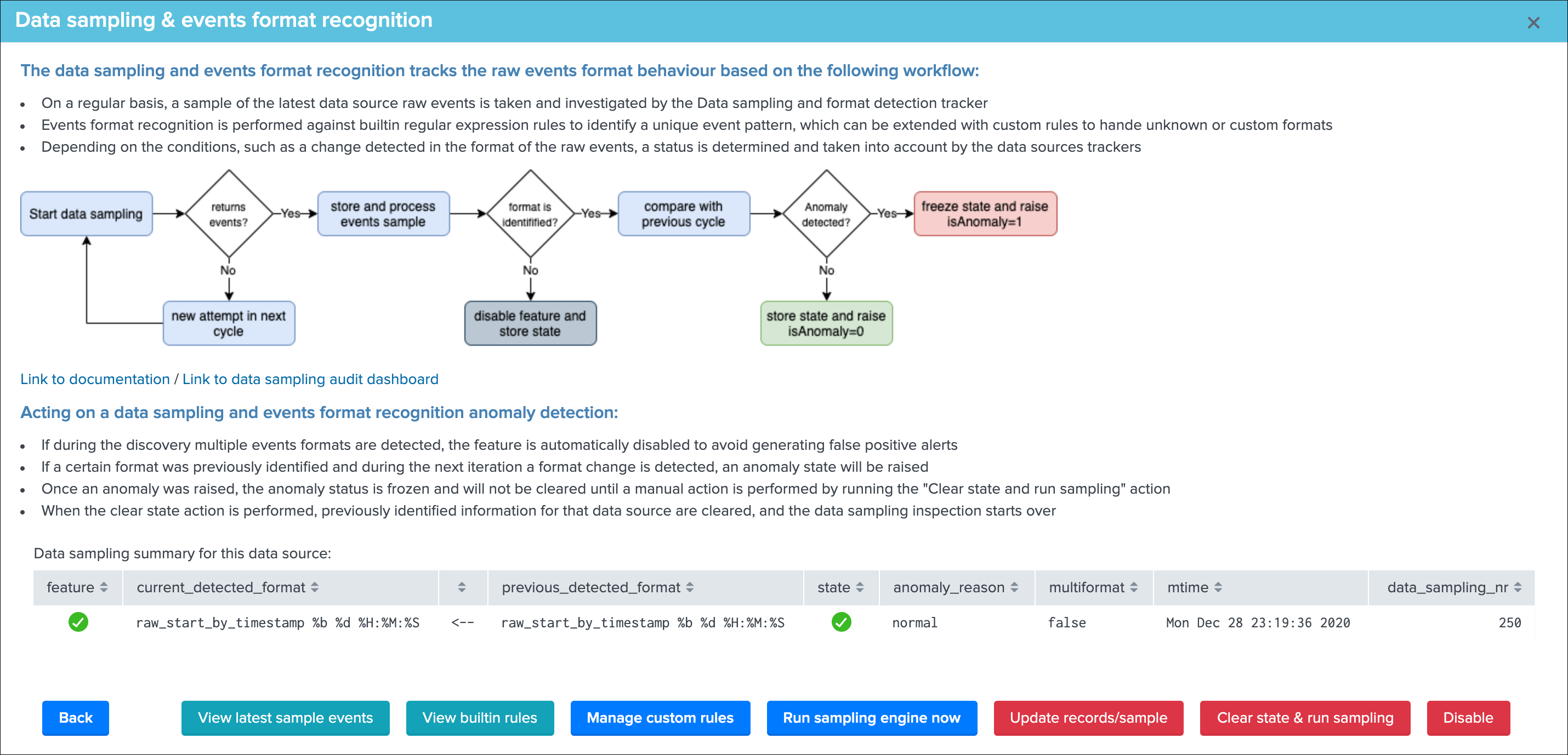

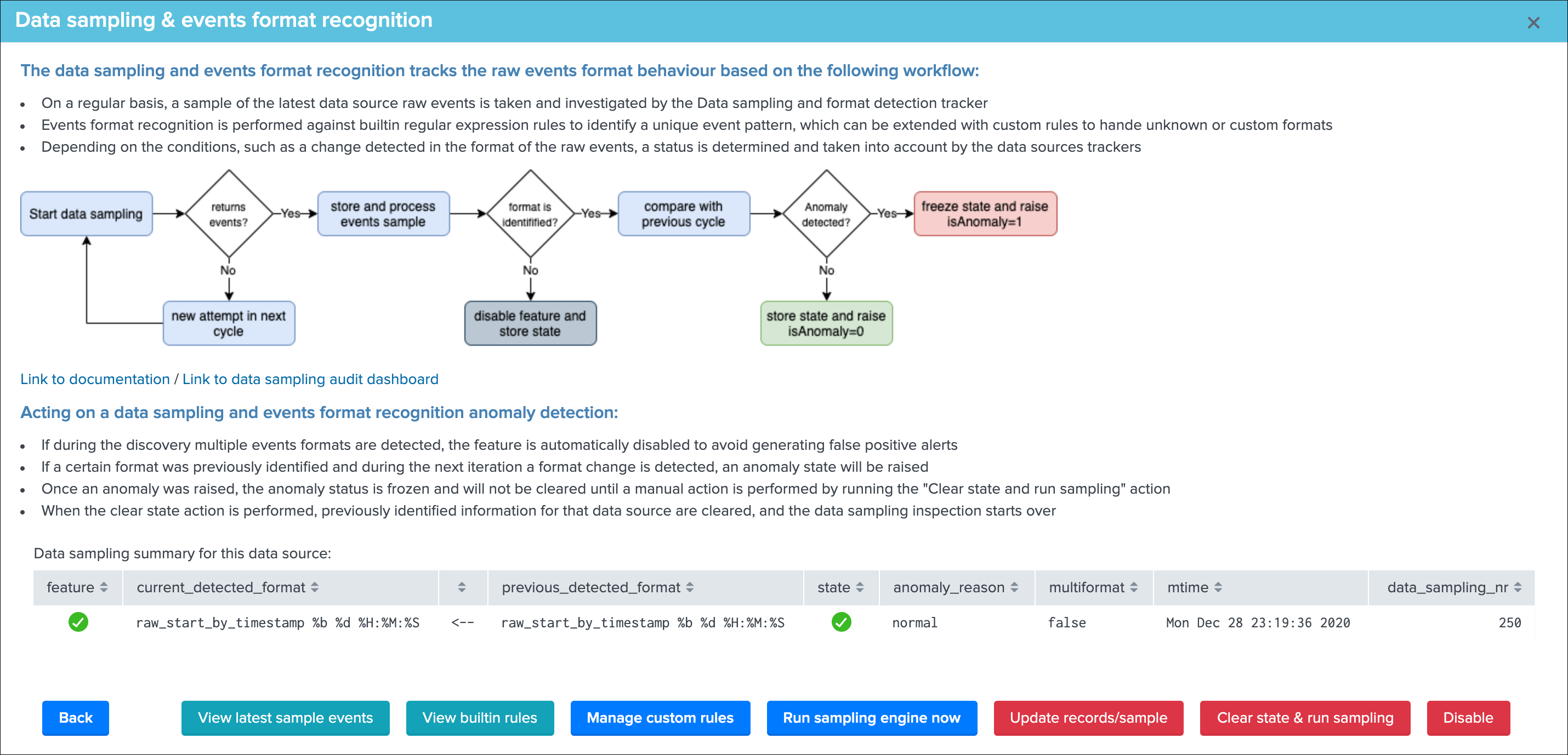

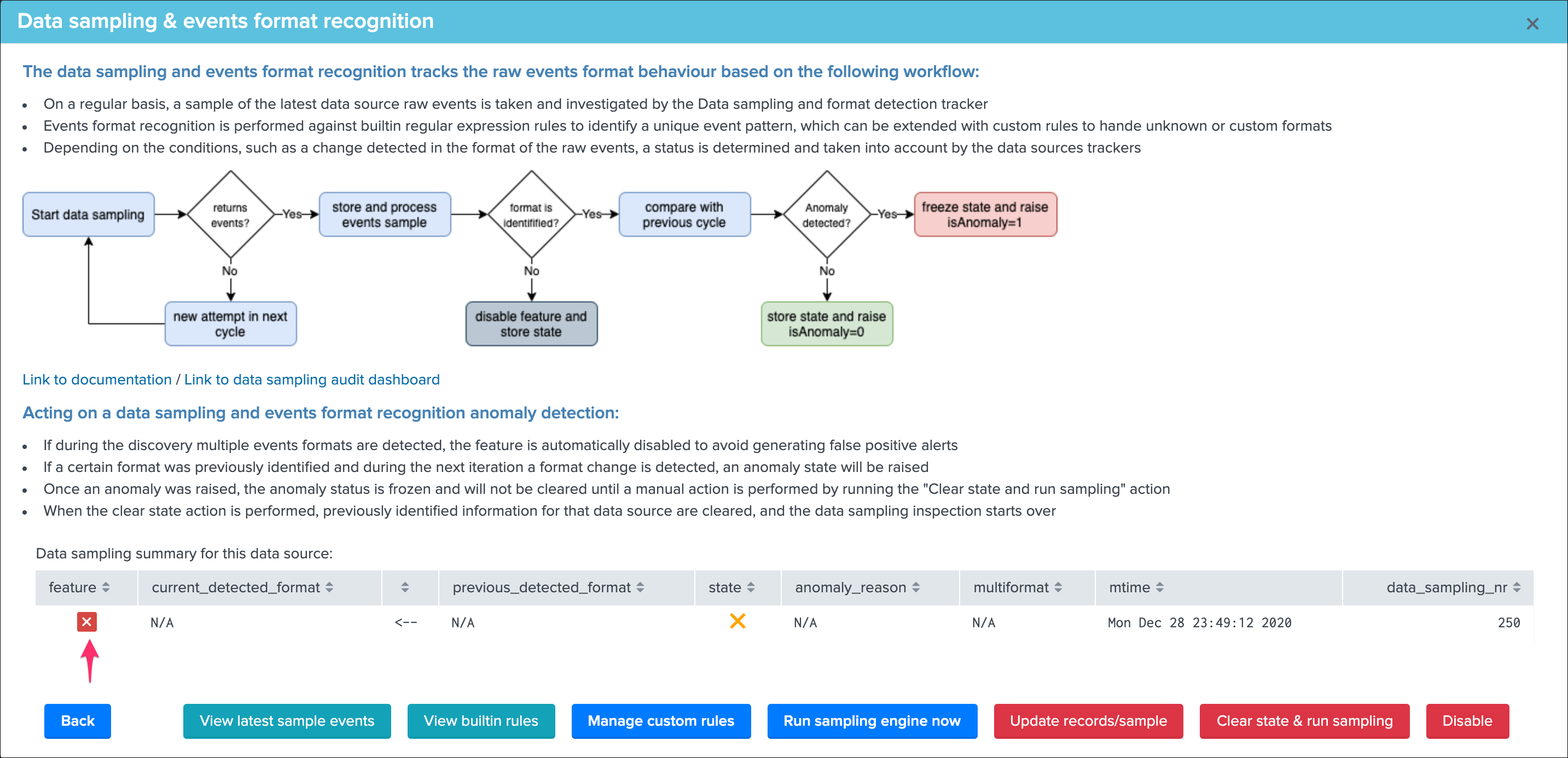

The button Manage data sampling provides summary information about the data samping status and access to data sampling related features:

Quick button access:

View latest sample events:open in search access to the last sample of raw events that were processed (raw events and identified format)View builtin rules:view the builtin rules (builtin rules are regular expressions rules provided by default)Manage custom rules:view, create and delete custom rules to handle any format that would not be recognized by the builtin rulesRun sampling engine now:runs the sampling engine now for this data sourceClear state and run sampling:clears the previously known states and run the sampling engine as it was the first time the engine handles this data source

See Data sampling and event formats recognition for more details about the feature.

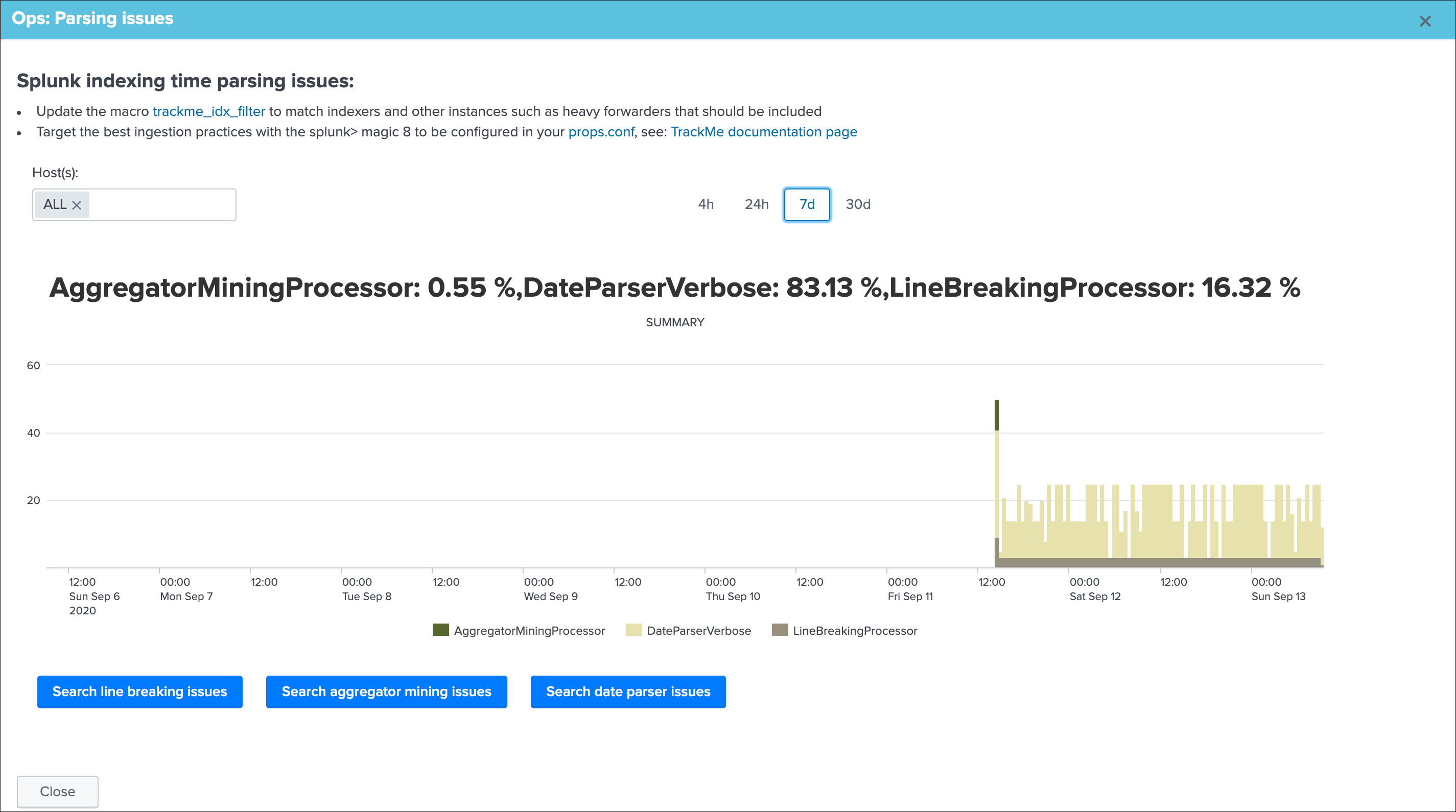

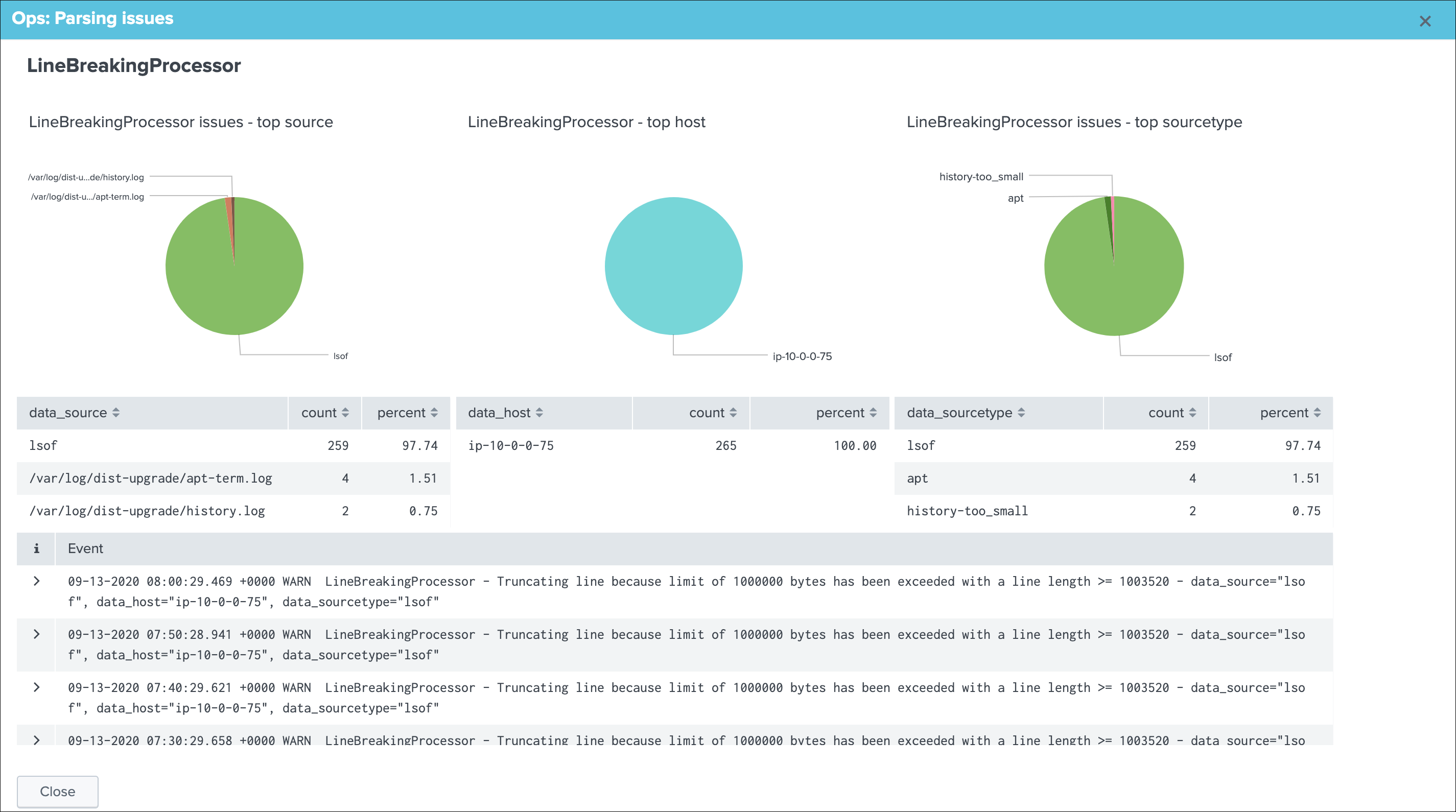

Data parsing quality¶

The data parsing quality screen exposes if there are any indexing time parsing issues found for this sourcetype:

Note: for data sources, the scope of indexing time parsing issues happens on the sourcetype level from a Splunk point of view, this means that if there are any parsing issues found for this sourcetype, this can be linked to this data source but as well with any other data source that looks at the same sourcetype.

Under normal conditions, this screen should not show any parsing errors, if there are any, these should be fixed.

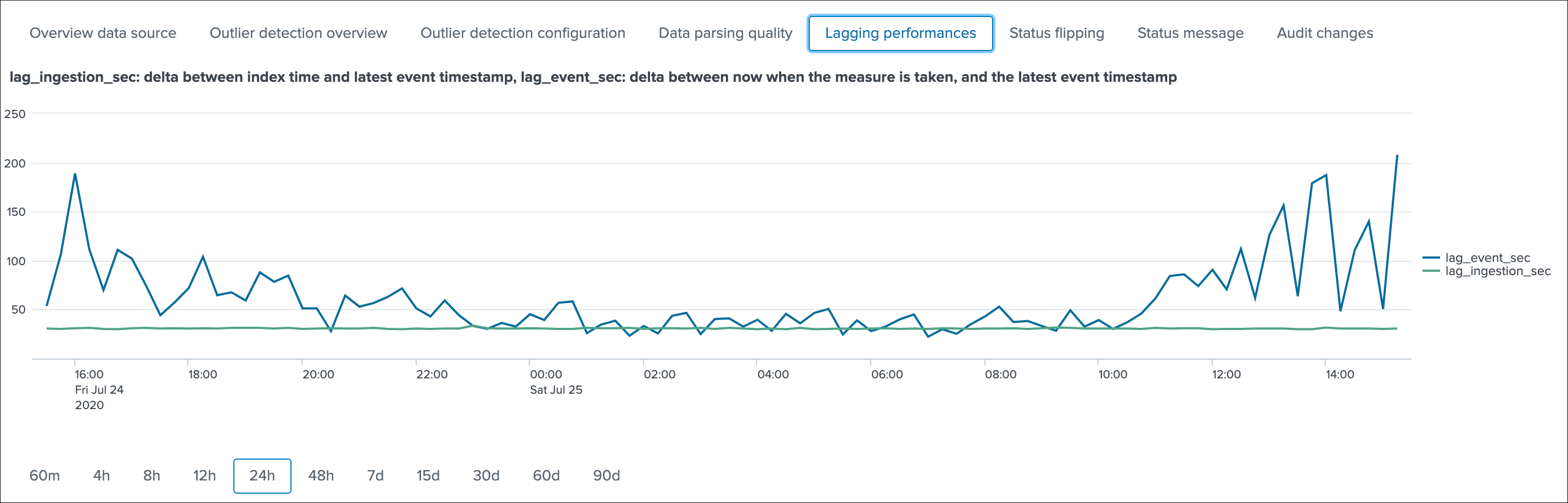

Lagging performances¶

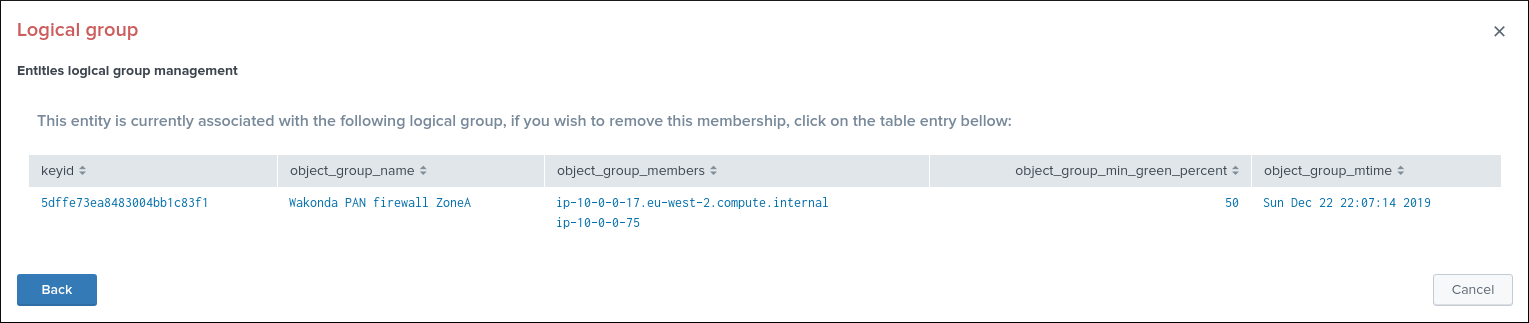

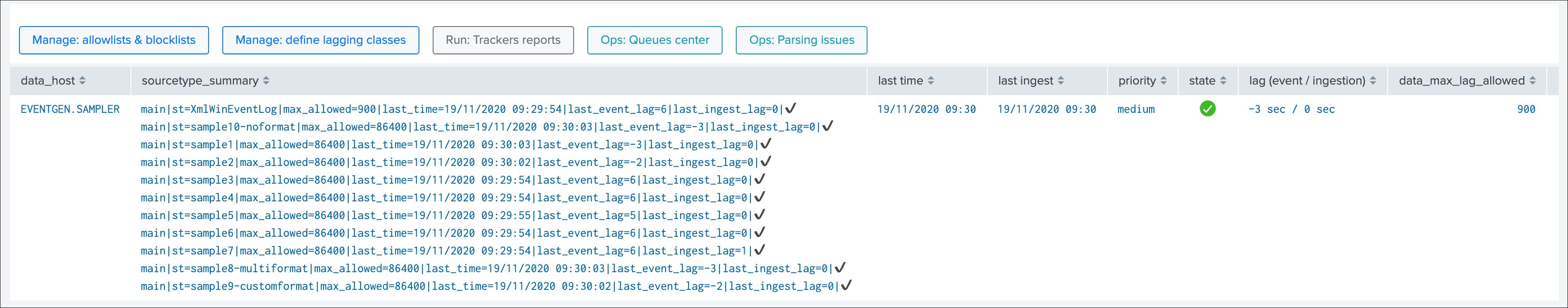

This screen exposes the event and ingestion lagging metrics that have been recorded each time the short trackers ran, these metrics are stored via a call to the mcollect command and stored into a metric store index:

The following mcatalog search can be used to expose the metrics stored in the metric store and the dimensions:

| mcatalog values(metric_name) values(_dims) where index=* metric_name=trackme.*

The main dimensions are:

object_categorywhich represents the type of entities, being data_source or data_hostobjectwhich is the entity unique identifier, data_name for data sources, data_host for data hosts

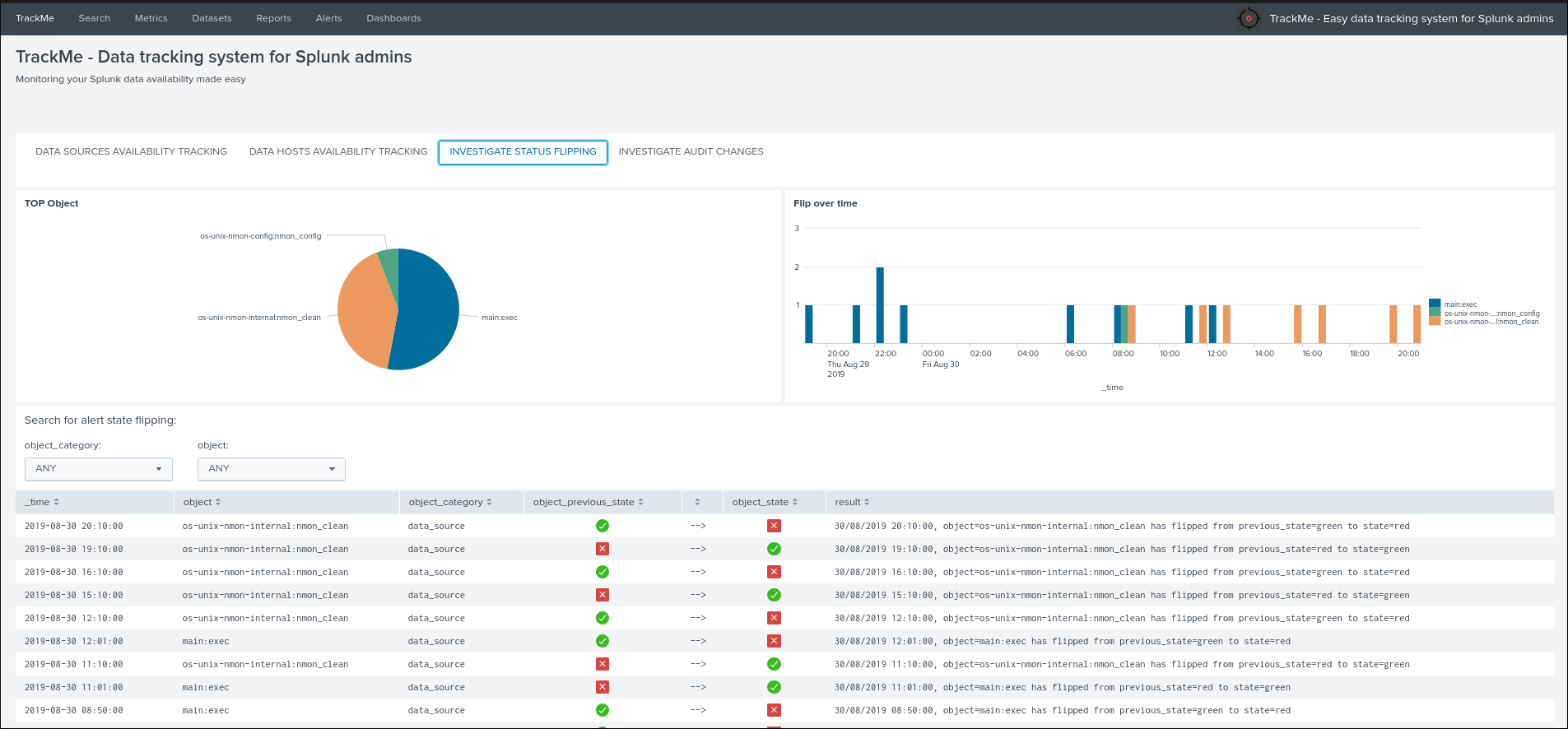

Status flipping¶

This screen exposes all the flipping status events that were recorded for that entity during the time period that is selected:

Key information:

- Anytime an entity changes from a state to another, a record is generated and indexed in the summary index

- When an entity is first added to the collection during its discovery, the origin state will be discovered

- The target state is the state (green / red and so forth) that the entity has switched to

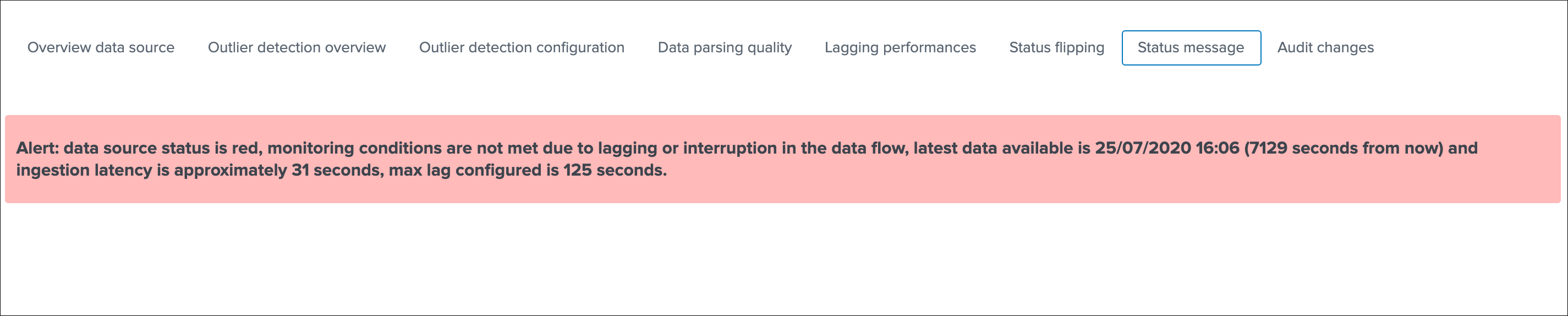

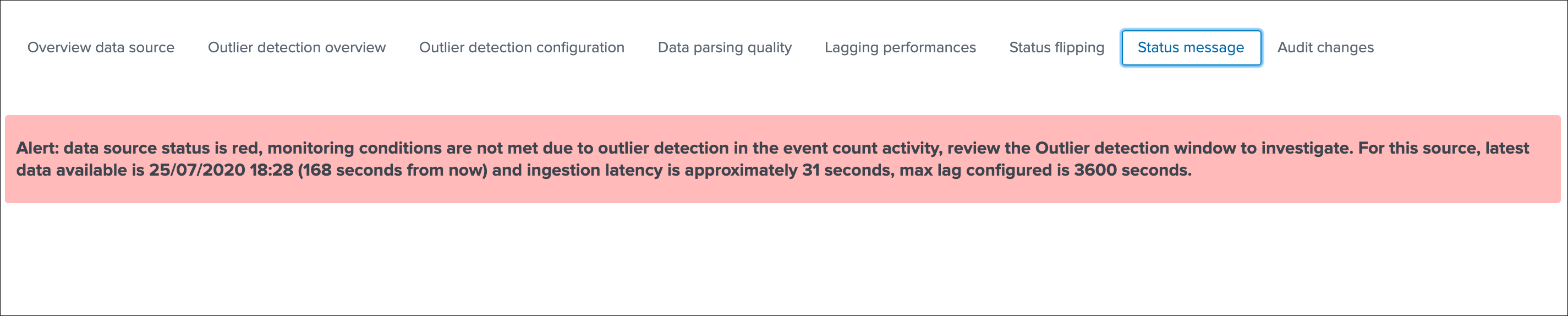

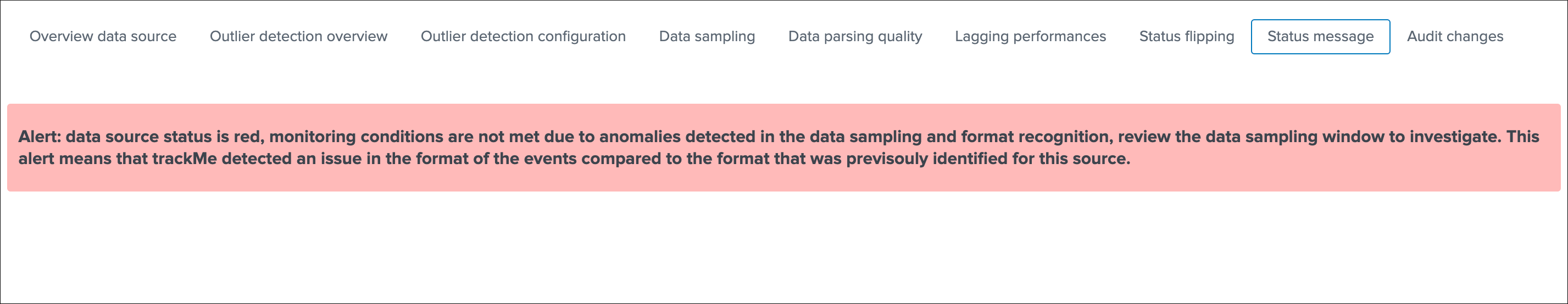

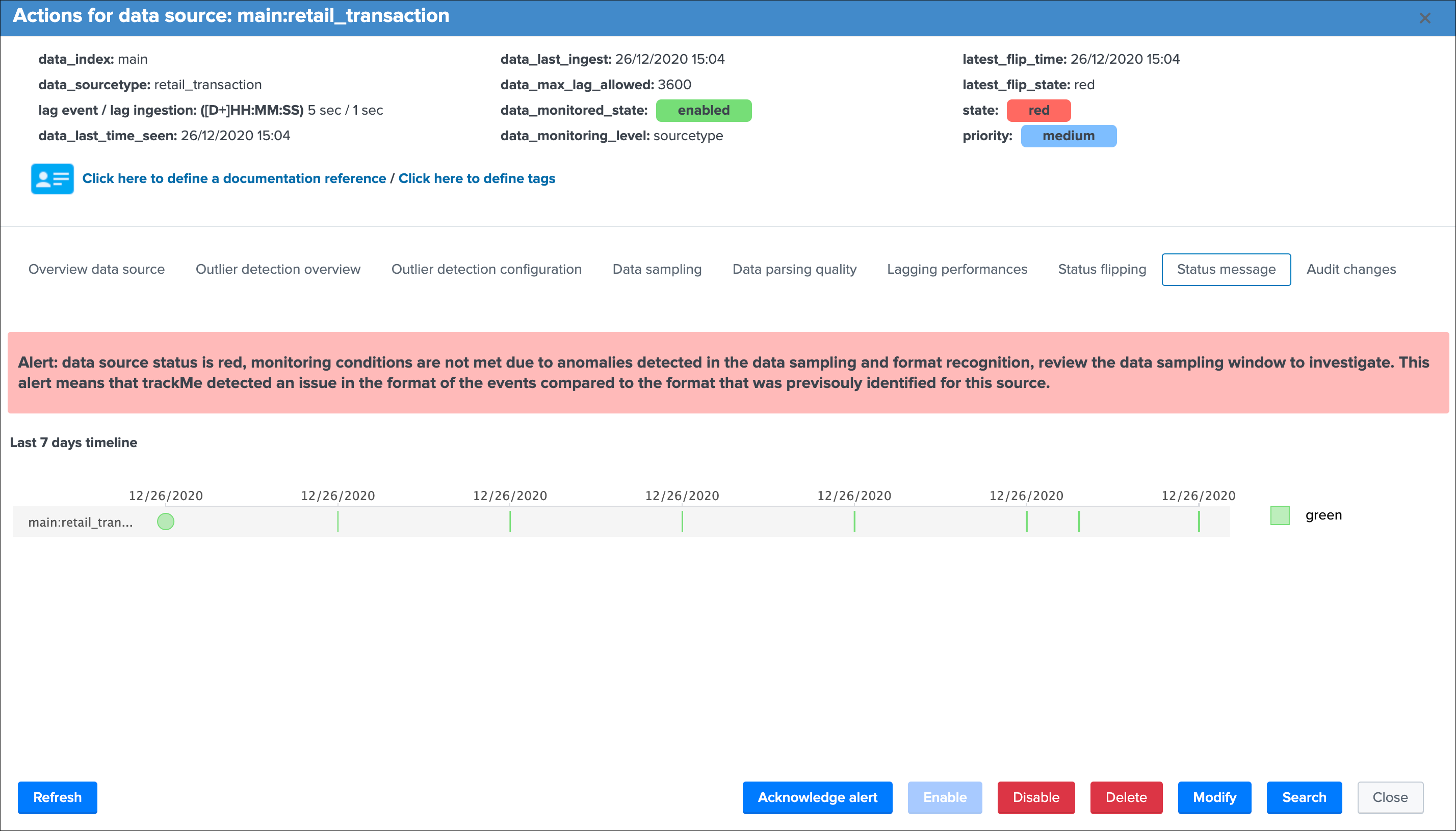

Status message¶

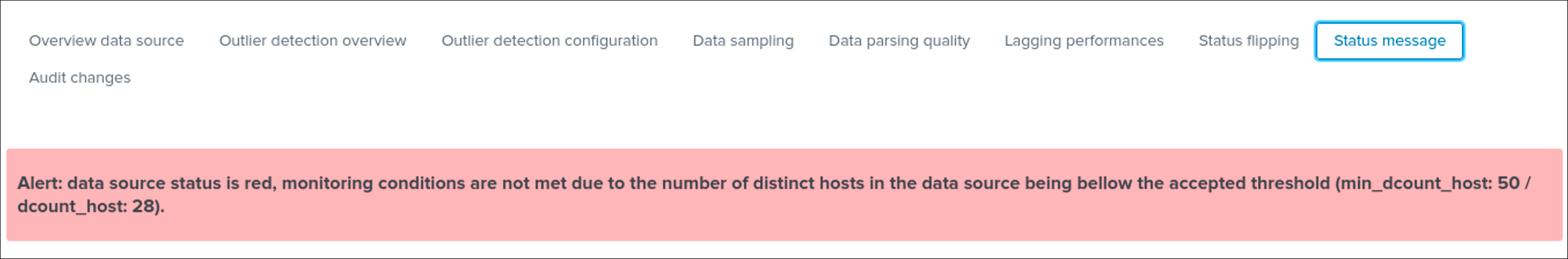

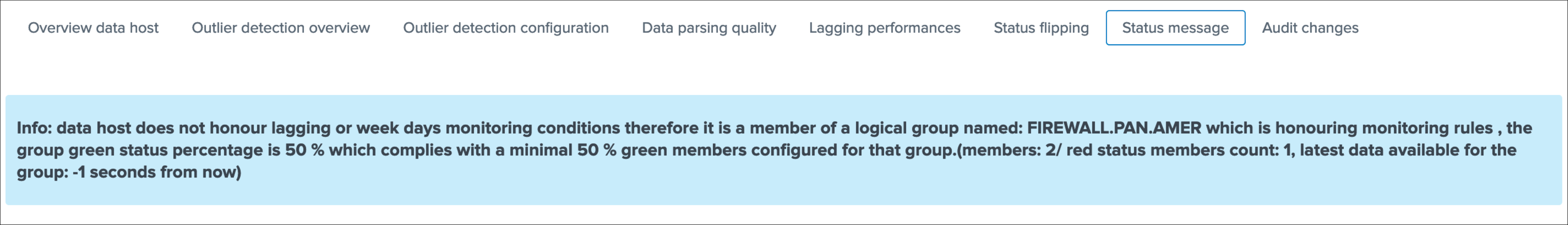

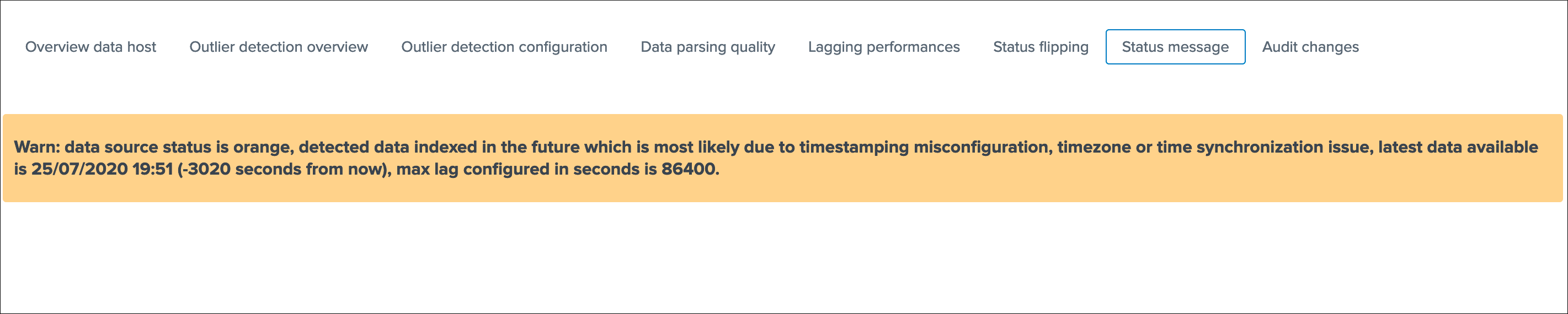

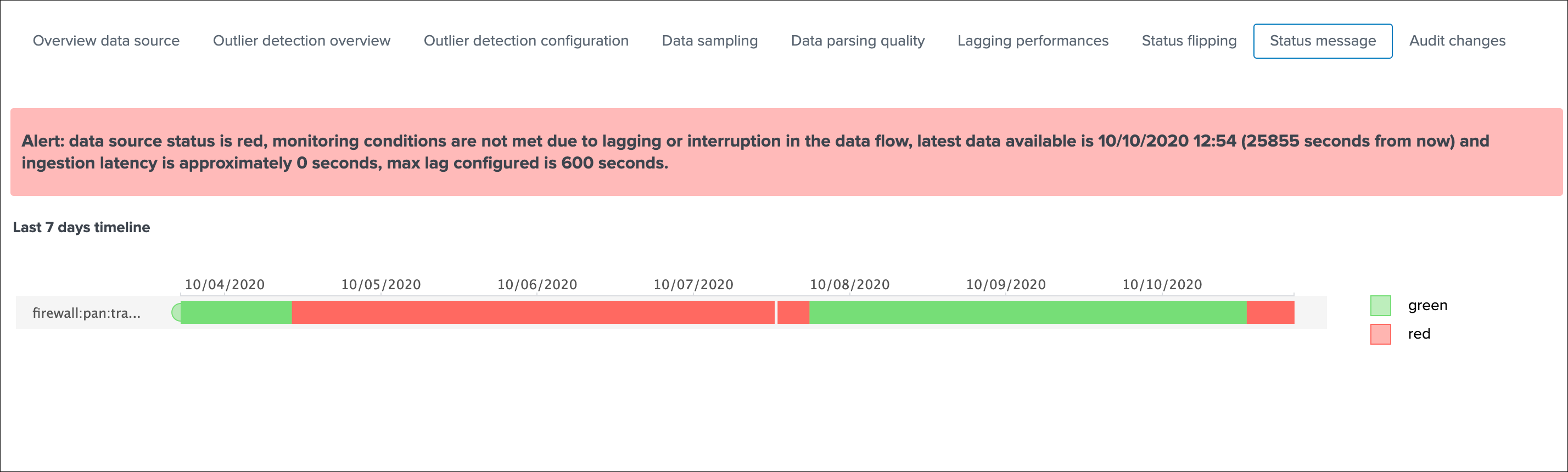

This screen exposes a human friendly message describing the current state of the entity, depending on the conditions the message will appear as green, red, orange or blue:

example of a green state:

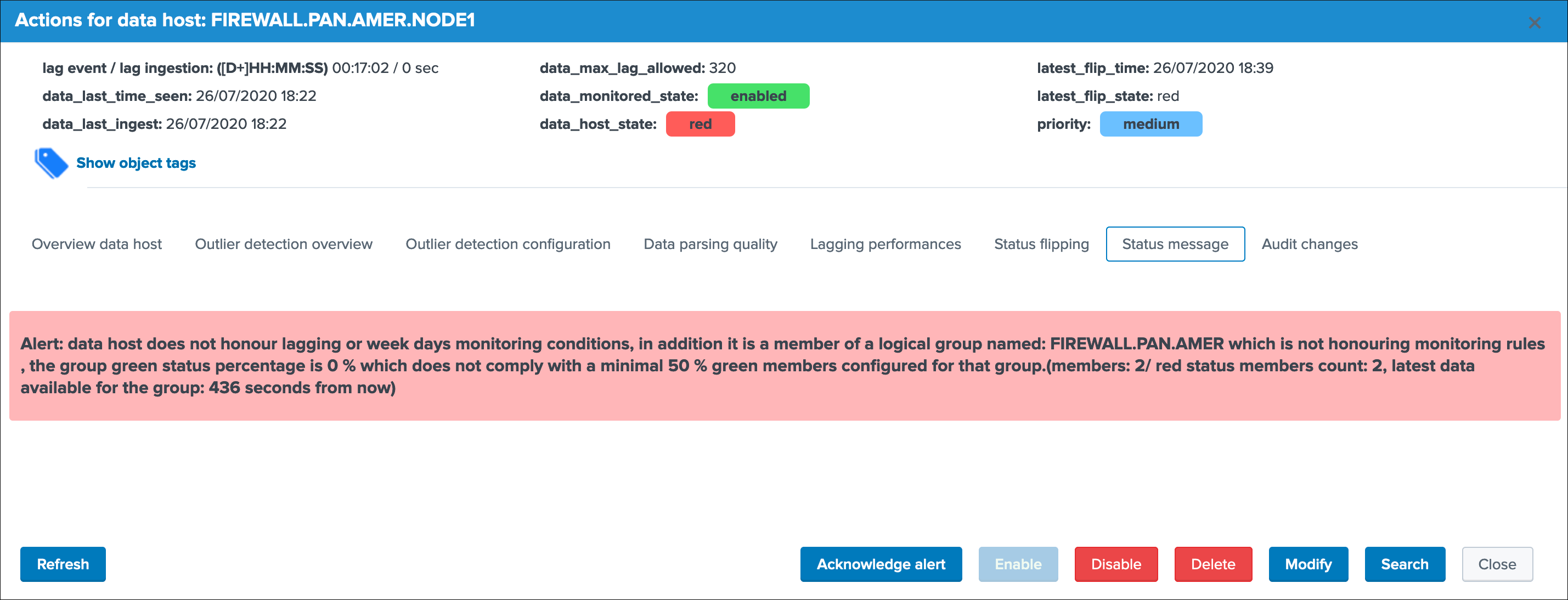

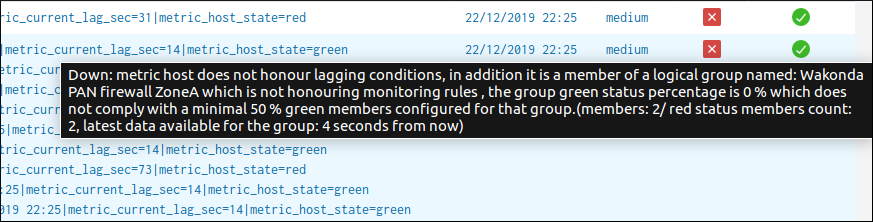

example of a red state due to lagging conditions not met:

example of a red state due to outliers detection:

example of a red state due to data sampling anomalies detected:

example of a red state due to hosts dcount threshold not reached:

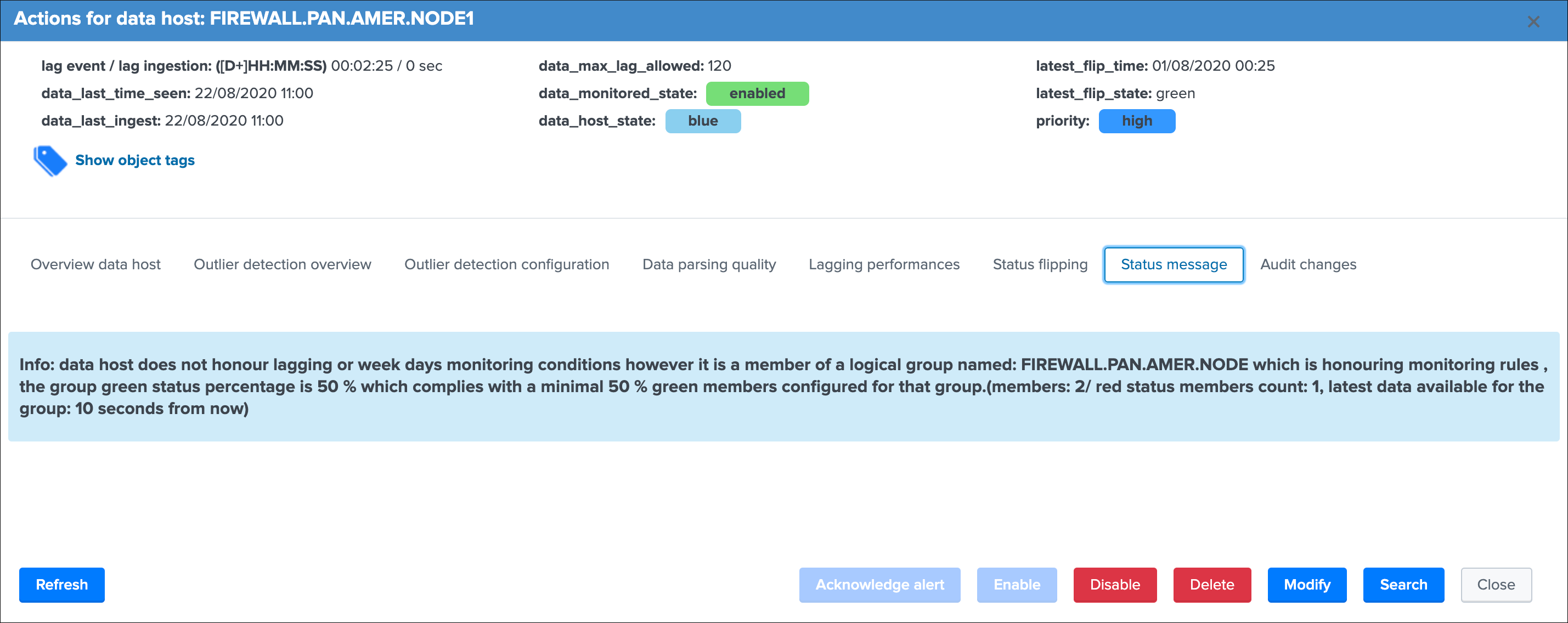

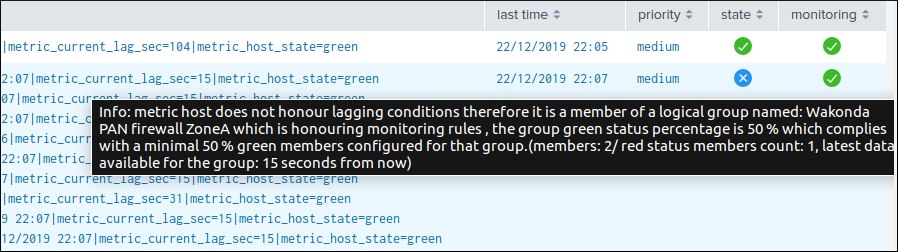

example of a blue state due to logical groups monitoring conditions not met (applies to data hosts and metrics hosts only):

example of an orange state due to data indexed in the future:

In addition, an integration using the timeline custom view provides an enhanced overview of the entity status over time:

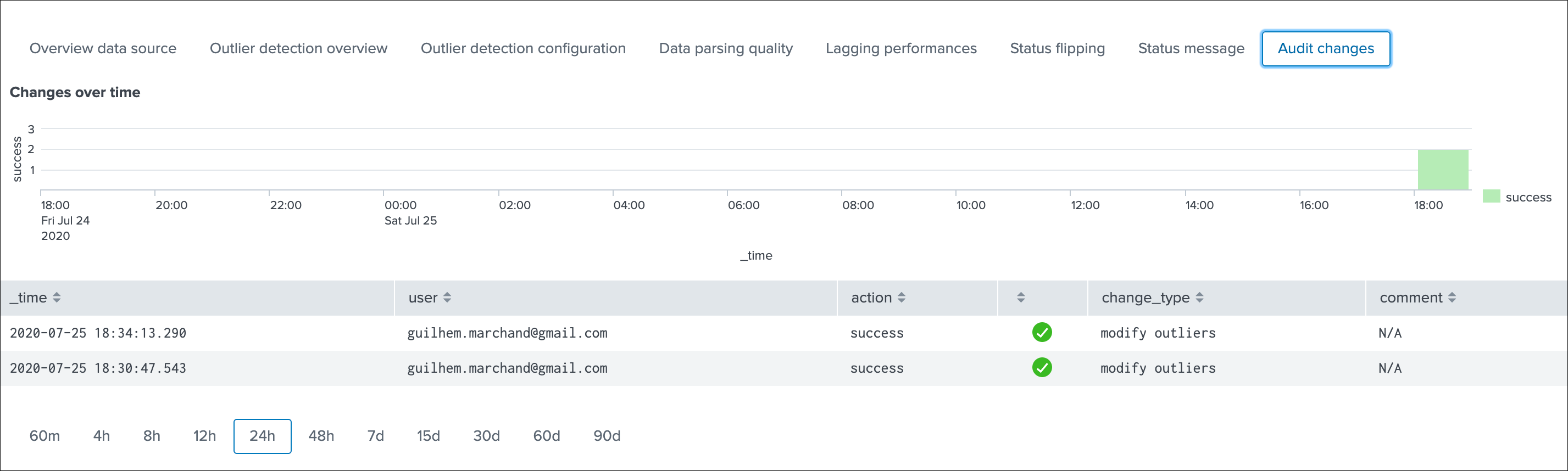

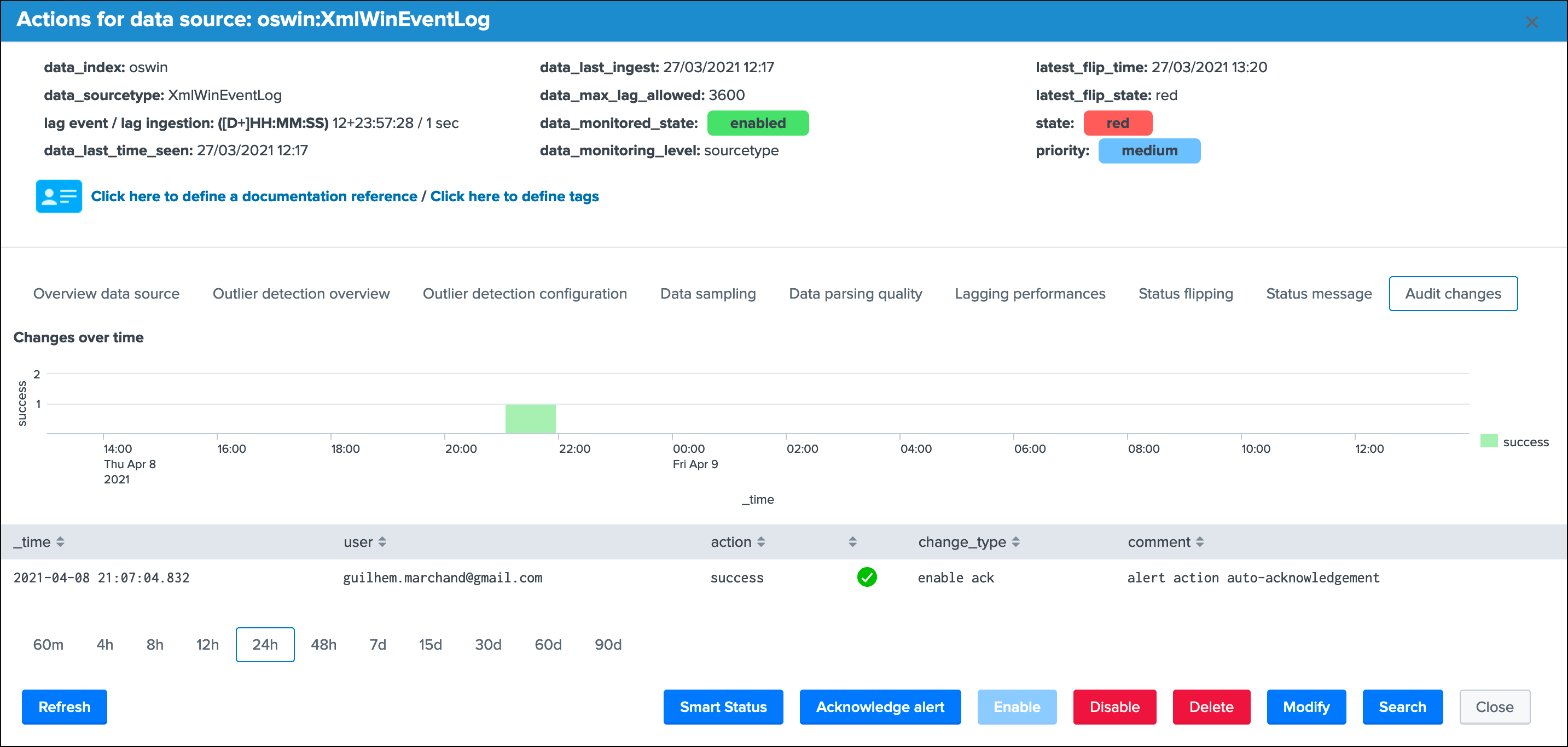

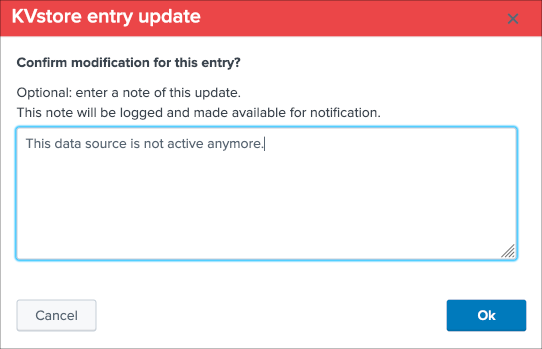

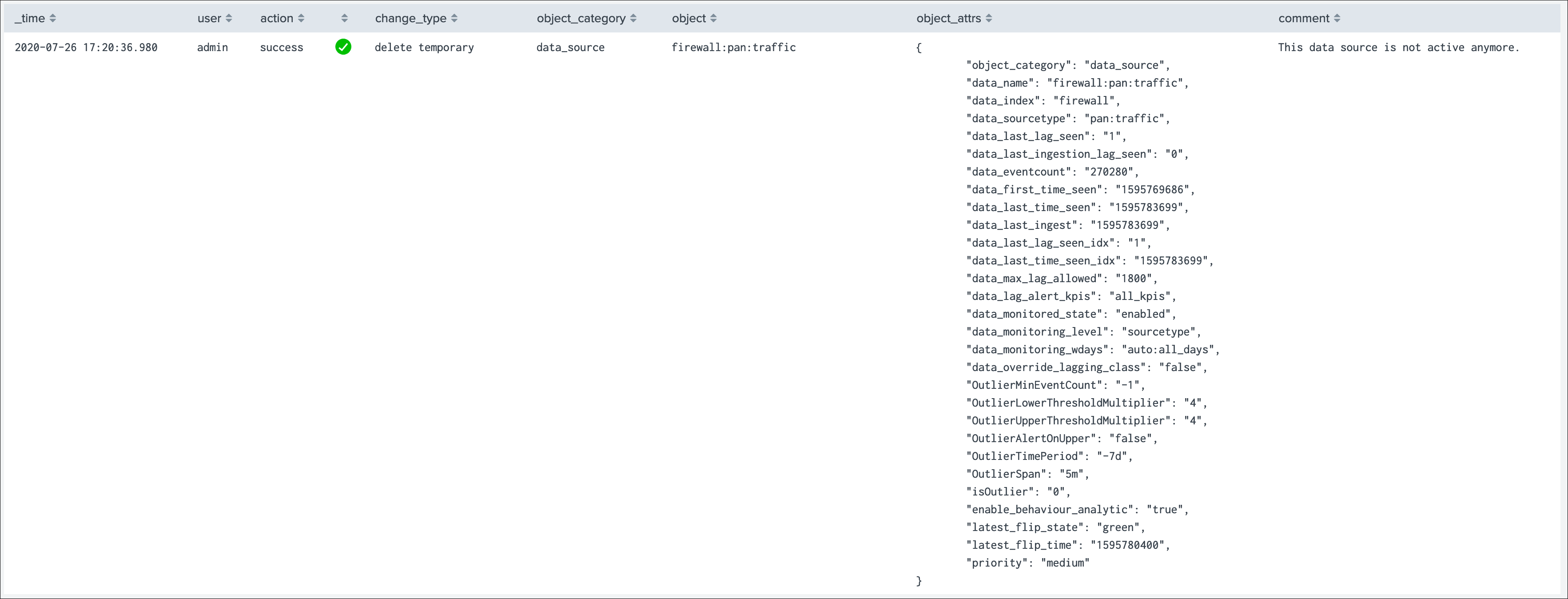

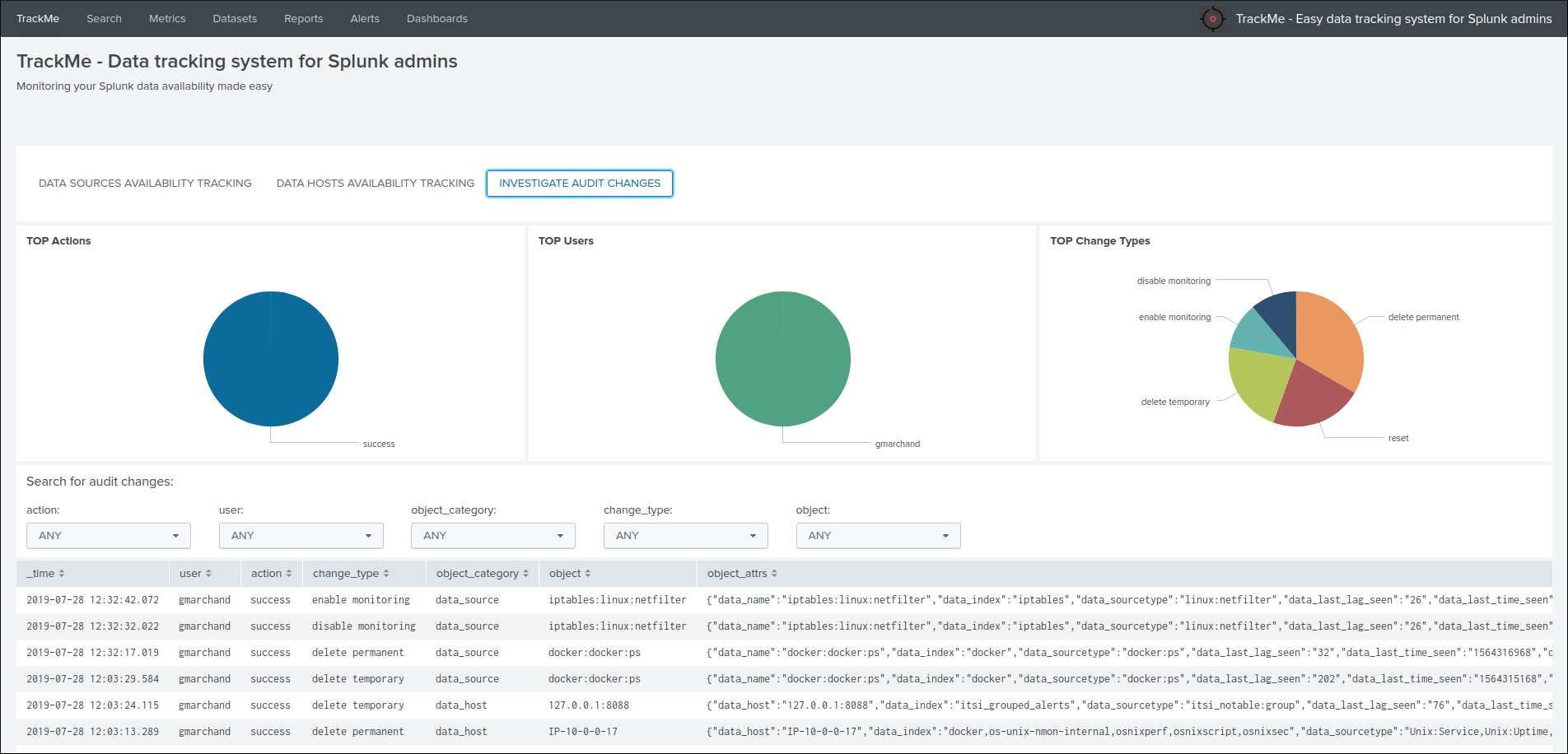

Audit changes¶

This final screen exposes all changes that were applied within the UI to that entity which are systematically recorded in the audit KVstore:

See Auditing changes for more details about the feature.

Action buttons¶

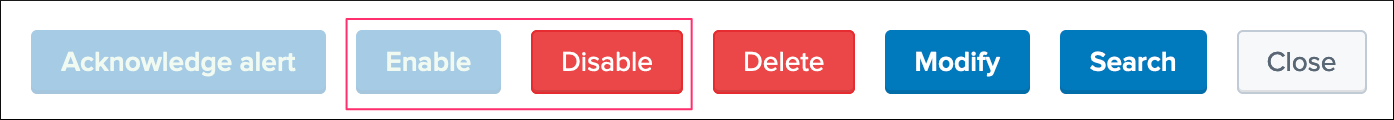

Finally, the bottom part of the screen provides different buttons which lead to different actions:

Actions:

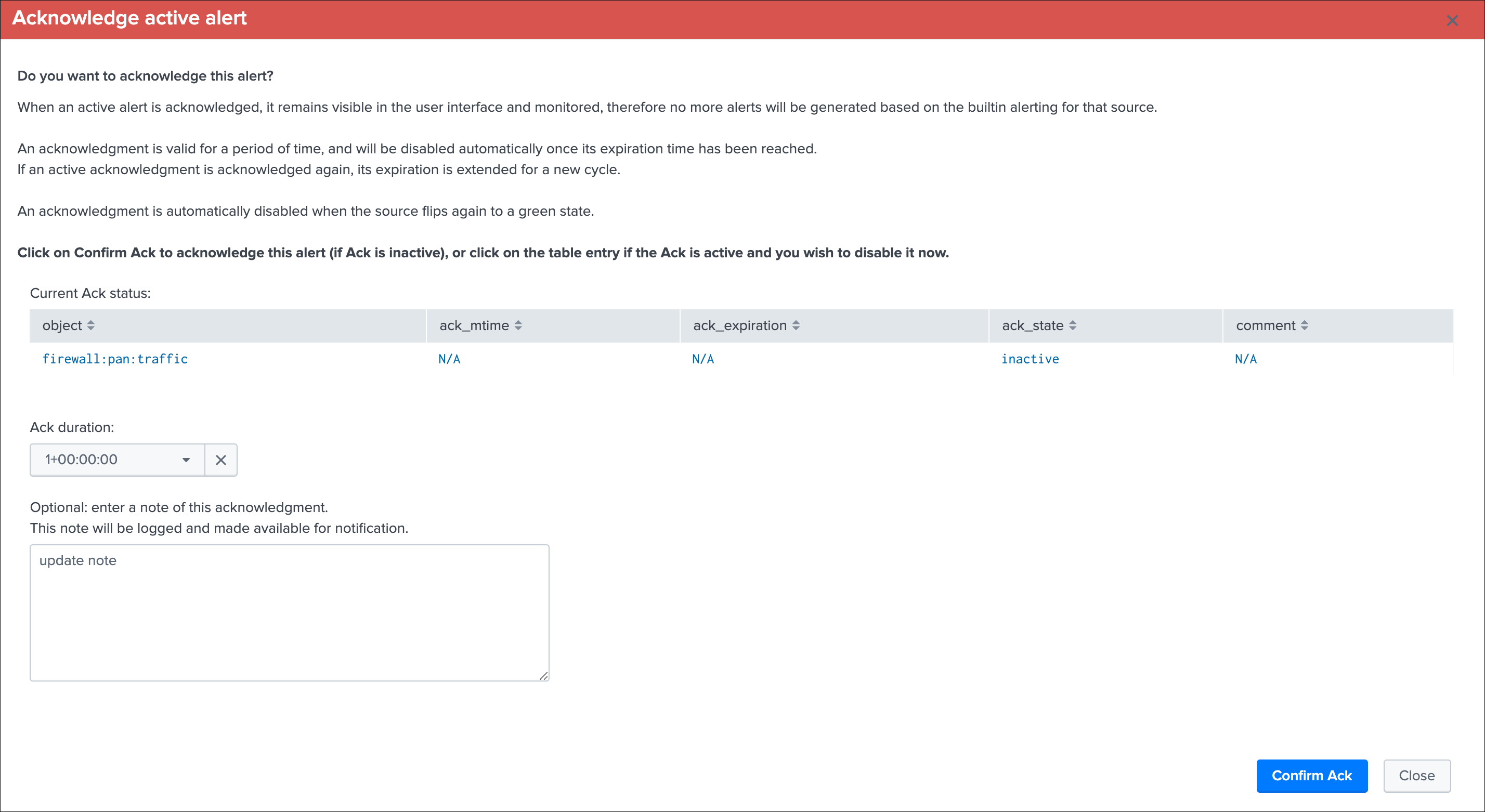

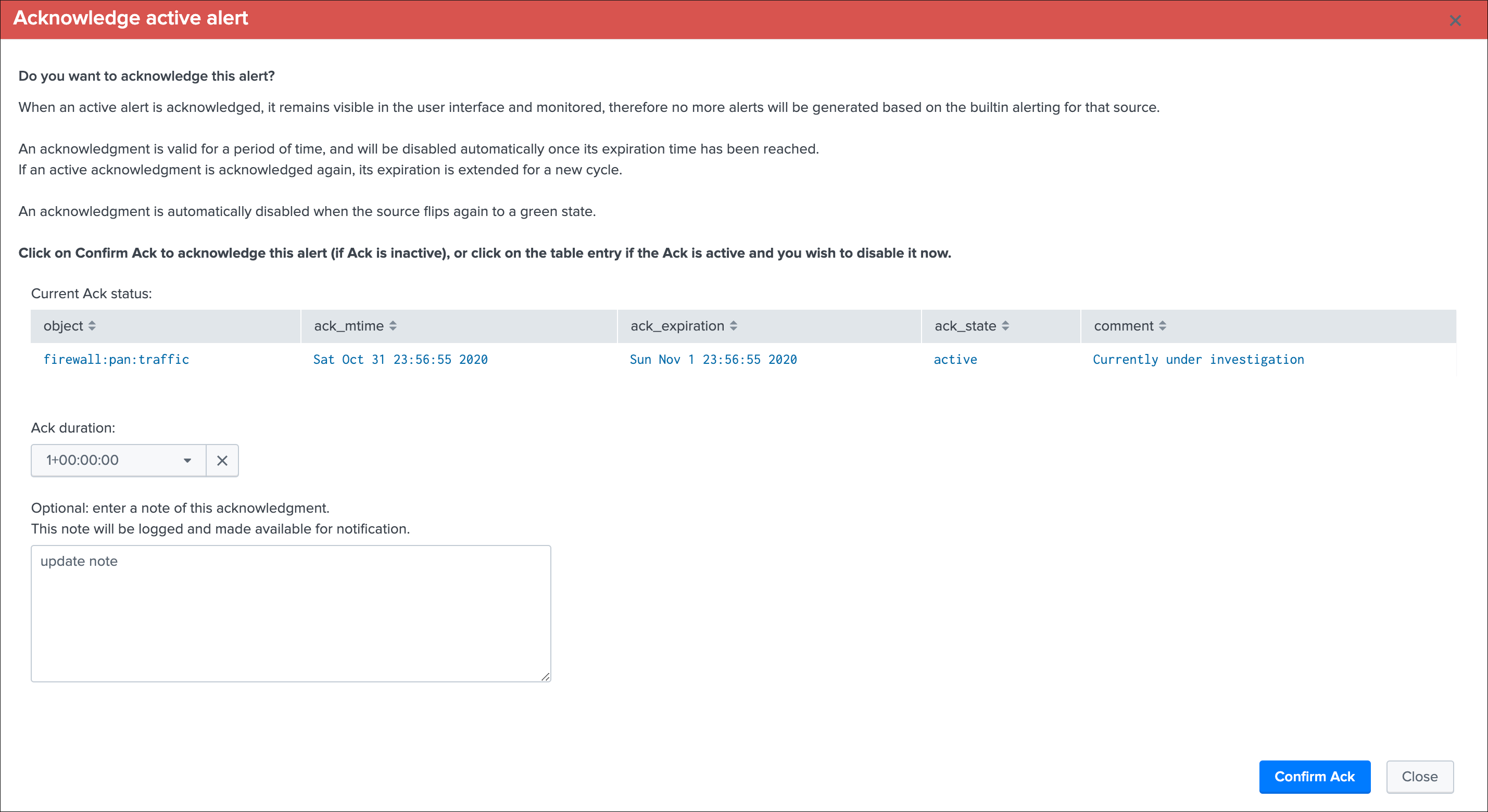

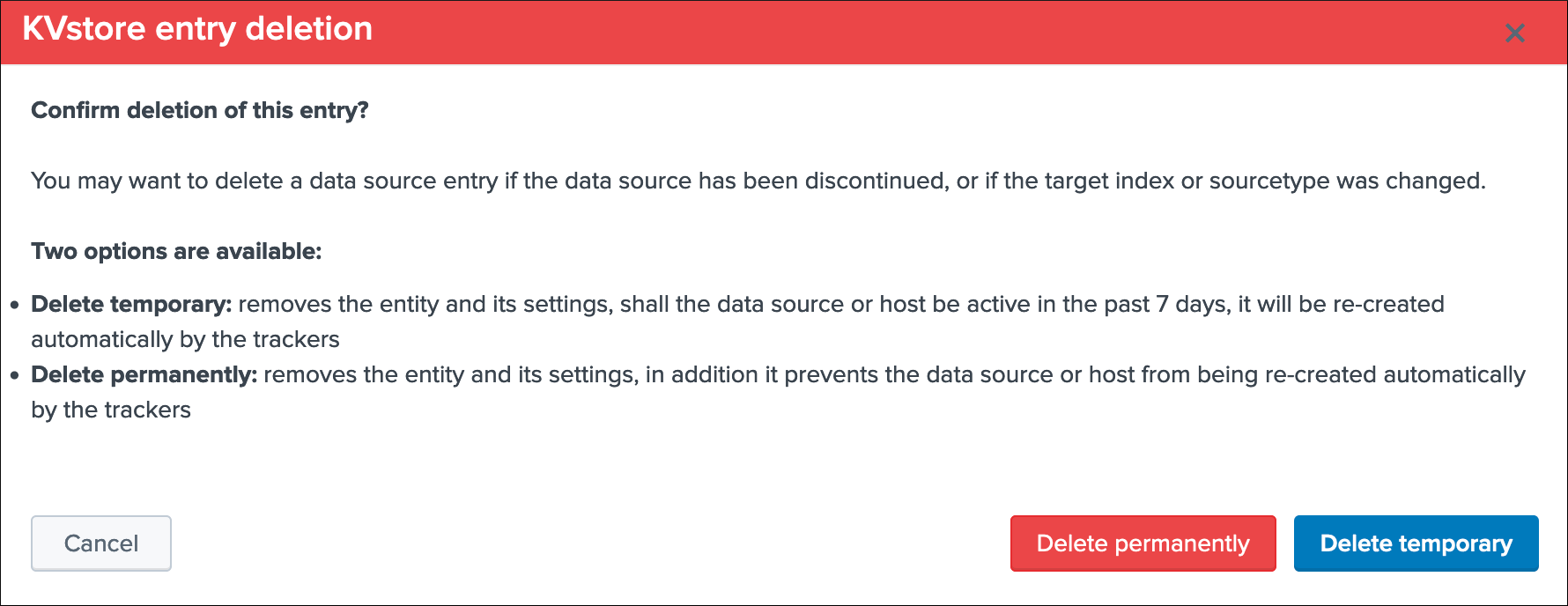

Refreshwill refresh all values related to this entity, it will actually run a specific version of the tracker and update the KVstore record of this data source. Charts and other calculations are refreshed as well.Smart Statusis a powerful TrackMe REST API endpoint that does automated analysis and conditional correlations to provide an advanced status of the entity, and fast the investigaton of an issue root cause.Acknowledge alertcan only be clicked if the data source is effectively in a red state, acknowledging an alert prevent the out of the box alerts from triggering a new alert for this entity until the acknowledgment expires.Enablecan only be clicked if the monitoring state is disabled, if clicked and confirmed, the value of the fielddata_monitored_statewill switch from disabled to enabledDisableopposite of the previousModifyprovides access to the unified modification window which allows interacting with different settings related to this entitySearchopens a search window in a new tab for that entity

See Alerts tracking for more details about the acknowledgment feature and alert related configurations

See Data source unified update for more details about the unified update UI for data sources

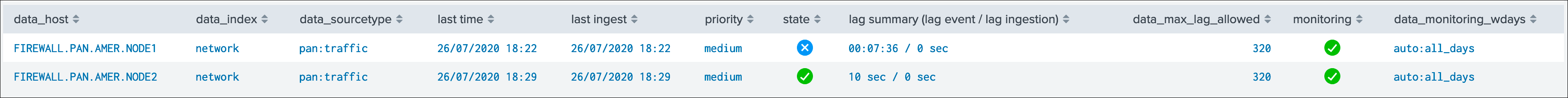

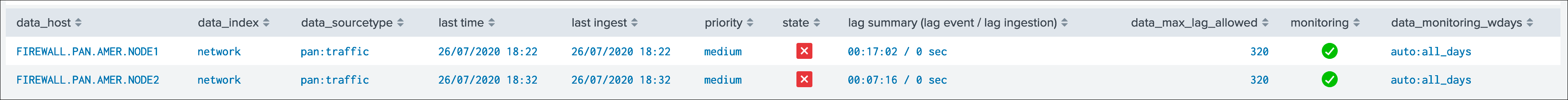

Data Hosts tracking and features¶

Rather than duplicating all the previous explanations, let’s expose the differences between the data sources and data hosts tracking.

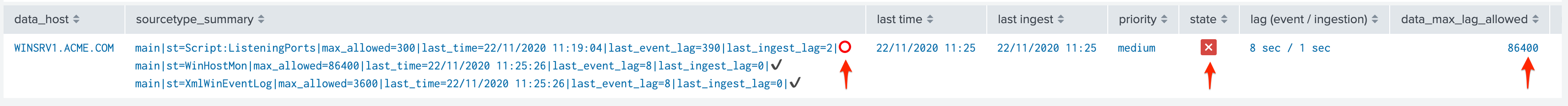

Data host monitoring¶

Data hosts monitoring does data discovery on a per host basis, relying on the Splunk host Metadata.

To achieve this, TrackMe uses tstats based queries to retrieve and record valuable Metadata information, in a simplistic form this is very similar to the following query:

| tstats count, values(sourcetype) where index=* by host

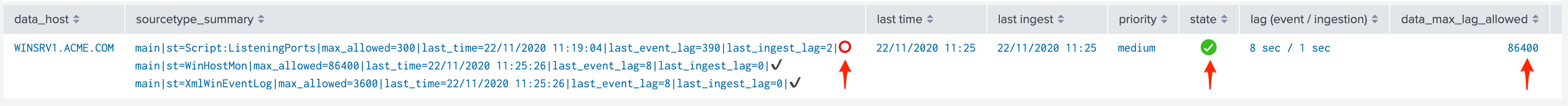

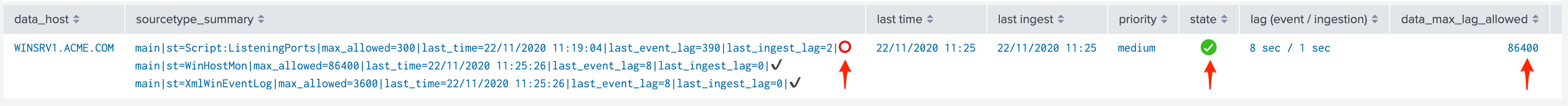

Particularities of data hosts monitoring¶

The features are almost equivalents between data sources and data hosts, with a few exceptions:

state condition:the data host entity state depends on the global data host alerting policy (which is defined globally and can be overriden on a per host basis)- Depending on the policy, he host state will turn red if either no more sourcetypes are generating data (track per host policy), or any of the sourcetypes monitored for the host has turned red (track per sourcetype policy)

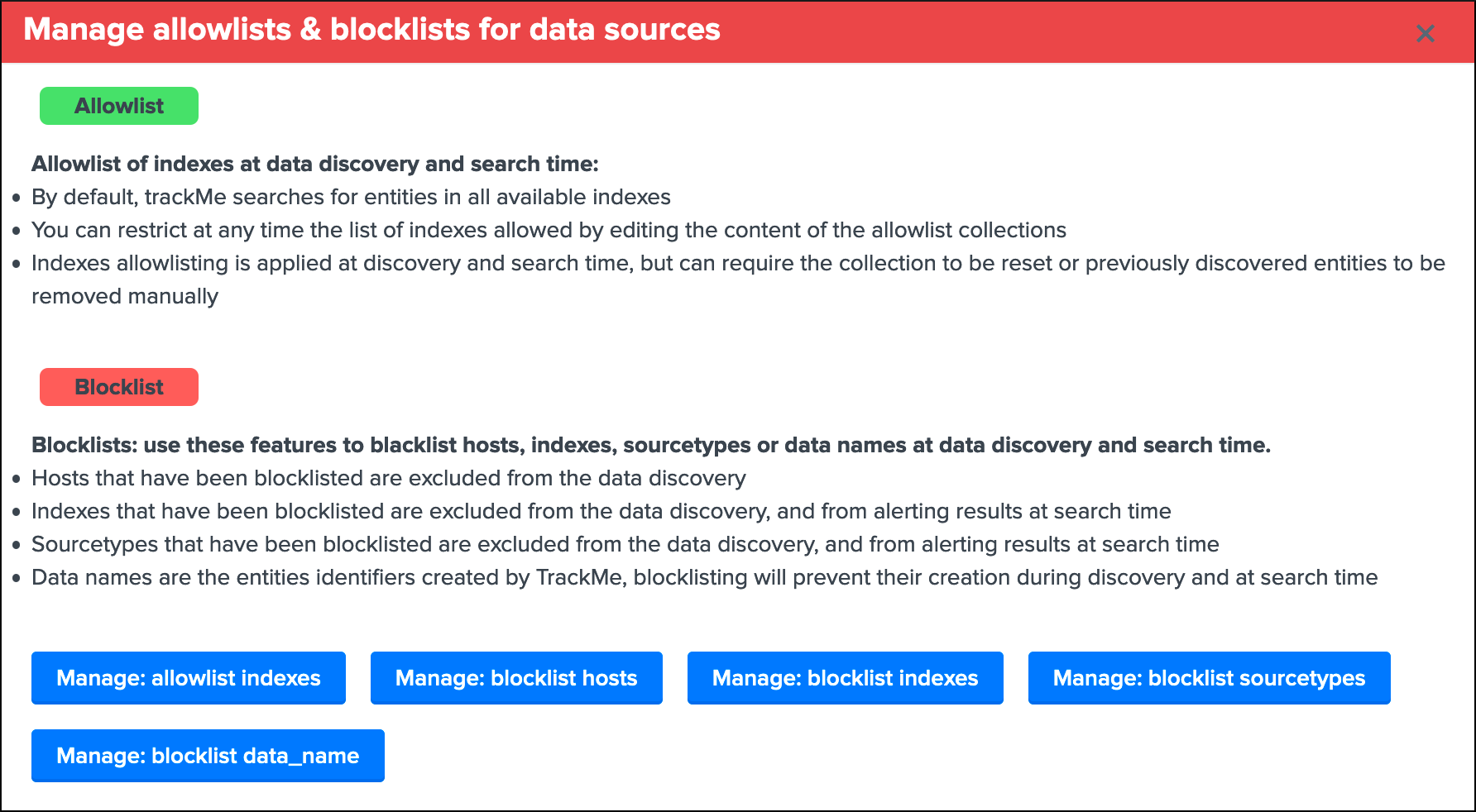

- Using

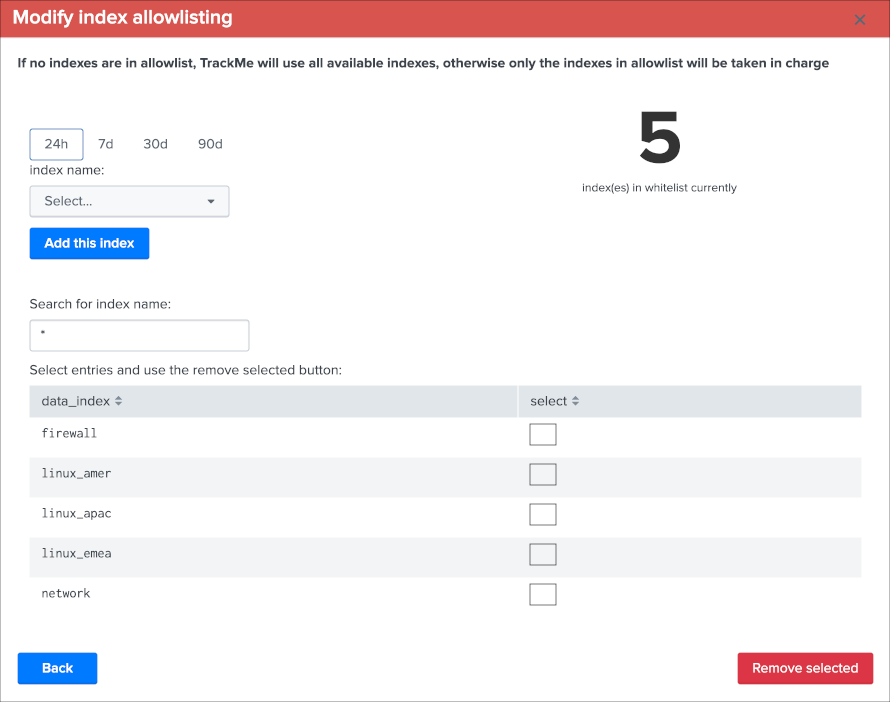

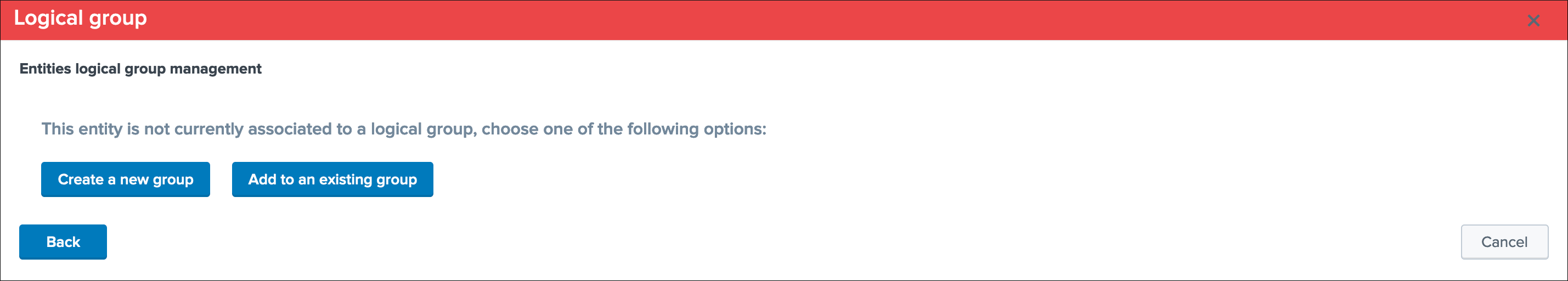

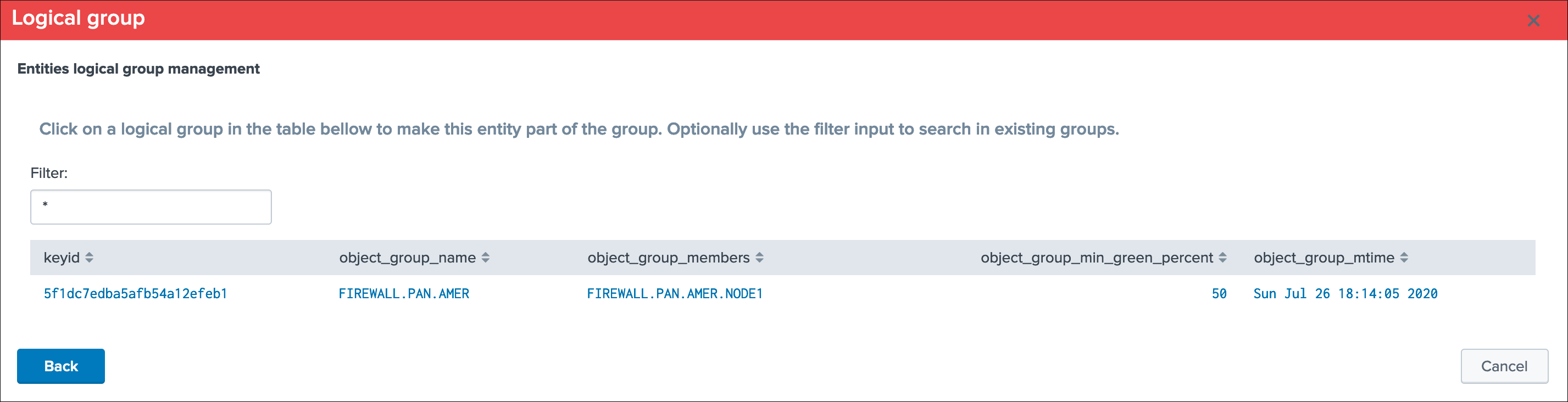

allowlists and blocklistsprovide additional granularity to define what data has to be included or is excluded during the searches Outliers detectionis available for data hosts too and would help detecting significant changes such as a major sourcetype that is not ingested anymorelogical group: a data host can be part of a logical group, this feature is useful for example to handle a couple of active / passive entities (example with firewalls) where the passive entity will not be generating any data activelyobject tags: this is an additional feature to data hosts and metric hosts that allows looking against a third party lookup, such as your CMDB data stored in Splunk, or the Splunk Enterprise Security assets knowledge, to provide an active link and access quickly these enrichment information

See Logical groups (clusters) for more details on this feature

See Enrichment tags for more details om this feature

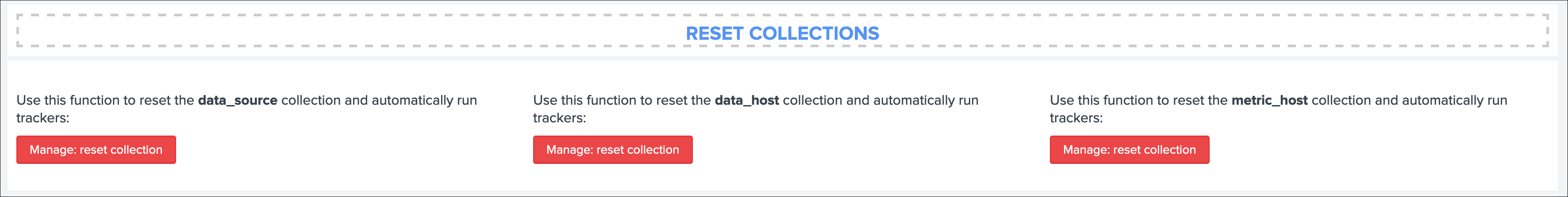

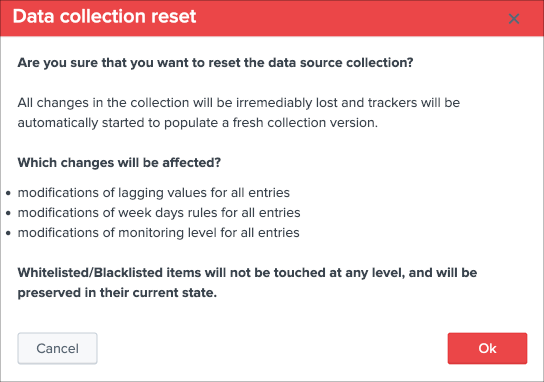

Additionally, if there has been indexes migrations, or if one or more sourcetypes have been decomissioned, this will affect the state of a given host if the alert policy is defined to track per sourcetype, you can reset the knowledge of indexes and sourcetypes on a per host basis via the reset button:

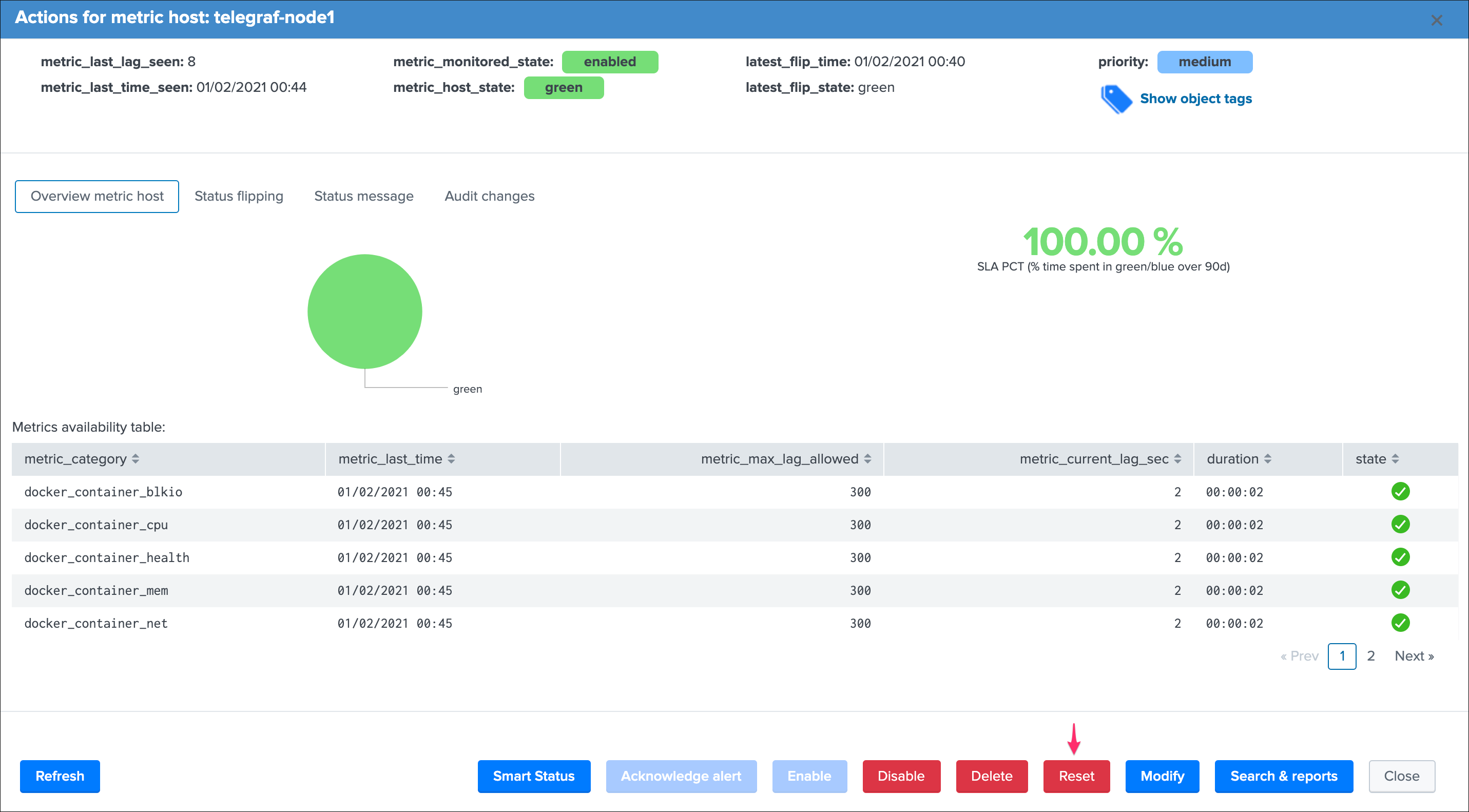

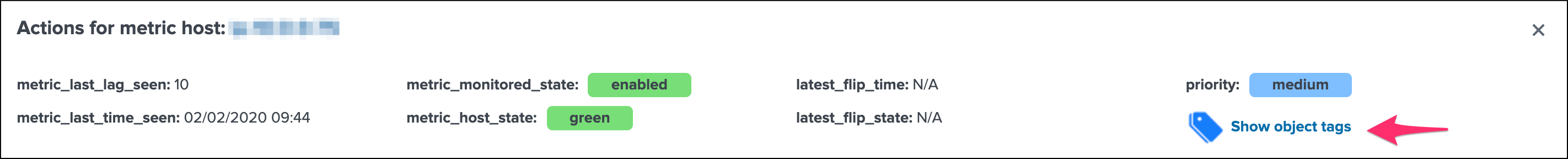

Metric Hosts tracking and features¶

Metric hosts tracking is the third main notion in TrackMe, and deals with tracking hosts sending metrics to the Splunk metric store, let’s expose the feature particularities.

Metric host monitoring¶

The metric hosts feature tracks all metrics send to the Splunk metric store on a per host basis.

In a very simplistic form, the notion is similar to performing a search looking at all metrics with mstats on a per host basis and within a short time frame:

| mstats latest(_value) as value where index=* metric_name="*" by metric_name, index, host span=1s

Then, the application groups all metrics on per metric metric category (the first metric name segment) and a per host basis.

Particularities of metric hosts monitoring¶

Compared to data sources and data hosts tracking, metric hosts tracking provides a similar level of features, with a few exceptions:

state condition:the metric host state is conditioned by the availability of each metric category that was discovered for that entity- Shall a metric category stop from being emitted, the state will be affected accordingly

- Using

allowlists and blocklistsprovide additional granularity to define the include and exclude conditions of the metric discovery Outliers detectionis not available for metrics hostslogical group: a metric host can be part of a logical group, this feature is useful for example to handle a couple of active / passive entities (example with firewalls) where the passive entity will not be generating any metrics activelyobject tags: this is an additional feature to data hosts and metric hosts that allows looking against a third party lookup, such as your CMDB data stored in Splunk, or the Splunk Enterprise Security assets knowledge, to provide an active link and access quickly these enrichment information- Metric hosts tracking relies on the

default max lag allowedpermetric categorywhich is defined by default to 5 minutes (300 seconds) and can be managed by creatingmetric SLA policies - The entity screen provides some metric specific search options to provide insights against these specific entities and their metrics

Additionally, if a metric category stops being emitted this affects the global status of the entity, if these metrics are decomissioned you can reset the host metrics knowledge:

Triggering this action will remove the current knowledge of metric categories for this entity only and trigger a fresh discovery without losing additional settings like the priority.

See Logical groups (clusters) for more details on this feature

See Enrichment tags for more details om this feature

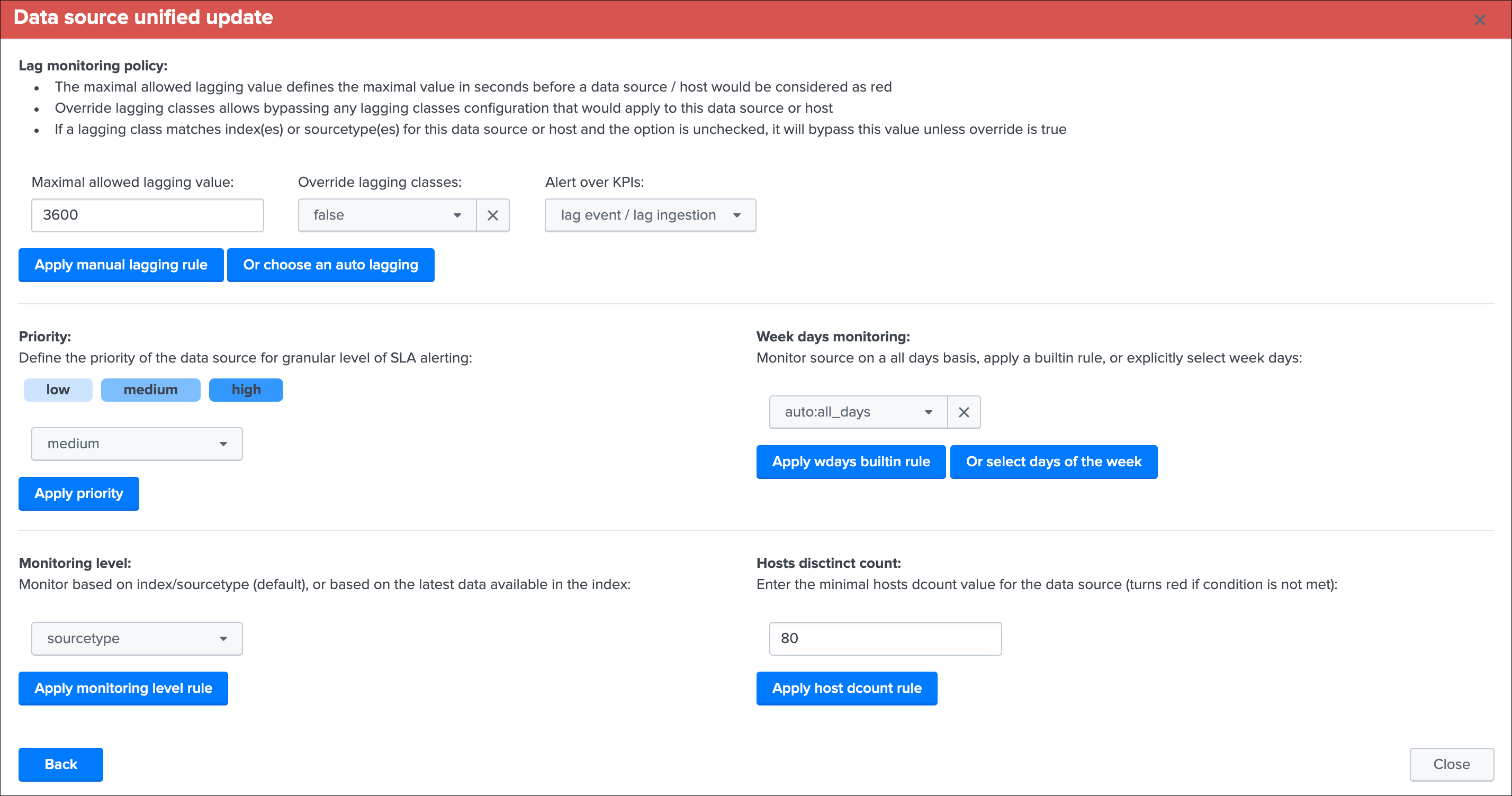

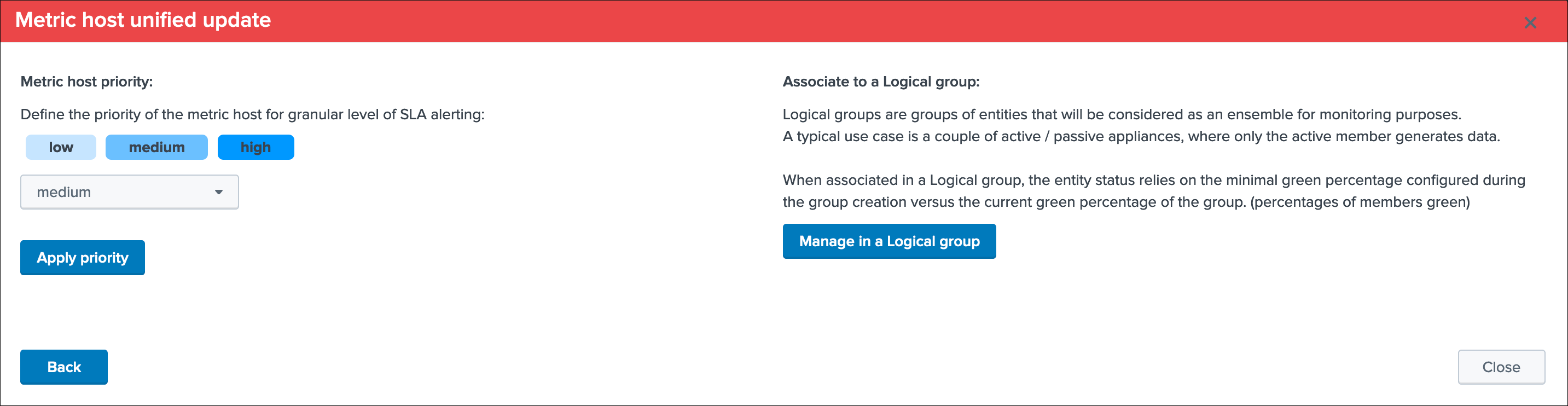

Unified update interface¶

For each type of tracking, a unified update screen is available by clicking on the modify button when looking at a specific entity:

These interfaces are called unified as their main purpose is to provide a central place in the UI where the modification of the main key parameters would be achieved.

In this screens, you will define the priority level assignment, modify the lagging policy, manage logical groups, etc.

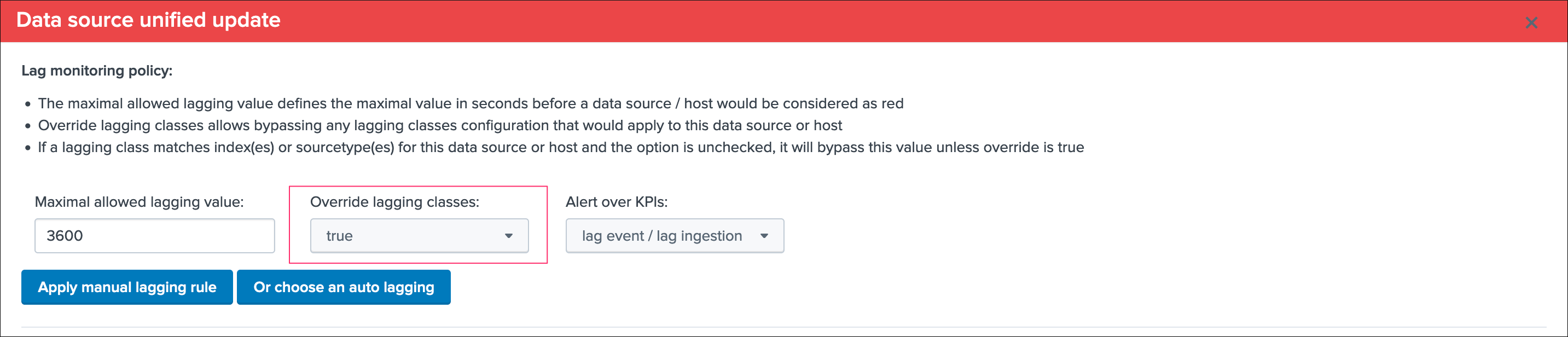

Data source unified update¶

Data hosts unified update¶

Metric hosts unified update¶

Unified update interface features¶

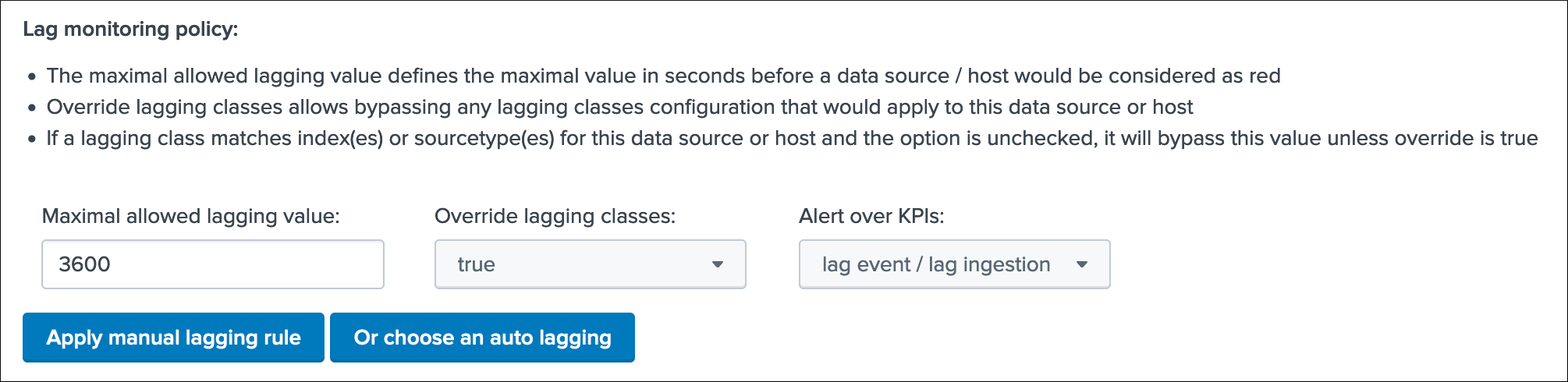

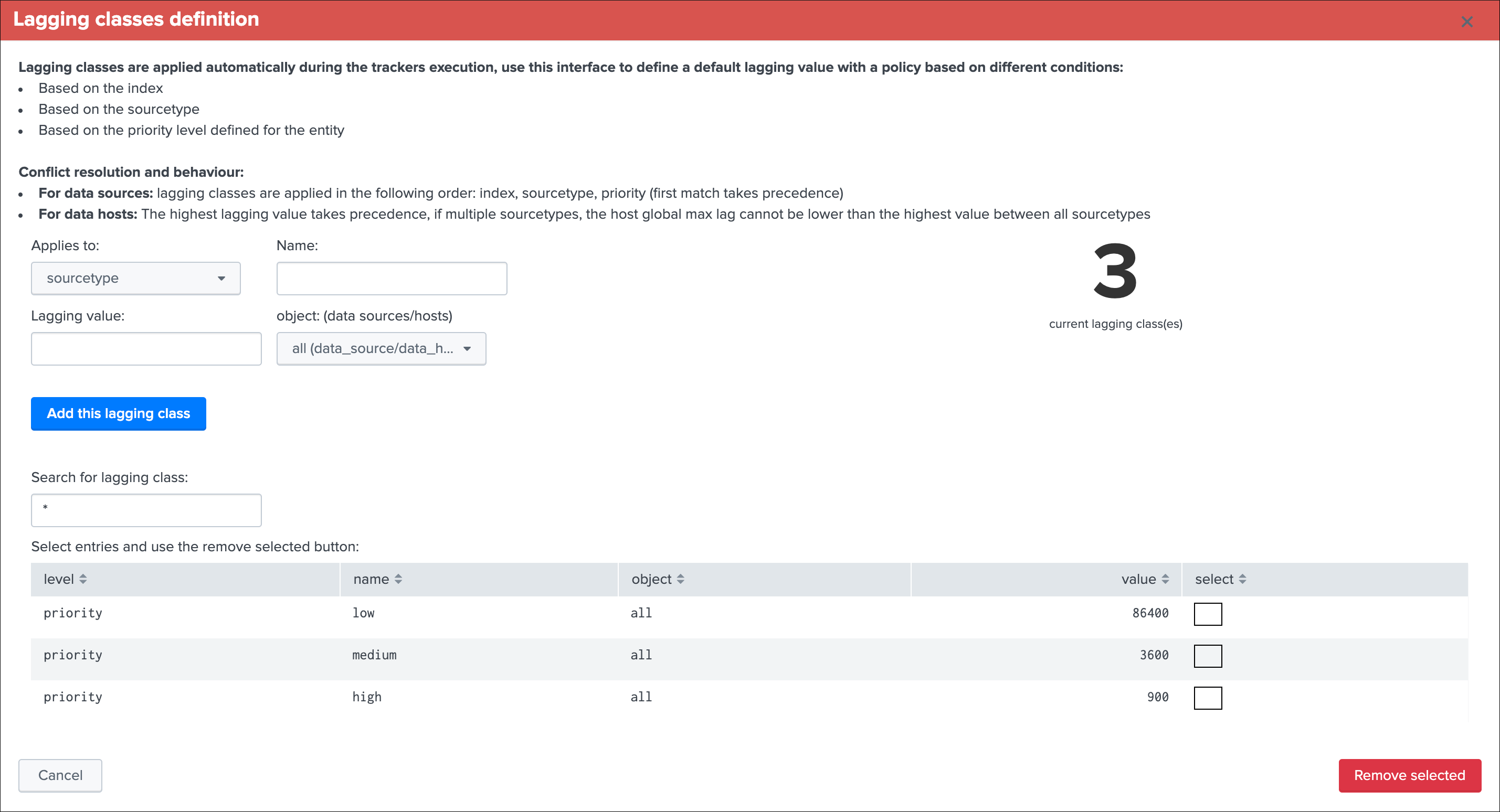

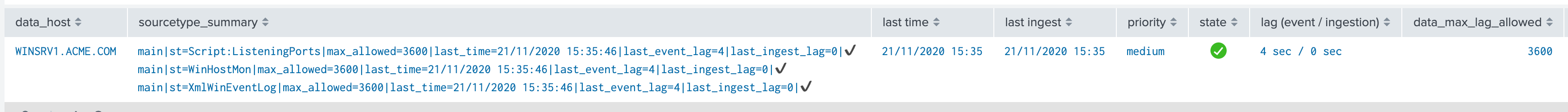

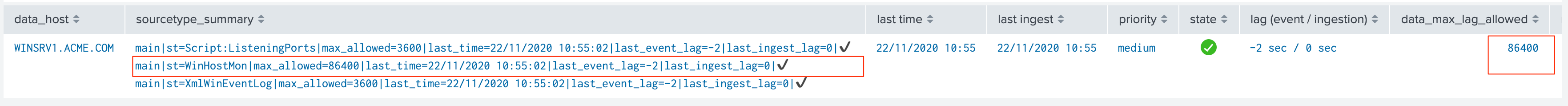

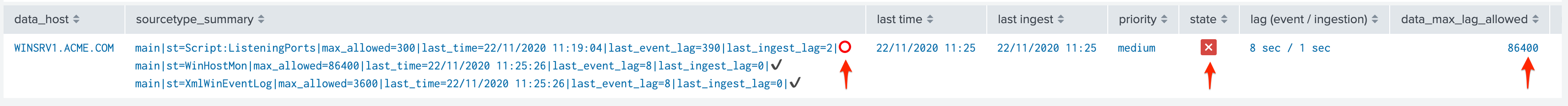

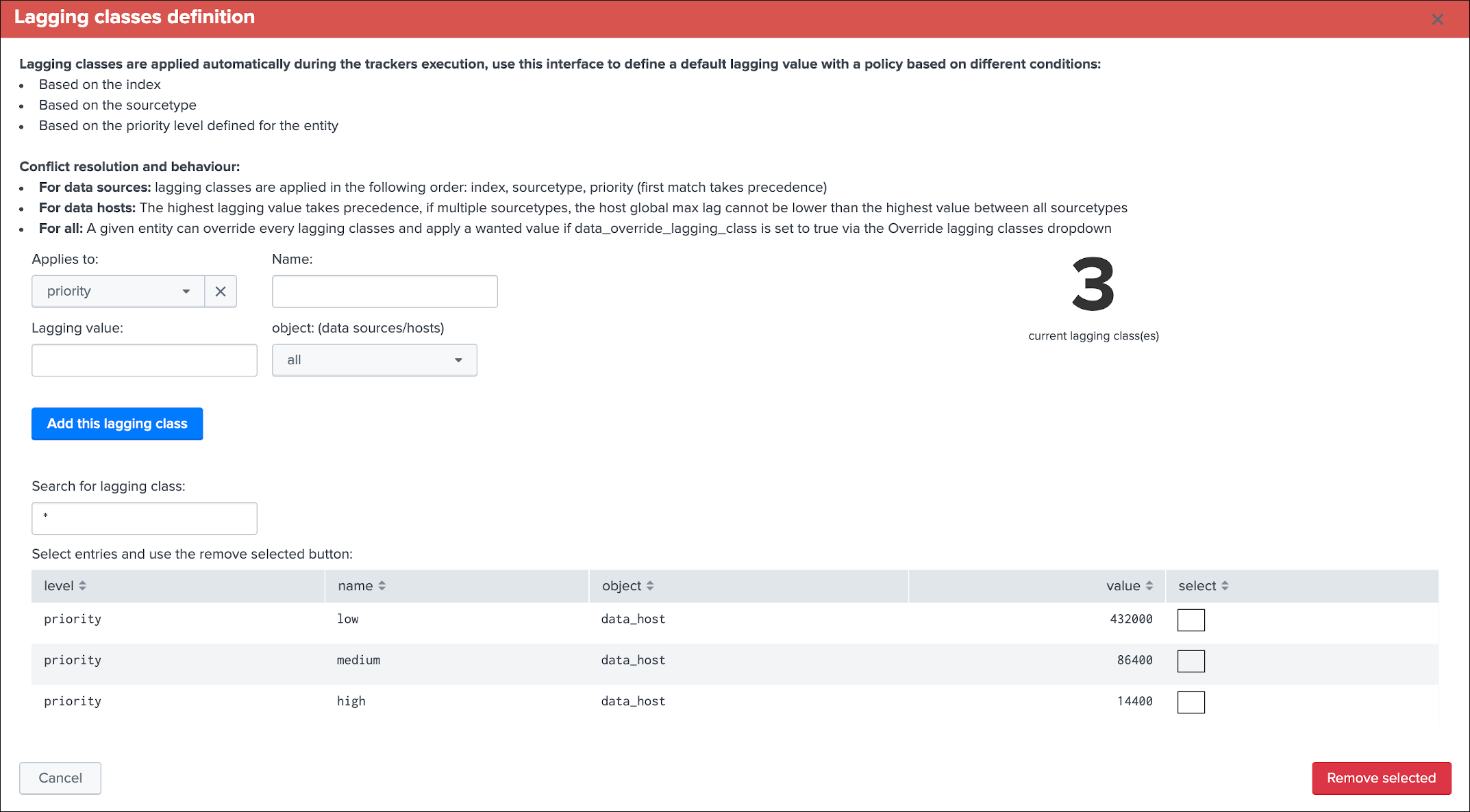

Lag monitoring policy:

In this part of the screen you will define:

- The

max lag allowedvalue that conditions the state definition of the entity depending on the circumstances - This value is in

secondsand will be taken into account by the trackers to determine the colour of the state Override lagging classesallows bypassing any lagging class that would have defined and could be matching the conditions (index, sourcetype) of this entity- You can choose which

KPIswill be taken into account to determine the state regarding themax lag allowedand the two main lagging performance indicators - For data hosts, the

alerting policyallows controlling how to consider the green/red state assignment in regards with the state of each sourcetype indexed by the host

See Lagging classes for more details about the lagging classes feature.

See Alerting policy for data hosts for more details about the alerting policy feature.

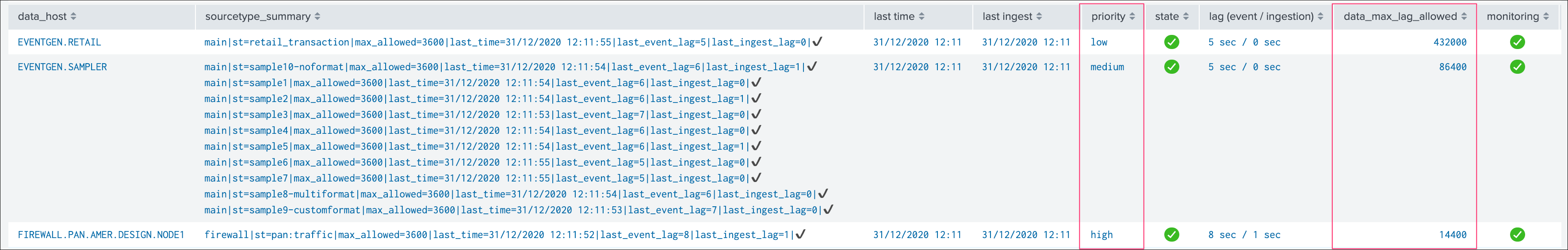

Priority:

This is where you can define the priority of this entity. The priority is by default set to medium can by any of:

lowmediumhigh

Using the priority allows granular alerting and improves the global situation visibility of the environment within the main screens.

See Priority management for more details about this feature

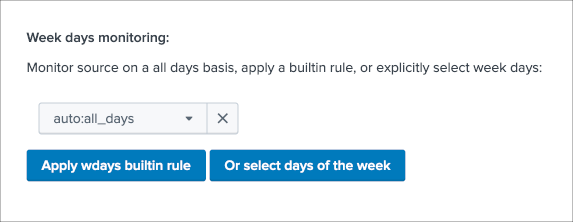

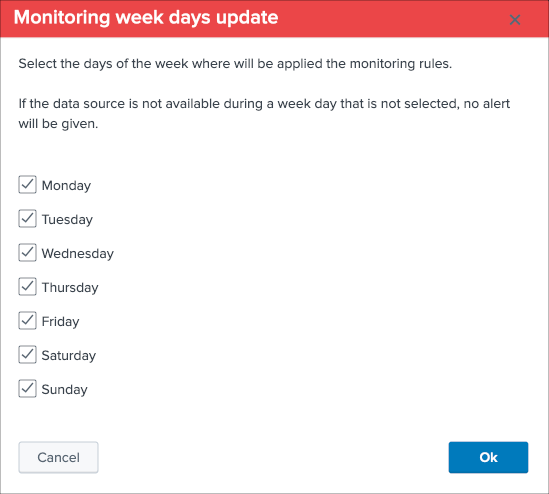

Week days monitoring:

Week days monitoring allows using specific rules for data sources and data hosts regarding the day of the week, by default monitoring rules are always applied, therefore using week days rules allow influencing the red state depending on the current day of the week. (which would switch to orange accordingly)

See Week days monitoring for more details about this feature

Monitoring level:

This option allows you to ask TrackMe to consider the very last events available at the index level rather than the specific sourcetype related to the entity.

This influences the state definition:

- If a data source or host is set to

sourcetype, what conditions the state is meeting the monitoring rules for that sourcetype only (default behaviour) - If it is set to

index, instead of defining a red state because the monitoring conditions are not met, we will consider if there are events available at the index level according to the monitoring rules - The purpose of this feature is to allow interacting with this data source (in that context let’s talk about sourcetypes) without generating an alert as long as data is actively sent to that index

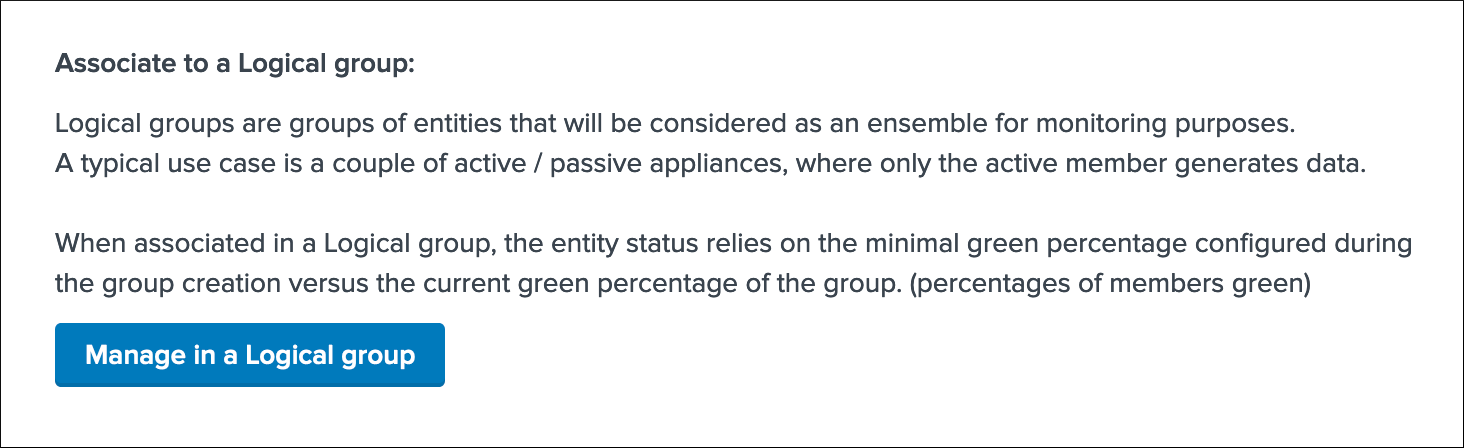

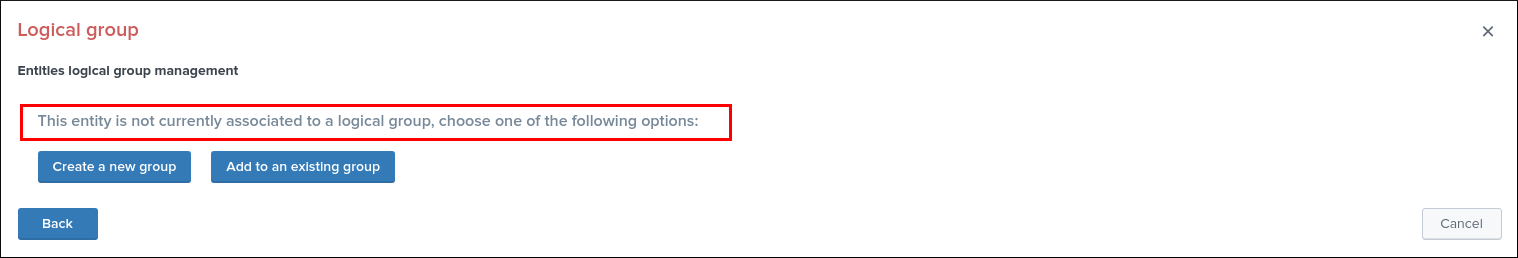

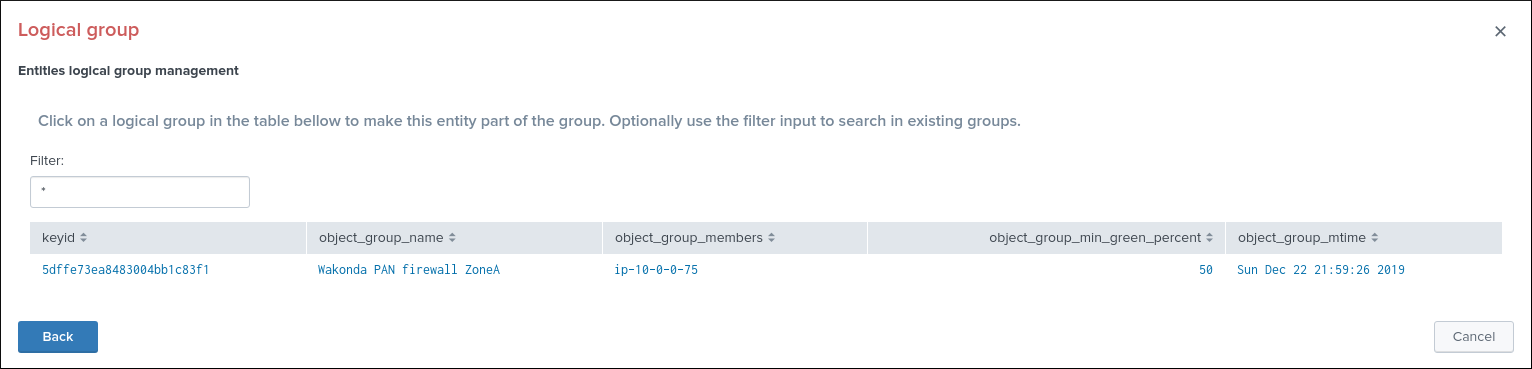

Associate to a logical group:

This option allows grouping data hosts and metric hosts into logical groups which are taken in consideration by groups rather than per entity.

See Logical groups (clusters) for more details about this feature.

Alerting policy: (data hosts only)

This option allows controlling on a per host basis the behaviour regarding the sourcetypes monitoring per host.

See Alerting policy for data hosts for more details about this feature.

Host distinct count threshold: (data sources only)

In some cases, you may want to be alerted when the number of distinct count hosts underneath a data source goes below a certain threshold.

Expected values are:

- “any” (default) which disables any verification against the hosts distinct count number

- A positive integer representing the minimal threshold for the dcount of hosts, if the current dcount goes below this value, the data source turns red

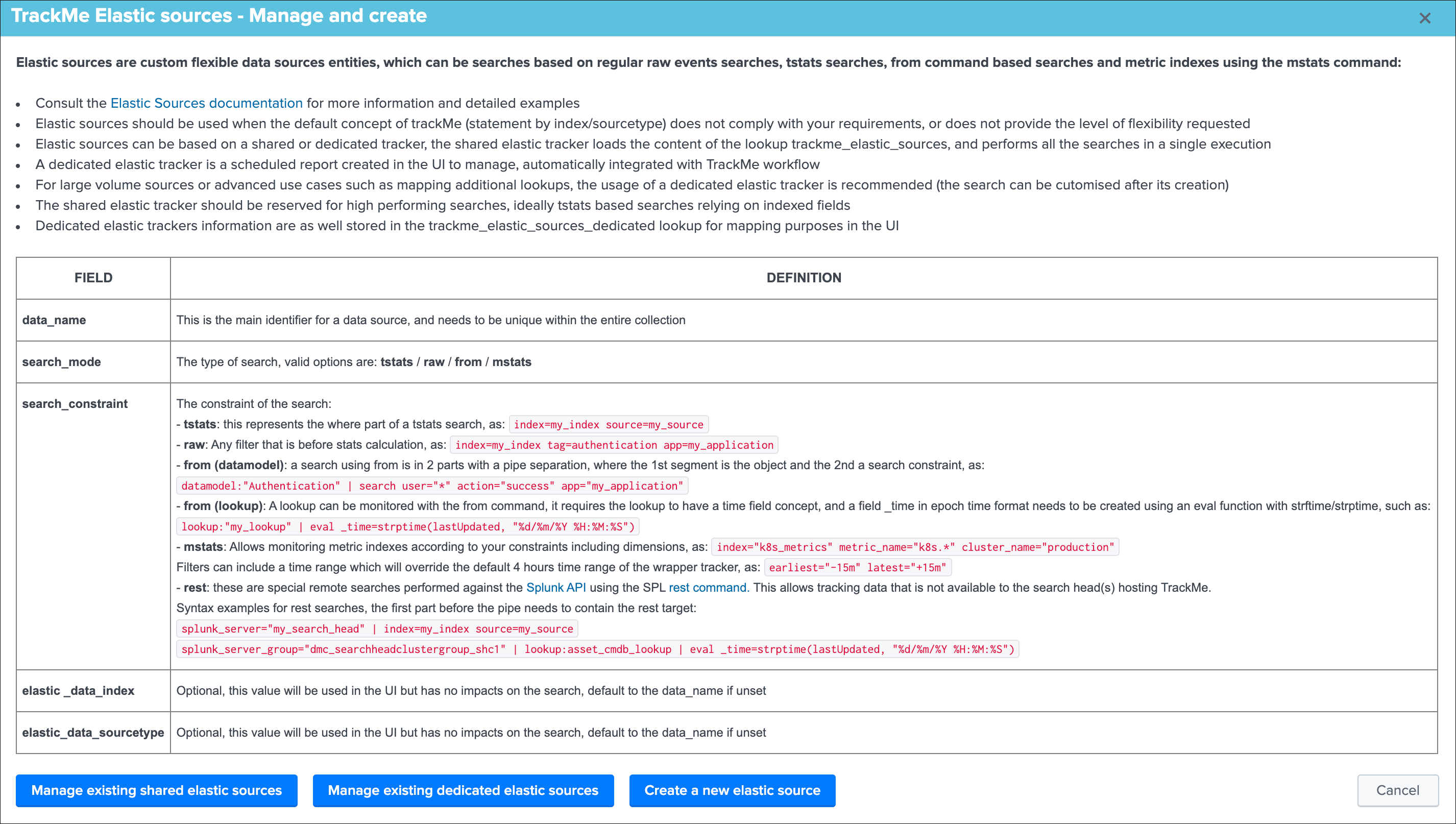

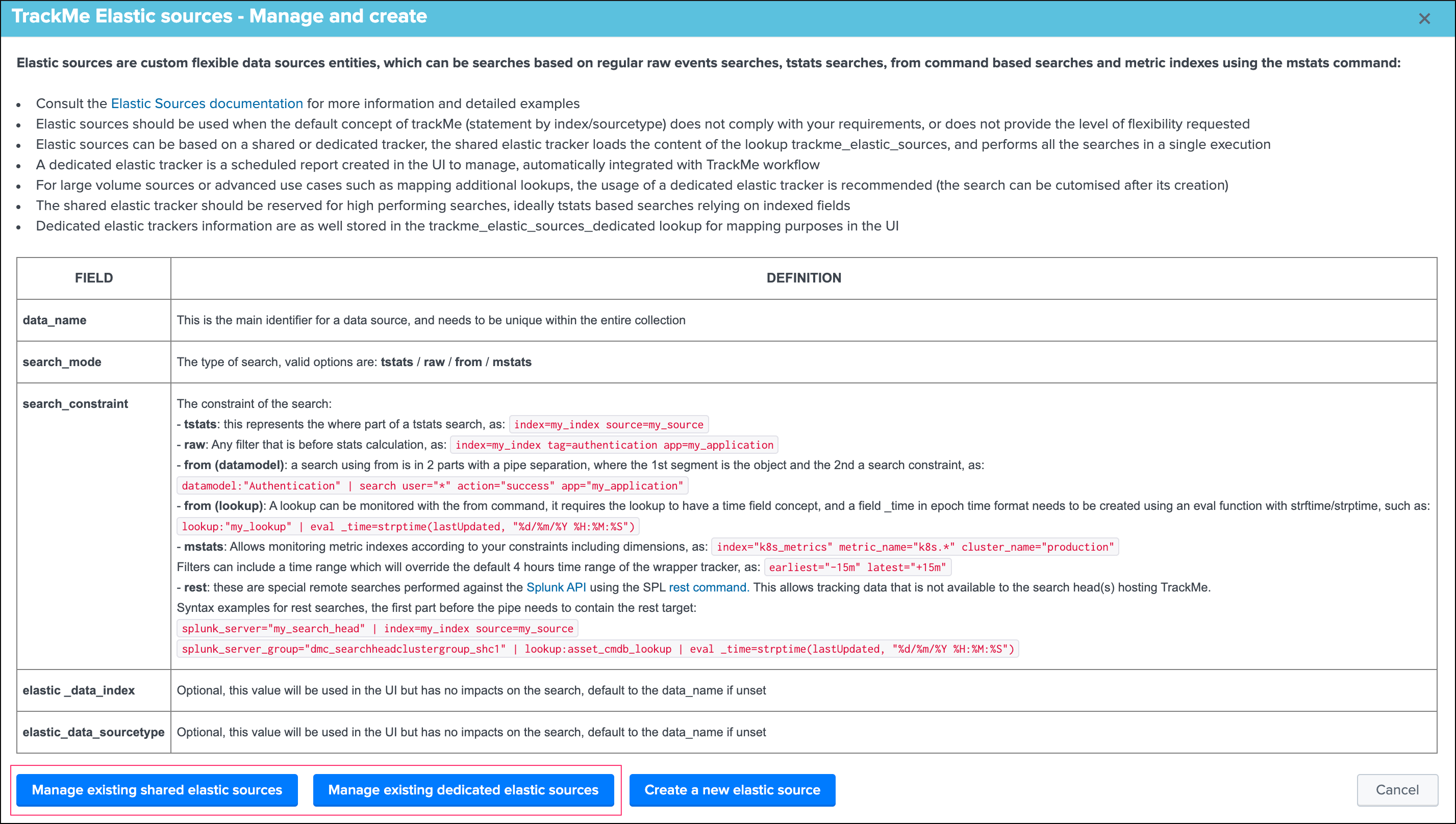

Elastic sources¶

Introduction to Elastic sources¶

Elastic sources feature

- The Elastic sources feature provides a builtin workflow to create virtual data sources based on any constraints and any Splunk language

- This extends TrackMe builtin features to allow dealing with any use case that the default data source concept does not cover by design

- Elastic Sources can be based on

tstats,raw,from (datamodel and lookup)andmstatssearches - In addition, Elastic Sources can be executed over a

restremote query which allows tracking data that the search head(s) hosting TrackMe cannot access otherwise (such as a lookup that is only available to a Search Head Cluster while you run TrackMe on a monitoring utility search head)

As we have exposed the main notions of TrackMe data discovery and tracking in Main navigation tabs, there can be various use cases that these concepts do not address properly, considering some facts:

- Breaking by index and sourcetype is not enough, for instance your data pipeline can be distinguished in the same sourcetype by breaking on the

Splunk source Metadata - In a similar context, enrichment is performed either at indexing time (ideally indexed fields which allow the usage of tstats) or search time fields (evaluations, lookups, etc), these fields represent the keys you need to break on to address your requirements

- With the default

data sourcestracking, this data flow will appear as one main entity and you cannotdistinguisha specific part of your data covered by the standard data source feature - Specific

custom indexed fieldsprovideknowledgeof the data in your context, such ascompany,business unitetc and these pipelines cannot be distinguished by relying on theindexandsourcetypeonly - You need to address any use case that the default main features do not allow you to

Hint

The Elastic source feature allows you to fulfil any type of requirements from the data identification and search perspective, and transparenly integrate these virtual entities in the normal TrackMe workflow with the exact same features.

The concept of “Elastic Sources” is proper to TrackMe, and is linked to the complete level of flexibility the feature provides you to address any kind of use cases you might need to deal with.

In a nutshell:

- An Elastic source can be added to the

shared tracker, or created as anindependent tracker - The search language can be based on

| tstats,rawsearches,| fromand| mstatscommands - Additionally, these searches can be run remotely over the Splunk rest API to address use cases where the data is not accessible to the search head(s) hosting TrackMe

- The shared tracker is a specific scheduled report named

TrackMe - Elastic sources shared trackerthat tracks in a single schedule execution all the entities that have been declared as shared Elastic sources via the UI - Because the

shared trackerperforms asingle execution, there are performance considerations to take into account and the shared tracker should be restricted to very efficient searches in term of run time - In addition,

Elastic sources sharedhave time frame restrictions which are the earliest and latest values of the tracker, you can restrict a shared entity time scope below these values but not beyond - A

dedicated Elastic sourceis created via the UI which generates a new tracker especially for it - As the dedicated Elastic source has its

own schedule report, this provides more capabilities to handle fewer performing searches and as well more freedom to address basically any kind of customisation Dedicated Elastic sourcescan be configured to address any time scope you need, and any search that is required including any advanced customisation you would need

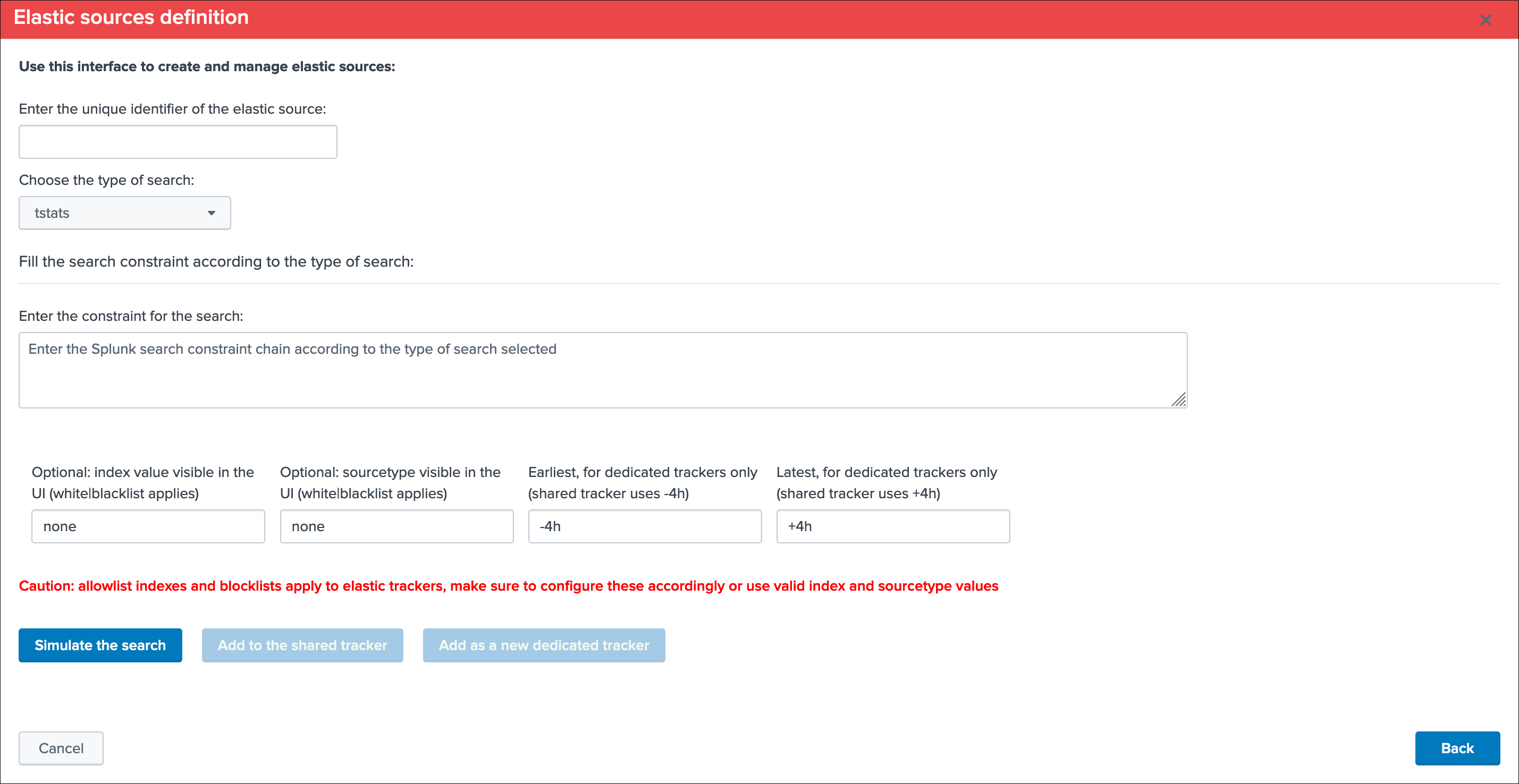

Accessing the Elastic source creation UI¶

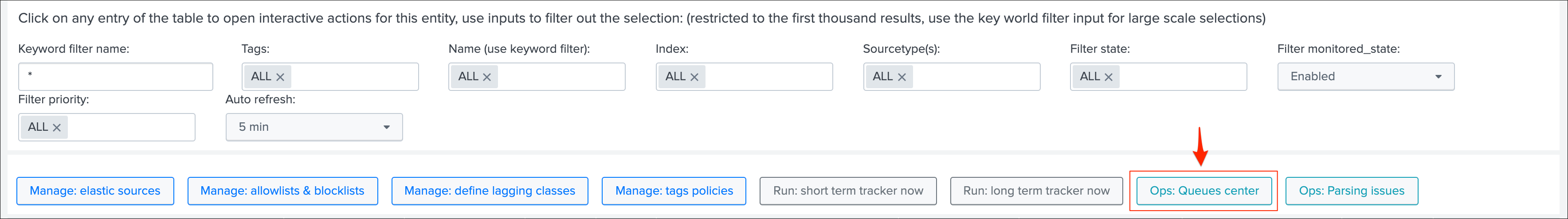

First, let’s expose how to access the Elastic sources interface, from the data sources tab in the main UI, click on the Elastic Sources button:

The following screen appears:

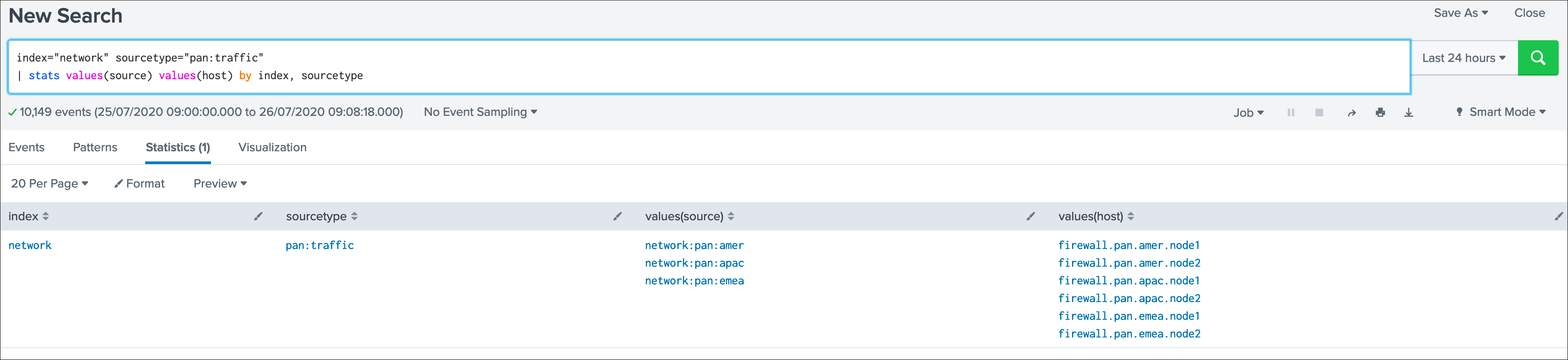

Elastic source example 1: source Metadata¶

Let’s take our first example, assuming we are indexing the following events:

data flow1 : firewall traffic for the region AMER

index="network" sourcetype="pan:traffic" source="network:pan:amer"

data flow2 : firewall traffic for the region APAC

index="network" sourcetype="pan:traffic" source="network:pan:apac"

data flow3 : firewall traffic for the region EMEA

index="network" sourcetype="pan:traffic" source="network:pan:emea"

It is easy to understand that the default standard for data source index + ":" + sourcetype does not allow us to distinguish which region is generating events properly, and which region would not:

In TrackMe data sources, this would appear as one entity and this is not helping me covering that use case:

What if I want to be monitoring the fact that the EMEA region continues to be indexed properly ? and other regions ?

Elastic Sources is the TrackMe answer which allows you to extend the default features with agility and address easily any kind of requirement transparently in TrackMe.

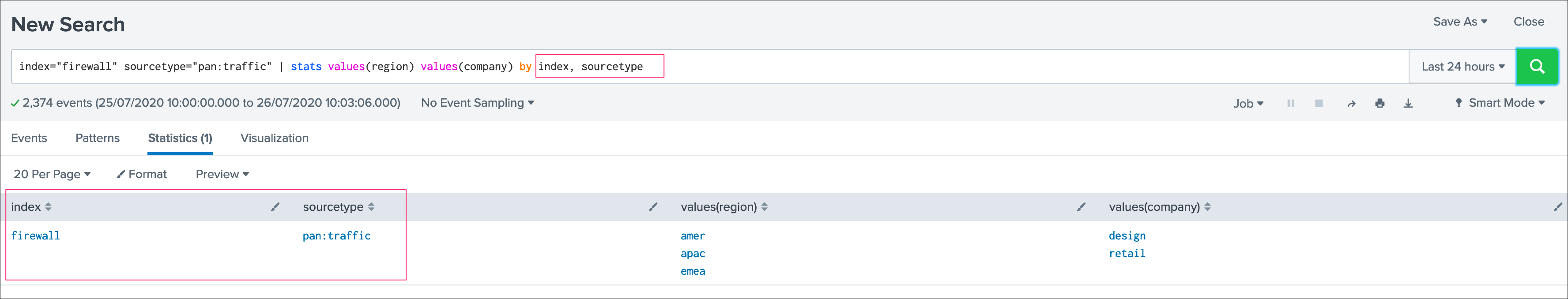

Elastic source example 2: custom indexed fields¶

Let’s extend a bit more the first example, and this time in addition with the region we have a company notion.

At indexing time, two custom indexed fields are created representing the “region” and the “company”.

Custon indexed fields can be created in many ways in Splunk, it is a great and powerful feature as long as it is properly implemented and restricted to the right use cases.

This example of excellence allows our virtual customer to work at scale with performing searches against their two major enrichment fields.

Assuming we have 3 regions (AMER / EMEA / APAC) and per region we have two companies (design / retail), to get the data of each region / company I need several searches:

index="firewall" sourcetype="pan:traffic" region::amer company::design

index="firewall" sourcetype="pan:traffic" region::amer company::retail

index="firewall" sourcetype="pan:traffic" region::apac company::design

index="firewall" sourcetype="pan:traffic" region::apac company::retail

index="firewall" sourcetype="pan:traffic" region::emea company::design

index="firewall" sourcetype="pan:traffic" region::emea company::retail

Note the usage of “::” rather than “=” which indicates to Splunk that we are explicitly looking at an indexed field rather a field potentially extracted at search time.

Indeed, it is clear enough that the default data source feature does not me with the answer I need for this use case:

Rather than one data source that covers the index/sourcetype, the requirement is to have 6 data sources that cover each couple of region/company.

Any failure on the flow level which is represented by these new data sources will be detected. On the opposite, the default data source breaking on on the sourcetype would need a total failure of all pipelines to be detected.

By default, the data source would show up with a unique entity which is not filling my requirements:

The default concept while powerful does not cover my need, but ok there we go and let’s extend it easily with Elastic sources!

Elastic source example 3: tracking lookups update and number of records¶

It is a very common and powerful practice to generate and maintain lookups in Splunk for numbers of purposes, which can be file based lookups (CSV files) or KVstore based lookups.

Starting with TrackMe 1.2.28, it is possible to define an Elastic Source and monitor if the lookup is being updated as expected.

A common caveheat with lookups is that their update is driven by Splunk searches, there are plenty of reasons why a lookup could stop being populated and maintained, such as scheduling issues, permissions, related knowledge objects updates, lack or changes in the data, and many more.

The purpose of this example is to provide a builtin and effiscient way of tracking Splunk lookup updates at scale in the easy way, and get alerted if an update issue is detected in the lookup according to the policies defined in TrackMe.

Let’s consider the simplistic following example, the lookup acme_assets_cmdb contains our ACME assets and is updated every day, we record in the field “lookupLastUpdated” the date and time of the execution of the Lookup gen report in Splunk. (in epoch time format)

The unique requirement for TrackMe to be able to monitor a lookup is to have a time concept which can use to define as the _time field which TrackMe will rely on.

Lookups have no such thing of a concept of _indextime (time of ingestion in Splunk), therefore TrackMe will by default make the index time equivalent to the latest _time from the lookup, unless the Splunk search that will be set in the Elastic Source defines a value based on information from the lookup.

Monitoring lookups with TrackMe allow you to:

- Get automatically alerted when the last update of the lookup is older than a given amount of time (which could indicate an issue on the execution side, such as an error introduced in the SPL code maintaining the lookup, a knowledge object that is missing, etc)

- Monitor and track the number of records, the outliers detection will automatically monitor the number of records in the lookup (which outliers settings can be fine tuned up to your needs, you could even gets alerted if the number of records goes beyond a certain limit)

The following example shows the behaviour with a lookup that is updated every 30 minutes:

Number of records are monitored automatically by the outliers detection, setting can be fined tuned to alert if the number of records goes below, and/or beyond a certain amount of records:

Elastic source example 4: rest searches¶

In some cases, the Splunk instance that hosts the TrackMe application may not not be able to access to a data you wish to monitor.

A very simple to understand use case would be:

- You have a Splunk Search Head Cluster, hosting for example your premium application for ITSI or Enterprise Security

- In addition, you either use your monitoring console host or a dedicated standalone search head for your Splunk environment monitoring, which is where TrackMe is deployed

- A lookup exists in the SHC which is the object you need to monitor, this lookup is only available to the SHC members and TrackMe cannot access to its content transparently

Using a rest command, you can hit a Splunk API search endpoint remotely, and use the builtin Elastic Source feature to monitor and track the lookup just as if it was available directly on the TrackMe search head.

In short, on the SHC you can run:

| inputlookup acme_assets_cmdb

On the TrackMe Splunk instance, we will use a search looking like:

| rest splunk_server_group="dmc_searchheadclustergroup_shc1" /servicesNS/admin/search/search/jobs/export search="| from lookup:acme_assets_cmdb | eval _time=strftime(lookupLastUpdated, \"%s\") | eventstats max(_time) as indextime | eval _indextime=if(isnum(_indextime), _indextime, indextime) | fields - indextime | eval host=if(isnull(host), \"none\", host) | stats max(_indextime) as data_last_ingest, min(_time) as data_first_time_seen, max(_time) as data_last_time_seen, count as data_eventcount, dc(host) as dcount_host | eval data_name=\"rest:from:lookup:example\", data_index=\"pseudo_index\", data_sourcetype=\"lookup:acme_assets_cmdb\", data_last_ingestion_lag_seen=data_last_ingest-data_last_time_seen" output_mode="csv"

Notes and technical details:

- See https://docs.splunk.com/Documentation/Splunk/latest/RESTTUT/RESTsearches for more information about running searches over rest

- See https://docs.splunk.com/Documentation/Splunk/latest/SearchReference/Rest for more information about the rest search command

restbased searches support all forms of searches supported by Elastic Sources:tstats,raw,from:datamodel,from:lookup,mstats- Search Heads you wish to target need to be configured as distributed search peers in Splunk, same requirement as for the Splunk Monitoring Console host (MC, previously named DMC)

- Most of the calculation part is executed on the target search head size, TrackMe will not attempt to retrieve the raw data first before performing the calculation for obvious performance gain purposes

- You can target a search head explicity using the

splunk_serverargument, or you can target a group of search heads (such as your SHC) using thesplunk_server_groupargument - When targeting a group of search heads, the query is executed on every search that is matched by the splunk_server_group, therefore you should limit using a target group to very effiscient and low cost searches such as a from lookup for example

- TrackMe in anycase will only consider the first result from the rest command (so only one search head answer during the rest execution, assuming search heads from the same group have the same data access), and will discard other search head replies

- The search needs to be properly performing, and should complete in a acceptable time window (use timeout argument which defaults to 60 seconds)

- Each result from the rest command, during the tracker execution or within the UI, passes through a Python based custom command to parse the CSV structure resulting from the rest command, to finally create the Splunk events during the search time execution

- Except for

| from lookup:rest searches, other types of searches automatically append the configured earliest and latest as arguments to the rest command (earliest_time, latest_time) - Earliest and Latest arguments are configurable for dedicated trackers only, shared trackers will use earliest:”-4h” and latest:”+4h” statically

- Additional parameters to the rest command can be added within the first pipe of the search constraint during the Elastic Source creation (such as timeout, count etc)

Warning

Currently the rest command generates a warning message “Unable to determine response format from HTTP Header”, this message can be safety ignored as it does not impact the results in anyway, but cannot unfortunately be removed at the moment, until it is fixed by Splunk.

Examples for each type of search:

tstats over rest:

splunk_server="my_search_head" | index=* sourcetype=pan:traffic

raw search over rest:

splunk_server="my_search_head" | index=* sourcetype=pan:traffic

from datamodel over rest:

splunk_server="my_search_head" | datamodel:"Authentication" action=*

from lookup over rest:

splunk_server="my_search_head" | from lookup:acme_assets_cmdb | eval _time=strftime(lookupLastUpdated, "%s")

mstats over rest:

splunk_server="my_search_head" | index=* metric_name=docker*

As a conclusion, using the rest based searches features successfully completes the Elastic Sources level of features, such that every single use case can be handled in TrackMe, whenever the Splunk instance cam access or not to the data you need to track!

Elastic source example 1: creation¶

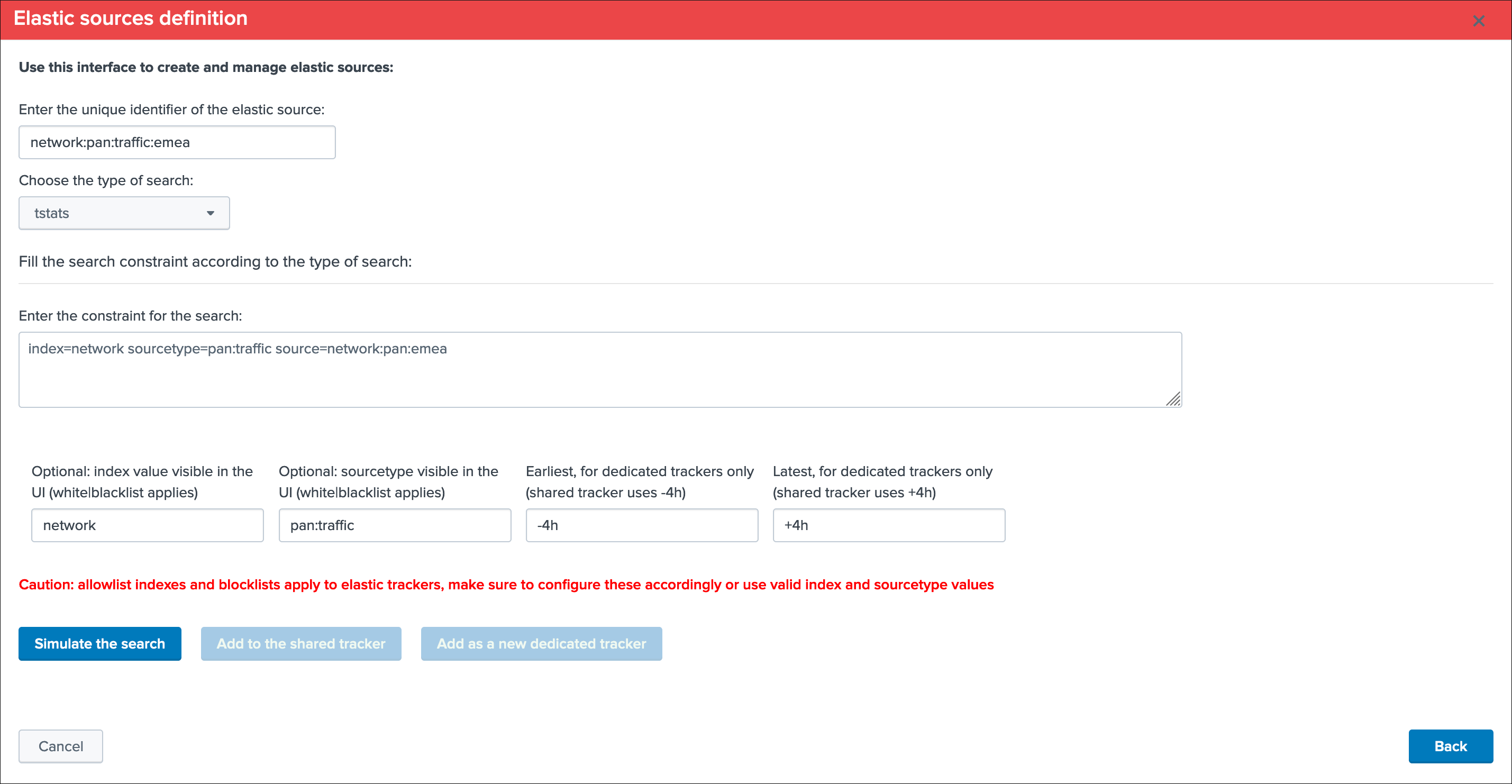

Now, let’s create our first Elastic Source which will meet our requirement to rely on the Splunk source Metadata, click on create a new Elastic source:

Which opens the following screen:

Summary:

- Define a name for the entity, this name is the value of the field

data_nameand needs to be unique in TrackMe - Shall that name you provide not be unique, a little red cross and a message will indicate the issue when we run the simulation

- We choose a

search language, because the source field is a Metadata, this is an indexed field and we can use the tstats command which is very efficient by looking at the tsdidx files rather than the raw events - We define our search constraint for the first entity, in our case

index=network sourcetype=pan:traffic source=network:pan:emea - We choose a value for the index, this is having

no influenceon the search itself and its result but determines how the entity is classified and filtered in the main UI - Same for the sourcetype, which does

not influencethe search results - Finally, we can optionally decide to define the earliest and latest time range, in our example we can leave that empty and rely on the default behaviour

Let’s click on this nice button!

This looks good isn’t it?

Shared tracker versus dedicated tracker:

In this context:

- Because this is a very efficient search that relies on tstats, creating it as a shared tracker is perfectly fair

- Shall I want to increase the earliest or the latest values beyond the shared tracker default of -4h / +4h, this would be reason to create a dedicated tracker

- While tstats searches are very efficient, a very high volume of events might mean a certain run time for the search, in such a case a dedicated tracker shall be used

- If you have to achieve any additional work, such as third party lookup enrichment, this would be a reason to create a dedicated tracker too

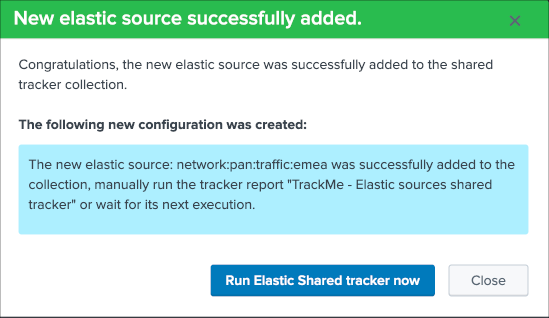

Fine? Let’s cover both, and let’s click on “Add to the shared tracker” button:

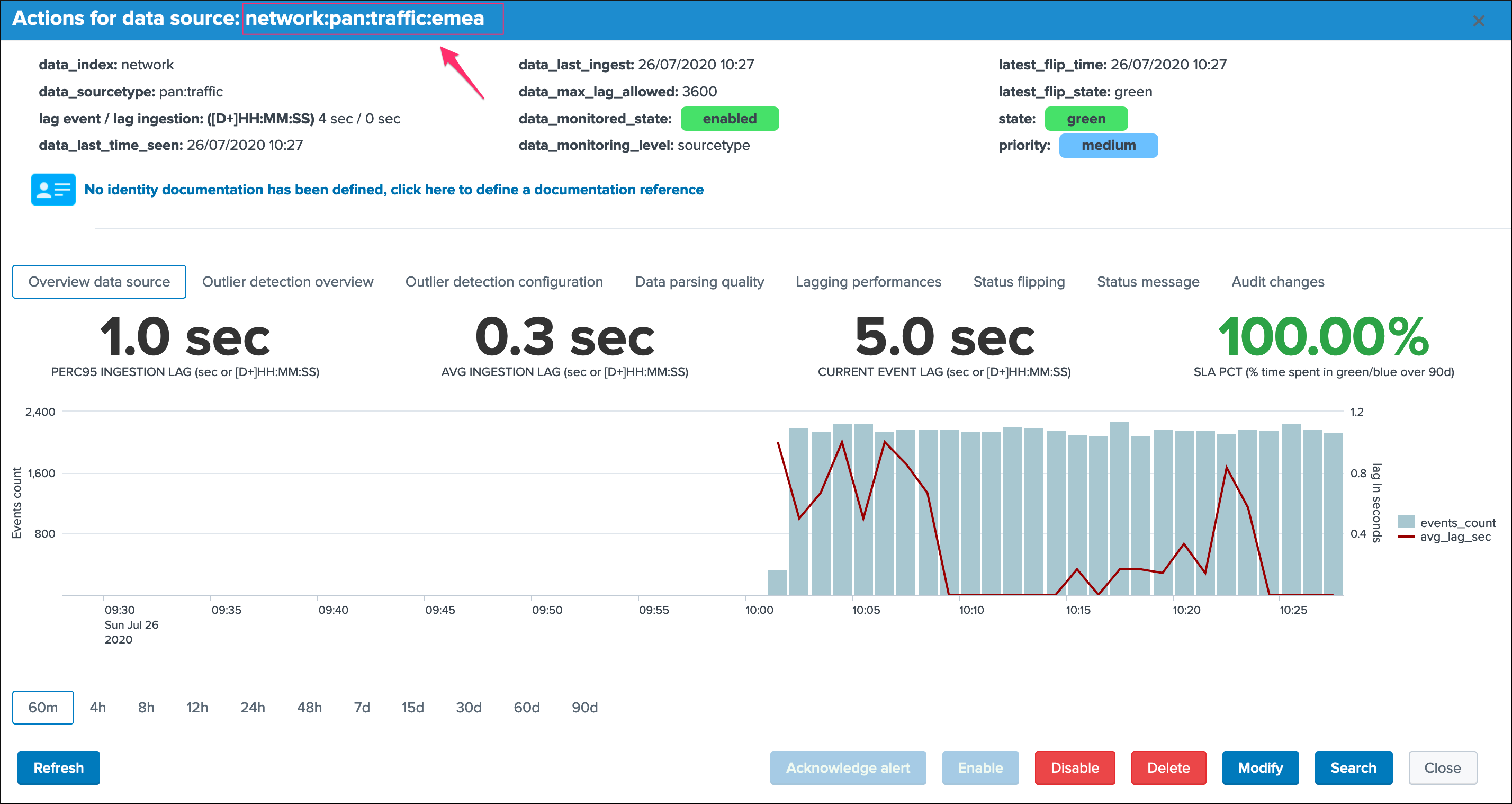

Nice! Let’s click on that button and immediately run the shared tracker, upon its execution we can see an all brand new data source entity that matches what we created:

Ok that’s cool!

Note: if you disagree with this statement, you are free to leave this site, free to uninstall TrackMe and create all of your own things we are not friends anymore that’s it.

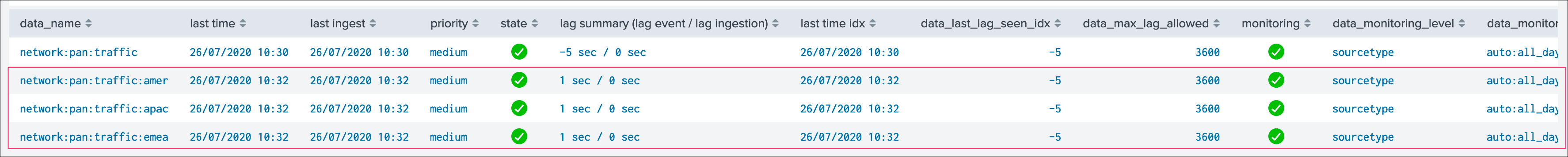

repeat the operation, which results in 3 new entities in TrackMe, one for each region:

“What about the original data source that created automatically?”.

We can simply disable the monitoring state via the disable button et voila!

Elastic source example 2: creation¶

Now that we had so much fun with the example 1, let’s have a look at the second example which relies on custom indexed fields.

source="network:pan:[region]:[company]"

For the purposes of the demonstration, we will this time create Elastic dedicated sources.

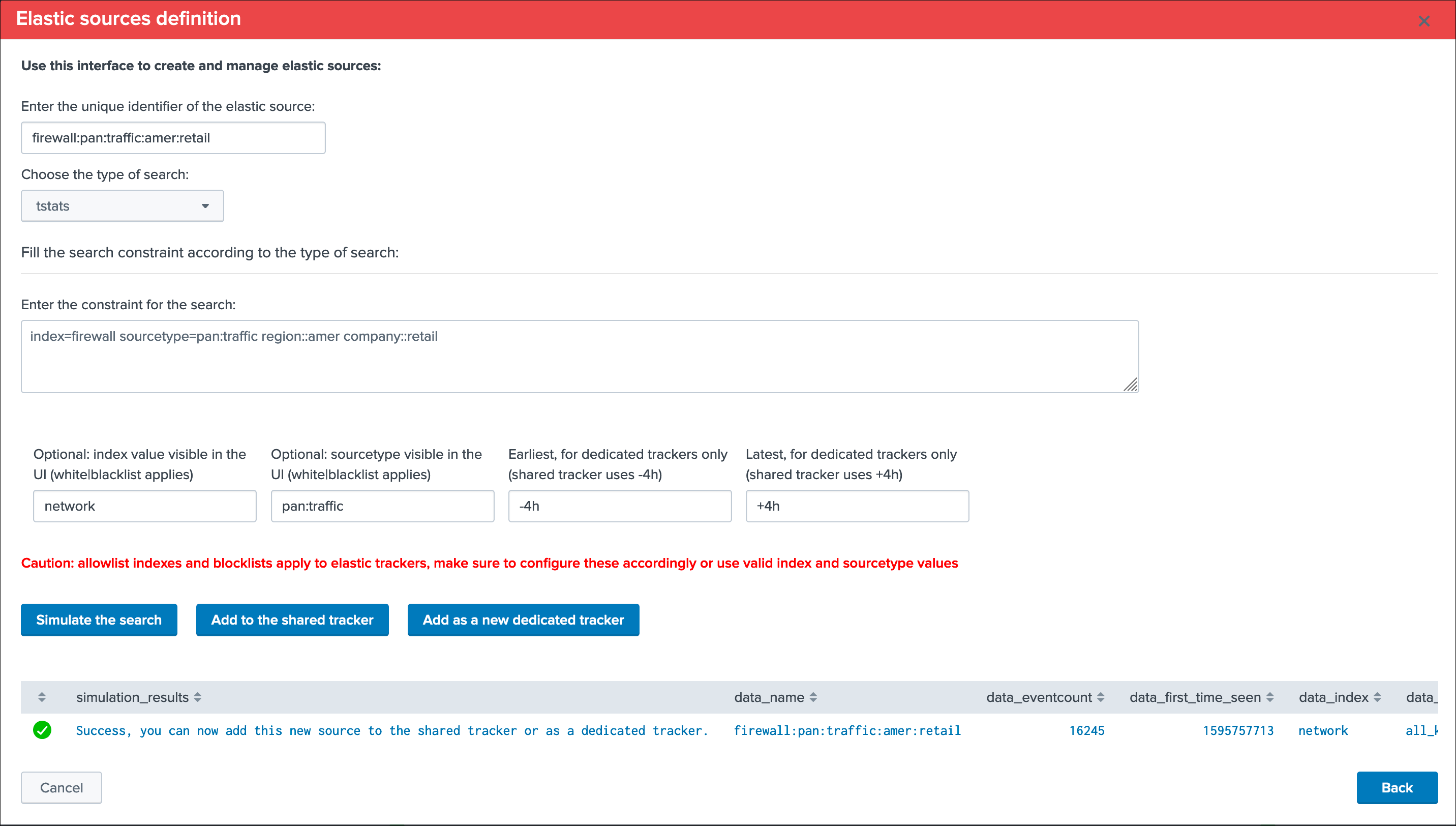

Let’s create our first entity:

Summary:

- Define a name for the entity, this name is the value of the field

data_nameand needs to be unique in TrackMe - Shall that name you provide not be unique, a little red cross and a message will indicate the issue when we run the simulation

- We choose a

search language, because the source field is a Metadata, this is an indexed field and we can use the tstats command which is very efficient by looking at the tsdidx files rather than the raw events - We define our search constraint for the first entity, in our case

index=firewall sourcetype=pan:traffic region::emea company::retail - We choose a value for the index and the sourcetype, this is having

no impactson the search itself and its result but determines how the entity is classified and filtered in the main UI - Finally, we can optionally decide to define the earliest and latest time range, in our example we can leave that empty and rely on the default behaviour

Note about the search syntax:

- We use

"::"as the delimiter rather than"="because these are indexed fields, and this indicates Splunk to treat them as such

Let’s create our first entity:

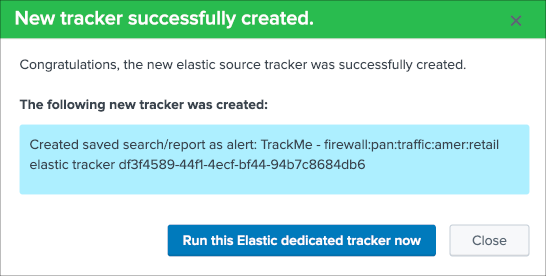

Once again this is looking perfectly good, this time we will create a dedicated tracker:

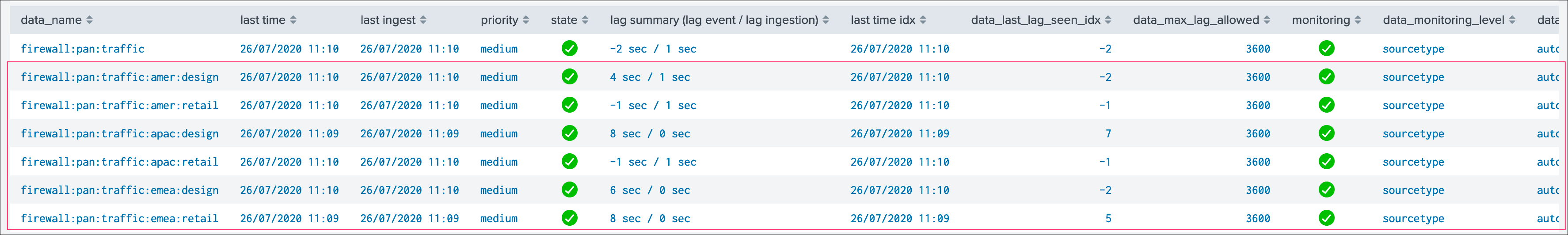

Nice, let’s click on the run button now, and repeat the operation for all entities!

Once we did and created all the six entities, we can see the following in the data sources tab:

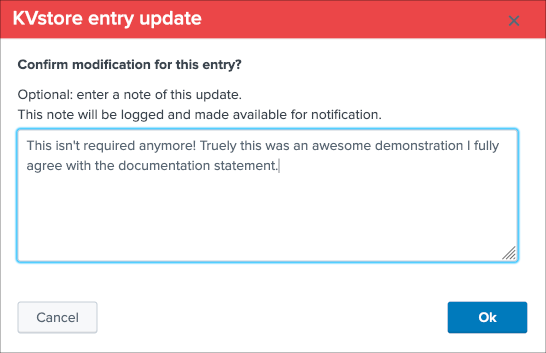

As we did earlier in the example 1, we will simply disable the original data source which is not required anymore.

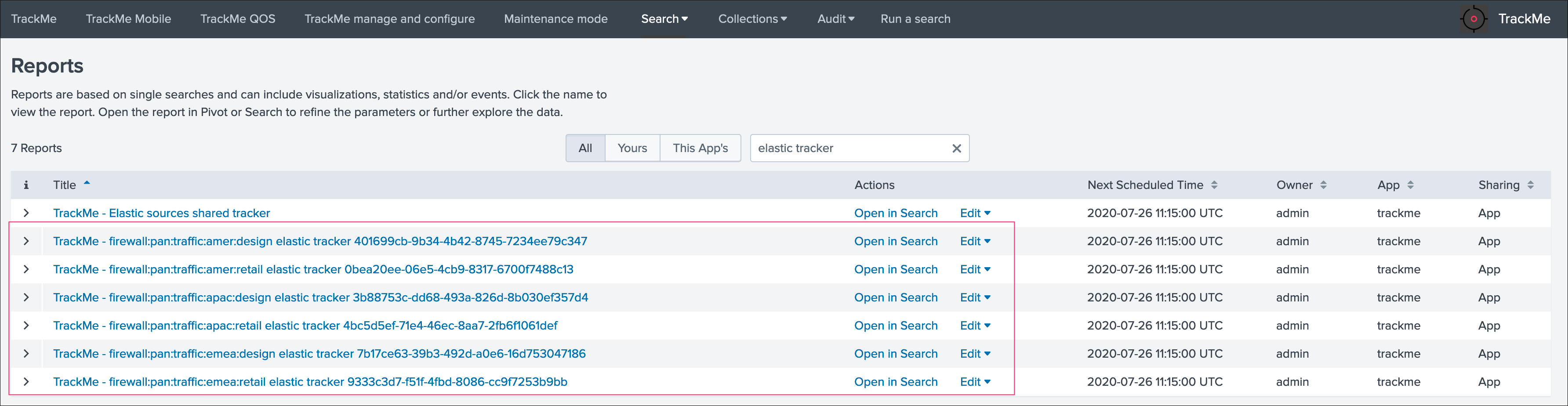

Finally, because we created dedicated trackers, let’s have a look at the reports:

We can see that TrackMe has created a new scheduled report for each entity we created, it is perfectly possible to edit these reports up to your needs.

Voila, we have now covered two complete examples of how and why creating Elastic Sources, there are many more use cases obviously and each can be very specific to your context, therefore we covered the essential part of the feature.

Elastic source example 3: creation¶

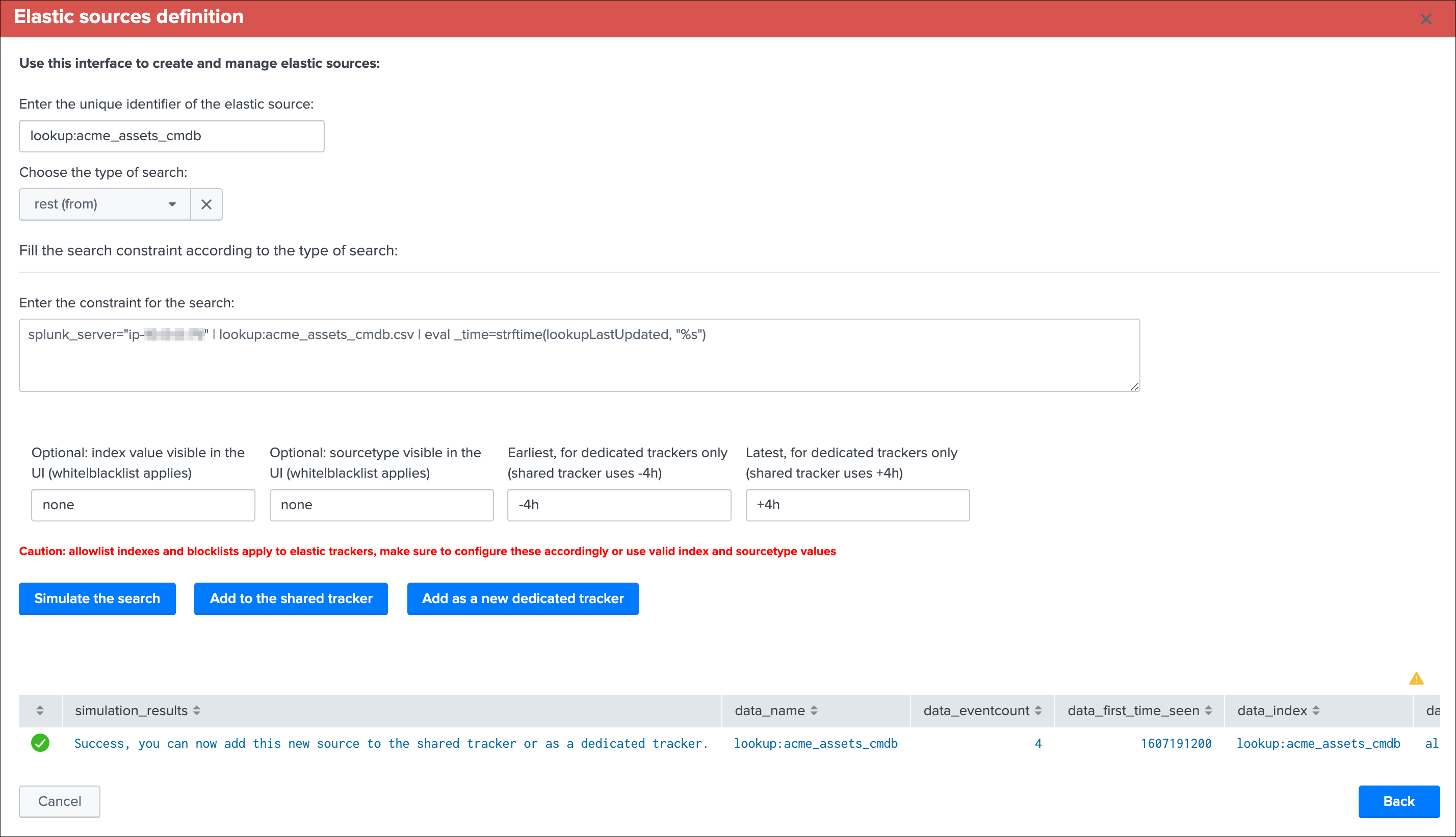

Let’s create our lookup based Elastic Source, for this we rely on the Splunk from search command capabilities to handle lookup, and we potentially define additional statements to set the _time and _indextime (if any)

Litteraly, we are going to use the following SPL search to achieve our target:

| from lookup:acme_assets_cmdb | eval _time=strftime(lookupLastUpdated, "%s")

If our lookupLastUpdated would have been in a human readable format, we could have used the stptime function to convert it into an epoch time, for example:

| from lookup:acme_assets_cmdb | eval _time=strptime(lookupLastUpdated, "%d/%m/%Y %H:%M:%S")

Applied to TrackMe in the Elastic Sources UI creation:

Notes:

- The “from ” key word is not required and will be substituted by TrackMe automatically (once you selected from in the dropdown)

- earliest and latest do not matter for a lookup, so you can leave these with their default values

- The index and sourcetype are only used for UI filtering purposes, so you can define the values up to your preference

- Depending on the volume of records in the lookup and the time taken by Splunk to load its content, you may consider using the shared tracker mode, or a dedicated tracker for longer execution run times

Once the Elastic Source has been created, and we ran the tracker:

As we can see, the current lagging corresponds to the difference between now and the latest update of the lookup, TrackMe will immediately starts to compute all metrics, the event count corresponds to the number of records (which allows the usage of outliers detection too), etc.

When TrackMe detects that the data source is a based on a lookup, the statistics are returned from the trackme metrics automatically.

Elastic source example 4: creation¶

As explained in the example 4 description, we can use a rest based search to monitor any data that is not available to the search head host TrackMe, let’s consider the example a lookup hosted on a different search head.

On the search head that owns the lookup, we can use the following query:

| from lookup:acme_assets_cmdb | eval _time=strftime(lookupLastUpdated, "%s")

Using a rest search, we will achieve the same job but this time remotely via a rest call to a search endpoint of the Splunk API using the rest command, the Elastic Source search syntax will be the following:

splunk_server="my_search_head" | from lookup:acme_assets_cmdb | eval _time=strftime(lookupLastUpdated, "%s")

The first pipe needs to contain the arguments passed to the rest command, the only mandatory argument is either splunk_server to target a unique Splunk instance, or splunk_server_group to target a group of search heads.

As well, any additional agrument can be given to the rest command by ading these in the first pipe of the search constraint. (timeout, count, etc)

Tip

- The Splunk server name needs to be between double quotes, ex: splunk_server=”my_search_head”

- In this example of a lookup, the knowledge objects needs to be shared properly such that it is available to be accessed via the rest API

Warning

Currently the rest command generates a warning message “Unable to determine response format from HTTP Header”, this message can be safety ignored as it does not impact the results in anyway, but cannot unfortunately be removed at the moment, until it is fixed by Splunk.

Once created, the new data source appears in the UI automatically, the following example shows the behaviour with a lookup that is updated every 30 minutes:

In the example of a lookup, the Search button would result in the following:

Elastic sources under the hood¶

Some additional more technical details:

Elastic sources dedicated¶

Each elastic source definition is stored in the following KVstore based lookup:

trackme_elastic_sources_dedicated

Specially, we have the following fields:

data_nameis the unique identifiersearch_constraintis the search constraintsearch_modeis the search command to be usedelastic_data_indexis the value for the index to be shown in the UIelastic_data_sourcetypeis the value for the sourcetype to be show in the UI

When the dedicated Elastic source tracker runs, the following applies:

- The report contains the structured search syntax that was automatically built by the UI when it was created

- The report calls different knowledge objects that are common to the trackers to insert and update records in the KVstore, generate flipping status records if any and generate the lagging metrics to be stored into the metric store

Besides the fact that Elastic sources appears in the data sources tab, there are no interactions between the data source trackers and the dedicated Elastic source trackers, there are independents.

In addition, the collection is used automatically by the main interface if you click on the Search button to generate the relevant search to access the events related to that entity.

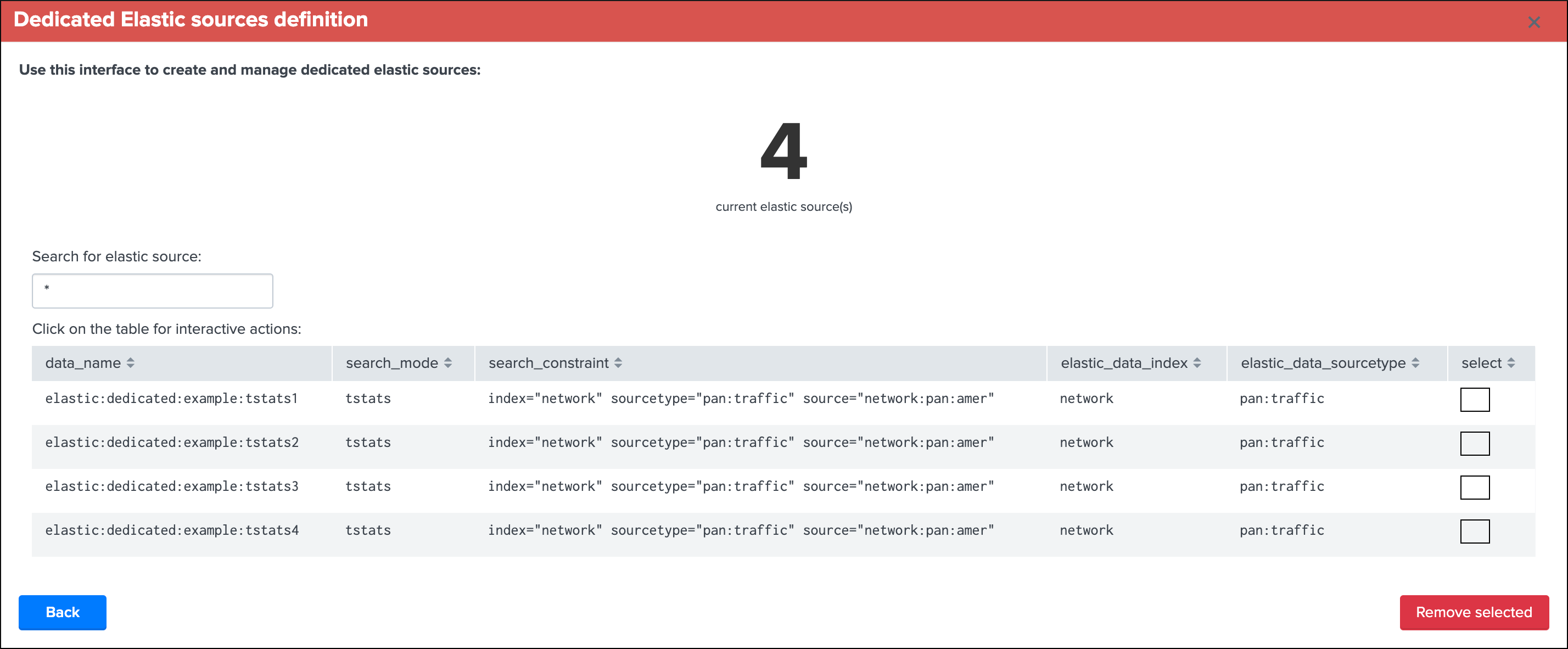

Remove Elastic Sources¶

You can delete one or more Elastic Sources, shared or dedicated, within the UI main screen:

Example with dedicated Elastic Sources:

When deleting Elastic Sources via the UI, the following actions are occurring:

- The UI calls a REST API endpoint via the REST API trackme SPL command

- API endpoints are elastic_shared_del / Delete a new shared Elastic Source and elastic_dedicated_del / Delete a new shared Elastic Source

- All related objects are suppressed automatically, this includes the Elastic Sources KVstore collections, the entities in the main Data sources collection, and the scheduled reports for dedicated Elastic Sources

- Actions and content are logges in the audit collection before their suppression

Outliers detection and behaviour analytic¶

Outliers detection feature

Outliers detection provides a workflow to automatically detect and alert when the volume of events generated by a source goes beyond or over a usual volume determined by analysing the historical behaviour.

How things work:

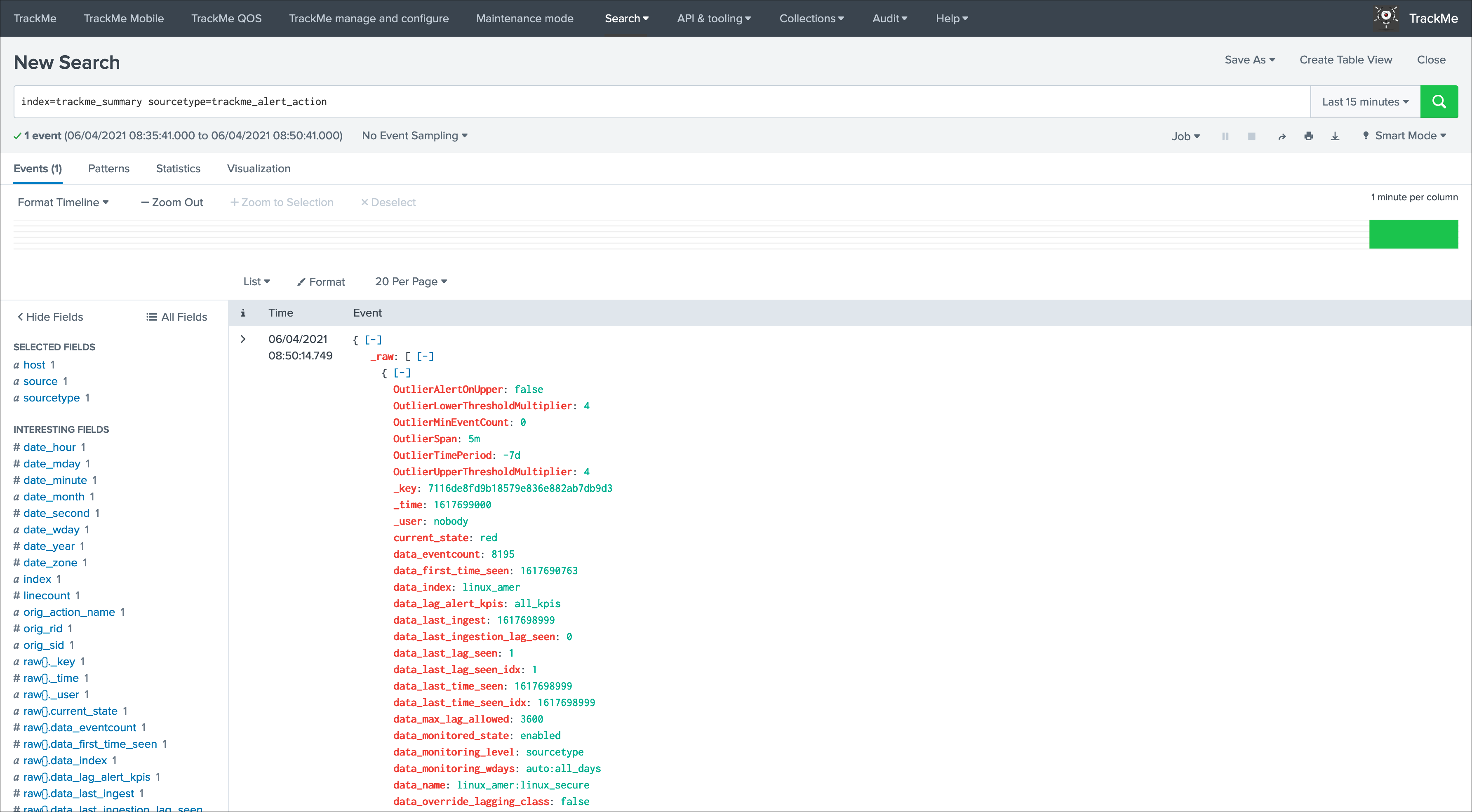

- Each execution of the data trackers generates summary events which are indexed as summary data in the same time that the KVstore collections are updated

- These events are processed by the Summary Investigator tracker which uses a standard deviation calculation based approach from the Machine Learning toolkit

- We process standard deviation calculations based on a 4 hours event count reported during each execution of the data trackers

- The Summary Investigator maintains a KVstore lookup which content is used as a source of enrichment by the trackers to define essentially an “isOutlier” flag

- Should outliers be detected based on the policy, which is customisable om a per source basis, the source will be reported in alert

- Different options are provided to control the quality of the outliers calculation, as controlling lower and upper threshold multipliers, or even switching to a static lower bond definition

- Built-in views provide the key feature to quickly investigate the source in alert and proceed to further investigations if required

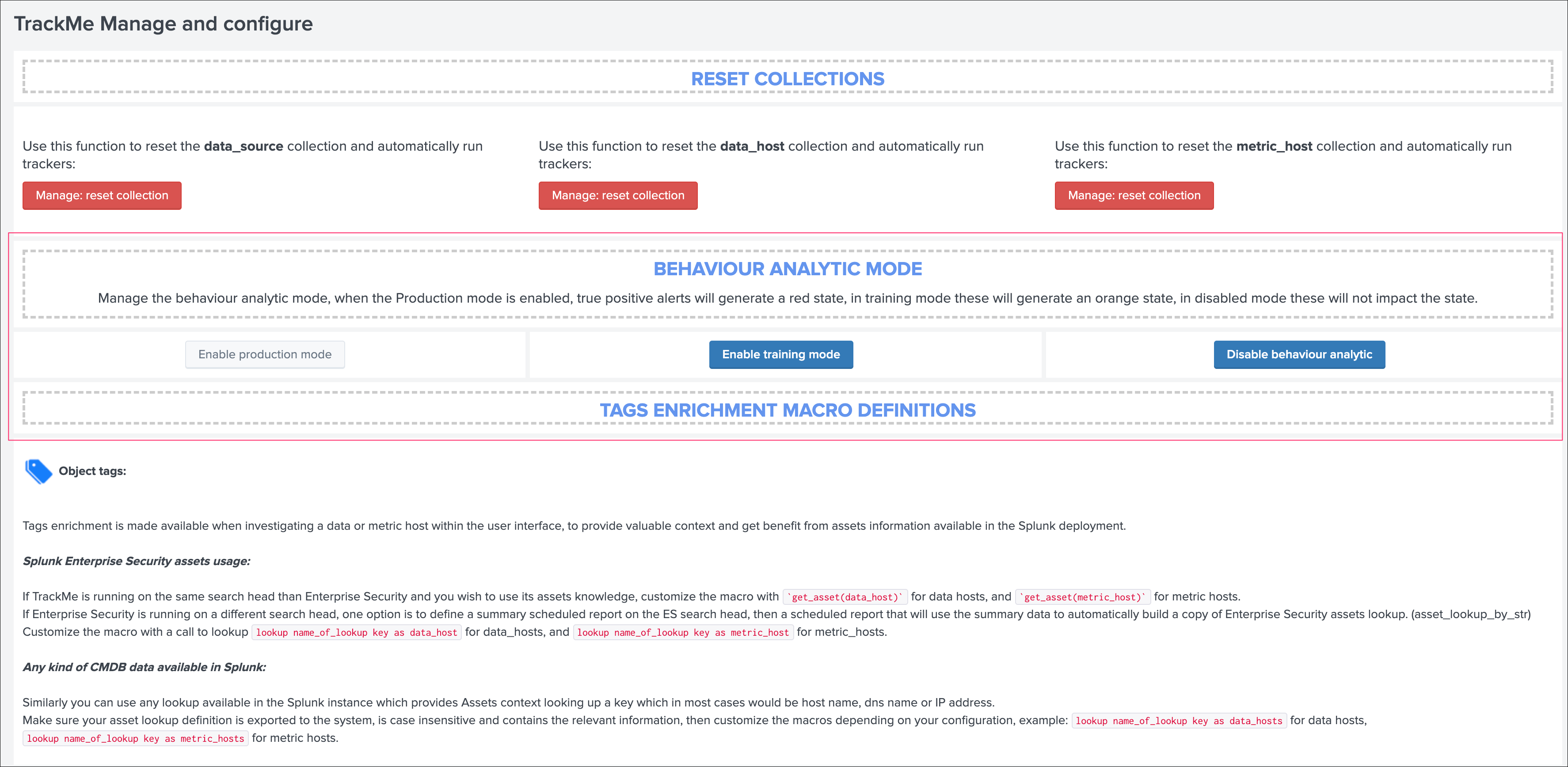

Behaviour Analytic Mode¶

By default, the application operates in Production mode, which means that an outlier detection occurring over a data source or host will influence its state effectively.

The behaviour analytic mode can be switched to the following status:

- production: affects objects status to the red state

- training : affects objects status to the orange state

- disabled: does nothing

The mode can be configured via UI in the “TrackMe manage and configure” link in the navigation bar:

Using Outliers detection¶

By default, the outlier detection is automatically activated for each data source and host, use the Outliers Overview tab to visualize the status of the Outliers detection:

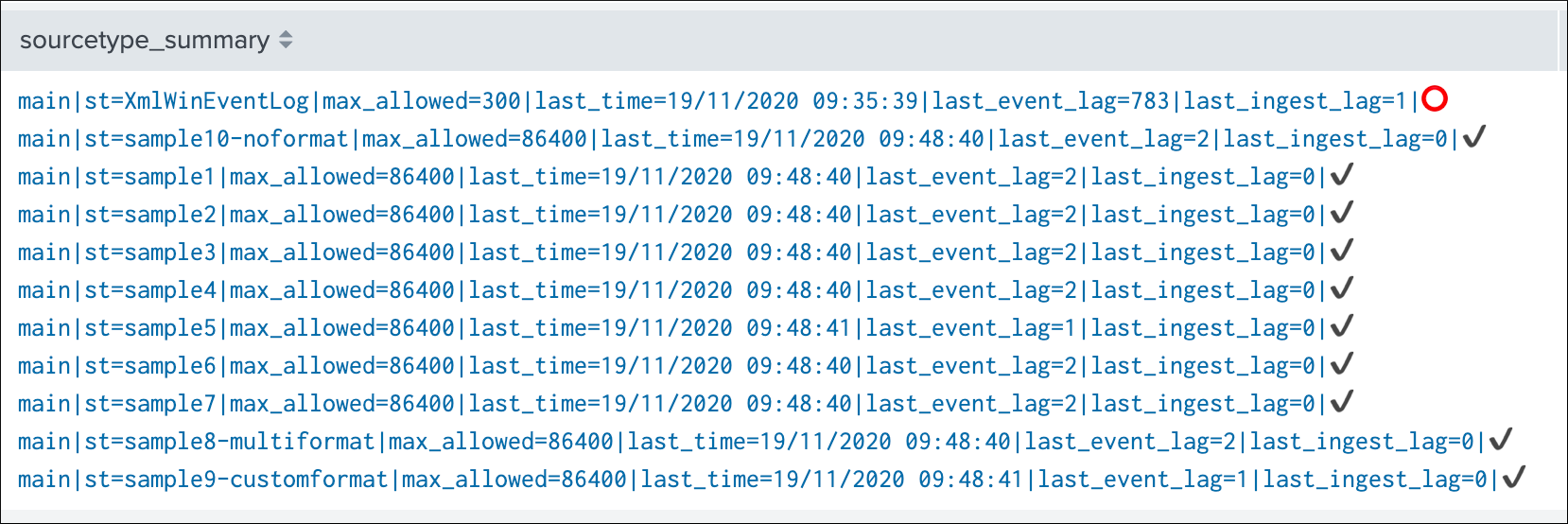

The table exposes the very last result from the analysis:

| field | Purpose |

|---|---|

| enable outlier | defines if behaviour analytic should be enabled or disabled for that source (default to true) |

| alert on upper | defines if outliers detection going over the upper calculations (default to false) |

| data_tracker_runtime | last run time of the Summary Investigator tracker which defines the statuses of Outliers detection |

| isOutlier | main flag for Outlier detection, 0=no Outliers detected, 1=Outliers detected |

| OutlierMinEventCount | static lower bound value used with static mode, in dynamic mode this is not set |

| lower multiplier | default to 4, modifying the value influences the lower bound calculations based on the data |

| upper multiplier | default to 4, modifying the value influences the upper bound calculations based on the data |

| lowerBound/upperBound | exposes latest values for the lower and upper bound |

| stddev | exposes the latest value for the standard deviation calculated for that source |

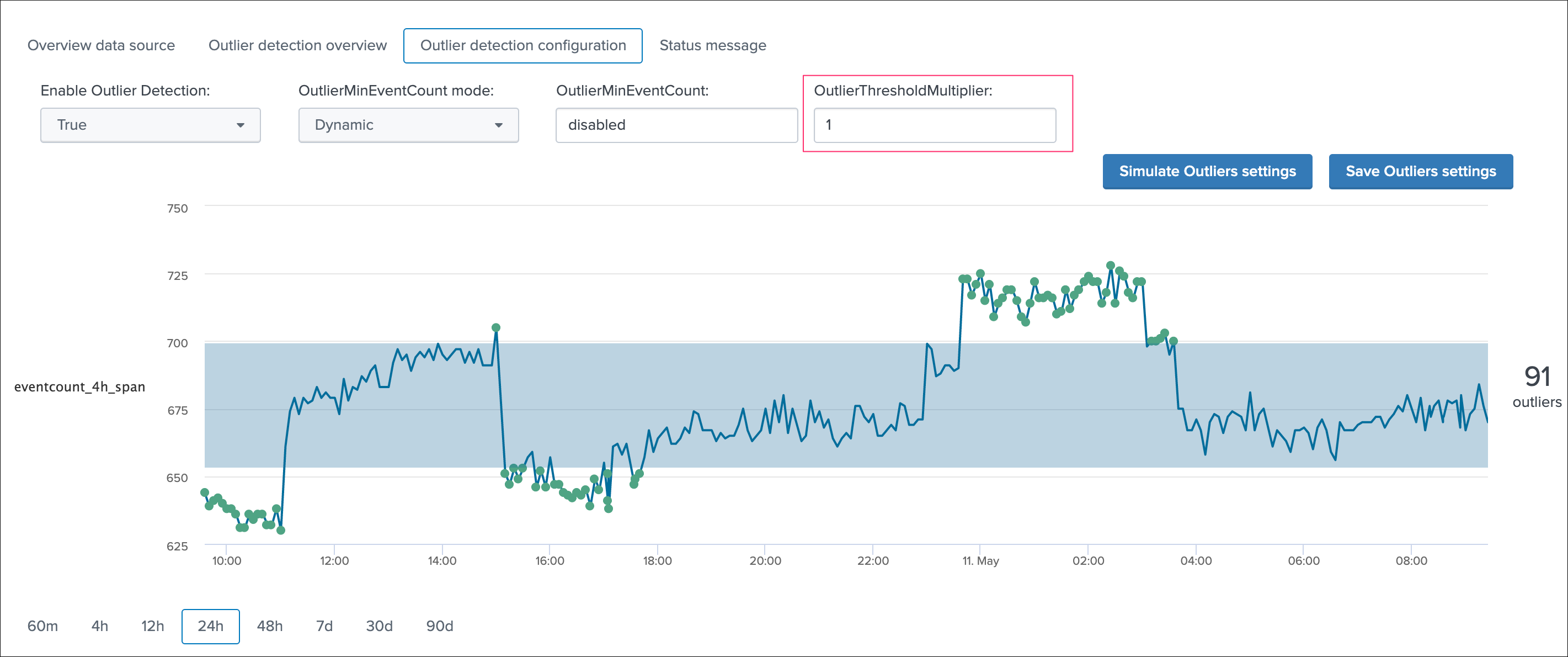

Simulating and adjusting Outliers detection¶

Use the Outliers detection configuration tab to run simulations and proceed to configuration adjustments:

For example, you can increase the value of the threshold multiplier to improve the outliers detection in regard with your knowledge of this data, or how its distribution behaves over time:

As well, in some cases you may wish to use a static lower bound value, if you use the static mode, then the outlier detection for the lower band is not used anymore and replaced by this static value as the minimal number of events:

Upper bound outliers detection does not affect the alert status by default, however this option can be enabled and the threshold multiplier be customised if you need to detect a large increase in the volume of data generated by this source:

Saving the configuration¶

Once you have validated the results from the simulation, click on the save button to immediately record the values to the KVstore collection.

When the save action is executed, you might need to wait a few minutes for it to be reported during the next execution of the Summary Investigator report.

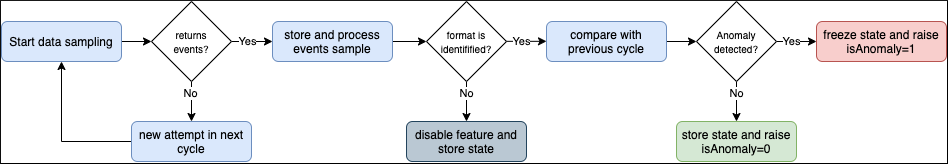

Data sampling and event formats recognition¶

Data sampling and event format recognition

The Data sampling and event format recognition feature is a powerful automated workflow that provides the capabilities to monitor the raw events formats to automatically detect anomalies and misbehaviour at scale:

- TrackMe automatically picks a sample of from every data source on a scheduled basis, and runs regular expression based rules to find “good” and “bad” things

- builtin rules are provided to identify commonly used formats of data, such as syslog, json, xml, and so forth

- custom rules can be created to extend the feature up to your needs

- rules can be created as rules that need to be matched (looking for a format or specific patterns), or as rules that must not be matched (for example looking for PII data)

- rules that must not match (exclusive rules) are always proceeded before rules that must match (inclusive), this guarantes that if any a same data source would match multiple rules, any first rule matching “bad” things will proceed before a rule matching “good” things (as the engine will stop at the first match for a given event)

- The number of events sampled during each execution can be configured per data source, and otherwise defaults to 100 events at the first sampling, and 50 events for each new execution

- checkout custom rule example creation in the present documentation

- since the version 1.2.35, you can choose to obfuscate the sampled events that are normally stored in the collection, this might be required to avoid unwanted data accesses if you have a population of users in TrackMe who need to have limited access

You access to the data sample feature on a per data source basis via the data sample tab when looking at a specific data source:

How things work:

- The scheduled report named

TrackMe - Data sampling and format detection trackerruns by default every 15 minutes - The report uses a builtin function to determine an ideal number of data sources to be processed according to the total number of data sources to be processed, and the historical performance of the search (generates a rate per second extrapolated to limit the number of sources to be processed)

- For each data source to be processed, a given number of raw events is sampled and stored in a KVstore collection named

trackme_data_sampling - The number of raw events to be sampled depends on wether the data source is handled for the first time (discovery), or if it is a normal run

- On each sample per data source, the engine processes the events and applies custom rules if any, then builtin rules are processed

- Depending on the conditions, a status and additional informational fields are determined and stored in the lookup collection

- The status stored as the field

isAnomalyis loaded by the data sources trackers and taken into account for the global data source state analysis

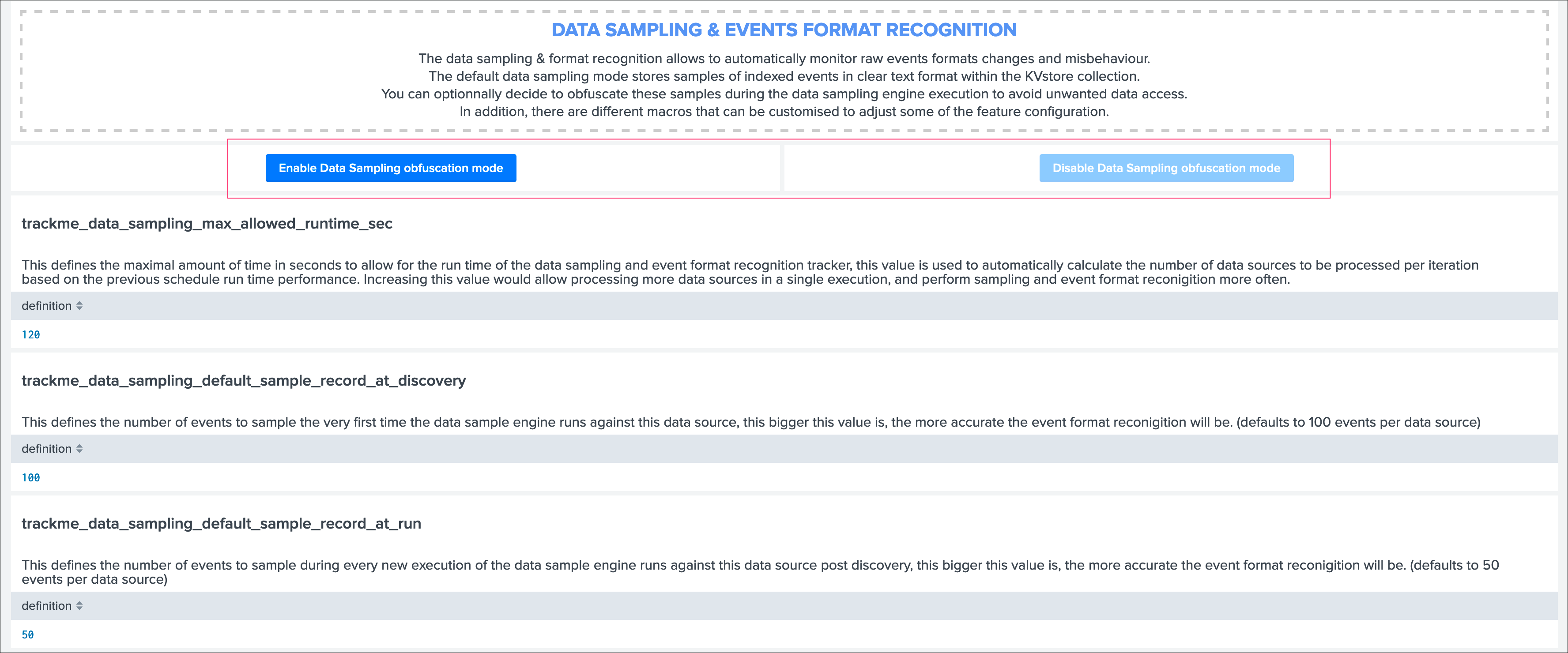

Data Sampling obfuscation mode¶

Access the configuration page from the navigation bar in TrackMe, “TrackMe manage and configure”:

- In the default mode, that is

Disable Data Sampling obfuscation mode, events that are sampled are stored in the data sampling KVstore collection and can be used to review the results from the latest sampling operation - In the

Enable Data Sampling obfuscation mode, events are not stored anymore and replaced by an admin message, the sampling processing still happens the same way but events cannot be reviewed anymore using the latest sample traces - In such a case, when then obfuscation mode is enabled, users will need to either run the rules manually to locate the messages that were captured to the conditions being met (bad format, PII data, etc) or use the Smart Smart Status feature to have TrackMe run this operation on demand

As a summary, you can enable the obfuscation mode if you have for instance a population of non admin users in TrackMe and you need to prevent them from accessing events they are not supposed to be able to accesss according to your RBAC policies in Splunk.

When a user attempts to create a new custom Data Sampling rule, the UI provides event sampling extracts:

These searches are performed on behalf on the user as normal Splunk searches, as such if the user cannot access to these data, there would be no results accessible.

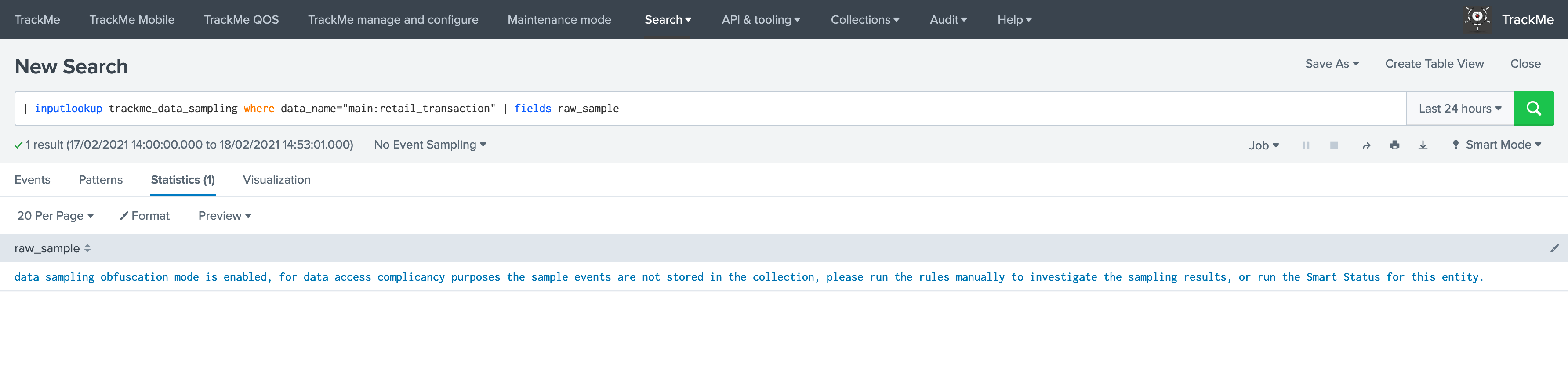

When the obfuscation mode is enabled, trying to access to the latest sample events via the UI (or directly via access to the collection) would result in the following content:

As a conclusion, enable the data sampling obfuscation mode if you are concerned about having users able to access to events they are not supposed to, when it is enabled, the collection cannot contain amymore any potentially sensitive information while the main and more valuable features are preserved.

Summary statuses¶

The data sampling message can be:

green:if no anomalies were detectedblue:if the data sampling did not handle this data source yetorange:if conditions do not allow to handle this data source, which can be multi-format detected at discovery, or no identifiable event formats (data sampling will be deactivated automatically)red:if anomalies were detected by the data engine, anomalies can be due to a change in the event format, or multiple events formats detected post discovery

Green state: no anomalies were detected, data sampling ran and is enabled

Blue state: data sampling engine did not inspect this data source yet

Orange state: data sampling was disabled due to events format recognition conditions that would not allow to manage this data properly (multiformat, no event formats identification possible)

Red state: anomalies were detected

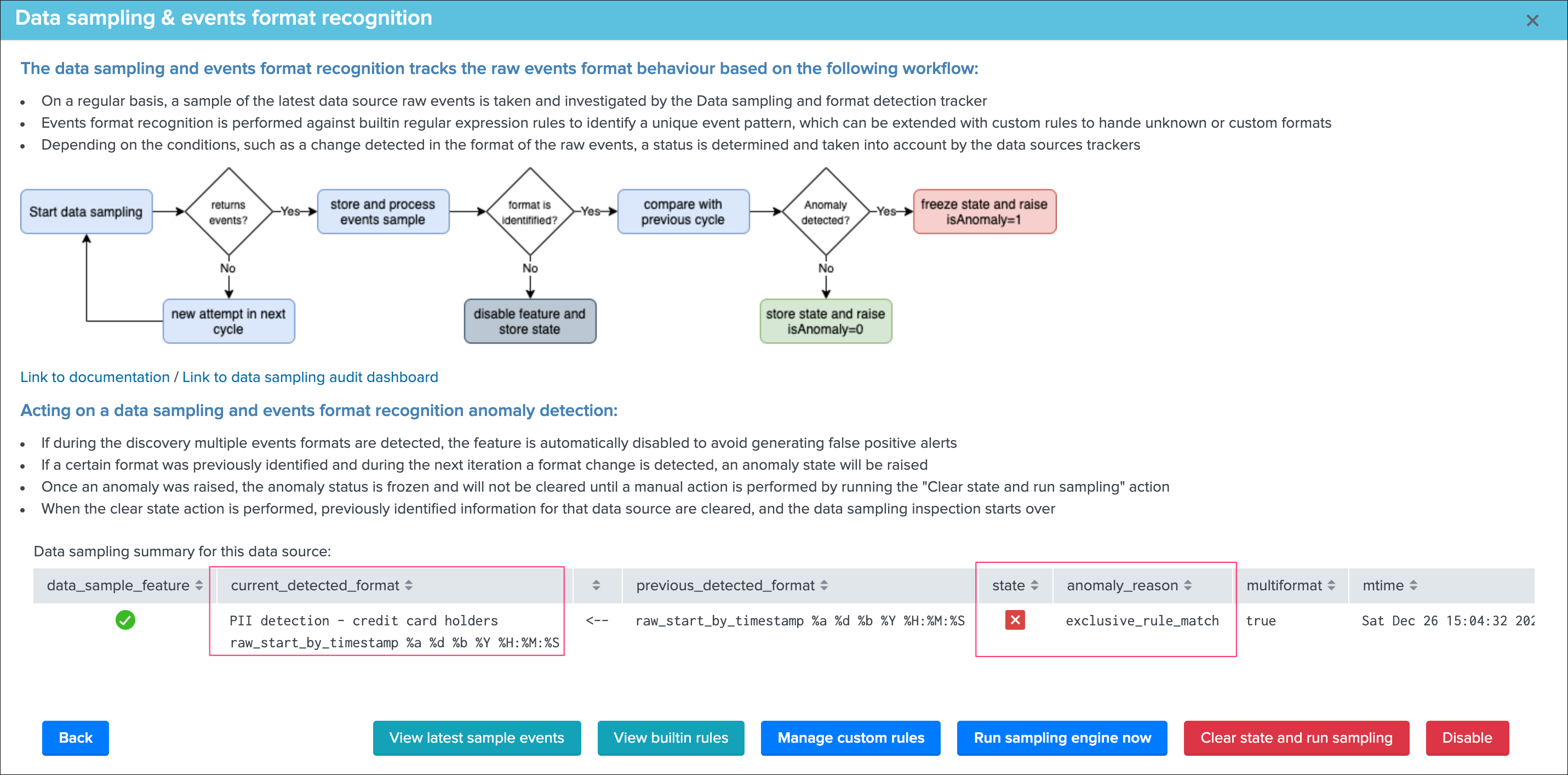

Manage data sampling¶

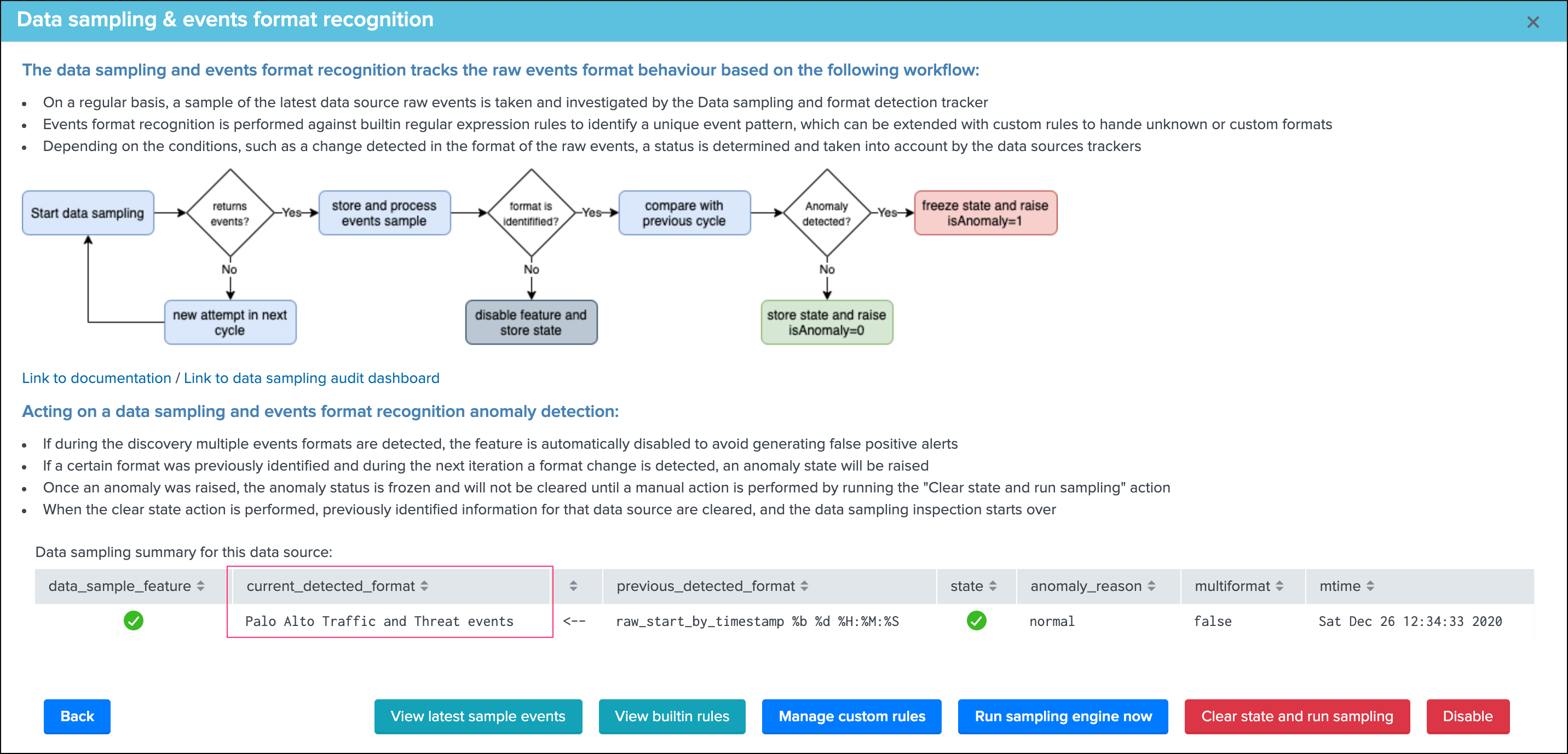

The Manage data sampling button provides access to functions to review and configure the feature:

The summary table shows the main key information:

data_sample_feature:is the data sampling feature enabled or disabled for that data source, rendered as an iconcurrent_detected_format:the event format that has been detected during the last samplingprevious_detected_format:the event format that was detected in the previous samplingstate:the state of the data sampling rendered as an iconanomaly_reason:the reason why an anomaly is raised, or “normal” if there are no anomaliesmultiformat:shall more than one format of events be detected (true / false)mtime:the latest time data sampling was processed for this data sourcedata_sampling_nr:the number of events taken per sampling operation, defaults to 100 events at discovery then 50 events for each new sampling (can be configured via the action Update records/sample)

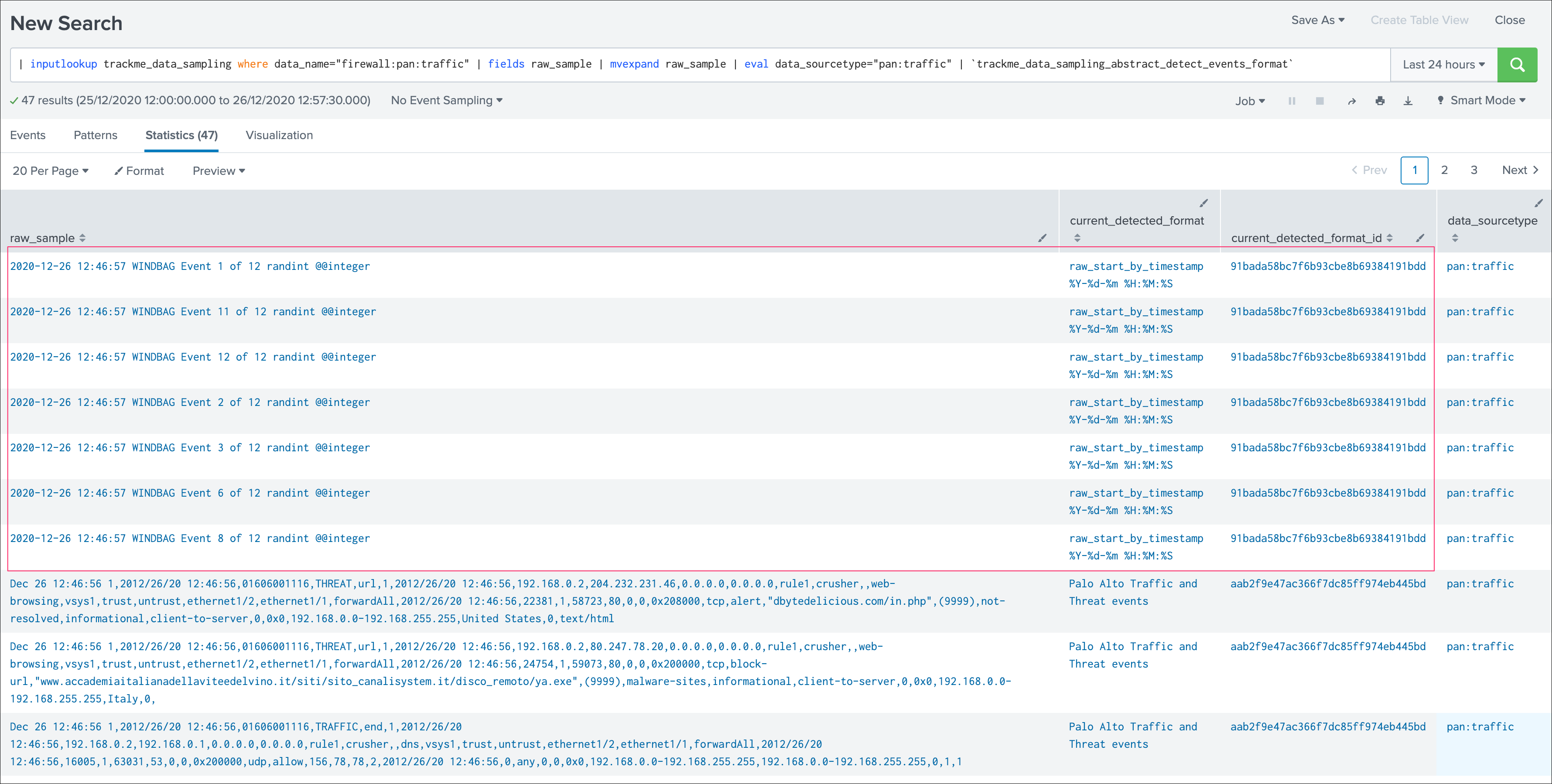

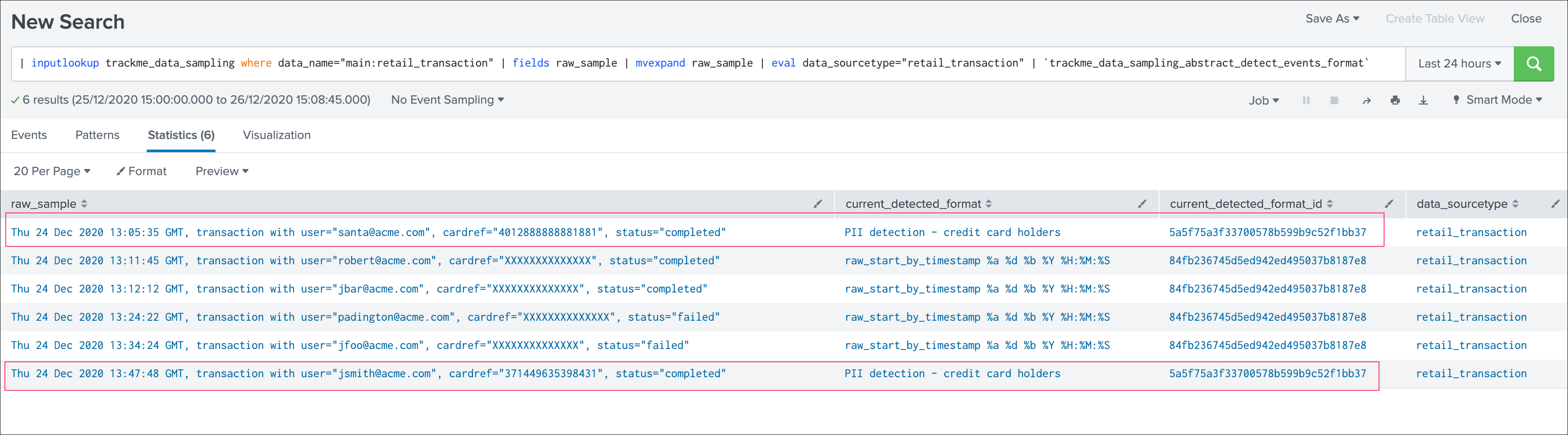

View latest sample events¶

This button opens in the search UI the last sample of raw events that were processed for this data source, the search calls a macro which runs the events format recognitions rules as:

| inputlookup trackme_data_sampling where data_name="<data_name>" | fields raw_sample | mvexpand raw_sample | `trackme_data_sampling_abstract_detect_events_format`

This view can be useful for trouble shooting purposes to determine why an anomaly was raised for a given data source.

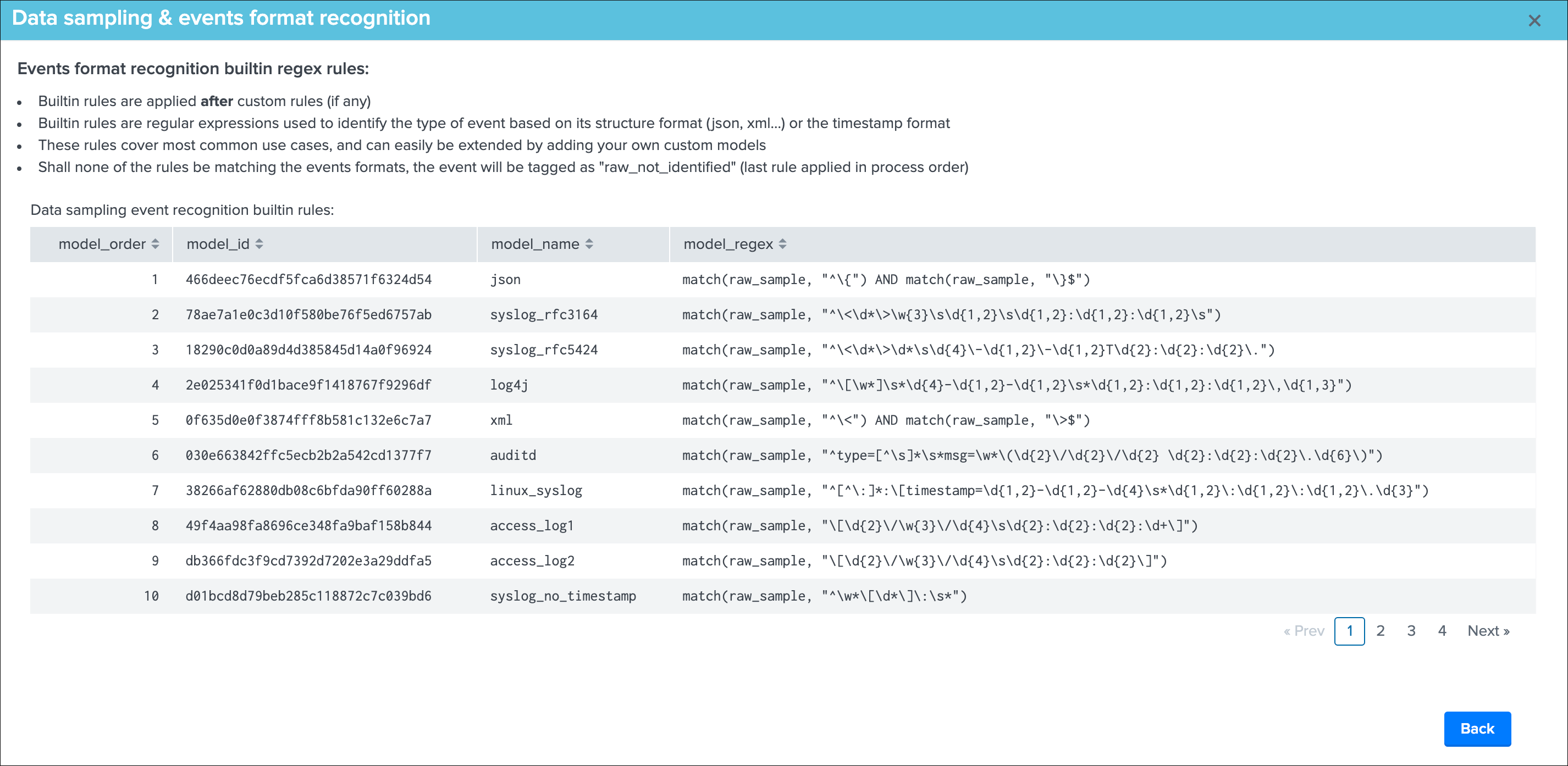

View builtin rules¶

This button opens a new view that exposes the builtin rules used by TrackMe, and the order in which rules are processed:

Builtin rules should not be modified, instead use custom rules to handle event formats that would not be properly identified by the builtin regular expression rules.

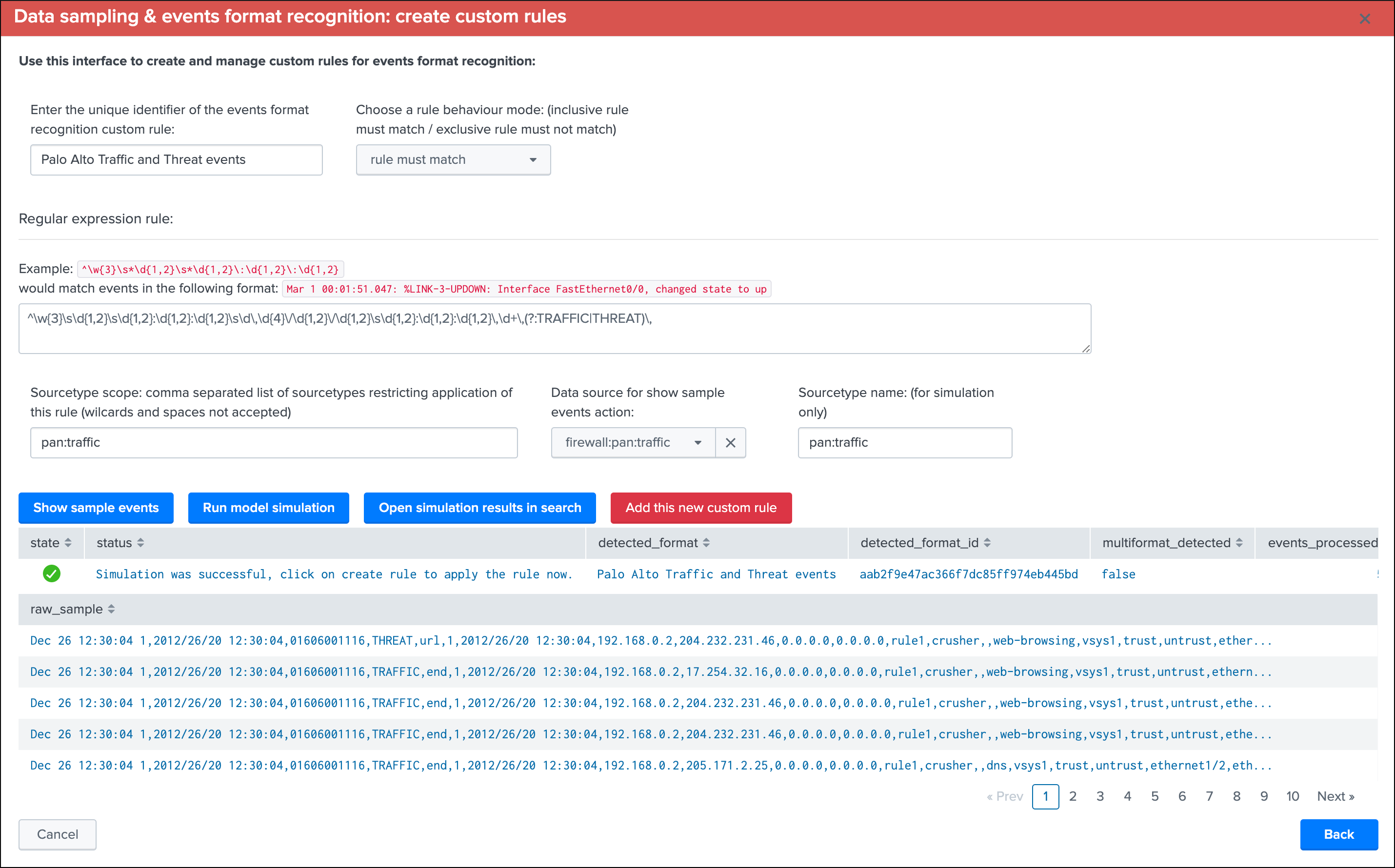

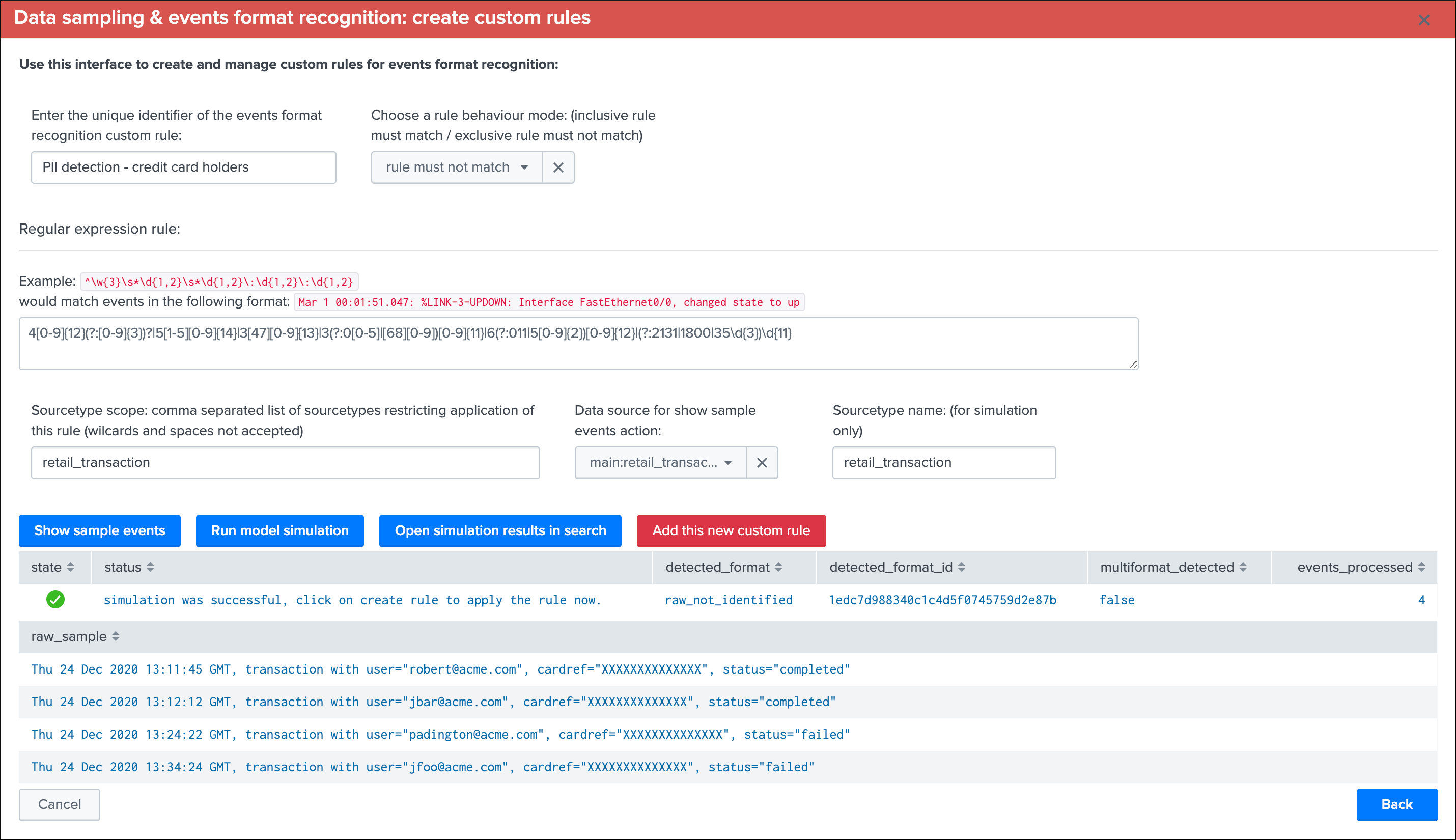

Manage custom rules¶

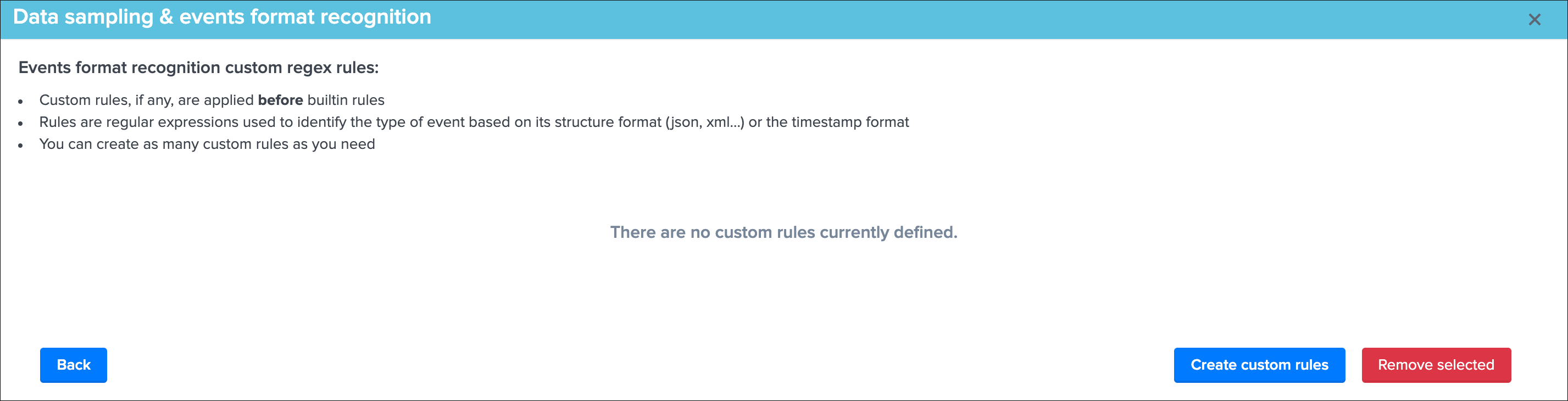

Custom rules provides a workflow to handle any custom sourcetypes and event formats that would not be identified by TrackMe, or patterns that must not be matched, by default there are no custom rules and the following screen would appear:

This view allows you to create a new custom rule (button Create custom rules) or remove any existing custom rules that would not be required anymore. (button Remove selected)

Tip

Each custom rule can be restricted to a given list of explicit sourcetypes, or applied against any sourcetype. (default)

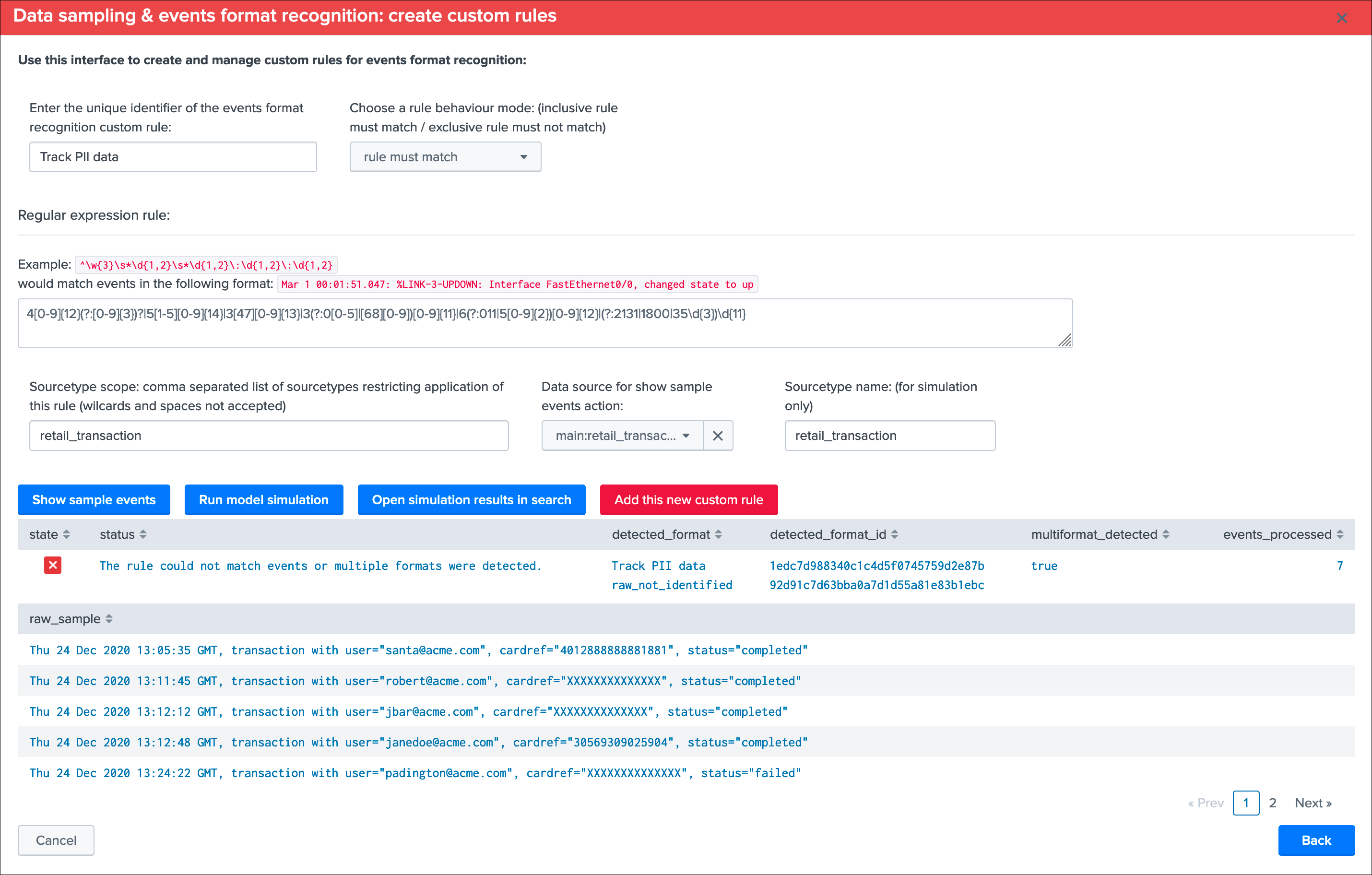

Create custom rules

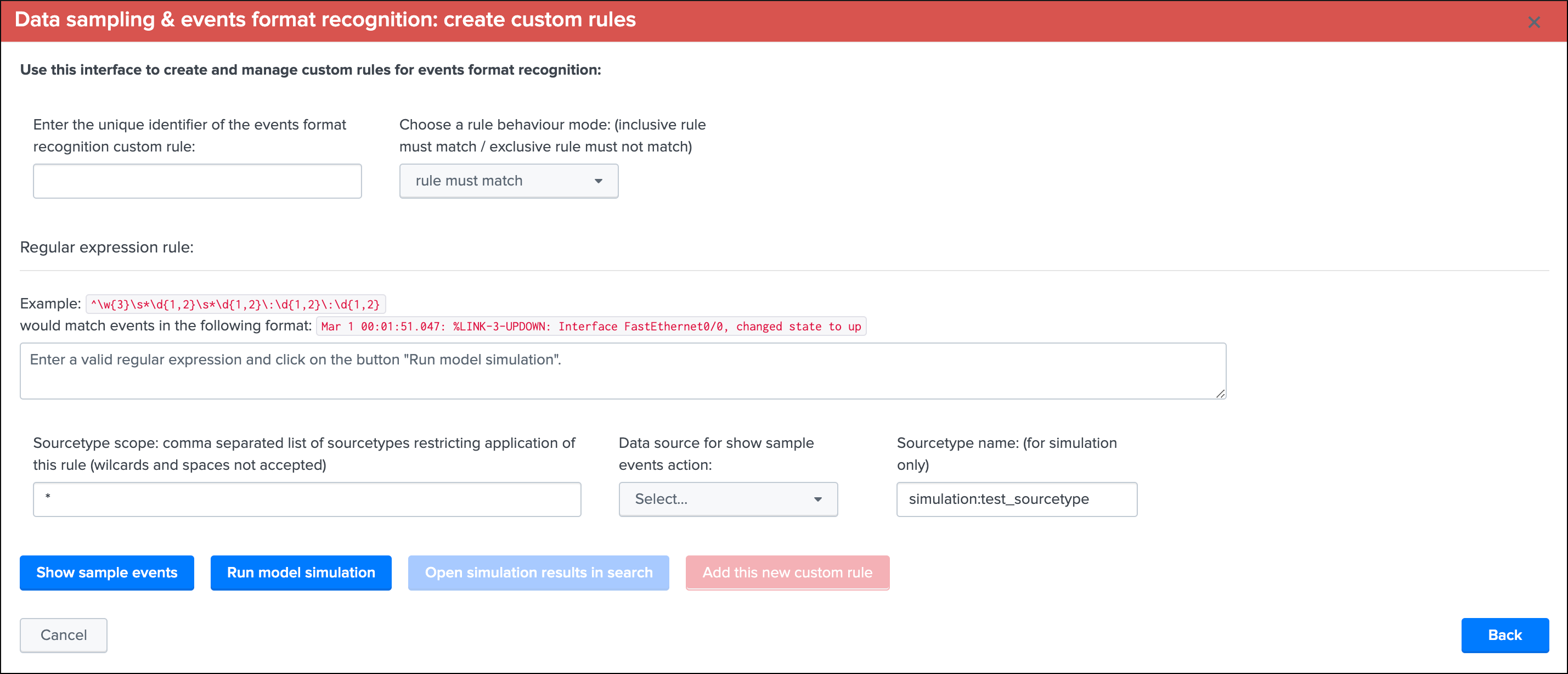

This screen alows to test and create a new custom rule based on the current data source:

Note: While you create a new custom rule via a specific data source, custom rules are applied to all data sources

To create a new custom rule:

- Enter a name for the rule, this value is a string of your choice that will be used to idenfity the match, it needs to be unique for the entire custom source collection and will be converted into an md5 hash automatically

- Choose if the rule is a “rule must match” or “rule must not match” type of rule, this will drive the match behaviour to define the state of the data sampling results

- Enter a valid regular expression that uniquely identifies the events format

- Optionally restrict the scope of application by sourcetype, you can specify one or more sourcetypes under the form of a comma separated list of values

- Click on “Run model simulation” to simulate the exectution of the new models

- Optionnaly click on “Show sample events” to view a mini sample of the events within the screen

- Optionnaly click on “”Open simulation results in search” to open the details of the rules processing per event in the search UI

- Finally if the status of the simulation is valid, click on “Add this new custom rule” to permanently add this new custom rule

Example:

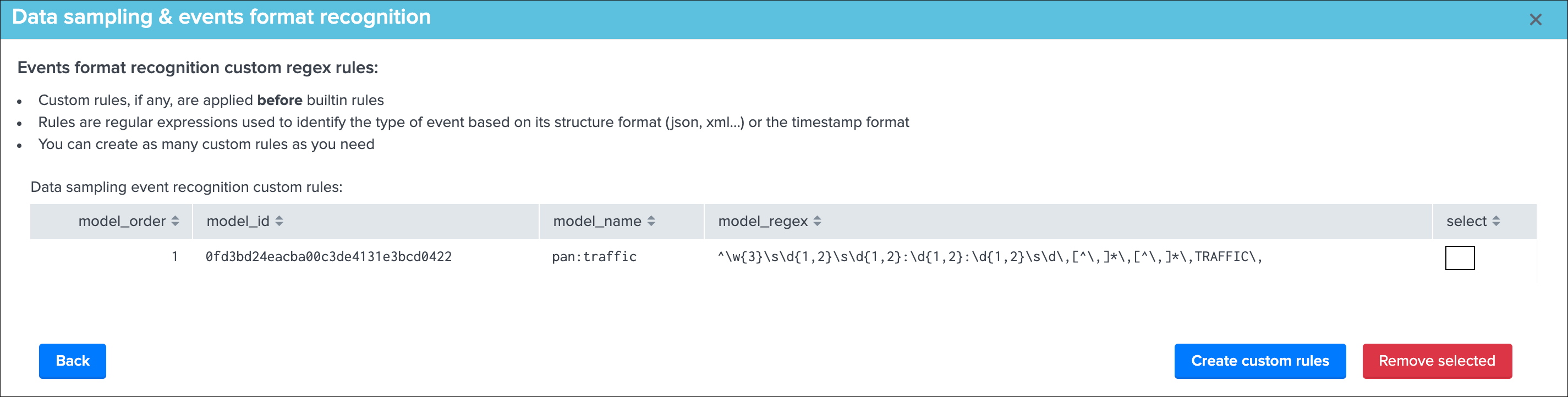

Once you have created a new custom rule, this rule will be applied automatically to future executions of the data sampling engine:

- If the format switches from a format idenfitied by the the builtin rules to a format identified by a custom rule, it will not appear in anomaly

- You can optionally clear the state of the data sampling for that data source to clean any previous states and force a new discovery

Remove custom rules

Once there is at least one custom rule defined, the list of custom rules appears in the table and can be selected for suppression:

When a custom rule is removed, future executions of the data sampling engine will not consider the rule deleted anymore, optionally you can run the data sampling engine now or clear the state for a data source.

Custom rules are stored in a KVstore collection which can as well be manually edited if you need to update an exising rule, or modify the order in which rules are processed:

trackme_data_sampling_custom_models

Run sampling engine now¶

Use this function to force running the data sampling engine now against this data source, this will not force a new discovery and will run the data sampling engine normally. (the current status is preserved)

When to use the run sampling engine now?

- You can can run this action at anytime and as often as you need, the action runs the data sampling engine for that data source only

- This action will have no effect if an anomaly was raised for the data source already, when an anomaly is detected the status is frozen (see Clear state and run sampling)

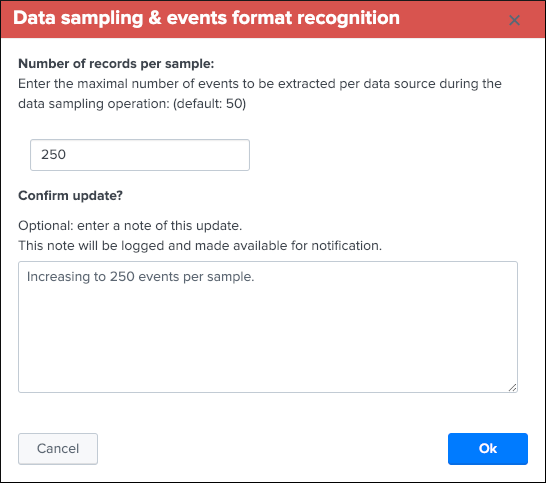

Update records/sample¶

You can define a custom number of events to be taken per sample using this action button within the UI.

By default, the Data sampling proceeds as following:

- When the first iteration for a given data source is processed, TrackMe picks a sample of 100 events

- During every new iteration, a sample of 50 events is taken

In addition, these values are defined globally for the application via the following macros:

- trackme_data_sampling_default_sample_record_at_discovery

- trackme_data_sampling_default_sample_record_at_run

Use this UI to choose a different value, increasing the number of events per sample improves the sampling process accuracy, at the cost of more processing and more memory and storage costs for the KVstore collection:

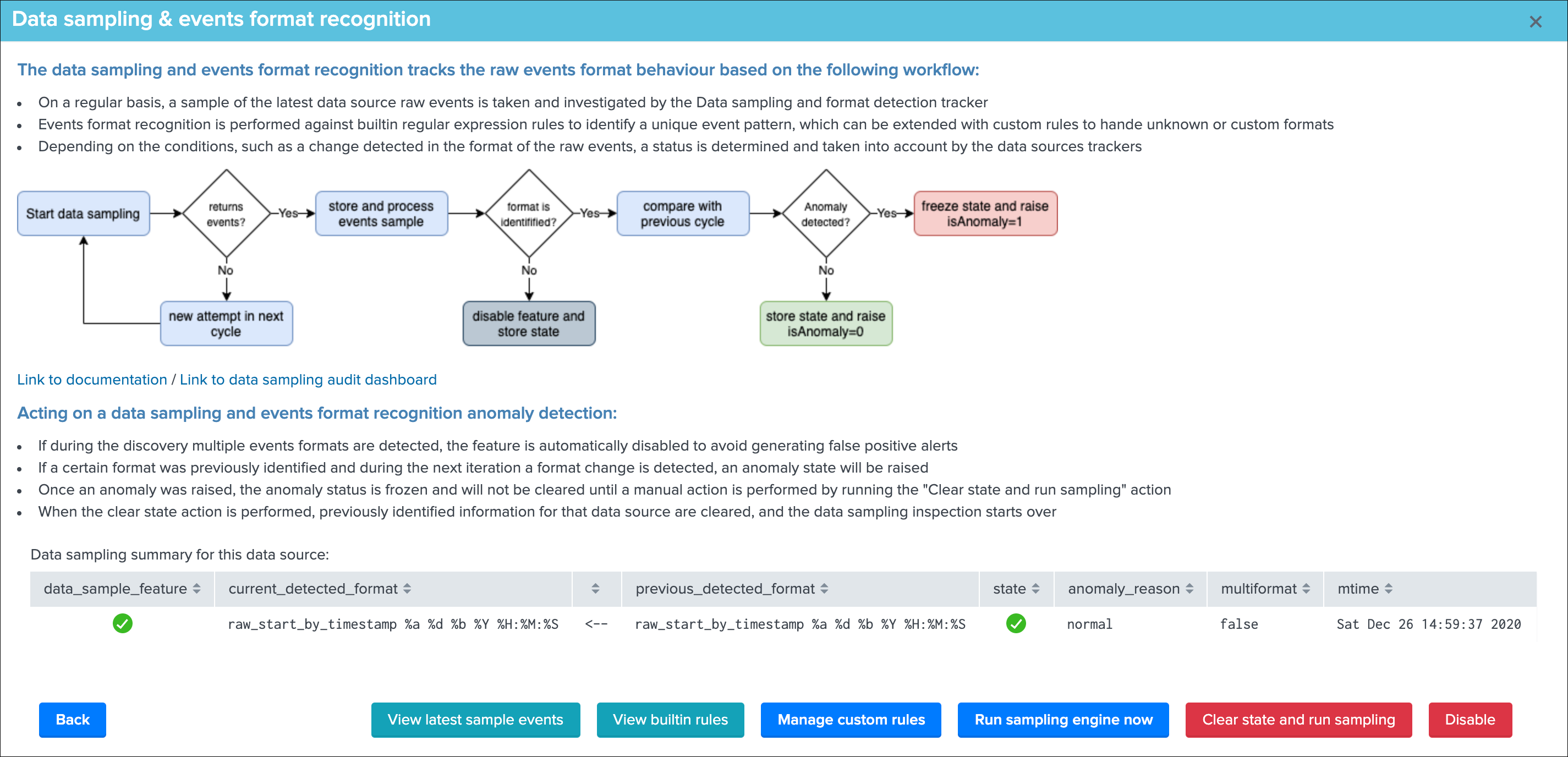

Clear state and run sampling¶

Use this function to clear any state previously determined, this forces the data source to be considered as it was the first time it was investigated by the data sampling engine. (a full sampling is processed and there are no prior status taken into account)

When to use the clear state and run sampling?

- Use this action to clear any known states for this data source and run the inspection from zero, just as if it was discovered for the first time

- You can use this action to clear an anomaly that was raised, when an alert is raised by the data sampling, the state is frozen until this anomaly is reviewed, once the issue is understood and fixed, run the action to clear the state and restart the inspection workflow for this data source

Disable Data sampling for a give data source¶

Use this function to disable data sampling for a given data source, there can be cases where you would need to disable this feature if for example there is a lack of quality which cannot be fixed, and some random formats are introduced out of your control.

Disabling the feature means defining the value of the field data_sample_feature to disabled in the collection trackme_data_sampling, once disabled the UI would show:

The Data sampling feature can be enabled / disabled at any point in time, as soon as a data source is disabled, TrackMe stops considering it during the sampling operations.

Data sampling Audit dashboard¶

An audit dashboard is provided in the audit navigation menu, this dashboard provides insight related to the data sampling feature and workflow:

Menu Audit / TrackMe - Data sampling and events formats recognition audit

Data sampling example 1: monitor a specific format¶

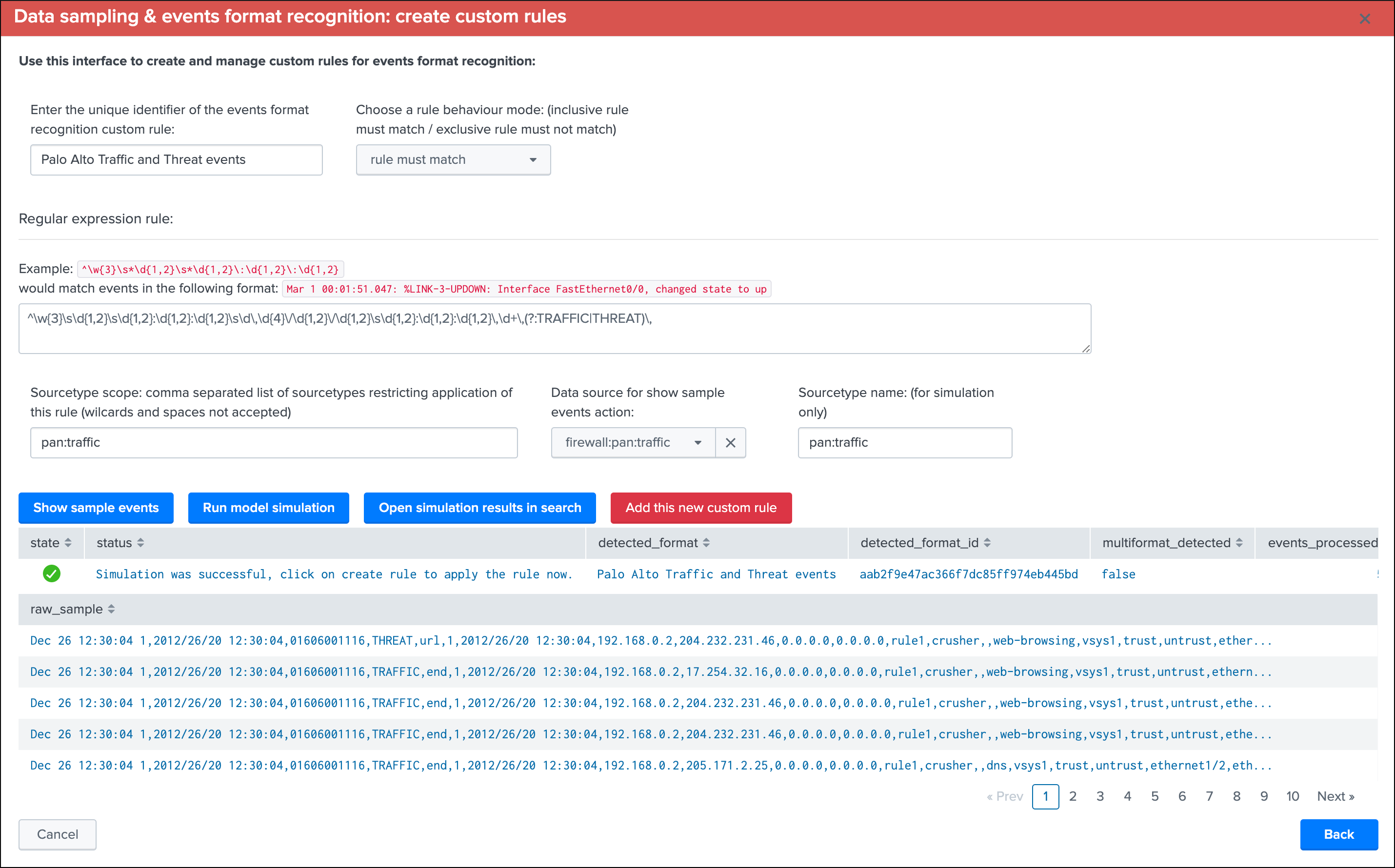

Let’s assume the following use case, we are ingesting Palo Alto firewall data and we want to monitor that our data is stricly respecting a specific expected format, any event that would not match this format would most likely be resulting from malformed events or issues in our ingestion pipeline:

Within the custom rules UI, we proceed to the creation of a new custom rule, in short our events look like:

Dec 26 12:15:01 1,2012/26/20 12:15:01,01606001116,TRAFFIC,start,1,2012/26/20 12:15:01,192.168.0.2,204.232.231.46,0.0.0.0,0.0.0.0,

Dec 26 12:15:02 1,2012/26/20 12:15:02,01606001116,THREAT,url,1,2012/26/20 12:15:02,192.168.0.2,204.232.231.46,0.0.0.0,0.0.0.0,

We could use the following regular expression to stricly match the format, the data sampling is similar to a where match SPL statement:

^\w{3}\s*\d{1,2}\s*\d{1,2}:\d{1,2}:\d{1,2}\s*\d\,\d{4}\/\d{1,2}\/\d{1,2}\s*\d{1,2}:\d{1,2}:\d{1,2}\,\d+\,(?:TRAFFIC|THREAT)\,

Note: the regular expression doesn’t have to be complex, it is up to your decide how strict it should be depending on your use case

Tip

The data sampling engine will stop at the first regular expression match, to handle advanced or more complex configuration, use the sourcetype scope to restrict the custom rule to sourcetypes that should be considered

We create a rule must match type of rule, which means that in normal circumstances we expect all events to be matched by our custom rule, otherwise this would be considered as an anomaly.

Once the rule has been created:

The next execution of the data sampling will report the name of the rule for each data source that is matching our conditions:

Should a change in the events format happen, such as malformed events happening for any reason, the data sampling rule would match these exceptions and render a status error to be reviewed.

Review of the latest events sample would clearly show the root cause of the issue: (button View latest sample events):

As the data sampling engine stops proceeding a data source as soon as an issue was detected, these events are the exact events that have caused the anomaly exception at the exact time it happened.

Once investigations have been performed, the root cause was identified and ideally fixed, a TrackMe admin would clear the data sampling state to free the current state and allow the workflow to proceed again in further executions.

Data sampling example 2: track PII data card holders¶

Let’s consider the following use case, we ingest retail transaction logs which are not supposed to contain PII data (Personally Identifiable Information) because the events are anonymised during the indexing phase. (this obviously is a simplitic example for the demonstration purposes)

In our example, we will consider credit card references which are replaced by the according number of “X” characters:

Thu 24 Dec 2020 13:12:12 GMT, transaction with user="jbar@acme.com", cardref="XXXXXXXXXXXXXX", status="completed"

Thu 24 Dec 2020 13:34:24 GMT, transaction with user="jfoo@acme.com", cardref="XXXXXXXXXXXXXX", status="failed"

Thu 24 Dec 2020 13:11:45 GMT, transaction with user="robert@acme.com", cardref="XXXXXXXXXXXXXX", status="completed"

Thu 24 Dec 2020 13:24:22 GMT, transaction with user="padington@acme.com", cardref="XXXXXXXXXXXXXX", status="failed"

To track for an anomaly in the process that normally anonymises the data, we could rely on a regular expression that targets valid credit card numbers:

See: https://www.regextester.com/93608

4[0-9]{12}(?:[0-9]{3})?|5[1-5][0-9]{14}|3[47][0-9]{13}|3(?:0[0-5]|[68][0-9])[0-9]{11}|6(?:011|5[0-9]{2})[0-9]{12}|(?:2131|1800|35\d{3})\d{11}

Should any event be matching this regular expression, we would most likely face a situation where we have indexed a clear text information that is very problematic, let’s create a new custom rule of a rule must not match type to track this use case automatically, to avoid false positive detection we will restrict this custom rule to a given list of sourcetypes:

Our data uses a format that is recognized automatically by builtin rules, and would appears as following in normal circumstances:

After some time, we introduce events containing real clear text credit card numbers, eventually our custom rule will automatically detect it and state an alert on the data source:

We can clearly understand the root cause of the issue reported by TrackMe, shall we investigate further (button View latest sample events):

Thanks to the data sampling feature, we are able to get an automated tracking that is working at any scale, keep in mind that TrackMe will proceed by picking up samples, which means a very rare condition will potentially not be detected.

However, there is statistically a very high level of chance that if this is happening on a regular basis, this will be detected without having to generate very expensive searches that would look at the entire subset of data. (which would be very expensive and potentially not doable at scale)

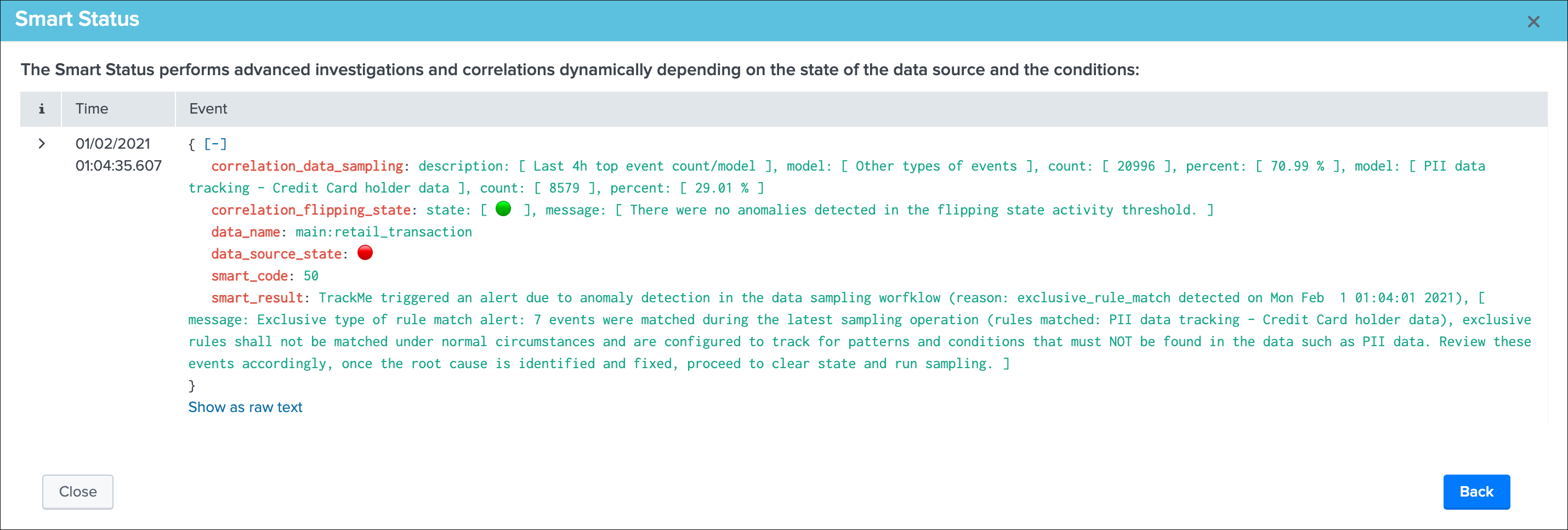

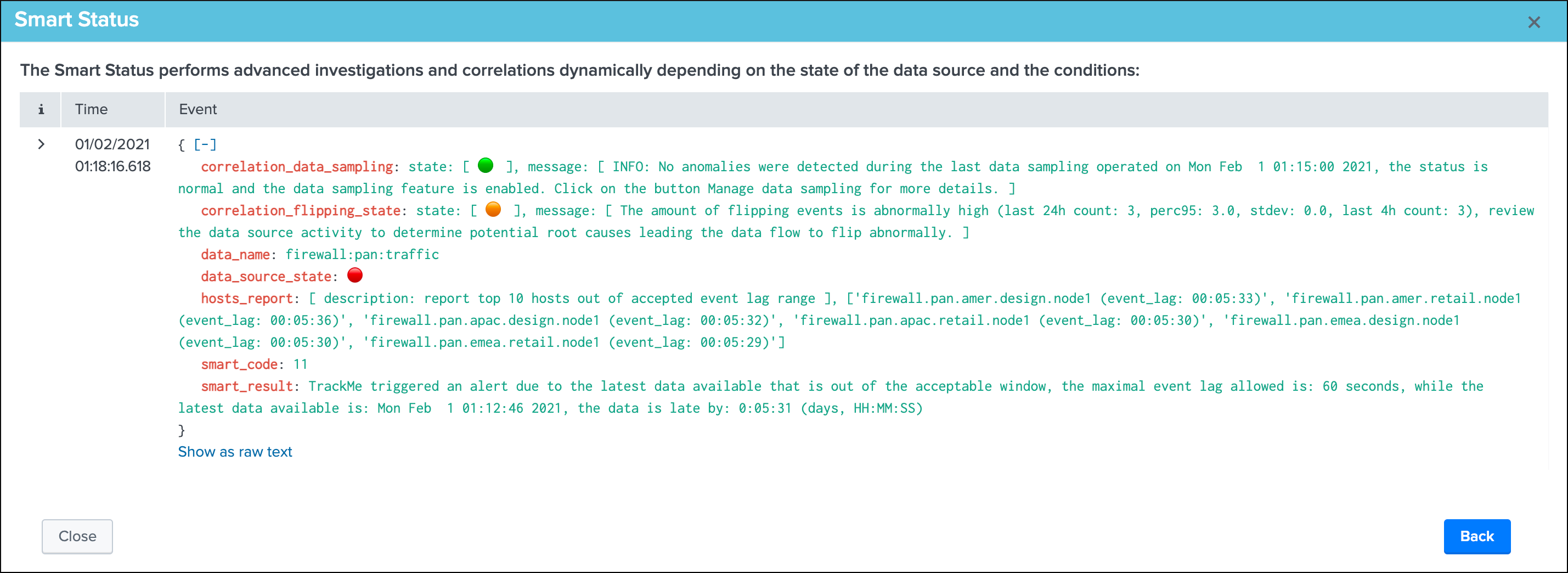

Smart Status¶

Smart Status Introduction¶

The Smart Status is a powerful feature that runs automated investigations and correlations.

Under the cover, the Smart Status is a Python based backend exposed via a REST API endpoint, it is available in the TrackMe UI via the REST API trackme SPL command and any third party integration via the Smart Status endpoints.

The feature uses the Python SDK for Splunk and Python capabilities to perform various conditional operations depending on the status of the entity, for instance in short for a data source it does:

- retrieve the current state of the entity

- perform a correlation over the flipping events to determine if the rate of flipping events is abnormal

- if the status is not green, determine the reason for the status and conditionally perform correlations and provide a report highlting the findings

- finally generate a JSON response with a status code depending on the investigations to ease and fast the understanding of the failure root cause

In short, the purpose of the feature is to quickly and automatically investigate the entity status, and provide a short path for investigations.

Smart Status within the UI¶

In the UI, access the Smart Status the open-up screen for a given entity, for data sources, hosts and metric hosts:

Smart Status example: (normal state entity)

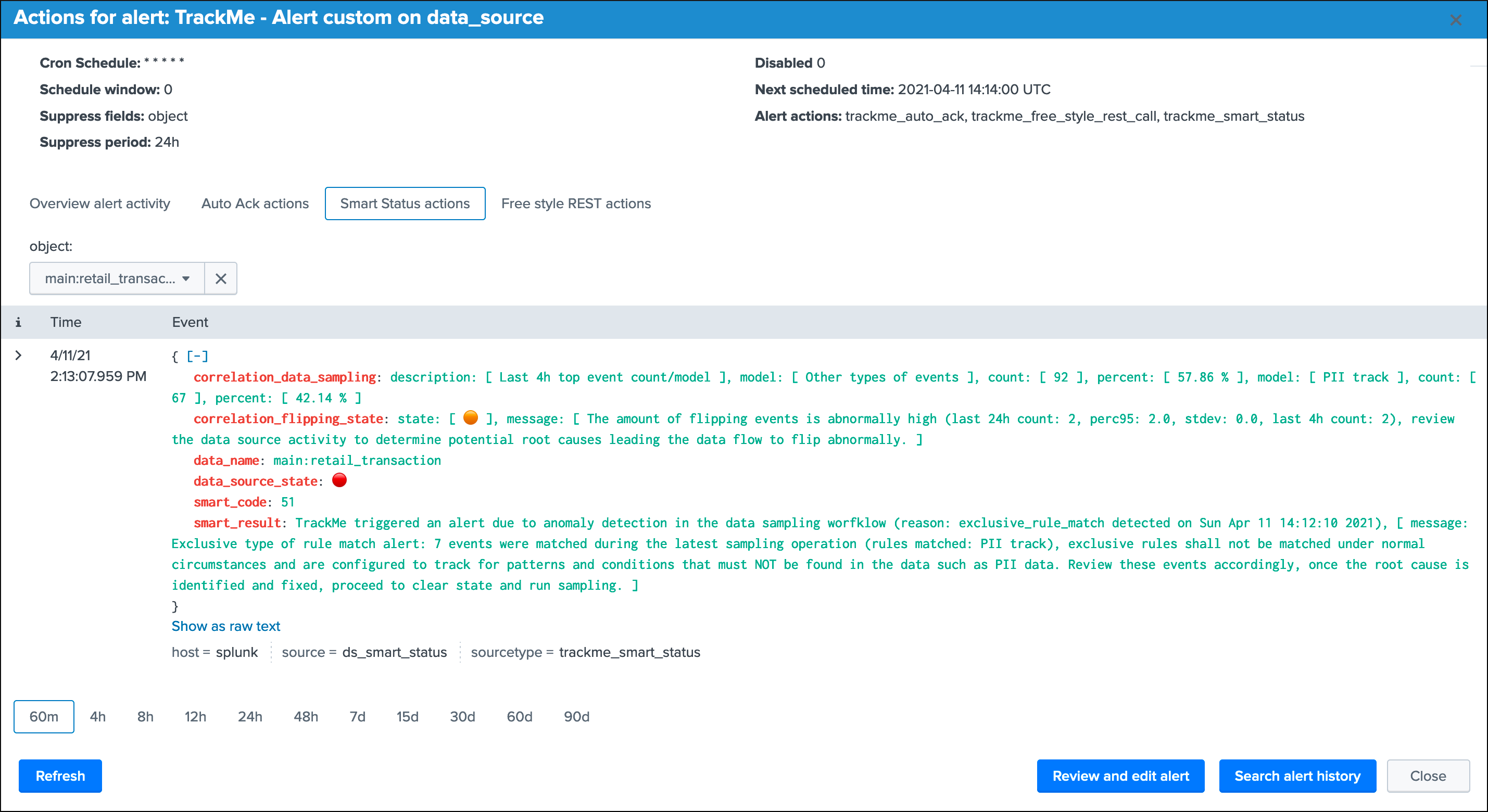

Smart Status example: (alert state entity due to outliers)

Smart Status example: (alert state entity due to data sampling exclusive rule matching PII data)

Smart Status example: (alert state entity due to lagging)

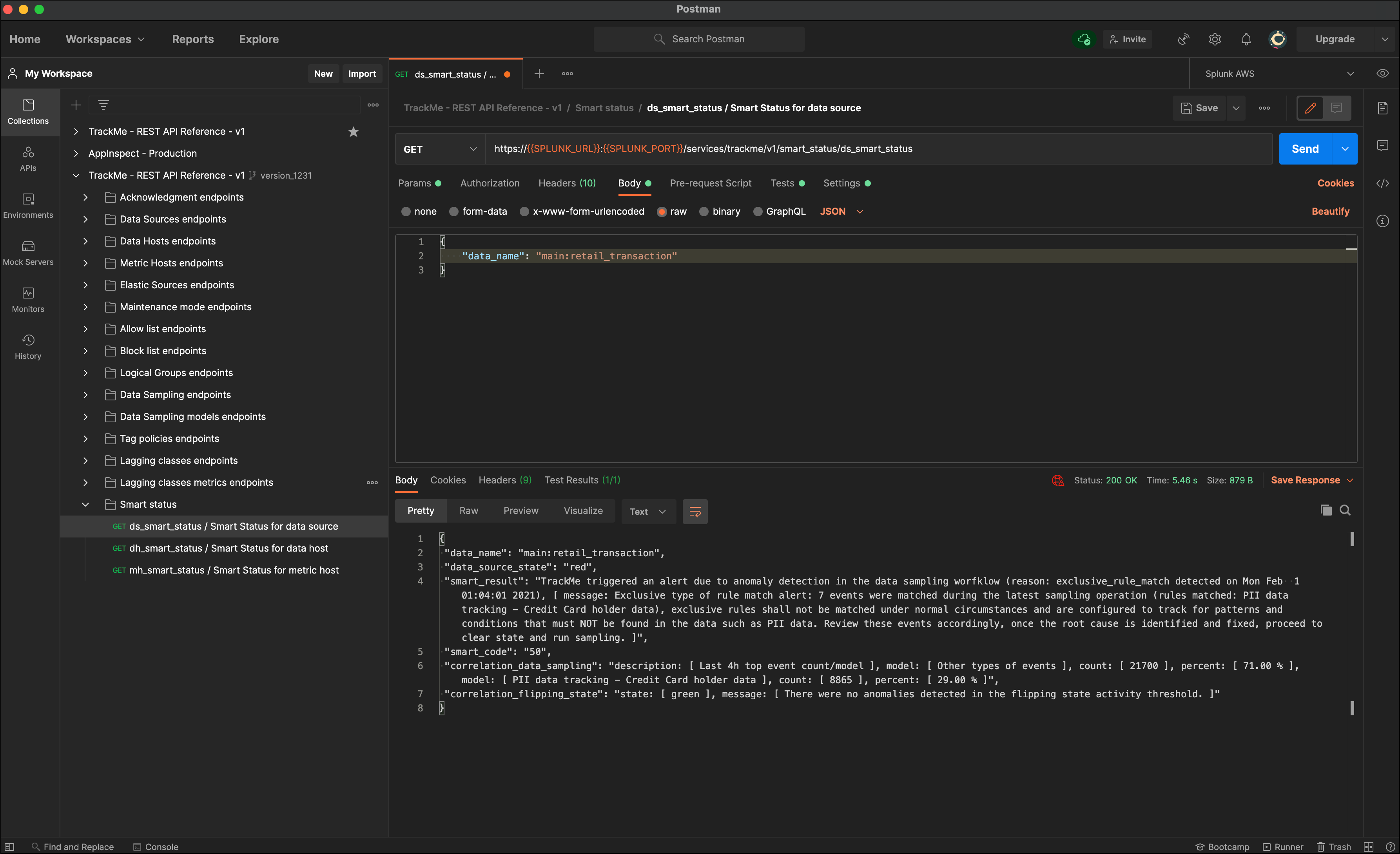

Smart Status from external third party¶

The Smart Status feature is serviced by a REST API endpoint, as such it can be requested via any external system, such as Splunk Phantom or any other automation plateforns:

Smart Status example via Postman:

Alerts tracking¶

Alerts tracking

- TrackMe relies on Splunk alerts to provide automated results based on your preferences and usage

- One template alert is provided per type of entities (data sources / data hosts / metric hosts) which you can decide to enable and start using straight away

- As well, you can create custom alerts via an assistant which templates a TrackMe alert based on your preferences and choices

- Finally, TrackMe provides builtin alert actions that are used to extend the application functionalities

The alert topic is as well discussed at the configuration step: Step 7: enabling out of the box alerts or create your own custom alerts

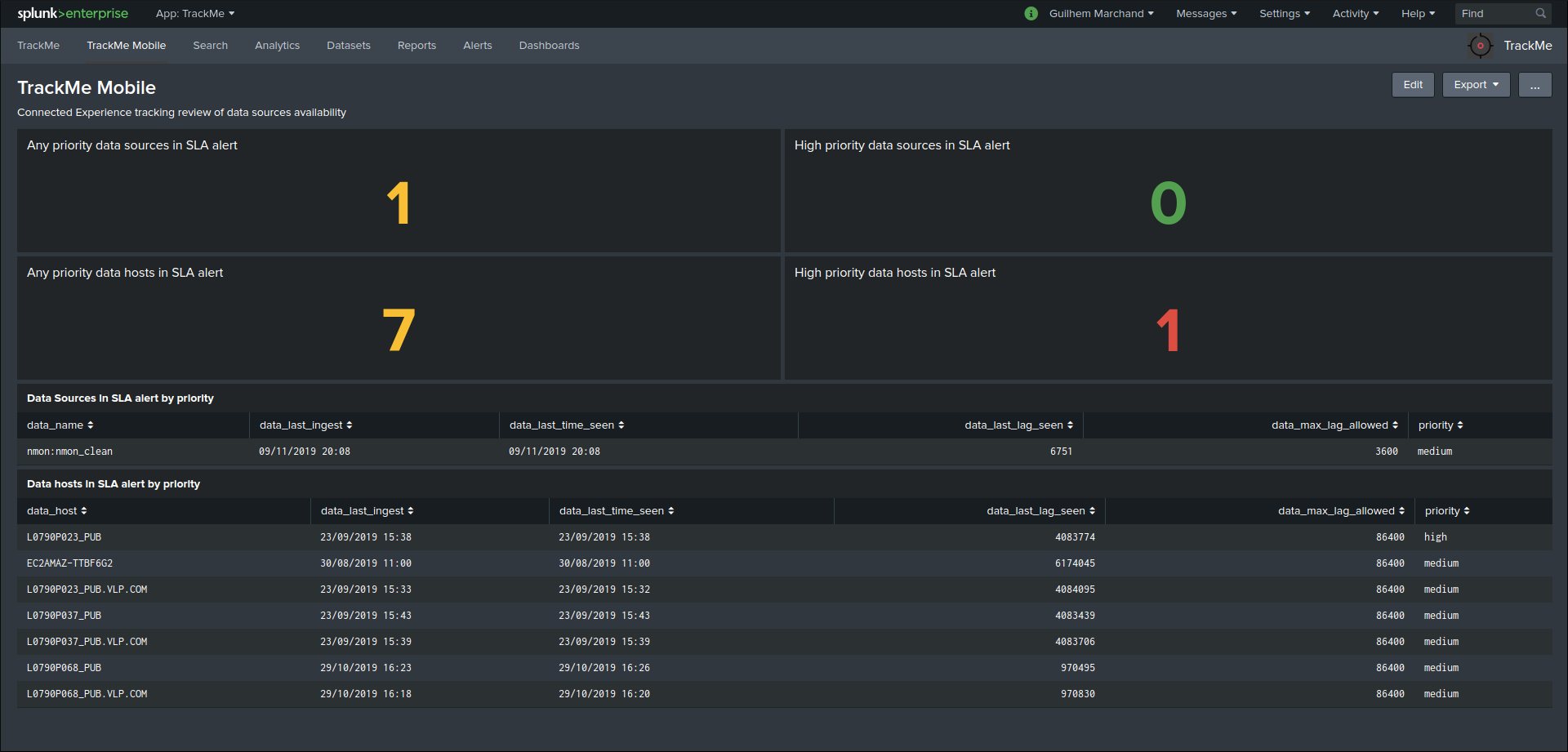

Alerts tracking main screen¶

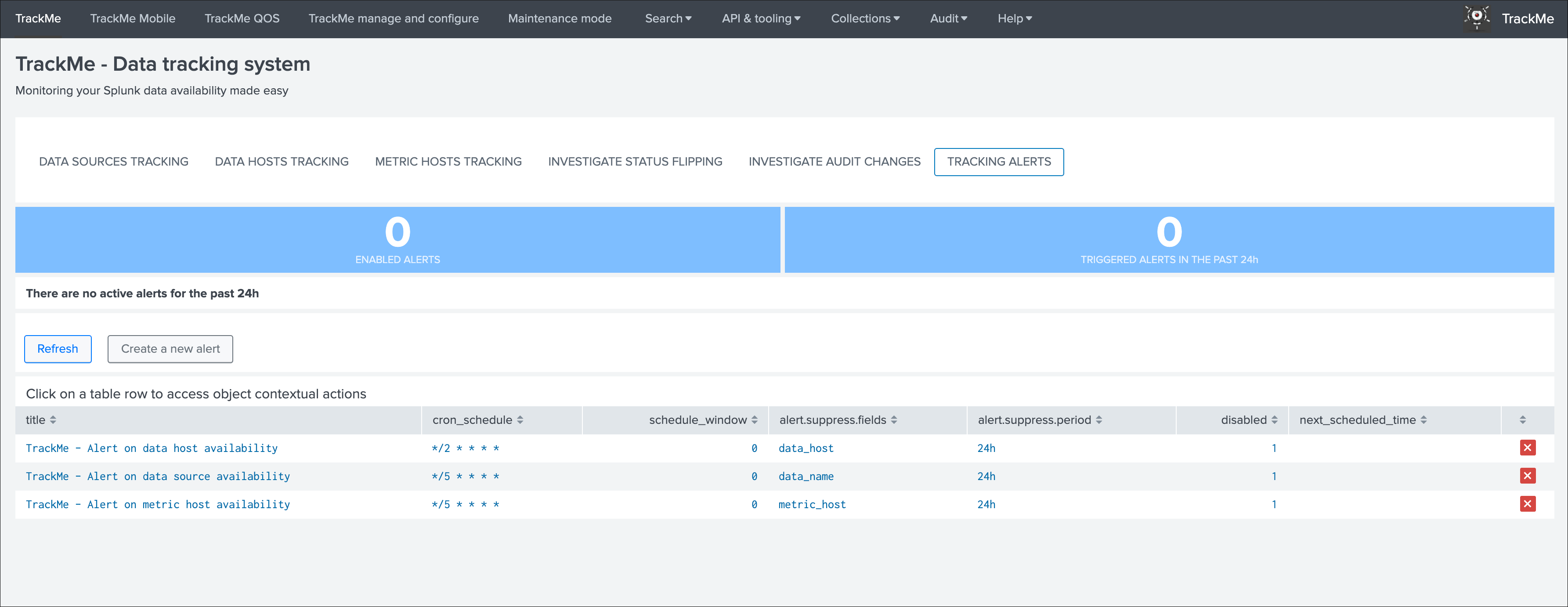

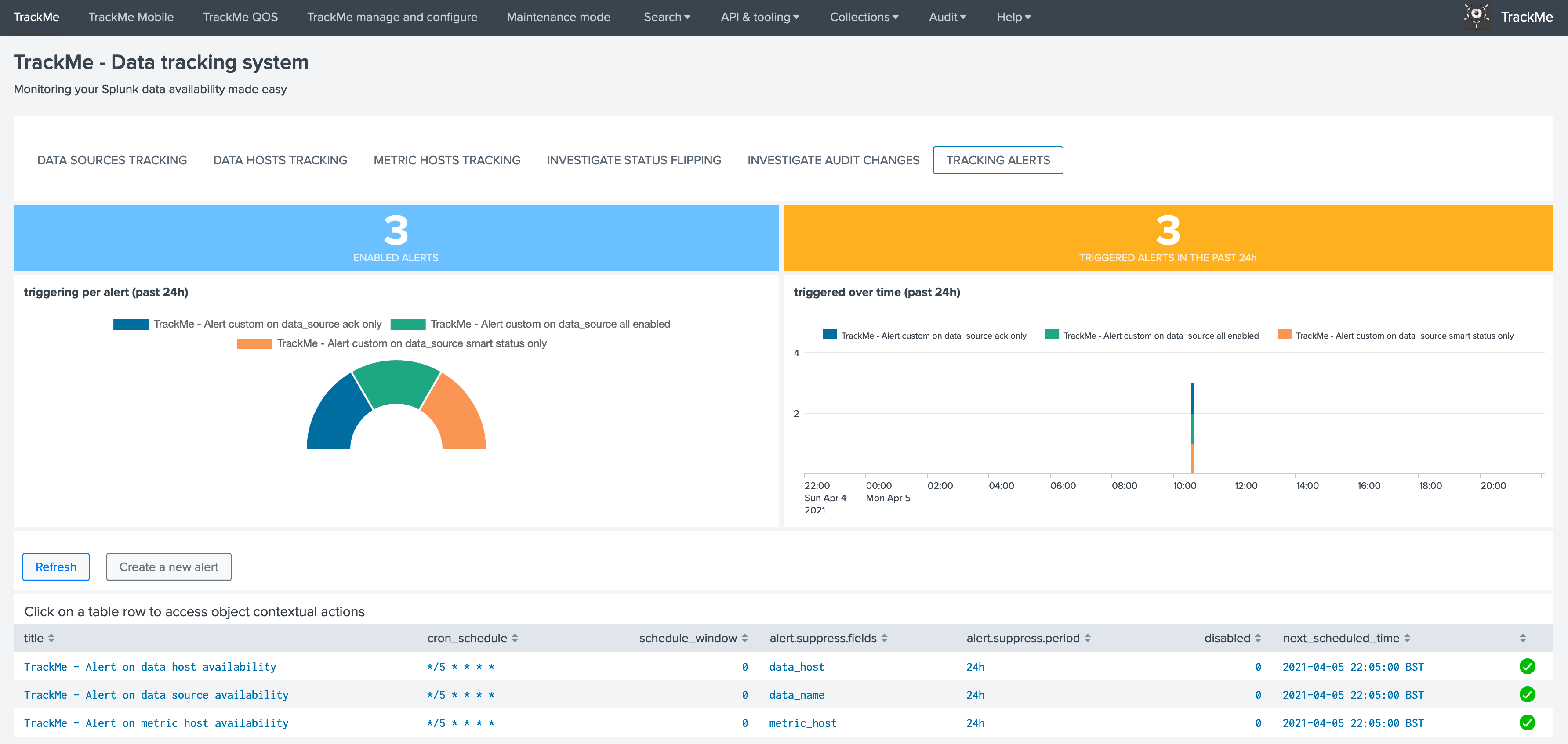

Within the main TrackMe UI, the alerts tracking screen is available as a selectable tab:

Depending on the alerts that were enabled, and the actiity of the environment, the screen shows a 24 hours overview of the alerts activity:

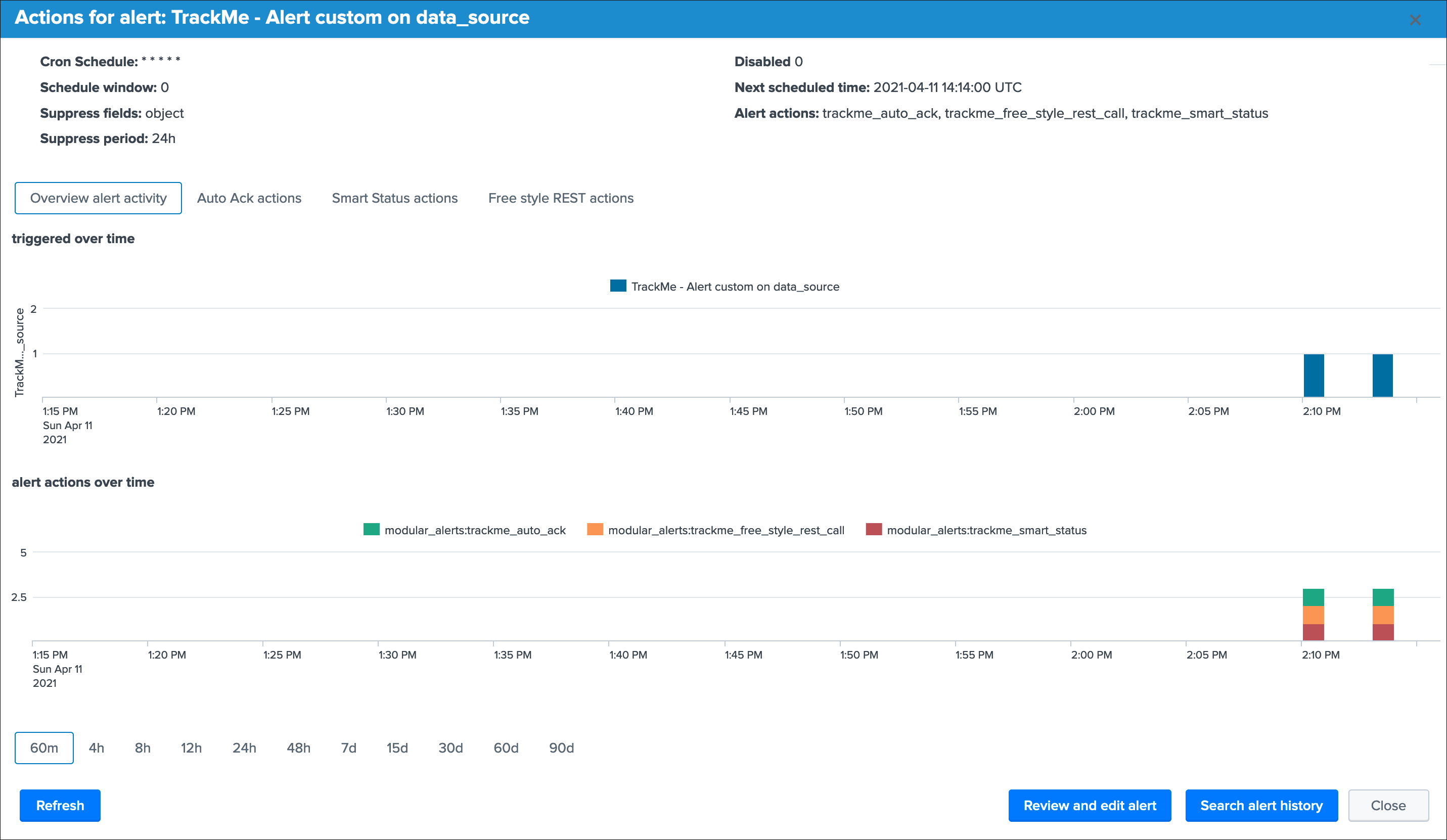

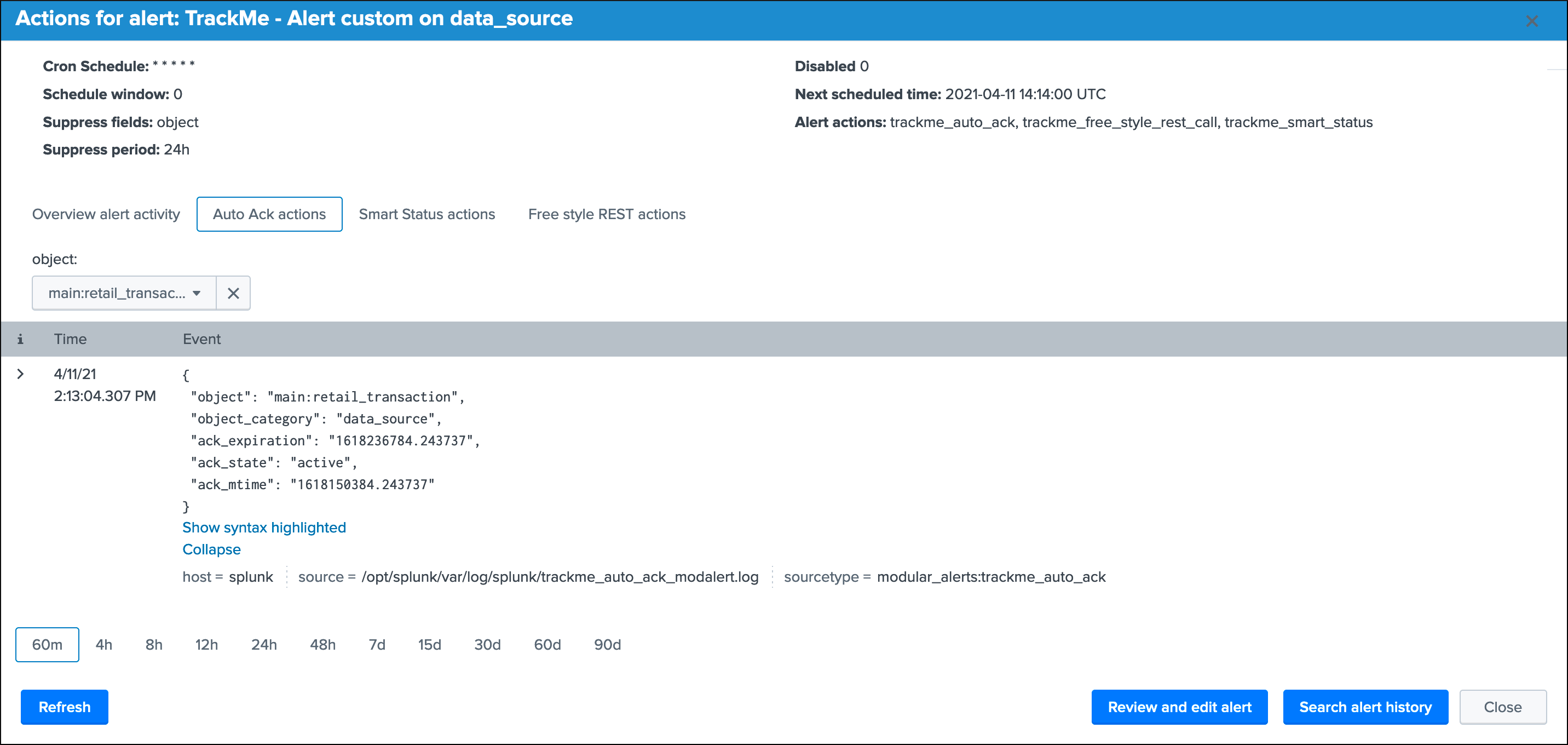

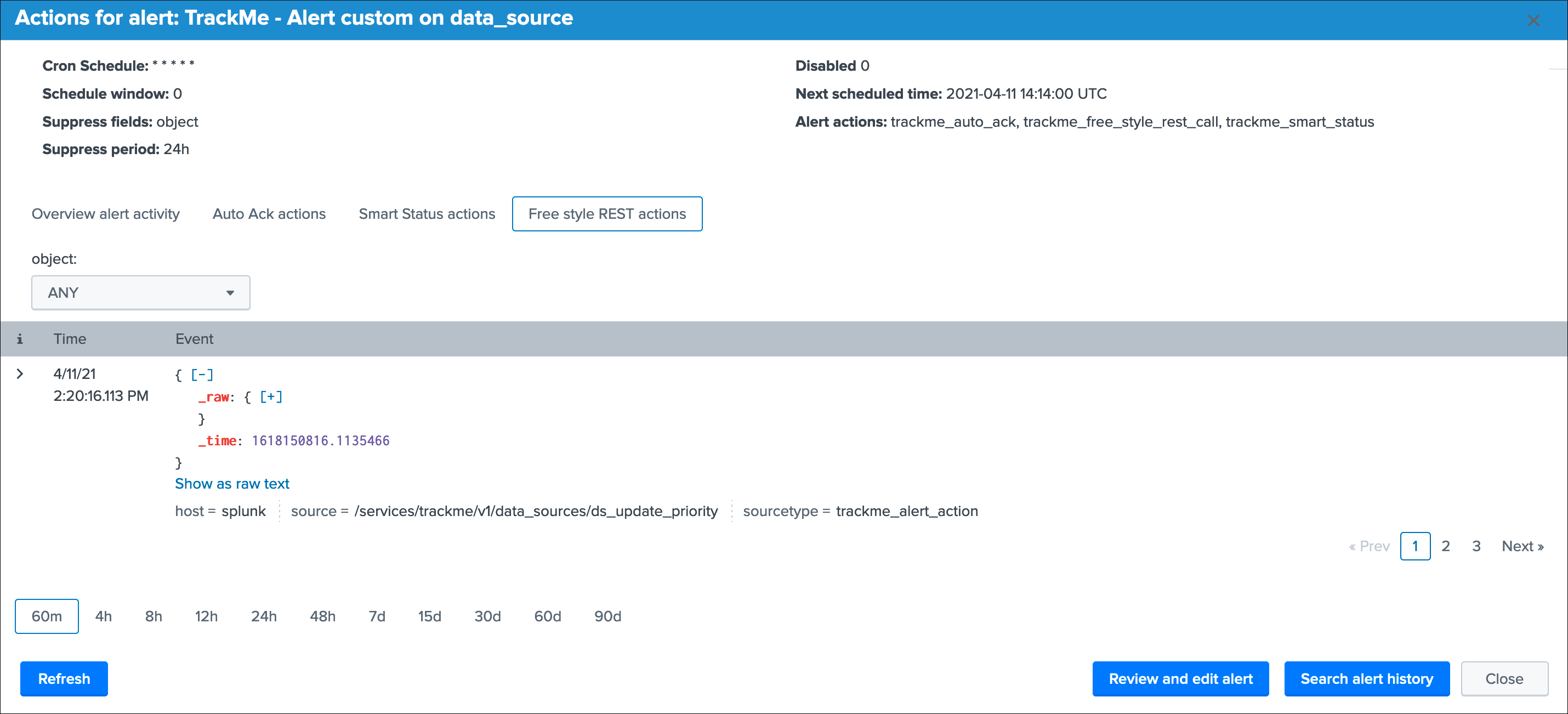

Clicking on any alert opens an overview window for this alert with shortcut to the Splunk alert editor and other functions:

Alerts tracking: out of the box alerts¶

Alerts are provided out of the box that cover the basic alerting for all TrackMe entities:

TrackMe - Alert on data source availabilityTrackMe - Alert on data host availabilityTrackMe - Alert on metric host availability

Hint

Out of the box alerts

- Out of the box alerts are disabled by default, you need to enable alerts to start using them

- Alerts will trigger by default on

high priorityentities only, this is controlled via the macro definitiontrackme_alerts_priority - Edit the alert to perform your third party integration, for example

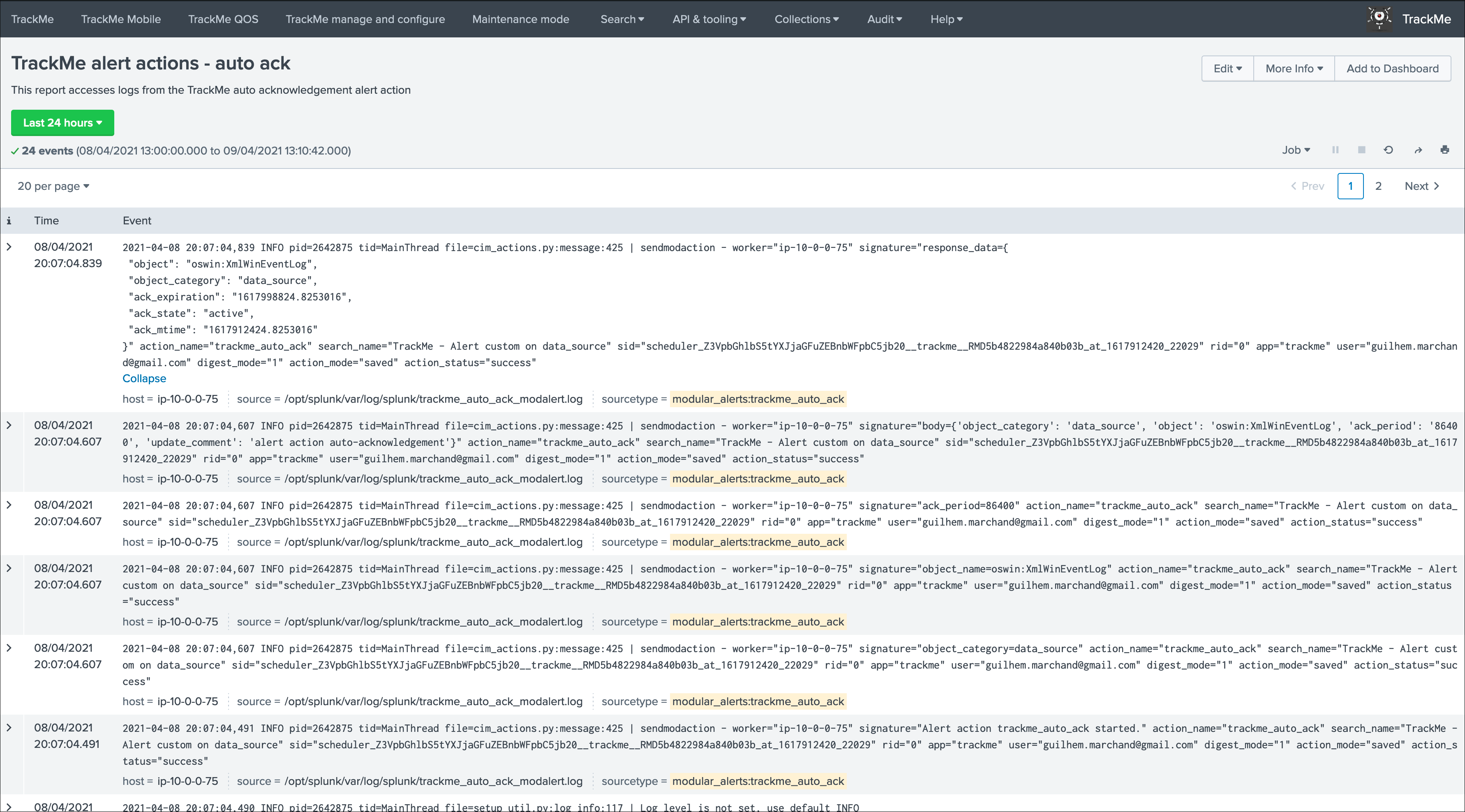

sending emailsor creatingJIRA issuesbased on Splunk alert actions capabilities - Out of the box alert enable by default two TrackMe alert actions,

automatic acknowledgementand theSmart Statusalert actions - The results of the

Smart Statusalert action are automatically indexed in the TrackMe summary index within the sourcetypetrackme_smart_statusand can be used for investigation purposes

Alerts tracking: custom alerts¶

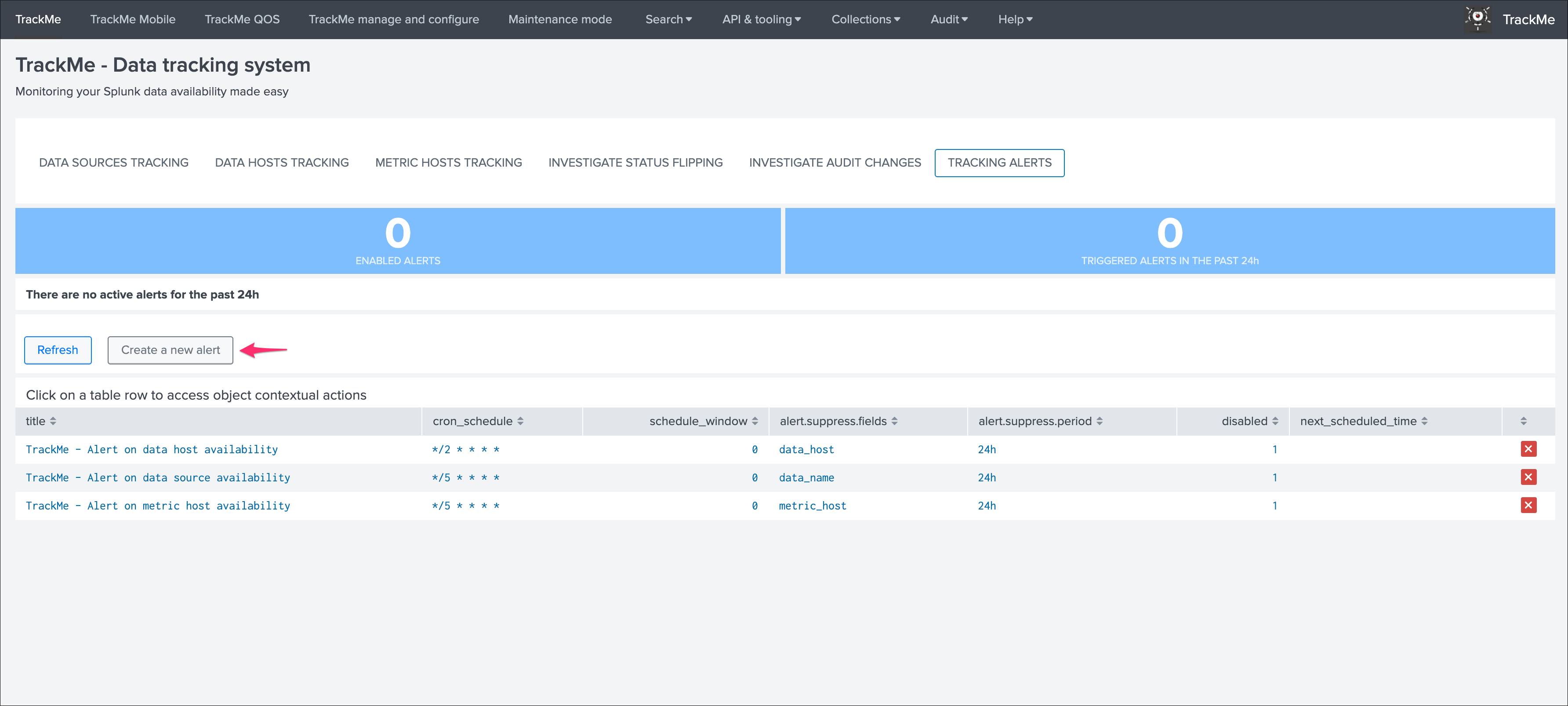

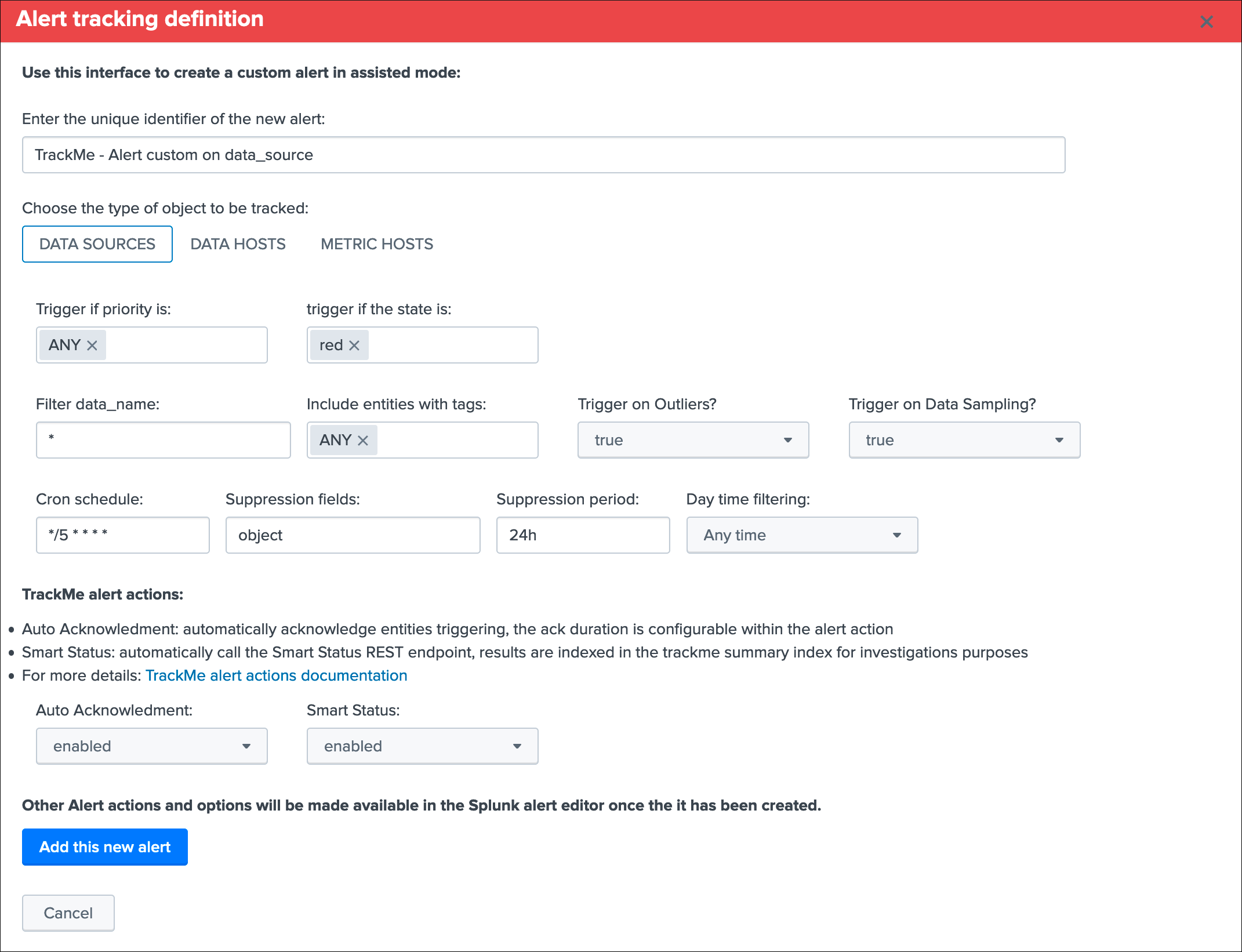

You can use this interface to a create one or more custom alerts:

This opens the assistant where you can choose between different builtin options depending on the type of entities to be monitoring:

Once you have created a new alert, it will be immediately visible in the tracking alerts UI, and you can use the Splunk built alert editor to modify the alert to up to your needs such as enabling third party actions, emails actions and so forth.

Hint

Custom alert features

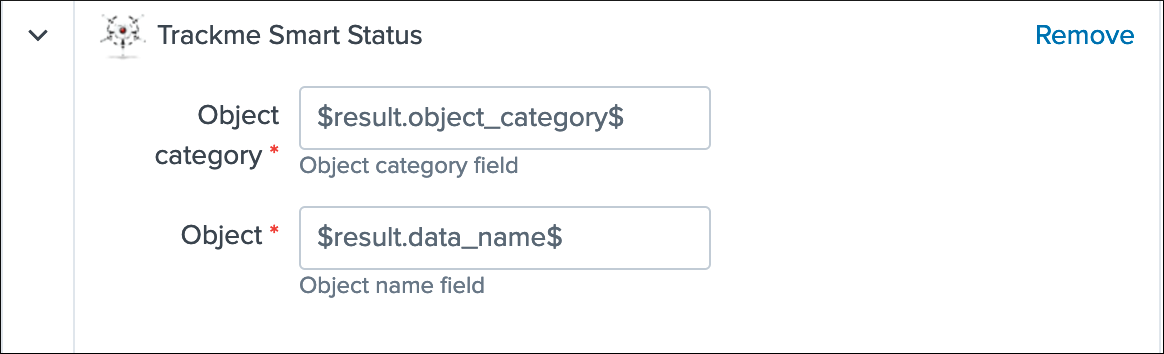

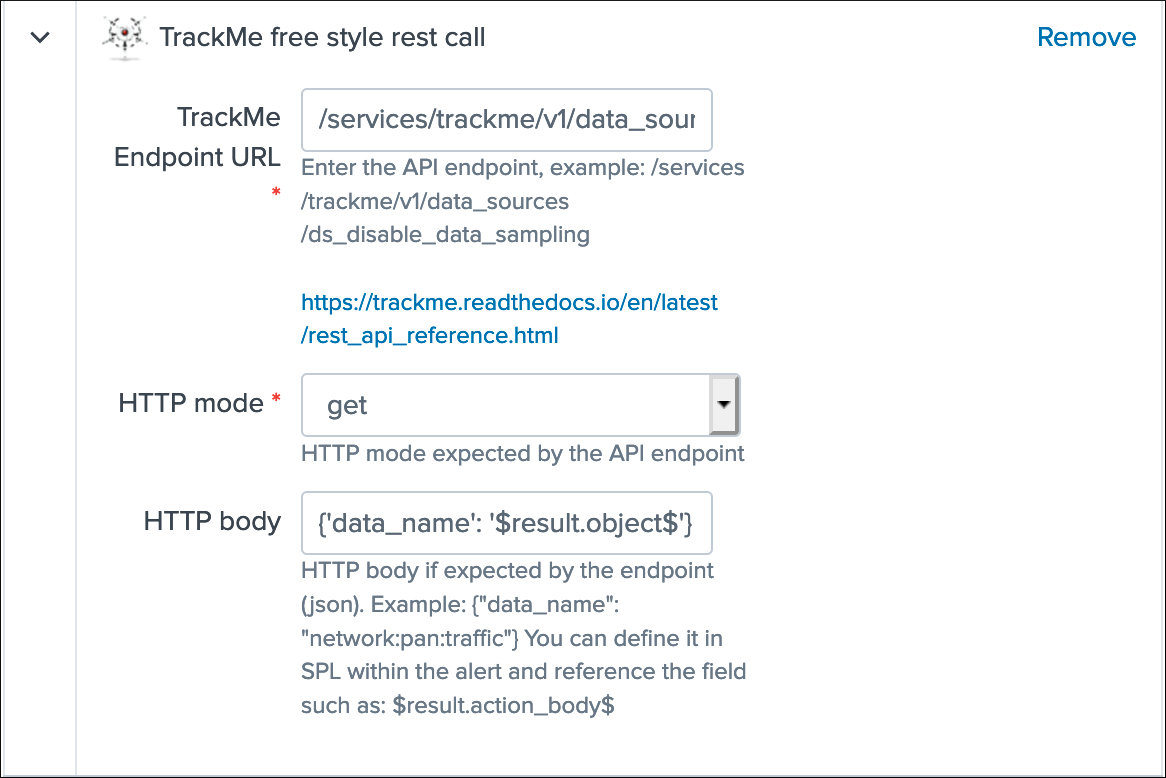

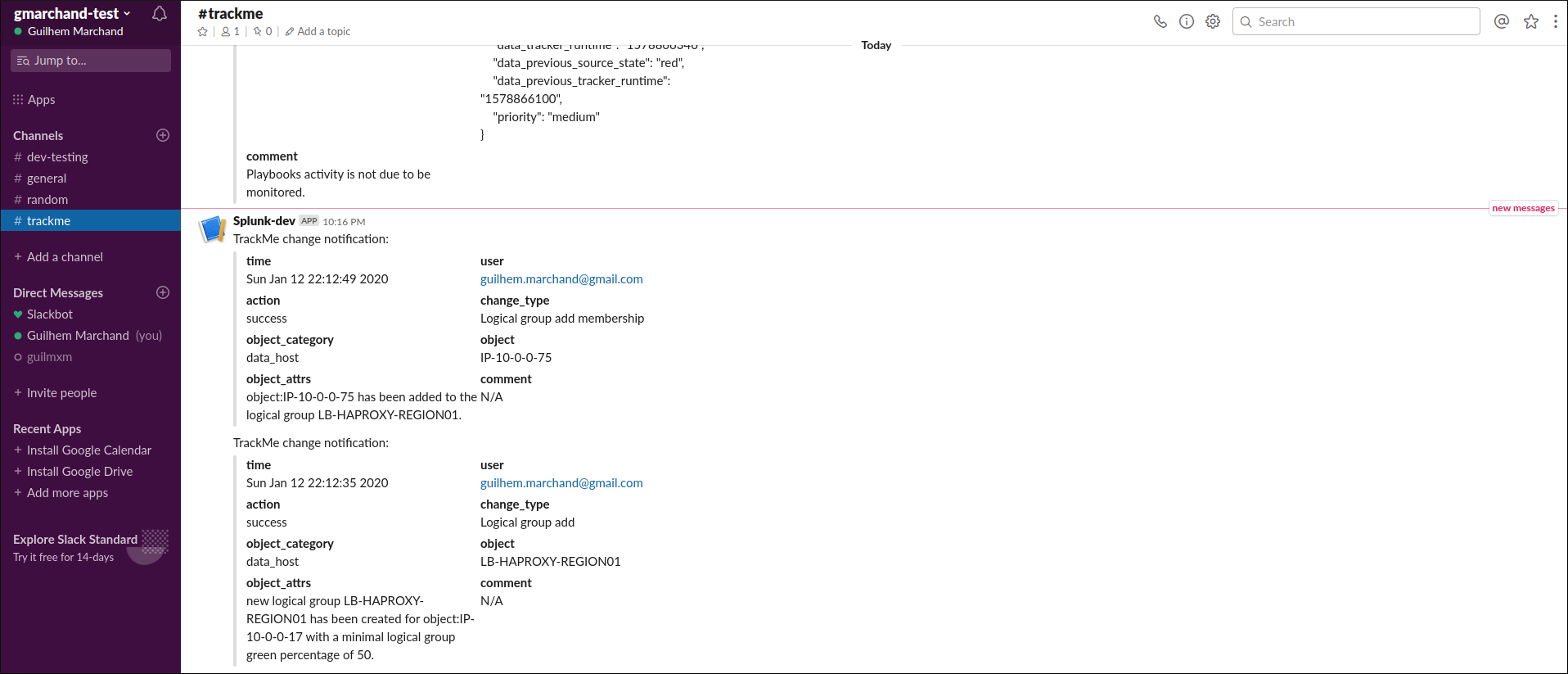

- Creating custom alerts provide several layers of flexibility depending on your choices and preferences